Key Takeaways

Agentic Memory: The Persistence Layer Beyond RAG

Stop rebuilding context every session. Start writing it once and remembering it forever.

Silent Semantic Errors Dominate Multi-Agent Failures: Eliminate the silent semantic drift behind 75.17% of multi-agent failures by anchoring agents to persistent state.

A-MEM Doubles Multi-Hop Reasoning Performance: Research from Xu et al. at NeurIPS 2025 shows the Zettelkasten-inspired A-MEM architecture delivers performance roughly two times higher than MemGPT, MemoryBank, and ReadAgent on multi-hop reasoning across the LoCoMo and DialSim benchmarks.

Stateful QA Is the Verification Layer: TestQuality leverages persistent memory principles to deliver test suites that learn application logic over time — eliminating the token drain of context reconstruction across QA cycles.

If RAG is an agent reading a textbook, agentic memory is the agent writing its own persistent lab notes. The difference in multi-agent reliability is exponential.

Agentic memory is the dynamic persistence layer that allows AI agents to store, retrieve, and update contextual information across sessions. Unlike RAG, which is read-only, agentic memory enables stateful agents to learn from past executions, adapt to evolving codebases, and maintain reasoning continuity. It is the architectural answer to context rot — the gradual degradation of context awareness that destroys reliability in long-running autonomous workflows. In the 2024 Stack Overflow Developer Survey, 63.3% of developers identified 'AI tools lack context of codebase' as a top challenge to using AI code assistants, and agentic memory is the engineering pattern that closes that gap by transforming stateless text generators into stateful digital collaborators.

What Is Agentic Memory in AI Development?

Agentic memory is the read-write-update persistence layer that allows AI agents to store experiences, retrieve historical context, and continuously refine their understanding across multiple independent sessions. It transforms stateless LLMs into stateful agents capable of long-running, multi-step engineering tasks.

At its core, agentic memory mimics how human professionals work. A senior engineer does not rebuild their understanding of a codebase every morning — they recall past decisions, remember which dependencies caused issues last sprint, and apply that learned context to new problems. Vanilla LLMs, by contrast, are amnesiacs. Each prompt is a blank slate. Agentic memory closes that gap by combining the LLM's short-term context window with external long-term storage like vector databases, knowledge graphs, and episodic logs.

Agentic memory is the persistence layer that makes the Agentic SDLC reliable across sessions. For the full framework, see our guide to the Agentic SDLC. While context engineering defines the rules for how agents receive and process information, agentic memory ensures those rules persist across sessions. Context engineering sets the playbook; agentic memory ensures the team remembers it.

Why Is RAG Alone Not Enough for Stateful AI Agents?

RAG is a read-only retrieval pattern that injects static external knowledge into prompts but does not learn from agent execution. Agentic memory is read-write-update — agents actively record their decisions, errors, and outcomes, so subsequent sessions inherit accumulated experience rather than restarting from zero.
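
The read-only versus read-write-update distinction can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the class names `ReadOnlyKnowledgeBase` and `AgenticMemory`, the `payments-api` key, and the recorded observations are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ReadOnlyKnowledgeBase:
    """RAG-style store: documents are fixed when the store is built."""
    documents: Dict[str, str]

    def retrieve(self, key: str) -> Optional[str]:
        return self.documents.get(key)


@dataclass
class AgenticMemory:
    """Read-write-update store: the agent records what happened in a run."""
    notes: Dict[str, List[str]] = field(default_factory=dict)

    def write(self, key: str, observation: str) -> None:
        self.notes.setdefault(key, []).append(observation)

    def update(self, key: str, old: str, new: str) -> bool:
        """Replace a stale observation; returns False if it was not found."""
        entries = self.notes.get(key, [])
        if old in entries:
            entries[entries.index(old)] = new
            return True
        return False

    def retrieve(self, key: str) -> List[str]:
        return list(self.notes.get(key, []))


# Session 1: the agent records a quirk it hit during execution.
memory = AgenticMemory()
memory.write("payments-api", "auth header must be sent on every request")

# Session 2: a later run corrects the note and inherits the experience.
memory.update(
    "payments-api",
    "auth header must be sent on every request",
    "auth header must be sent on every request, including retries",
)
lessons = memory.retrieve("payments-api")
```

The knowledge base has no `write` or `update` method at all, which is the point: nothing the agent learns during a run can flow back into it.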

Retrieval-Augmented Generation revolutionized AI by giving models access to external documentation, but it carries a fundamental architectural limitation. RAG vs agentic memory is the difference between an agent looking up a recipe in a cookbook and an agent remembering that the last time it made the dish, the oven runs hot and the recipe needed an extra five minutes. RAG retrieves; agentic memory learns.

The cost of stateless multi-agent collaboration is brutal. Three agents sequentially re-reading a document compounds token usage from 10,000 to 29,000 tokens — context reconstruction overhead is a massive drain on both budget and latency. Well-architected memory systems reduce token consumption and latency by using pre-computed embeddings to fetch only top-K relevant memory nodes, bypassing full context reconstruction entirely.
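
As a rough sketch of top-K retrieval over pre-computed embeddings, the example below ranks stored memory nodes by cosine similarity and returns only the best matches. The three-dimensional vectors and note names are invented for illustration; a real system would use embeddings produced by a model and stored in a vector database.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query_vec: Sequence[float],
    memory: Dict[str, Sequence[float]],
    k: int = 2,
) -> List[Tuple[str, float]]:
    """Score every stored node against the query and keep the k best."""
    scored = [(note_id, cosine(query_vec, vec)) for note_id, vec in memory.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical pre-computed embeddings for three memory nodes.
memory = {
    "auth-header-quirk": [0.9, 0.1, 0.0],
    "rate-limit-window": [0.7, 0.3, 0.1],
    "css-refactor": [0.0, 0.1, 0.9],
}
query = [1.0, 0.2, 0.0]

best = [note_id for note_id, _ in top_k(query, memory, k=2)]
# best == ['auth-header-quirk', 'rate-limit-window']
```

Only the selected nodes would be injected into the prompt, so the agent never has to re-read the full source document.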

Platforms like Emergent illustrate this in production. They use continuous memory learning, where agents that struggled with an API integration one week succeed instantly the following week by relying on recorded execution memory. They no longer re-read the documentation; they remember the specific quirks of the implementation, the auth headers that broke last time, and the rate limit they hit on Tuesday afternoon.

| Dimension | No Memory (Vanilla LLM) | Standard RAG | Agentic Memory |
|---|---|---|---|
| Memory Type | Stateless / ephemeral | Read-only / external | Read-Write-Update |
| Persistence Across Sessions | None: full reset every prompt | Static knowledge base only | Continuous, evolving across runs |
| Context Retrieval Method | Prompt injection only | Vector similarity search | Top-K semantic + episodic routing |
| Token Efficiency | Poor: compounds across agents | Moderate: chunking helps | High: memory compaction |
| Primary Failure Mode | Context window saturation | Irrelevant chunk retrieval | Runaway evolution risk |
| Best Use Case | Zero-shot generation | Documentation Q&A | Stateful QA + Agentic SDLC |

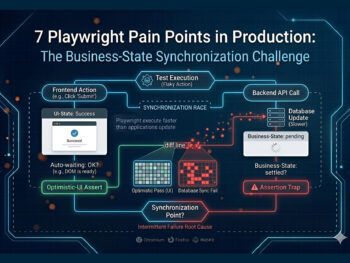

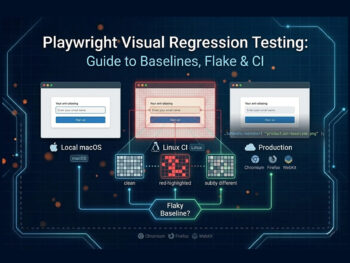

How Does Context Rot Destroy Multi-Agent Reliability?

Context rot is the gradual degradation of an AI agent's contextual awareness over long sessions. As context windows fill, attention mechanisms dilute early instructions, agents hallucinate file paths, forget constraints, and inject silent semantic errors that quietly corrupt multi-step autonomous workflows.

The pattern is visible to any team that has run an agent past the one-hour mark. The first ten minutes are brilliant — the agent follows the spec, respects the architecture, names variables consistently. By the second hour, things drift. Function signatures change subtly. Imports point to files that do not exist. The business logic still compiles, but it no longer matches the original requirements. This is context window degradation, and it is mathematical, not motivational.

The data is grim. A 2025 NeurIPS study by Cemri et al. analyzing 1,642 annotated multi-agent execution traces found that 75.17% of multi-agent failures manifest as silent gray errors: plausible-looking but unusable outputs that do not trigger system exceptions and are only caught on manual inspection. The researchers identified 14 distinct failure modes spanning system design, inter-agent misalignment, and task verification gaps. Context loss alone accounts for 2.80%, but it compounds with reasoning-action mismatch (13.2%) and step repetition (15.7%) to produce the broader silent-failure pattern. The code passes its tests because the tests were written against the same drifted assumptions. The bug only surfaces in production. This explains why 66.2% of developers in the 2024 Stack Overflow Developer Survey cited 'don't trust the output or answers' as a top challenge to AI adoption, and why that distrust deepened in the 2025 Stack Overflow survey, in which 66% of developers identified 'AI solutions that are almost right, but not quite' as their single biggest frustration with AI tools.

The trust gap is widening, not closing — adoption rose from 76% to 84% between the two surveys, while trust in AI accuracy fell sharply over the same window. The data tells a clear story: developers are using AI tools more and trusting them less, and the missing layer is persistent memory plus verification.

To solve this, the QA industry is shifting toward agentic QA ecosystems, where continuous verification catches semantic drift before it merges into production. Persistent memory is the upstream fix; agentic QA is the downstream safety net.

How Is Agentic Memory Architected From Short-Term to Long-Term Persistence?

Agentic memory architecture mirrors human cognition: a fast short-term working memory inside the LLM context window, a long-term episodic store of past experiences, and a semantic knowledge graph of facts and rules. Memory compaction and embedding-based retrieval bridge these layers efficiently.

Building persistent memory AI requires structuring the system the way the human brain structures recall. Short-term vs long-term agent memory mirrors working memory and consolidated memory in cognitive science. Short-term memory is the immediate context window — the scratchpad where the agent reasons about the current task. Long-term memory is external storage, typically a hybrid of vector databases like Pinecone or Weaviate and graph databases like Neo4j, where past executions, codebase rules, and architectural decisions reside permanently.
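
The split between short-term and long-term memory can be sketched with plain in-process structures. This is an assumption-laden toy: a bounded `deque` stands in for the context window, and a list and dict stand in for the vector and graph databases named above. The class name `LayeredMemory` and its methods are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, List


class LayeredMemory:
    """Toy three-layer memory: working window, episodic log, semantic facts."""

    def __init__(self, window_size: int = 3) -> None:
        # Short-term: bounded scratchpad, like a context window.
        self.working: Deque[str] = deque(maxlen=window_size)
        # Long-term episodic: every event, kept across the whole run.
        self.episodic: List[str] = []
        # Long-term semantic: durable facts and rules keyed by name.
        self.semantic: Dict[str, str] = {}

    def observe(self, event: str) -> None:
        self.working.append(event)   # oldest entry falls out when full
        self.episodic.append(event)  # durable copy survives eviction

    def learn_fact(self, key: str, value: str) -> None:
        self.semantic[key] = value


mem = LayeredMemory(window_size=2)
for step in ["read spec", "edit module", "run tests"]:
    mem.observe(step)
mem.learn_fact("orm", "all queries go through the repository layer")
```

After the third event the working window holds only the last two steps, while the episodic log still holds all three, which is the behavior the long-term layer exists to provide.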

To bridge these two layers, modern systems use a Zettelkasten memory architecture. Originally a note-taking method developed by sociologist Niklas Luhmann, the digital implementation stores memories as atomic, interlinked notes. When an agent encounters a new problem, memory retrieval embeddings search this network for related historical context, surfacing not just the most similar memory but the chain of related insights connected to it.
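
One way to picture retrieval over interlinked notes: find the closest note by embedding similarity, then follow its links for a bounded number of hops. The sketch below is an illustration of the general idea only, not the A-MEM implementation; the `Note` structure, the `recall_chain` function, and the sample notes are hypothetical.

```python
import math
from collections import deque
from dataclasses import dataclass, field
from typing import Dict, List, Sequence, Set


@dataclass
class Note:
    note_id: str
    text: str
    embedding: Sequence[float]
    links: Set[str] = field(default_factory=set)


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall_chain(
    query_vec: Sequence[float], notes: Dict[str, Note], max_hops: int = 2
) -> List[str]:
    """Return the best-matching note, then notes reachable within max_hops links."""
    if not notes:
        return []
    start = max(notes.values(), key=lambda n: cosine(query_vec, n.embedding))
    seen = [start.note_id]
    queue = deque([(start.note_id, 0)])
    while queue:
        current, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop budget
        for linked in sorted(notes[current].links):
            if linked in notes and linked not in seen:
                seen.append(linked)
                queue.append((linked, hops + 1))
    return seen


notes = {
    "a": Note("a", "login fails on token refresh", [1.0, 0.0], {"b"}),
    "b": Note("b", "refresh endpoint changed in v2", [0.0, 1.0], {"c"}),
    "c": Note("c", "v2 migration decision record", [0.0, 1.0], {"d"}),
    "d": Note("d", "unrelated follow-up", [0.5, 0.5]),
}
chain = recall_chain([1.0, 0.1], notes, max_hops=2)
# chain == ['a', 'b', 'c']
```

A flat similarity search would have returned only note `a`; following links is what surfaces the connected decision record.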

To prevent the database from bloating into uselessness, systems employ memory compaction — a background process where smaller, cheaper LLMs summarize older logs into dense, high-value insights. The integration of RLAIF (Reinforcement Learning from AI Feedback) further refines this loop. Agents grade the usefulness of retrieved memories, continuously improving the retrieval algorithm itself. This sophisticated infrastructure forms the backbone of next-generation testing environments, where test agents possess deep, evolving understanding of application state.
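
Memory compaction can be sketched as folding older log entries into a single summary while keeping recent entries verbatim. In the example below the `summarize` callable is a stand-in for the smaller LLM described above; the stub used here only counts entries, and the function name `compact` and its `keep_recent` parameter are assumptions for illustration.

```python
from typing import Callable, List


def compact(
    logs: List[str],
    keep_recent: int,
    summarize: Callable[[List[str]], str],
) -> List[str]:
    """Fold everything except the newest keep_recent entries into one summary."""
    if keep_recent < 0:
        raise ValueError("keep_recent must be non-negative")
    if len(logs) <= keep_recent:
        return list(logs)  # nothing old enough to compact
    split = len(logs) - keep_recent
    old, recent = logs[:split], logs[split:]
    return [summarize(old)] + recent


def stub_summarizer(entries: List[str]) -> str:
    # Placeholder: a real system would call a cheaper model here.
    return f"summary of {len(entries)} entries"


logs = ["tried v1 auth", "v1 auth rejected", "switched to v2", "tests green"]
compacted = compact(logs, keep_recent=2, summarize=stub_summarizer)
# compacted == ['summary of 2 entries', 'switched to v2', 'tests green']
```

Passing the summarizer in as a parameter keeps the compaction policy separate from whichever model produces the summary.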

How Does the A-MEM Architecture Work in Practice?

A-MEM is an advanced agentic memory framework that segments information into hierarchical stores (short-term working memory, long-term episodic logs, and a structured semantic knowledge graph), then dynamically routes queries through pre-computed embeddings. It outperforms older baselines by roughly 2x on multi-hop reasoning.

While early frameworks like MemGPT introduced the concept of an operating-system-style memory hierarchy with explicit paging between fast and slow tiers, newer architectures push the boundary further. Research from Xu et al. at NeurIPS 2025 introduced A-MEM, a Zettelkasten-inspired memory system benchmarked against MemGPT, MemoryBank, and ReadAgent on the LoCoMo and DialSim datasets. On multi-hop reasoning tasks, where agents must retrieve and synthesize interconnected information across multiple past sessions, A-MEM's performance was roughly two times higher than the established baselines. It achieves this by maintaining a richly structured relational graph rather than relying solely on flat vector embeddings, which lets it traverse chains of related memories in a single query.

But more capability brings new risk. The primary danger of advanced read-write memory systems is runaway evolution risk: dynamic memory updating can cause cascading uncontrollable changes that are very difficult to roll back. If an agent incorrectly updates a core memory node — say, marking a deprecated API as the current standard — every subsequent agent reading that memory inherits the error and may compound it further. The graph poisons itself, slowly, in production. This is why explicit verification phases are non-negotiable in any system that gives agents write access to long-term memory.
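
A verification phase in front of memory writes might look like the sketch below: each write passes through a check before it is committed, and every key keeps its version history so a bad update can be rolled back. The `VersionedMemory` class, the `not_deprecated` check, and the version labels are hypothetical and chosen only to mirror the deprecated-API example above.

```python
from typing import Callable, Dict, List, Optional


class VersionedMemory:
    """Memory store that gates writes and keeps per-key history for rollback."""

    def __init__(self) -> None:
        self._history: Dict[str, List[str]] = {}

    def read(self, key: str) -> Optional[str]:
        versions = self._history.get(key)
        return versions[-1] if versions else None

    def write(self, key: str, value: str, verify: Callable[[str, str], bool]) -> bool:
        """Commit the value only if the verification check accepts it."""
        if not verify(key, value):
            return False
        self._history.setdefault(key, []).append(value)
        return True

    def rollback(self, key: str) -> Optional[str]:
        """Drop the latest version, keeping at least one; return the current value."""
        versions = self._history.get(key, [])
        if len(versions) > 1:
            versions.pop()
        return self.read(key)


DEPRECATED_VERSIONS = {"v1"}  # assumed to come from a trusted source


def not_deprecated(key: str, value: str) -> bool:
    return value not in DEPRECATED_VERSIONS


mem = VersionedMemory()
accepted = mem.write("payments-api-version", "v2", not_deprecated)
rejected = mem.write("payments-api-version", "v1", not_deprecated)
current = mem.read("payments-api-version")
# accepted is True, rejected is False, current == 'v2'
```

The rejected write never reaches the store, so later agents reading `payments-api-version` cannot inherit the error.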

How Does Agentic Memory Fit Into the SDLC From Planning to Verified Execution?

Agentic memory weaves through the entire development lifecycle: the planning agent records architectural decisions, the coding agent queries those decisions for compliance, and the testing agent retrieves both the original plan and execution logs to generate targeted verification — closing the loop with persistent state.

The integration of LLM memory into the software development lifecycle changes how teams build and verify software, but the orchestration model is non-negotiable. Humans are always orchestrators. AI does not replace developers; it amplifies their capacity to plan, build, and verify across longer time horizons. The goal is a rigorous framework where context engineering dictates the boundaries and agentic memory handles the persistence. This structured approach is far more dependable than unstructured vibe coding, which relies on hoping the LLM guesses correctly from a massive uncurated prompt.

In a stateful SDLC, an agentic planning tool records architectural decisions into long-term memory at design time. When the coding agent begins work, it queries this memory to ensure compliance with prior decisions. Finally, the testing agent retrieves both the original plan and the execution logs to generate highly targeted test cases. Cemri et al. demonstrated this empirically: adding a high-level task objective verification step to the ChatDev multi-agent framework on the ProgramDev coding benchmark improved task success rates by 15.6%, replacing superficial low-level checks like compilation validation with deterministic outcome verification. This continuous loop of execution and verification is the core of a robust LLM regression testing pipeline.

The mechanism behind that 15.6% lift matters as much as the number itself. Cemri et al. found that most multi-agent verification systems perform only low-level checks — does the code compile, does the function return without throwing — which allows plausible-looking but functionally unusable outputs to pass quality gates unnoticed. The corrective intervention was deliberate: replacing those superficial checks with explicit task-objective verification, where the system asks not 'did this run?' but 'did this achieve the original goal?'. For TestQuality users, this maps directly to the discipline of writing acceptance criteria as verification targets rather than treating tests as syntax validators.
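
The gap between 'did this run?' and 'did this achieve the original goal?' can be shown with a small example. The discount functions and acceptance cases below are invented for illustration and are not taken from the Cemri et al. study.

```python
from typing import Callable, List, Tuple

DiscountFn = Callable[[float, float], float]


def buggy_apply_discount(price: float, percent: float) -> float:
    # Runs without error, but subtracts the percentage as an absolute amount.
    return price - percent


def correct_apply_discount(price: float, percent: float) -> float:
    return price * (1 - percent / 100)


def runs_without_error(fn: DiscountFn) -> bool:
    """Low-level check: the call completes without raising."""
    try:
        fn(100.0, 10.0)
        return True
    except Exception:
        return False


def meets_objective(
    fn: DiscountFn, cases: List[Tuple[float, float, float]], tol: float = 1e-9
) -> bool:
    """Task-objective check: outputs match the acceptance criteria."""
    return all(abs(fn(price, pct) - expected) <= tol for price, pct, expected in cases)


# Acceptance criteria as (price, percent, expected result).
ACCEPTANCE_CASES = [(100.0, 10.0, 90.0), (200.0, 25.0, 150.0)]

low_level_pass = runs_without_error(buggy_apply_discount)
objective_pass = meets_objective(buggy_apply_discount, ACCEPTANCE_CASES)
# low_level_pass is True, objective_pass is False
```

The buggy function even returns the right answer for the first case by coincidence, which is why a single spot check is not a substitute for acceptance criteria that cover the stated goal.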

Try It Now

Stop Rebuilding Context. Start Building Stateful Tests.

TestStory.ai uses agentic memory principles to generate test suites that learn your application's logic over time — no more context rot, no more hallucinated assertions. Paste any user story, architecture diagram, or acceptance criteria and watch the agent produce structured Gherkin scenarios in seconds. No account required to try.

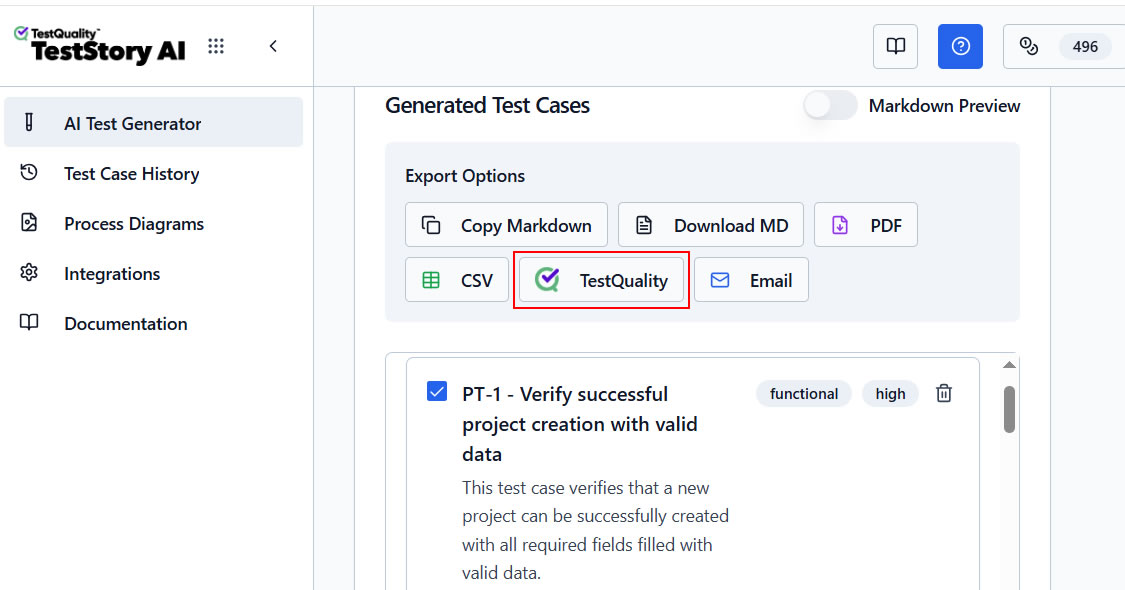

How Does TestQuality Deliver Stateful QA Verification Using Agentic Memory Principles?

TestQuality is working to apply agentic memory principles to QA workflows, maintaining persistent understanding of application architecture, historical defect patterns, and evolving requirements. Through its native AI test case creation layer TestStory.ai, it produces stateful test suites that improve over time rather than regenerating from scratch each sprint.

TestQuality is engineered as the QA verification layer that benefits directly from persistent agentic memory. By providing a structured, stateful environment for test management, TestQuality ensures the outputs of autonomous agents are rigorously verified, tracked, and stored. With native integrations for GitHub and Jira, the platform bridges the gap between AI-generated code and human-orchestrated release management.

The AI layer powering this ecosystem is TestStory.ai, an agentic test case generator included natively with TestQuality subscriptions. TestStory.ai applies memory principles to understand an application's historical defect patterns and evolving requirements. Instead of generating generic, stateless tests, it produces stateful test suites that improve over time, remembering the edge cases that broke last sprint and the integration quirks the team has already mapped.

Users get 500 TestStory.ai credits monthly with their TestQuality subscription, ensuring continuous access to stateful QA generation. For teams looking to experience these capabilities immediately, the Free AI Test Case Builder provides a powerful no-friction entry point to context-aware test generation.

Summary

Mastering Persistent Memory AI for the Agentic SDLC

Six engineering principles that separate stateful agents from stateless toys.

Move Beyond RAG: Transition from read-only static retrieval to dynamic read-write-update memory systems that learn from every execution.

Solve Context Rot: Mitigate the silent semantic drift documented across 75.17% of multi-agent failures (Cemri et al., NeurIPS 2025) by anchoring agents to persistent state and explicit verification.

Slash Token Overhead: Use top-K embeddings and memory compaction to avoid the 29,000-token context reconstruction trap of stateless multi-agent chains.

Adopt A-MEM Patterns: Leverage Zettelkasten-style interlinked memory nodes for the ~2x performance lift over MemGPT, MemoryBank, and ReadAgent on multi-hop reasoning (Xu et al., NeurIPS 2025).

Mitigate Runaway Evolution: Implement high-level task objective verification — Cemri et al. (NeurIPS 2025) measured a 15.6% task success improvement on the ProgramDev benchmark when superficial compilation checks were replaced with deterministic outcome validation.

Verify with Stateful QA: Use TestQuality and TestStory.ai to build test suites that learn application logic continuously rather than regenerating from zero each sprint.

Context engineering sets the rules. Agentic memory ensures the agent never forgets them. Stateful verification is the only path to trusting autonomous code in production.

Start Free Today

Build test suites with memory. Stop rebuilding context every sprint.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, tracking, and team collaboration. Memory-driven QA means edge cases stay caught, regressions stay rare, and your team stops fighting the same fires every release.

✦ Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost.

No credit card required on either platform.