LLM regression testing is the automated process of evaluating large language model updates, prompt changes, and retrieval pipelines against baseline datasets to detect performance degradation. It relies on algorithmic scoring rather than human intuition to measure accuracy, relevance, and safety across iterative model versions.

Pipeline Components at a Glance

Stop guessing. Start benchmarking.

Evaluation Gold Sets: Curated input-output pairs that serve as the absolute ground truth for model benchmarking — the static baseline every regression delta is measured against.

The RAG Triad: Continuous measurement of Faithfulness, Answer Relevance, and Context Groundedness to prevent hallucination from being smuggled through a retrieval pipeline.

LLM-as-a-Judge: A high-parameter secondary model configured to programmatically score target model outputs against strict grading rubrics — AI evaluating AI at a scale no human team can match.

Semantic Similarity Scores: Vector-based mathematical comparisons that measure contextual closeness between a generated response and the expected baseline — regardless of vocabulary.

Continuous Prompt Regression: Automated CI/CD pipeline triggers that execute the full evaluation suite whenever system instructions, model weights, or context window parameters are modified.

The Agentic QA agents covered in Part 1 of this series are only as reliable as the LLM powering them. This pipeline is how you certify that brain before it touches production.

What is Algorithmic Benchmarking — and why do Vibe Checks fail?

Algorithmic benchmarking replaces subjective, manual model interactions with repeatable evaluation frameworks that calculate measurable regression deltas across accuracy, safety, and format compliance. Where a vibe check asks "does this feel right?", a benchmark calculates whether semantic similarity dropped 3.2 points after the latest prompt update, and blocks the merge if it did.

In traditional software quality assurance, inputs map to predictable, static outputs. If an engineer modifies a sorting algorithm, a unit test with an assertion like expect(output).toEqual([1, 2, 3]) provides immediate, binary feedback. Large Language Models break this paradigm. Because generation samples each next token from a probability distribution shaped by parameters such as temperature and top-p, the exact output string can vary from run to run even when the underlying meaning is entirely correct.

Historically, QA teams approached this unpredictability through vibe checks: manually typing a dozen prompts into a staging UI, reading the responses, and subjectively deciding whether the model felt accurate. This methodology is fundamentally unscalable. Its results shift with reviewer fatigue, it suffers from recency bias, and it ignores the vast combinatorial space of edge cases a model encounters in production.

Algorithmic benchmarking replaces this guesswork with mathematical rigor. Automated evaluation pipelines execute thousands of targeted queries against a new model version or prompt update in minutes. If a developer lowers the temperature parameter or adjusts the system prompt to fix a specific formatting issue, the benchmark quantifies whether that adjustment degraded performance across fifty other historical use cases. This is the core principle: unit tests for probabilistic logic.
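To make that delta concrete, here is a minimal Python sketch. It assumes each Gold Set case already carries a numeric score between 0 and 1 from both the baseline run and the candidate run; the `regression_delta` helper, the case IDs, the scores, and the 0.05 tolerance are all hypothetical.

```python
from statistics import mean


def regression_delta(baseline: dict[str, float], candidate: dict[str, float],
                     tolerance: float = 0.05) -> tuple[float, list[str]]:
    """Compare per-case scores from two runs of the same Gold Set.

    Returns the mean score change (candidate minus baseline) and the IDs of
    cases whose score fell by more than `tolerance`.
    """
    shared = sorted(baseline.keys() & candidate.keys())  # only cases scored in both runs
    deltas = {case_id: candidate[case_id] - baseline[case_id] for case_id in shared}
    regressed = [case_id for case_id, d in deltas.items() if d < -tolerance]
    return mean(deltas.values()), regressed


# Hypothetical scores: the new prompt helps TC-01 but breaks TC-02.
baseline = {"TC-01": 0.92, "TC-02": 0.88, "TC-03": 0.95}
candidate = {"TC-01": 0.94, "TC-02": 0.71, "TC-03": 0.95}
avg, regressed = regression_delta(baseline, candidate)
print(f"mean delta: {avg:+.3f}, regressed: {regressed}")  # mean delta: -0.050, regressed: ['TC-02']
```

Reporting the regressed IDs alongside the mean is the point of the design: a small average drop can hide one badly broken case, which is exactly the "fixed one thing, broke another" pattern a vibe check misses.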

How do you build an Evaluation Gold Set?

Building an evaluation Gold Set, a versioned benchmark of verified inputs and optimal outputs, is the foundational step in LLM regression testing. Without a verified baseline dataset, CI/CD pipelines cannot reliably calculate performance deltas, measure response degradation, or detect context drift during continuous deployment cycles.

A Gold Set is a curated repository of test cases that define perfection for your specific AI use case. Unlike raw production logs, a Gold Set is annotated by domain experts to represent the exact behavior the LLM should exhibit when presented with varying degrees of complexity. Building this dataset requires a matrixed approach to data collection. Engineering teams should synthesize ideal responses for standard queries, adversarial edge cases (prompt injections), ambiguous inputs requiring clarification, and out-of-domain requests that the model should gracefully decline.
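One way to keep that matrix honest is to tag each case with its category and check coverage before a run. A minimal sketch, assuming each record carries a hypothetical `category` field and using an arbitrary floor of 10 cases per category:

```python
from collections import Counter

# The four case types named above; the per-category floor of 10 is a hypothetical default.
CATEGORIES = ("standard", "adversarial", "ambiguous", "out_of_domain")


def coverage_gaps(cases: list[dict], minimum: int = 10) -> dict[str, int]:
    """Return every category with fewer than `minimum` cases, mapped to its current count."""
    counts = Counter(case.get("category") for case in cases)
    return {cat: counts[cat] for cat in CATEGORIES if counts[cat] < minimum}


cases = [{"category": "standard"}] * 12 + [{"category": "adversarial"}] * 3
print(coverage_gaps(cases))  # {'adversarial': 3, 'ambiguous': 0, 'out_of_domain': 0}
```

A Gold Set dominated by happy-path queries will report healthy scores while the adversarial and out-of-domain behavior goes untested, so a check like this is cheap insurance.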

To prevent evaluation pipelines from becoming brittle, Gold Sets must be dynamically maintained. As the application scales and encounters novel real-world queries, those interactions should be reviewed, corrected if necessary, and merged back into the Gold Set. This creates a living repository that mirrors production realities rather than a static snapshot that ages out of relevance.
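The merge step can be sketched as a deduplicating append. This assumes records are dictionaries keyed by prompt text under `input_prompt` and that reviewers set a hypothetical `reviewed` flag; normalizing by case and whitespace is a deliberately simple stand-in for fuzzier duplicate detection.

```python
def normalize(prompt: str) -> str:
    # Lowercase and collapse whitespace so trivially different prompts count as duplicates.
    return " ".join(prompt.lower().split())


def merge_reviewed(gold: list[dict], production: list[dict]) -> list[dict]:
    """Append reviewed production cases whose prompt is not already in the Gold Set."""
    seen = {normalize(rec["input_prompt"]) for rec in gold}
    merged = list(gold)  # leave the caller's Gold Set untouched
    for rec in production:
        key = normalize(rec["input_prompt"])
        if rec.get("reviewed") and key not in seen:  # skip unreviewed and duplicate cases
            merged.append(rec)
            seen.add(key)
    return merged


gold = [{"input_prompt": "Reset my password"}]
production = [
    {"input_prompt": "reset  my PASSWORD", "reviewed": True},  # duplicate after normalizing
    {"input_prompt": "Cancel my order", "reviewed": False},    # not yet reviewed
    {"input_prompt": "Change my billing email", "reviewed": True},
]
print([rec["input_prompt"] for rec in merge_reviewed(gold, production)])
# ['Reset my password', 'Change my billing email']
```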

Formatting is equally critical. The Gold Set must be structured in machine-readable formats — typically JSONL — where each record contains the input_prompt, the expected_output, the required_context, and the evaluation_criteria. This structured taxonomy allows the evaluation pipeline to parse thousands of records asynchronously, feeding them into the target model and capturing raw inference outputs for downstream algorithmic scoring.
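A minimal loader for that format might look like the following. The four field names come from the schema above; their types (plain strings for the prompt and expected output, lists of strings for context and criteria) and the `GoldCase` and `load_gold_set` names are assumptions for illustration.

```python
import json
from dataclasses import dataclass

REQUIRED_FIELDS = ("input_prompt", "expected_output", "required_context", "evaluation_criteria")


@dataclass
class GoldCase:
    input_prompt: str
    expected_output: str
    required_context: list[str]     # assumed: snippets or document IDs
    evaluation_criteria: list[str]  # assumed: rubric statements for the judge


def load_gold_set(lines) -> list[GoldCase]:
    """Parse JSONL lines into GoldCase records, failing loudly on missing fields."""
    cases = []
    for lineno, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # tolerate blank lines
        record = json.loads(line)
        missing = [f for f in REQUIRED_FIELDS if f not in record]
        if missing:
            raise ValueError(f"line {lineno}: missing fields {missing}")
        cases.append(GoldCase(**{f: record[f] for f in REQUIRED_FIELDS}))
    return cases


sample = [
    '{"input_prompt": "How long do refunds take?", '
    '"expected_output": "Refunds are processed within 5 business days.", '
    '"required_context": ["refund-policy"], '
    '"evaluation_criteria": ["states the 5-day window"]}'
]
print(load_gold_set(sample)[0].required_context)  # ['refund-policy']
```

Failing on a missing field at load time, rather than mid-run, keeps a malformed record from silently dropping out of the benchmark. With a real file, an open file handle can be passed directly, since iterating it yields one line per record.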

What is the optimal Gold Set size?

A production Gold Set typically contains between 100 and 300 highly diverse, non-overlapping prompt-response pairs. That range is large enough to surface meaningful regressions in aggregate metrics without introducing excessive computational overhead during continuous CI/CD runs. Teams should regularly rotate out stale queries and inject recent production edge cases to keep the baseline representative.
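A rough way to see why that range is workable is the normal-approximation margin of error on a pass rate, `z * sqrt(p * (1 - p) / n)`, with z = 1.96 for 95% confidence. This sketch treats each case as an independent pass/fail trial, which is a simplification.

```python
import math


def pass_rate_margin(pass_rate: float, n: int, z: float = 1.96) -> float:
    """Approximate confidence half-width for a pass rate (normal approximation)."""
    return z * math.sqrt(pass_rate * (1 - pass_rate) / n)


for n in (100, 300):
    print(n, round(pass_rate_margin(0.95, n), 3))
# 100 0.043
# 300 0.025
```

At 100 cases, a measured 95% pass rate is only pinned down to within about 4.3 points either way; at 300 cases, about 2.5. Drops smaller than that margin are hard to tell apart from noise, so teams that need to catch small regressions may want to sit toward the upper end of the range.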

How does the RAG Triad prevent hallucination in retrieval pipelines?

The RAG Triad secures Retrieval-Augmented Generation architectures by continuously scoring output along three dimensions: Faithfulness, Answer Relevance, and Context Groundedness. Together, these scores check that the LLM generates only assertions backed by retrieved documents, that it directly answers the user prompt, and that the retrieval step pulled the correct context from the vector database.

When evaluating a model that relies on external knowledge bases, measuring general text fluency is insufficient — the primary risk factor is hallucination disguised as confident fact. The RAG Triad deconstructs the generation process to isolate exactly where a retrieval pipeline fails.

Faithfulness measures whether the final generated response can be directly traced back to the retrieved context. If the model outputs a statistic or claim that does not exist within the injected payload, that claim scores zero and drags down the faithfulness score of the whole response. This is the primary defense against hallucinations from the model's internal knowledge overriding retrieved facts.
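Faithfulness is commonly computed per claim: split the response into claims, check each against the retrieved context, and report the supported fraction. The sketch below treats each sentence as one claim and approximates "supported" with token overlap; production evaluators typically delegate that check to an LLM or an entailment (NLI) model, so treat the overlap rule and its 0.8 threshold as placeholders.

```python
import re


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def faithfulness(response: str, context: str, threshold: float = 0.8) -> float:
    """Fraction of response sentences ("claims") supported by the retrieved context.

    Support is approximated by token overlap, a crude stand-in for an LLM or
    NLI entailment check. The 0.8 threshold is an arbitrary assumption.
    """
    context_tokens = _tokens(context)
    claims = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    if not claims:
        return 0.0
    supported = 0
    for claim in claims:
        claim_tokens = _tokens(claim)
        overlap = len(claim_tokens & context_tokens) / max(len(claim_tokens), 1)
        if overlap >= threshold:
            supported += 1
    return supported / len(claims)


context = "The invoice is emailed as plain text. Refunds take 5 business days."
response = "The invoice is emailed as plain text. A PDF copy is attached."
print(faithfulness(response, context))  # 0.5: the PDF claim has no support in the context
```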

Answer Relevance evaluates the contextual alignment between the user's initial prompt and the final output. A model might generate a perfectly faithful summary of a retrieved document — but if that document does not answer the user's specific question, the system has still failed. This metric penalizes models that exhibit tangential rambling or fail to address multi-part queries concisely.

Context Groundedness inspects the middle tier of the architecture: the vector retrieval itself. It evaluates whether the chunks retrieved from the database actually contain the information necessary to satisfy the prompt. If groundedness is low, the QA failure is not the fault of the LLM's reasoning — it lies in the chunking strategy, the embedding model, or the similarity search parameters.
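A simple proxy for this retrieval check compares the facts a Gold Set case requires (its `required_context`) against the chunks the vector search actually returned. In this sketch a fact counts as retrieved only if it appears verbatim in a chunk, which is far stricter than the LLM-graded relevance most evaluation tools use; the `context_recall` name is hypothetical.

```python
def context_recall(required_facts: list[str], retrieved_chunks: list[str]) -> float:
    """Share of required facts found (case-insensitive substring) in any retrieved chunk."""
    if not required_facts:
        return 1.0  # nothing was required, so retrieval cannot have missed anything
    chunks = [chunk.lower() for chunk in retrieved_chunks]
    found = sum(any(fact.lower() in chunk for chunk in chunks) for fact in required_facts)
    return found / len(required_facts)


required = ["30 days", "original receipt"]
retrieved = ["Refunds are accepted within 30 days.", "Shipping is free on orders over $50."]
print(context_recall(required, retrieved))  # 0.5: the receipt rule was never retrieved
```

Read alongside faithfulness, this separates the two failure modes: low recall with high faithfulness points at chunking, embeddings, or search parameters, while high recall with low faithfulness points at the generator.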

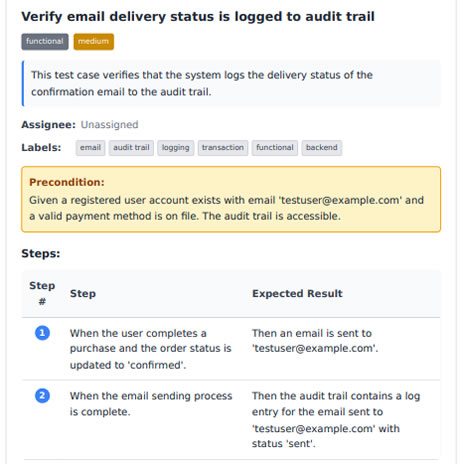

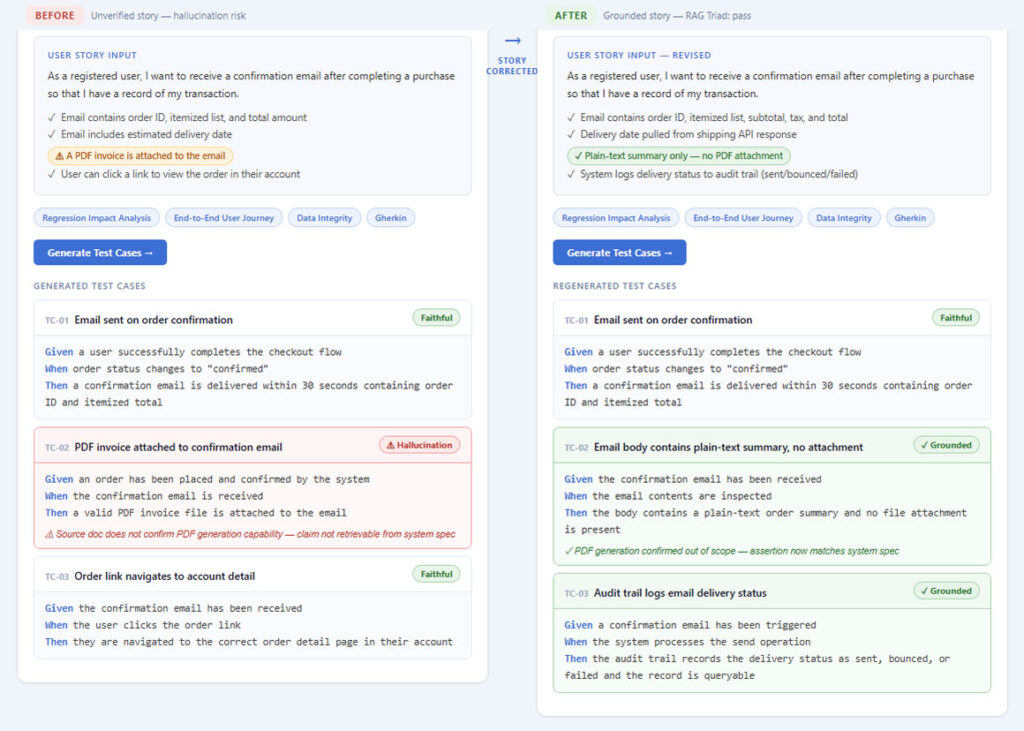

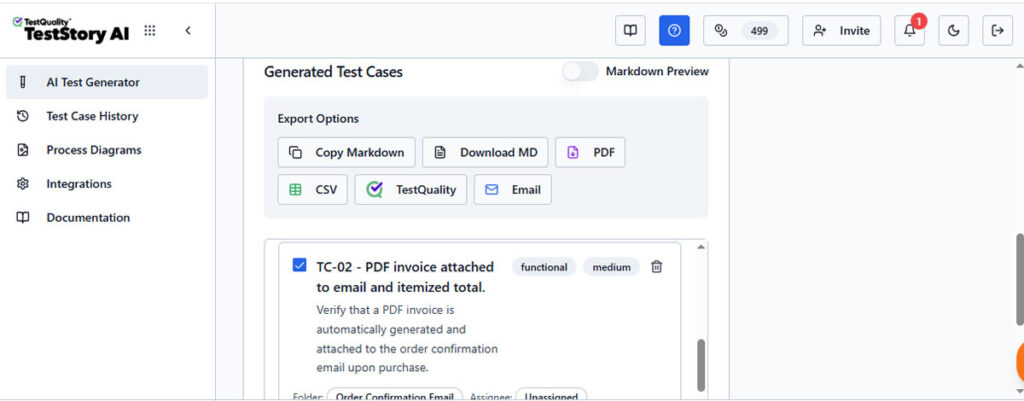

(Img 1) Using requirement mapping to trace generated outputs back to source documents, verifying RAG Triad compliance and pinpointing exactly where context retrieval failed in the pipeline.

RAG Triad result: before the fix, TC-02 scores 0 on Faithfulness because its claim is not present in the retrieved context. After the fix, all three test cases score Faithful + Grounded, and the hallucinated PDF-attachment test is replaced by two requirements-backed cases.

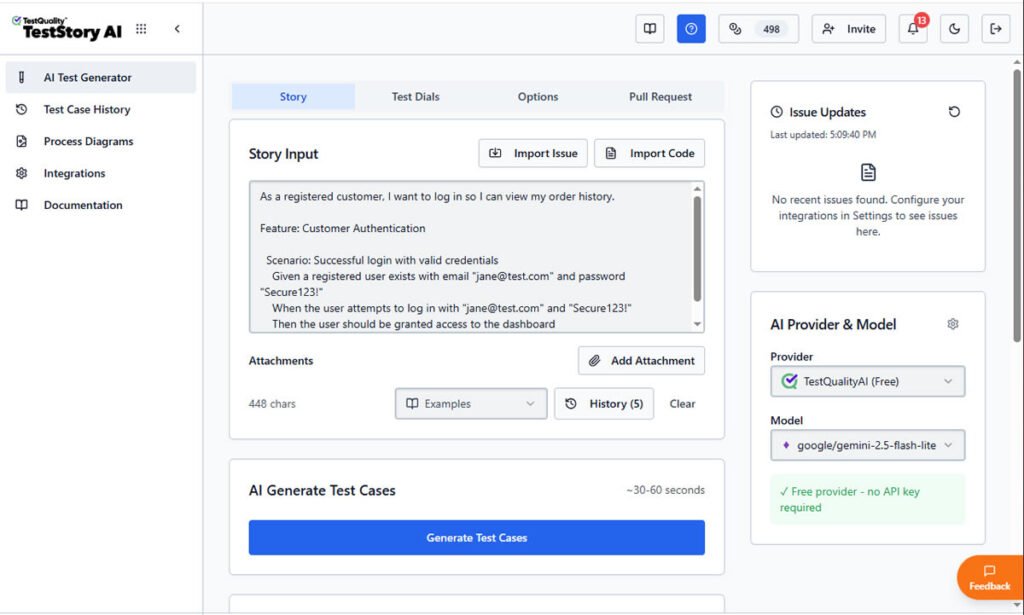

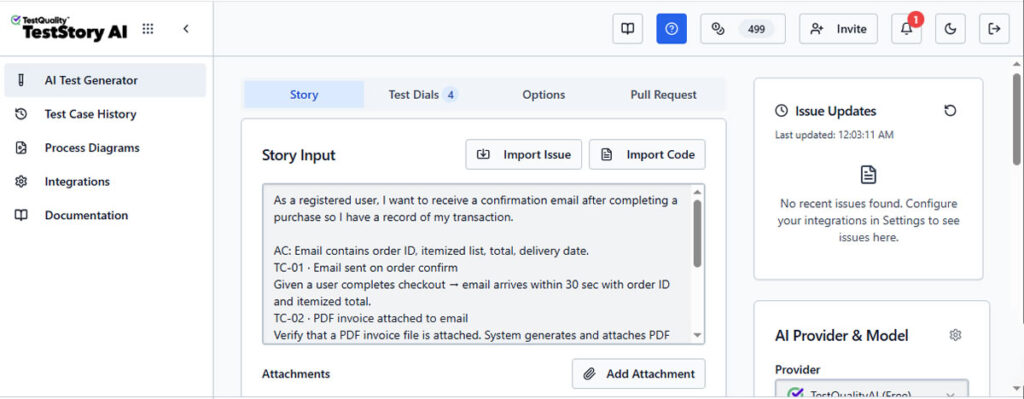

This TestStory.ai-generated test case references the PDF invoice attachment, and it is the hallucination target. The user story says "PDF invoice is attached," but a real payment system may not generate PDFs at the point of purchase, and the test case faithfully mirrors the input claim without questioning whether the system actually produces a PDF.

Below are the regenerated test cases. The PDF-attachment test case is gone, replaced by a plain-text summary test and an audit-log test. This is the "after" state: the retrieval pipeline now generates only assertions backed by what the system actually delivers.

This example shows both states: the red hallucination flag on TC-02 in the "before" view (Img 1, left column) and the green grounded badge on the corrected version in the "after" view (Img 1, right column).

Try It Now

Build your first LLM Evaluation Gold Set — in under 60 seconds.

Paste any user story or acceptance criteria into TestStory.ai and watch the evaluation layer generate structured, baseline-ready test cases instantly — covering happy paths, adversarial edge cases, and the failure scenarios your team would typically miss. Results sync directly into TestQuality for execution tracking and coverage analytics. No account required to start.

No credit card required on either platform.

How does LLM-as-a-Judge automate output scoring at scale?

LLM-as-a-Judge pipelines utilize a superior, high-parameter model to programmatically grade the outputs of your primary application model. By applying strict prompt rubrics and outputting discrete Semantic Similarity Scores, this architecture automates qualitative analysis at a scale impossible for human QA teams to match.

Because exact-match string assertions fail in non-deterministic environments, engineering teams must leverage AI to evaluate AI. In an LLM-as-a-Judge architecture, a smaller, faster model — such as Llama 3 8B or GPT-4o-mini — handles the actual user-facing application logic. The outputs of this model are piped into a massive, highly capable model — such as GPT-4 or Claude 3.5 Sonnet — which acts as the automated QA engineer.

The judge model receives a rigorous grading rubric via its system prompt. It compares the target model's generated response against the expected output in the Gold Set, then outputs standardized Semantic Similarity Scores, using a combination of cross-encoder models and LLM reasoning to determine whether the two strings are equivalent in meaning, regardless of vocabulary differences.
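The vector side of a Semantic Similarity Score is typically cosine similarity between embeddings of the expected and generated responses. Note that this sketch shows the bi-encoder approach (embed each text separately, then compare); a cross-encoder instead reads both texts together and outputs a score directly. The vectors below are toy values standing in for real embedding-model output.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # undefined for zero vectors; treat as no similarity
    return dot / (norm_a * norm_b)


# Toy 3-dimensional vectors; real embedding models produce hundreds of dimensions.
expected = [0.20, 0.90, 0.10]
generated = [0.25, 0.85, 0.05]
print(round(cosine_similarity(expected, generated), 3))  # 0.996
```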

To ensure the judge's reliability, engineers must actively mitigate known algorithmic biases. LLMs exhibit position bias (favoring the first answer they see) and verbosity bias (scoring longer answers higher, even if they are less accurate). Advanced regression pipelines counteract this by using few-shot prompting for the judge, swapping the order of the baseline and generated responses between runs, and forcing the judge to output a chain-of-thought rationale before generating the final numeric score. This creates a transparent, auditable trail for every pass/fail decision in the pipeline.
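A minimal sketch of two of those mitigations, order swapping and rationale-before-score, assuming a hypothetical `judge` callable that sends a prompt to the judge model and returns its text reply. The rubric wording and the "Rationale: ... Score: N" reply format are assumptions; few-shot calibration examples would be appended to the rubric but are omitted here.

```python
import re
from typing import Callable

# Hypothetical rubric; few-shot calibration examples would be appended here.
RUBRIC = (
    "Rate how closely Answer A and Answer B agree in meaning.\n"
    "First write 'Rationale:' followed by your reasoning. Then, on the final line,\n"
    "write 'Score: N', where N is an integer from 1 to 5.\n"
)


def parse_score(reply: str) -> tuple[str, int]:
    """Extract (rationale, score); reject replies that skip the rationale."""
    match = re.search(r"Rationale:(.*)Score:\s*([1-5])\s*$", reply, re.DOTALL)
    if not match:
        raise ValueError("judge reply did not follow the rationale-then-score format")
    return match.group(1).strip(), int(match.group(2))


def judge_both_orders(judge: Callable[[str], str], question: str,
                      baseline: str, generated: str) -> float:
    """Score the pair twice with the answers swapped and average, to dampen position bias."""
    scores = []
    for first, second in ((baseline, generated), (generated, baseline)):
        prompt = f"{RUBRIC}\nQuestion: {question}\nAnswer A: {first}\nAnswer B: {second}\n"
        _rationale, score = parse_score(judge(prompt))  # rationale would be logged for audit
        scores.append(score)
    return sum(scores) / len(scores)


def biased_stub(prompt: str) -> str:
    # Stand-in for a real model call: it rates higher whenever the baseline is shown first.
    if "Answer A: Refunds take 5 business days." in prompt:
        return "Rationale: Same refund window.\nScore: 5"
    return "Rationale: Wording differs slightly.\nScore: 3"


score = judge_both_orders(
    biased_stub,
    question="How long do refunds take?",
    baseline="Refunds take 5 business days.",
    generated="Refunds are processed in five business days.",
)
print(score)  # 4.0
```

The stub exaggerates position bias to show the effect: scored in one order only, the pair would get a 5 or a 3 depending on layout, while the two-order average lands at 4.0. Rejecting replies that skip the rationale keeps the audit trail complete.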

How does LLM Regression Testing connect to Agentic QA?

Robust, benchmarked foundational models are the prerequisite for reliable agentic test automation. Only when the underlying inference engine is proven stable through rigorous algorithmic benchmarking can higher-level AI agents effectively navigate complex UI workflows, generate sound Gherkin scripts, and produce compilation rates above 94%.

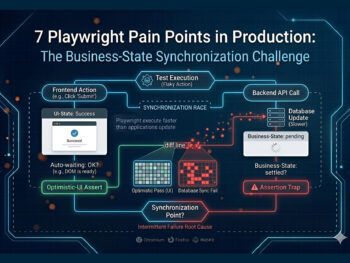

There is a critical architectural distinction between testing the "brain" of an AI and using that brain to test other software. The LLM regression pipelines in this article evaluate the raw reasoning, safety, and retrieval accuracy of the language model itself. That foundational layer is exactly what powers the autonomous testing agents described in Part 1 of this series: Agentic QA Architecture.

When engineering teams deploy agentic QA workflows, where AI agents dynamically read the DOM, generate Playwright scripts, and independently navigate web applications, the underlying LLM must already be certified. If that model suffers from context drift, hallucination, or degraded reasoning, the agent will generate broken test scripts and report false positives. A 2% drop in faithfulness scores at the model layer can translate into flaky tests and unreliable CI/CD pipelines at the application layer.

LLM regression testing therefore acts as the necessary precursor. By running the RAG Triad and LLM-as-a-Judge benchmarks first, you certify the cognitive engine. Once the Gold Set metrics confirm the model's reasoning is sound, you can confidently deploy that model into the agentic framework from Part 1 — knowing it has the baseline reliability required to autonomously evaluate your application's user interface without babysitting.

How does Continuous Prompt Regression catch drift before production?

Continuous prompt regression operates as the final automated gatekeeper, executing the entire evaluation suite whenever system prompts, chunking strategies, or model versions change. This CI/CD integration detects behavioral drift on every change, ensuring that minor prompt tweaks intended to fix one edge case do not silently break ten existing features.

Prompts are effectively source code for language models. Modifying a few adjectives in a system prompt to correct a specific formatting issue can shift the model's behavior well beyond the targeted case, degrading outputs in domains the change was never meant to touch. Continuous prompt regression therefore treats prompts as version-controlled artifacts within a Git repository, with the same rigor applied to application code.

Whenever a developer opens a pull request that modifies a .prompt file, changes an embedding threshold, or updates the underlying model version via API, a webhook triggers the CI/CD pipeline. The pipeline pulls the Gold Set, runs the new configuration through the test matrix, invokes the LLM-as-a-Judge, and compiles the Semantic Similarity Scores. It then generates a regression report comparing the new metrics against the main branch.
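The trigger step reduces to a path filter over the files a pull request changes, for example the output of `git diff --name-only origin/main...HEAD`. The patterns below describe a hypothetical repository layout and are not a convention of any particular CI system.

```python
from fnmatch import fnmatch

# Hypothetical repository layout; adjust the patterns to wherever your prompts,
# retrieval settings, and model pins actually live.
EVAL_TRIGGERS = ("*.prompt", "config/retrieval*.yaml", "config/model*.yaml")


def needs_evaluation(changed_files: list[str]) -> bool:
    """True if any changed path matches a pattern that can alter model behavior."""
    return any(fnmatch(path, pattern) for path in changed_files for pattern in EVAL_TRIGGERS)


print(needs_evaluation(["prompts/support/system.prompt", "README.md"]))  # True
print(needs_evaluation(["README.md", "docs/setup.md"]))                   # False
```

Skipping the suite for unrelated changes keeps the expensive judge calls reserved for diffs that can actually move the metrics.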

If overall accuracy drops below a predefined threshold (commonly a mean semantic similarity below 95%) or the hallucination rate spikes above tolerance, the pipeline automatically blocks the merge request. This eliminates hope-based deployments, replacing them with empirical, data-driven release engineering. No prompt ships without a passing benchmark.
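The gate itself can be a small script that compares run metrics against thresholds. The 0.95 similarity floor comes from the text above; the 2% hallucination tolerance and the `merge_gate` name are hypothetical.

```python
def merge_gate(mean_similarity: float, hallucination_rate: float,
               min_similarity: float = 0.95, max_hallucination: float = 0.02) -> list[str]:
    """Return the failed checks; an empty list means the merge may proceed."""
    failures = []
    if mean_similarity < min_similarity:
        failures.append(f"mean semantic similarity {mean_similarity:.3f} is below {min_similarity}")
    if hallucination_rate > max_hallucination:
        failures.append(f"hallucination rate {hallucination_rate:.3f} exceeds {max_hallucination}")
    return failures


# Hypothetical metrics from an evaluation run on a pull request.
for failure in merge_gate(mean_similarity=0.93, hallucination_rate=0.01):
    print("FAIL:", failure)  # FAIL: mean semantic similarity 0.930 is below 0.95
```

In CI, the script would typically finish with `sys.exit(1 if failures else 0)`. A non-zero exit fails the job, and when that job is configured as a required status check, the merge request stays blocked until the metrics recover.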

| Evaluation Method | Vibe Checks (Manual) | Exact-Match Assertions | Algorithmic Benchmarking (RAG Triad + LLM-as-a-Judge) |
|---|---|---|---|
| Scoring Mechanism | Human intuition, session-by-session | Binary string comparison | Semantic Similarity Scores + cross-encoder models |
| Handles Non-Determinism | No: subjective per reviewer | No: fails on correct paraphrases | Yes: scores meaning, not exact vocabulary |
| Hallucination Detection | Inconsistent; depends on reviewer knowledge | None | Systematic: the RAG Triad Faithfulness score isolates every unsupported claim |
| Scale | 10–20 prompts per session | Hundreds, but breaks on model update | Thousands, run asynchronously in CI/CD |
| Prompt Version Control | None | None | Git-integrated: triggers on every .prompt file change |
| RAG Pipeline Coverage | None: retrieval quality invisible | None | Full: Faithfulness, Relevance, and Groundedness scored separately |
| CI/CD Integration | None: manual, pre-deploy | Partial: breaks on non-deterministic output | Native: blocks merge on regression below threshold |
| Audit Trail | None | Test logs only | Full chain-of-thought rationale per score, every run |
| Bias Mitigation | None: human bias uncontrolled | N/A | Position and verbosity bias counteracted via few-shot calibration + order swapping |

Start Free Today

Move beyond vibe checks. Build your first LLM Evaluation Gold Set.

TestStory.ai generates structured, baseline-ready test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, regression tracking, and team collaboration. The gap between a prompt change and a certified model update has always been a manual bottleneck. In 2026, it does not have to be.

✦ Exclusive offer for TestQuality subscribers

Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost. Simply create a TestStory.ai account with the same email as your TestQuality account to activate automatically. Includes all Pro 500 features.

Not a TestQuality subscriber yet? Both platforms offer free plans — sign up for TestStory.ai to generate your first evaluation cases, then connect your free TestQuality account to sync, execute, and track them without ever leaving your workflow.
