Key Takeaways

The Discipline That Separates Reliable AI Agents From Technical Debt Factories

Programmatic boundaries. Builder-validator chains. Verified outputs.

Context engineering replaces vibe coding by enforcing programmatic boundaries, modular task decomposition, and strict builder-validator chains that eliminate AI technical debt at the source.

Silent semantic errors drive 75.17% of multi-agent failures, requiring continuous verification loops and context window management to ensure AI-generated code actually satisfies business logic, not just the compiler.

TestQuality enforces context engineering at the QA layer, bridging the planner-coder gap by anchoring Jira requirements to GitHub-verified test cases through TestStory.ai.

Vibe coding generates code. Context engineering generates software. The difference is the verification layer.

Context engineering is the systematic design of programmatic boundaries, modular task decomposition, and continuous verification loops that make AI-generated code deterministic rather than probabilistic. It is the execution discipline that turns loose LLM prompting into reliable software engineering and makes the Agentic SDLC dependable, and it is the antidote to vibe coding. Without it, AI agents produce code that compiles but violates business logic, accumulating technical debt faster than any human review cycle can absorb. For the full framework, see our guide to the Agentic SDLC.

What Is Context Engineering?

Context engineering is the systematic design of programmatic boundaries, modular task decomposition, and continuous verification loops within AI workflows. It ensures large language models operate deterministically by controlling the environment, inputs, and state management required to produce reliable, verifiable code at scale.

Unlike prompt engineering, which focuses on phrasing a single instruction to elicit a good output, context engineering is a systems architecture discipline. It treats the LLM as a computational engine embedded inside a broader pipeline, where agentic memory, state preservation, and environmental constraints matter more than the wording of any individual request. Search data reflects the shift: "context engineering" draws 5,400 monthly searches, up 4,809% year over year with low keyword competition, signaling a rapid industry pivot away from conversational prompting toward engineered, verifiable AI workflows.

The core premise is simple: an LLM will only be as reliable as the context it operates within. Give it ambiguous instructions, irrelevant files, and no verification loop, and it will hallucinate. Give it programmatic boundaries, a pruned context window, and an independent validator agent, and it becomes a deterministic component in the Agentic SDLC. Humans remain the orchestrators at every layer, but context engineering is the framework that makes that orchestration scalable.

Why Vibe Coding Is Creating a Global Technical Debt Crisis

Vibe coding, the practice of feeding open-ended prompts into an LLM and accepting whatever comes back, is structurally incompatible with production software. It optimizes for the feeling of speed while quietly compounding technical debt across the codebase. The industry data on this failure mode is stark.

Currently, 95% of enterprise GenAI pilots have failed to deliver measurable ROI, according to MIT research on corporate AI adoption. This is the direct downstream cost of scaling vibe coding without architectural boundaries. Fragile prototypes pass demos but collapse against production edge cases because no one defined what "correct" meant before generation began.

The trust deficit compounds the problem. A full 66.2% of developers cite "don't trust the output" as their primary challenge when working with AI coding assistants, and 45.2% report that debugging AI code takes longer than writing it from scratch. When developers have no visibility into the AI's context window, reverse-engineering its reasoning becomes a bottleneck worse than the original task. Independently, Stack Overflow's 2024 Developer Survey found that 82% of developers use AI to write code but only 27.2% trust it to test that code — a trust gap that makes builder-validator separation non-negotiable.

Seasoned engineers report being 19% slower when acting as "AI babysitters," spending an average of 11 hours per week debugging hallucinations from unconstrained agentic generation. Meanwhile, AI pull requests average 10.8 issues compared to 6.4 in human-written code, and research from Veracode shows that 45% of AI-generated Java code contains OWASP Top 10 vulnerabilities. Without programmatic boundaries enforcing security and architectural standards, vibe coding actively degrades the systems it was meant to accelerate.

The 5 Core Principles of Context Engineering

Context engineering rests on five principles that together convert unreliable LLM output into deterministic engineering artifacts. These principles apply whether you are building a single AI-assisted feature in GitHub or operating a full multi-agent pipeline inside the Agentic QA Architecture.

Principle 1: Programmatic Boundaries Over Open-Ended Prompts

AI models require strict constraints to operate predictably. Programmatic boundaries define the exact input schema, required libraries, forbidden functions, and expected output format before any generation begins. This eliminates the hallucination space and forces the agent to stay inside your existing architecture rather than inventing plausible-sounding but incompatible code.
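One way to make such a boundary concrete is to encode it as data and check generated source against it before anything else runs. The sketch below is a minimal illustration, not a prescribed implementation: `GenerationBoundary`, `check_boundary`, and the field names are hypothetical, and the check uses Python's standard `ast` module on the assumption that the agent emits Python.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationBoundary:
    """Hypothetical contract handed to the agent before generation starts."""
    allowed_imports: frozenset[str]   # top-level packages the code may import
    forbidden_calls: frozenset[str]   # bare function names that must not be called
    required_function: str            # function the generated module must define


def check_boundary(source: str, boundary: GenerationBoundary) -> list[str]:
    """Return the boundary violations found in generated Python source."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]

    violations: list[str] = []
    defined: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] not in boundary.allowed_imports:
                    violations.append(f"import not allowed: {alias.name}")
        elif isinstance(node, ast.ImportFrom):
            if (node.module or "").split(".")[0] not in boundary.allowed_imports:
                violations.append(f"import not allowed: {node.module}")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in boundary.forbidden_calls:
                violations.append(f"forbidden call: {node.func.id}")
        elif isinstance(node, ast.FunctionDef):
            defined.add(node.name)

    if boundary.required_function not in defined:
        violations.append(f"missing function: {boundary.required_function}")
    return violations
```

An empty list means the output stayed inside the boundary. Anything else can be returned to the agent as an explicit rejection reason instead of being discovered in review.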

Principle 2: Modular Task Decomposition Before Execution

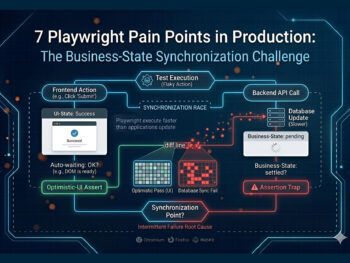

Research shows that 75.3% of multi-agent failures stem from the planner-coder gap, the disconnect between high-level intent and low-level execution. Context engineering closes this gap by requiring every Jira ticket to be decomposed into atomic, bounded subtasks. The human orchestrator reviews this decomposition before any code is generated, ensuring the AI is solving the right problem at the right resolution.

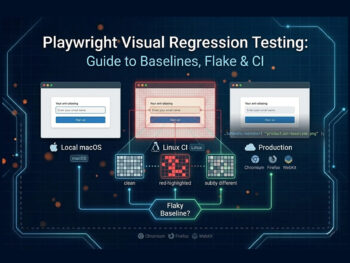
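A decomposition is easier to review when each subtask is a small record that can be checked mechanically before the human orchestrator signs off. The following sketch assumes a hypothetical `Subtask` shape (one acceptance criterion and a short file list per subtask) and an arbitrary limit of three files; neither comes from a specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subtask:
    task_id: str
    criterion: str           # the single acceptance criterion this subtask serves
    files: tuple[str, ...]   # files the builder agent is allowed to touch


def review_decomposition(
    ticket_criteria: list[str],
    subtasks: list[Subtask],
    max_files: int = 3,      # illustrative threshold for "atomic"
) -> list[str]:
    """Flag criteria with no subtask, and subtasks that are off-ticket or too broad."""
    problems: list[str] = []
    covered = {subtask.criterion for subtask in subtasks}

    for criterion in ticket_criteria:
        if criterion not in covered:
            problems.append(f"uncovered criterion: {criterion}")

    for subtask in subtasks:
        if subtask.criterion not in ticket_criteria:
            problems.append(f"{subtask.task_id}: criterion not in ticket")
        if len(subtask.files) > max_files:
            problems.append(
                f"{subtask.task_id}: touches {len(subtask.files)} files (max {max_files})"
            )
    return problems
```

The check does not replace the human review described above. It only guarantees that what the reviewer sees is complete and bounded.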

Principle 3: Context Window Management and Anti-Rot Strategies

Long-running agents suffer from context rot, the gradual degradation of instruction adherence as the context window fills with irrelevant tokens. Context engineering treats agentic memory as an active resource. That means dynamically pruning the context window, loading only the specific files needed for the current atomic task, and resetting agent state between modular steps to preserve the system prompt's authority.
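As a rough illustration of treating the context window as a budgeted resource, the sketch below rebuilds the context from scratch for each atomic task, always placing the system prompt first and adding only the files named for that task. The names `build_context` and `estimate_tokens` are hypothetical, and the word-count token estimate is a deliberate simplification; a real pipeline would use the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def build_context(
    system_prompt: str,
    task: str,
    repo_files: dict[str, str],   # path -> file contents
    needed_paths: list[str],      # files relevant to this atomic task only
    budget: int,                  # maximum estimated tokens for the whole context
) -> tuple[str, list[str]]:
    """Assemble a fresh context for one task; return it with the paths included."""
    parts = [system_prompt, task]          # system prompt always leads
    used = sum(estimate_tokens(part) for part in parts)
    included: list[str] = []

    for path in needed_paths:
        body = repo_files.get(path)
        if body is None:
            continue                       # unknown path: nothing to load
        chunk = f"# file: {path}\n{body}"
        cost = estimate_tokens(chunk)
        if used + cost > budget:
            continue                       # too large for the remaining budget
        parts.append(chunk)
        used += cost
        included.append(path)

    return "\n\n".join(parts), included
```

Because nothing carries over between calls, every atomic task starts from the system prompt and a clean slate, which is the reset behavior the principle calls for.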

Principle 4: Builder-Validator Separation

A foundational rule: the agent that writes the code cannot be the agent that tests the code. With 82% of developers using AI to write code but only 27.2% trusting it to test code, confirmation bias in a single-agent loop is a structural risk. A separate validator agent, operating from the original requirements rather than the generated code, produces independent verification instead of self-justifying approval.
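The separation can be enforced structurally: build the validator from the requirements alone, so it has no access to the builder's source or reasoning. In this minimal sketch, `make_validator`, the requirement records, and the shipping-fee example are all illustrative assumptions, and a plain Python function stands in for builder-generated code.

```python
from typing import Any, Callable


def make_validator(
    requirements: list[dict[str, Any]],
) -> Callable[[Callable[..., Any]], list[str]]:
    """Build checks from requirements only; the validator never reads builder code."""

    def validate(candidate: Callable[..., Any]) -> list[str]:
        failures: list[str] = []
        for req in requirements:
            try:
                actual = candidate(*req["args"])
            except Exception as exc:
                failures.append(f"{req['id']}: raised {type(exc).__name__}")
                continue
            if actual != req["expected"]:
                failures.append(
                    f"{req['id']}: expected {req['expected']!r}, got {actual!r}"
                )
        return failures

    return validate


# Illustrative requirement: orders of 50 or more ship free, otherwise the fee is 5.
REQUIREMENTS = [
    {"id": "AC-1", "args": (49,), "expected": 5},
    {"id": "AC-2", "args": (50,), "expected": 0},
]


def builder_output(order_total: int) -> int:
    """Stand-in for builder-generated code; uses > where the requirement means >=."""
    return 0 if order_total > 50 else 5


validate = make_validator(REQUIREMENTS)
failures = validate(builder_output)
```

Here `failures` contains a single entry for `AC-2`. A test suite written by the builder from its own code would likely have encoded the same off-by-one assumption and passed.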

Principle 5: Continuous Verification Loops

Adding explicit verification phases increases task success rates by up to 15.6%. Context engineering operationalizes this through modular test-driven development: the validator generates and executes unit tests against the builder's output, and failures feed back into the context as explicit error signals. The loop repeats until the programmatic boundaries are satisfied, rather than relying on a human to spot the problem post-hoc. For an end-to-end implementation pattern, see our LLM regression testing pipeline guide.
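The loop described above can be sketched in a few lines. This is an assumption-laden illustration rather than a reference implementation: `verification_loop` is a hypothetical name, the round limit is arbitrary, and `stub_build` and `stub_validate` are placeholders for a real builder agent and validator agent.

```python
from typing import Callable

Candidate = Callable[[int], int]


def verification_loop(
    build: Callable[[list[str]], Candidate],
    validate: Callable[[Candidate], list[str]],
    max_rounds: int = 3,
) -> tuple[Candidate, int]:
    """Rebuild until the validator reports no failures or the round limit is hit."""
    feedback: list[str] = []
    for round_no in range(1, max_rounds + 1):
        candidate = build(feedback)
        failures = validate(candidate)
        if not failures:
            return candidate, round_no
        feedback = failures   # failures become explicit error signals for the next round
    raise RuntimeError(f"unverified after {max_rounds} rounds: {feedback}")


def stub_build(feedback: list[str]) -> Candidate:
    """Placeholder builder: produces a boundary bug first, a fix once given feedback."""
    if feedback:
        return lambda total: 0 if total >= 50 else 5
    return lambda total: 0 if total > 50 else 5


def stub_validate(candidate: Candidate) -> list[str]:
    """Placeholder validator with cases derived from the requirement, not the code."""
    cases = {49: 5, 50: 0}
    return [
        f"input {arg}: expected {want}, got {candidate(arg)}"
        for arg, want in cases.items()
        if candidate(arg) != want
    ]
```

Raising after the final round matters as much as the loop itself: an unverified candidate is escalated to a human rather than merged.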

How Does Context Engineering Prevent Silent Semantic Errors?

Context engineering prevents silent semantic errors by enforcing a strict builder-validator chain that cross-references AI-generated output against business logic, not just compiler rules. It uses modular test-driven development to verify that generated code satisfies the original Jira requirements before any human sees the pull request.

Silent semantic errors are the most dangerous form of AI technical debt because they pass every surface-level check. Code compiles, tests run, linters stay quiet — yet the logic is subtly wrong. Industry research attributes 75.17% of multi-agent failures to exactly this class of error. Vibe coding cannot catch them because the developer assumes compilation equals correctness. Context engineering catches them by binding every generated line back to a verified, executable test case derived from the original acceptance criteria, not from the generated code itself.
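A small, invented example shows how this kind of error slips through. The function below runs without complaint, and a check written by reading the code passes, yet it violates an acceptance criterion. The criterion, the function, and the amounts are all hypothetical.

```python
SHIPPING_FEE_CENTS = 500
FREE_SHIPPING_THRESHOLD_CENTS = 5000


def shipping_fee(subtotal_cents: int, discount_cents: int) -> int:
    """Stand-in for generated code that looks plausible and runs cleanly."""
    # Semantic bug: compares the pre-discount subtotal against the threshold.
    if subtotal_cents >= FREE_SHIPPING_THRESHOLD_CENTS:
        return 0
    return SHIPPING_FEE_CENTS


def check_derived_from_code() -> None:
    # Written by reading the implementation, so it mirrors the implementation's assumption.
    assert shipping_fee(6000, 0) == 0
    assert shipping_fee(4000, 0) == 500


def check_derived_from_criterion() -> None:
    # Illustrative criterion: shipping is free only when the subtotal
    # AFTER discounts is at least 5000 cents. 5500 - 1000 = 4500, so a fee applies.
    assert shipping_fee(5500, 1000) == 500


def passes(check) -> bool:
    """Run a check and report whether every assertion held."""
    try:
        check()
    except AssertionError:
        return False
    return True
```

`passes(check_derived_from_code)` holds while `passes(check_derived_from_criterion)` does not, which is the gap that criteria-derived validation is meant to expose.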

Try It Now

Stop vibe coding. Start verifying every AI-generated test case.

Paste any user story into TestStory.ai and watch the orchestration layer generate structured, Gherkin-formatted test cases instantly — covering happy paths, edge cases, and the failure scenarios your team would typically miss. No account required.

No credit card required.

Context Engineering in Practice — From Jira Ticket to Verified Code

Implementing context engineering requires a workflow that wires your existing toolchain into a deterministic pipeline. The process starts in Jira, where the human orchestrator defines acceptance criteria. Instead of pasting that ticket into an LLM, the framework extracts the criteria programmatically and uses them to establish the boundaries for generation.

From there, the system queries GitHub to assemble agentic memory, pulling only the files relevant to the specific ticket to prevent context rot. The builder agent generates the code against those boundaries, while a separate validator agent generates test cases directly from the Jira criteria — never from the builder's output. Only when the continuous verification loop passes does anything reach a pull request, meaning the human orchestrator reviews verified logic rather than playing AI babysitter. This pattern is what turns the hub-level Agentic SDLC into a repeatable operational discipline.
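The first and last steps of that workflow, extracting criteria and gating the pull request, can be sketched without touching any real API. The example assumes the ticket description is plain text with an "Acceptance criteria" heading followed by `- ` bullets; that layout, the function names, and the result mapping are hypothetical, and fetching the ticket from Jira or opening the pull request in GitHub is deliberately left out.

```python
def extract_criteria(description: str) -> list[str]:
    """Pull bullet items that follow an 'Acceptance criteria' heading."""
    criteria: list[str] = []
    in_section = False
    for raw in description.splitlines():
        line = raw.strip()
        if line.lower().startswith("acceptance criteria"):
            in_section = True
            continue
        if in_section:
            if line.startswith("- "):
                criteria.append(line[2:].strip())
            elif line:
                in_section = False   # any other non-blank line ends the section
    return criteria


def ready_for_pull_request(criteria: list[str], results: dict[str, bool]) -> bool:
    """Allow a pull request only if every criterion has a passing validator result."""
    return bool(criteria) and all(results.get(item, False) for item in criteria)


TICKET_DESCRIPTION = """\
As a shopper I want clear shipping fees.

Acceptance criteria:
- Orders of 50 or more ship free
- Orders below 50 pay a flat fee of 5

Notes:
- Design mock attached
"""

criteria = extract_criteria(TICKET_DESCRIPTION)
```

A ticket with no extractable criteria never passes the gate, so generation cannot proceed on an underspecified requirement.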

How Does TestQuality Enforce Context Engineering at the Verification Layer?

TestQuality enforces context engineering by acting as the deterministic verification engine in the builder-validator chain. Its native Jira and GitHub integrations anchor every test case to business requirements and version-controlled code, so AI output is always evaluated against real-world constraints, not its own assumptions.

Through TestStory.ai, TestQuality automates the validator side of the chain. When AI-generated code heads toward merge, TestStory.ai generates test cases derived from the original Jira acceptance criteria, enforcing modular test-driven development without requiring engineers to hand-author every scenario. It bridges the planner-coder gap by ensuring the intent defined in Jira is verified against test cases in GitHub before deployment. Teams new to this verification model can start experimenting with the free test case builder before rolling it into their full pipeline.

Summary: Mastering Context Engineering

From Unpredictable AI Output to Deterministic Software Assets

Six disciplines that separate reliable engineering from AI babysitting.

Reject vibe coding: Unstructured prompting creates AI technical debt and forces engineers to spend roughly 11 hours per week debugging hallucinations from unconstrained generation.

Enforce boundaries: Define strict input schemas, required libraries, and forbidden functions before allowing any AI code generation against your architecture.

Decompose tasks: Bridge the planner-coder gap, which causes 75.3% of multi-agent failures, by forcing modular task decomposition before any code is generated.

Manage context rot: Actively prune the context window and reset agentic memory between atomic tasks to preserve instruction adherence across long workflows.

Separate builder from validator: The agent that writes code cannot be the agent that tests it. Independent validation eliminates the confirmation bias of a single-agent loop, a risk underscored by the 82% of developers who use AI to write code while only 27.2% trust it to test that code.

Automate verification: Explicit verification phases raise task success rates by up to 15.6%, and TestQuality operationalizes that loop at the QA layer to catch silent semantic errors before merge.

Humans remain the orchestrators. Context engineering gives them the framework to orchestrate reliably, turning unpredictable AI output into deterministic software assets.

Start Free Today

Stop vibe coding. Start engineering deterministic AI workflows.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, tracking, and team collaboration. Context-engineered QA means silent semantic errors get caught before merge, the planner-coder gap closes, and your team stops shipping AI technical debt to production.

✦ Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost.

No credit card required on either platform.