At a Glance

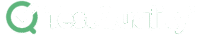

Five diagnostic patterns. One decision tree.

A senior practitioner's triage playbook for Playwright flakiness in 2026.

Flakiness is architectural, not framework-borne: Almost every flake traces back to async state, locator drift, session pollution, environment variance, or AI-agent non-determinism — not to Playwright itself.

The fix is bigger than the diagnosis: Replace static waits with expect.poll, swap CSS selectors for role-based locators, inject sessions via storageState, and pin CI to Microsoft's official Playwright Docker image.

Triage requires named thresholds: A 10-run test failing 3 times is officially flaky; 4 failures in 20 runs signals an environmental pattern, not a defect — and AI agents introduce token-cost retry abandonment that no static threshold catches.

The job of a 2026 QA architect isn't to eliminate flakiness — it's to operationalize a decision tree that fixes, retries, or quarantines each flake before it erodes pipeline trust.

What actually causes Playwright flaky tests in 2026?

Playwright flaky tests in 2026 are almost never framework bugs. They are architectural symptoms of asynchronous state mismatches, brittle DOM coupling, polluted authentication contexts, cross-environment rendering variance, or — increasingly — AI agents introducing non-deterministic execution into Model Context Protocol (MCP) workflows. Fixing flakiness in 2026 means diagnosing which of these five patterns is firing, then applying a structural fix rather than padding a timeout. This playbook gives you the symptom-to-fix mapping for each.

When a test passes locally but fails in continuous integration, the reflexive response is to blame the runner or insert a hard wait. At enterprise scale, that approach degrades suite performance and masks underlying application issues. Test code deserves the same architectural rigor as production code: flakiness is a design problem, not a configuration knob.

A diagnostic approach starts with categorizing the failure before touching the code. Is it deterministic under specific load? Tied to a third-party rate limit? Triggered when components render before their event listeners attach? Each of the five patterns below has a distinct symptom signature and a distinct fix. The remaining sections walk through each, weighted roughly 30% diagnosis, 70% fix, because by 2026 the diagnosis is largely solved and the fix is what earns engineering trust and prevents lost productivity.

How do you diagnose async-state and race-condition flakiness?

Async-state flakiness shows up as tests that pass locally but fail intermittently in CI when network latency or hydration timing slips. The root cause is almost always static timeouts or assertions that fire before the application's data state has resolved. The fix is dynamic polling — expect.poll against the source of truth, or page.waitForResponse() on the specific async payload.

Async-state is the most-cited flakiness category in the industry, and we treat it in full architectural depth in our Playwright pain points pillar — including the documented Slack and GitHub reliability gains from eliminating static waits. Here we focus on the diagnostic and fix mechanics.

The pattern: an action triggers an asynchronous backend job, and the test asserts on a UI element before the data has propagated. Standard auto-waiting doesn't help, because the DOM element exists — it just contains stale data. The resilient pattern shifts the assertion off the UI and onto the source of truth.

// Brittle: static wait + UI assumption

await page.getByRole('button', { name: 'Process' }).click();

await page.waitForTimeout(5000);

expect(await page.locator('.status-badge').innerText()).toBe('Complete');

// Resilient: dynamic polling against application state

await page.getByRole('button', { name: 'Process' }).click();

await expect.poll(async () => {

const response = await page.request.get('/api/job-status');

return (await response.json()).status;

}, { timeout: 10000 }).toBe('Complete');

expect.poll continuously queries the source of truth until the condition resolves or the timeout fires. Alternatively, page.waitForResponse() decouples test pacing from CI execution speed entirely, ensuring the test only proceeds after the specific async payload arrives. Either pattern eliminates the entire class of "wait long enough and hope" failures.
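The page.waitForResponse() alternative can be sketched as follows. This is a minimal sketch under stated assumptions: the /api/job-status path is reused from the polling example, the 10-second timeout is arbitrary, and PageLike and ResponseLike are hypothetical structural stand-ins for the parts of Playwright's Page and Response used here, so the helper can be exercised without a browser. A real Playwright page fixture should satisfy the same shape.

```typescript
// Hypothetical structural subset of Playwright's Response (assumption).
interface ResponseLike {
  url(): string;
  ok(): boolean;
  json(): Promise<unknown>;
}

// Hypothetical structural subset of Playwright's Page (assumption).
interface PageLike {
  waitForResponse(
    predicate: (response: ResponseLike) => boolean,
    options?: { timeout?: number },
  ): Promise<ResponseLike>;
  getByRole(role: 'button', options: { name: string }): { click(): Promise<void> };
}

// Clicks "Process" and resolves with the status carried by the matching response.
async function processAndAwaitStatus(page: PageLike): Promise<string> {
  // Start listening BEFORE the click so a fast response cannot be missed.
  const responsePromise = page.waitForResponse(
    (response) => response.url().includes('/api/job-status') && response.ok(),
    { timeout: 10000 },
  );
  await page.getByRole('button', { name: 'Process' }).click();

  // Resolves with the first response that matches the predicate.
  const response = await responsePromise;
  const body = (await response.json()) as { status: string };
  return body.status;
}
```

In a test, the result would typically be asserted with something like expect(await processAndAwaitStatus(page)).toBe('Complete'). Because waitForResponse resolves on the first matching response, it fits endpoints that answer once with the final state; if the endpoint reports intermediate statuses, expect.poll is likely the better fit.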

How do you diagnose locator drift and DOM-coupling flakiness?

Locator drift flakiness fires when minor UI changes break tests coupled to brittle CSS classes or deep XPath structures. The diagnostic signature is a TimeoutError where the element exists visually but the framework cannot resolve its structural path. The fix is migrating to user-centric locators — getByRole, getByText, getByLabel — with data-testid as the disciplined fallback.

Modern frontend frameworks generate dynamic class names (Tailwind atomic classes, CSS Modules hashes) and frequently restructure the DOM during component refactoring. Tests coupled to those implementation details flake on every layout adjustment. The selector wasn't wrong yesterday — the developer simply moved a <div>.

The fix standardizes on locators that mirror how a real user (or assistive technology) interacts with the page. These are inherently resilient to structural DOM changes because they target semantic intent, not visual structure. When semantic roles aren't available, a data-testid contract acts as an explicit interface between developers and QA.

// Brittle: coupled to DOM structure and styling

await page.locator('div.main-container > ul > li:nth-child(3) > button.btn-primary').click();

// Resilient: coupled to accessibility and user intent

await page.getByRole('button', { name: 'Submit Order' }).click();

// Resilient fallback: coupled to a dedicated testing contract

await page.getByTestId('order-submit-action').click();

Enforcing this across a large suite usually requires linting rules: eslint-plugin-playwright can flag every page.locator() call where a getBy* alternative exists. Divorcing test automation from the DOM's visual structure eliminates the largest single source of maintenance overhead and false-positive flakiness in any mature suite.

How do you diagnose authentication and session flakiness?

Authentication flakiness manifests as unpredictable login failures, session timeouts, or rate-limit blocks when tests run in parallel. The symptom is a test that fails on step one: the login screen. The fix is to bypass the UI for authentication entirely by generating a session token via API and injecting it into the browser via Playwright's storageState.

We covered the broader architectural impact of session management in our pain points analysis. Diagnostically, the smoking gun is a suite where every test starts by navigating to /login, filling credentials, and submitting a form. That pattern multiplies failure vectors: network blips, identity-provider rate limits, CAPTCHA triggers, and shared browser contexts polluting cookies across parallel workers.

The resilient pattern authenticates once, saves the session state to a JSON file during global setup, and injects it into every test's browser context. Authentication cost becomes O(1) per suite run rather than O(n) in the number of tests.

// Brittle: UI login inside the test block

test('Dashboard loads', async ({ page }) => {

await page.goto('/login');

await page.fill('#user', 'admin');

await page.fill('#pass', 'secret');

await page.click('#login-btn');

await expect(page.getByRole('heading', { name: 'Dashboard' })).toBeVisible();

});

// Resilient: API auth in global setup, injected via storageState

test('Dashboard loads', async ({ page }) => {

// browser context is already authenticated on launch

await page.goto('/dashboard');

await expect(page.getByRole('heading', { name: 'Dashboard' })).toBeVisible();

});

For multi-role suites (admin vs. standard user), generate multiple storageState JSON files during global setup and have tests declare their requirement via test.use({ storageState: 'admin.json' }). Parallel workers stay isolated, session pollution disappears, and rate-limit blocks against your auth provider stop happening entirely.
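As a complement to per-test test.use(), the role split can also be declared once at the project level. The fragment below is a sketch of a playwright.config.ts, not a definitive setup: the global-setup.ts script, the user.json file name, and the *.admin.spec.ts naming convention are all hypothetical, and a real project would typically wrap the object in defineConfig() from '@playwright/test' (a plain object is used here so the fragment has no dependencies).

```typescript
// playwright.config.ts (sketch)
const config = {
  // Hypothetical script that authenticates via API and writes the JSON files below.
  globalSetup: './global-setup.ts',
  projects: [
    {
      name: 'admin',
      // Hypothetical convention: admin-only specs end in .admin.spec.ts
      testMatch: /.*\.admin\.spec\.ts/,
      use: { storageState: 'admin.json' },
    },
    {
      name: 'standard-user',
      // Everything that is not an admin spec runs as the standard user.
      testIgnore: /.*\.admin\.spec\.ts/,
      use: { storageState: 'user.json' }, // hypothetical file name
    },
  ],
};

export default config;
```

Declaring roles per project keeps the session choice out of individual test files; test.use() remains available for the occasional spec that needs a different role.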

<!-- CUSTOM HTML BLOCK: MID-ARTICLE CTA -->

<div style="background:#eef4ff;border:1px solid #c0d4f5;border-radius:6px;padding:36px 40px;margin:48px 0;text-align:left;">

<p style="font-size:1.1em;font-weight:700;color:#3b6fd4;text-transform:uppercase;letter-spacing:0.1em;margin:0 0 12px 0;">

Try It Now

</p>

<p style="font-size:1.35em;font-weight:700;color:#1a1a2e;margin:0 0 16px 0;line-height:1.3;">

Stop chasing false positives — generate test cases that actually cover your edge paths

</p>

<p style="color:#333;line-height:1.8;margin:0 0 28px 0;">

Paste any user story into <strong>TestStory.ai</strong> and watch the orchestration layer generate structured, Gherkin-formatted test cases instantly — covering happy paths, edge cases, and the failure scenarios your team would typically miss. No account required.

</p>

<div style="display:flex;flex-wrap:wrap;gap:12px;justify-content:flex-start;">

<a href="https://testquality.com/free-test-case-builder/" target="_blank" rel="noopener"

style="display:inline-block;background:#3b6fd4;color:#fff;padding:15px 34px;border-radius:4px;font-weight:700;text-decoration:none;">

Try TestStory.ai Free →

</a>

</div>

<p style="margin:16px 0 0 0;font-size:.85em;color:#6b7fa8;">

No credit card required.

</p>

</div>

How do you diagnose cross-environment and rendering flakiness?

Cross-environment flakiness fires when tests pass on a developer's macOS laptop but fail on a Linux CI runner. The root cause is variance in system-level font rendering, anti-aliasing algorithms, GPU acceleration, or browser-binary drift between local and pipeline environments. The fix is to standardize execution inside Microsoft's official Playwright Docker image — for both baseline generation and CI runs.

Visual regression tests via toHaveScreenshot are the most vulnerable to this pattern. macOS renders fonts with different anti-aliasing algorithms than Ubuntu; a baseline captured locally and compared against a CI execution will fail on microscopic pixel differences even when the application is functionally identical. The pattern also extends to timezone discrepancies, locale-specific date formats, and currency symbol variance.

Microsoft's official Playwright documentation explicitly recommends running CI inside the Microsoft Playwright Docker image (mcr.microsoft.com/playwright) to pin the operating system, font libraries, and browser binaries to a known state. Independently, configuring a reasonable pixel threshold absorbs the residual rendering noise that even a normalized environment can't fully eliminate.

// Brittle: strict visual comparison across OS environments

await expect(page).toHaveScreenshot('dashboard.png');

// Resilient: tolerating minor rendering variances

await expect(page).toHaveScreenshot('dashboard.png', {

maxDiffPixels: 150,

threshold: 0.2, // allows slight anti-aliasing differences

});

Beyond snapshots, environment flakiness frequently stems from timezone drift between local machines and CI servers. Explicitly setting timezoneId and locale in playwright.config.ts ensures date pickers, currency formatters, and any locale-aware UI behave deterministically across all runners. Pin your CI to a single timezone the same way you pin your Docker image: both are small configuration investments that prevent real flakes.
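The timezone, locale, and screenshot tolerances can be set once in configuration rather than per assertion. The fragment below is a sketch of a playwright.config.ts: the 'UTC' and 'en-US' values are assumptions (pick whatever your team standardizes on), the tolerance values are carried over from the example above, and a real project would typically wrap the object in defineConfig().

```typescript
// playwright.config.ts (sketch)
const config = {
  use: {
    timezoneId: 'UTC', // assumption: any single fixed zone works
    locale: 'en-US',   // assumption: any single fixed locale works
  },
  expect: {
    // Suite-wide defaults for every toHaveScreenshot() call.
    toHaveScreenshot: {
      maxDiffPixels: 150,
      threshold: 0.2,
    },
  },
};

export default config;
```

Individual assertions can still pass their own options when a specific page needs a tighter or looser tolerance.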

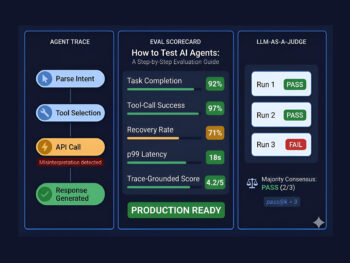

How does AI-driven testing introduce new flakiness patterns?

AI-driven testing introduces flakiness through non-deterministic LLM outputs, external API latency, token-cost-driven retry abandonment, and structural blind spots like Shadow DOM. Diagnosis means tracking agent re-authentication loops, accessibility-tree drift, context-window exhaustion in MCP environments, and the long-horizon tracking failures that plague autonomous agents past roughly 30 interaction turns.

Integrating AI shifts the failure domain from code logic to model interpretation. Currents.dev's 2026 ecosystem analysis documents the token-economics problem first: MCP streams full accessibility trees inline rather than persisting them to disk, which forces the LLM to process roughly 114K tokens per test versus 27K for equivalent CLI workflows. At scale, that cost differential triggers retry abandonment — agents simply give up on long-horizon tasks rather than burn budget.
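To make the token arithmetic concrete, the sketch below compares how many attempts fit into a fixed budget at each per-test cost. The per-test figures are the ones quoted above; the 1,000,000-token budget is a hypothetical number chosen only for illustration.

```typescript
// Approximate tokens consumed per test, as quoted above.
const MCP_TOKENS_PER_TEST = 114000;
const CLI_TOKENS_PER_TEST = 27000;

// Number of full attempts (first run plus retries) that fit in a token budget.
function attemptsWithinBudget(budgetTokens: number, tokensPerTest: number): number {
  return Math.floor(budgetTokens / tokensPerTest);
}

const budget = 1000000; // hypothetical budget

const mcpAttempts = attemptsWithinBudget(budget, MCP_TOKENS_PER_TEST); // 8
const cliAttempts = attemptsWithinBudget(budget, CLI_TOKENS_PER_TEST); // 37
const costRatio = (MCP_TOKENS_PER_TEST / CLI_TOKENS_PER_TEST).toFixed(1); // "4.2"

console.log({ mcpAttempts, cliAttempts, costRatio });
```

Under this budget a single retry of an MCP-driven test consumes roughly an eighth of the total, which is the pressure behind retry abandonment.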

Bug0's research into MCP servers surfaces a second pattern: Shadow DOM blindness. Modern design systems (Shoelace, Lit, corporate component libraries) hide elements inside shadow roots, and standard accessibility-tree snapshots cannot see through them. The agent hallucinates an interaction, attempts to click an element nested several shadow layers deep, and the framework can't resolve the target.

// Brittle: AI agent attempting interaction via standard accessibility tree

// fails because the element sits inside a shadow root that the snapshot does not expose

await agent.click('Checkout Button');

// Resilient: drop into Playwright's native locator chain

// Playwright handles open shadow roots natively

await page.locator('shopping-cart').locator('button.checkout').click();

A third vector is agent re-authentication loops: AI agents rarely persist session state out of the box, so they re-authenticate on every step, hitting backend rate limits and triggering security blocks. The fix mirrors the human-suite pattern: wire storageState into the agent's workflow rather than letting it improvise.

A fourth vector, documented by Anton Angelov's "Testing Frontier" research, is long-horizon tracking failure: comprehensive autonomous web testing requires dozens of interaction turns, and even the strongest 2026 models fail to exceed an F1 score of 30% on real web testing because they lose track of prior application states past ~30 turns [S056]. Mitigation: cost-aware strategies, critical-path-first testing, and tiered model selection rather than exhaustive coverage.

A fifth vector — and the one that destroys defect pipelines — is high false-positive rate combined with default-correctness bias. LLMs frequently mistake transient UI rendering delays for defects (precision around 30%) while simultaneously defaulting to a "Pass" judgment when they don't see explicit failure evidence (recall under 25%). The mitigation is structural: separate test planning from execution. Humans define the oracle as a structured checklist; AI agents handle execution against that checklist, never authoring the pass/fail criteria themselves.
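One way to picture the planning/execution split is a human-authored checklist whose verdicts are computed deterministically from the evidence an agent collects. This is a minimal sketch, not a prescribed design: the ChecklistItem and Observations shapes, the example checks, and the 'inconclusive' verdict for missing evidence are all assumptions introduced here for illustration.

```typescript
type Verdict = 'pass' | 'fail' | 'inconclusive';

// Authored by a human; the agent never edits these.
interface ChecklistItem {
  id: string;
  description: string;
  // Deterministic predicate over the evidence the agent observed.
  check: (observed: string) => boolean;
}

// Produced by the agent during execution: evidence only, no judgment.
type Observations = Record<string, string | undefined>;

function evaluate(
  checklist: ChecklistItem[],
  observations: Observations,
): Record<string, Verdict> {
  const verdicts: Record<string, Verdict> = {};
  for (const item of checklist) {
    const observed = observations[item.id];
    if (observed === undefined) {
      // Missing evidence is never promoted to a pass.
      verdicts[item.id] = 'inconclusive';
    } else {
      verdicts[item.id] = item.check(observed) ? 'pass' : 'fail';
    }
  }
  return verdicts;
}

// Hypothetical checklist for a checkout flow.
const checklist: ChecklistItem[] = [
  { id: 'order-total', description: 'Cart total shows $42.00', check: (v) => v === '$42.00' },
  { id: 'confirmation', description: 'Confirmation banner is shown', check: (v) => v.includes('Order confirmed') },
];

// The agent reported the total but never captured the banner.
const result = evaluate(checklist, { 'order-total': '$42.00' });
// result: { 'order-total': 'pass', confirmation: 'inconclusive' }
```

Routing absent evidence to a separate verdict is one way to counter the default-to-pass bias: the agent cannot turn silence into a green result.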

Finally, framework-immaturity overhead matters at the suite level. ScrollTest's analysis is direct: if your Playwright suite is under 200 tests, the complexity overhead of MCP integration is almost certainly not justified [SC-MCP-26]. Build the foundation first, add intelligence later.

For the full architectural treatment of test agents and MCP, see our forthcoming Article 5 on test agents and MCP architecture. For a deeper look at AI-specific failure modes including Shadow DOM and re-auth loops, see the AI flakiness deep dive when published. For the macro view, see our Agentic QA pillar and Agentic SDLC hub.

When should you fix a flaky test, retry it, or quarantine it?

Decide by historical pattern, not by the most recent failure. Fix tests with deterministic root causes (locator drift, missing storageState). Retry tests with transient external dependencies (use trace: 'on-first-retry'). Quarantine tests exceeding a probabilistic flakiness threshold — typically 4 failures in 20 runs — until the underlying cause is diagnosed and fixed.

Without a formalized decision tree, engineers waste hundreds of hours guessing. Steve Kinney's CI failure triage workflow is the discipline: before touching code, (1) read the failure summary, (2) open the trace, (3) use the action list to find the failing step, (4) compare snapshots and network logs. Only after that evidence review do you decide whether the bug is in the test, the app, the config, or the environment (stevekinney.com).

Operationalizing the decision tree requires named numeric thresholds. The Testing Academy's baseline rule: run a test 10 consecutive times — if it passes 7 times and fails 3, flag it officially as flaky. TestDino's broader rule: 4 failures in the last 20 runs, especially with different failure modes, indicates a flaky pattern rather than a hard defect. For suites past 500 tests, binary pass/fail is insufficient — build a Probabilistic Flakiness Score that grades reliability on a spectrum.
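The two thresholds can be expressed as small functions over a test's run history. This is a sketch under stated assumptions: the rules are read as "at least N failures, but not every run failing" (a test that fails every run is treated as a hard defect, not a flake), and flipRate is only one possible ingredient for a probabilistic score, since the text above does not define a formula.

```typescript
// true = pass, false = fail; most recent run last.
type RunHistory = boolean[];

function failuresInLast(history: RunHistory, window: number): number {
  return history.slice(-window).filter((passed) => !passed).length;
}

// 10-run rule: at least 3 failures in the last 10 runs, but not all 10.
function isFlakyByTenRunRule(history: RunHistory): boolean {
  if (history.length < 10) return false;
  const failures = failuresInLast(history, 10);
  return failures >= 3 && failures < 10;
}

// 4-in-20 rule: at least 4 failures in the last 20 runs, but not all 20.
function exceedsQuarantineThreshold(history: RunHistory): boolean {
  if (history.length < 20) return false;
  const failures = failuresInLast(history, 20);
  return failures >= 4 && failures < 20;
}

// Hypothetical score ingredient: fraction of consecutive runs whose outcome
// differs. Consistent failures score 0; alternating results score near 1.
function flipRate(history: RunHistory): number {
  if (history.length < 2) return 0;
  let flips = 0;
  for (let i = 1; i < history.length; i++) {
    if (history[i] !== history[i - 1]) flips++;
  }
  return flips / (history.length - 1);
}

const tenRuns: RunHistory = [true, true, false, true, true, true, false, true, true, false];
console.log(isFlakyByTenRunRule(tenRuns)); // true (3 failures in 10)
console.log(flipRate(tenRuns).toFixed(2)); // "0.56" (5 flips across 9 transitions)
```

Separating failure count from flip rate lets a dashboard distinguish a test that broke and stayed broken from one that oscillates, which binary pass/fail cannot do.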

Independently, Microsoft's official Playwright CI documentation recommends configuring workers: 1 on resource-constrained CI runners and setting a maxFailures ceiling to prevent CPU saturation from manufacturing fake flakes. Microsoft also advises trace: 'on-first-retry' — passing tests stay fast and cheap, while a failing test automatically generates a diagnostic artifact exactly when a retry is triggered. The Slack Engineering team has separately published their auto-suppression workflow for tests exceeding internal failure thresholds, treating quarantine as a default action rather than a manual escalation.
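Those settings map onto a handful of configuration keys. The fragment below is a sketch of a CI-oriented playwright.config.ts: the retries and maxFailures values are assumptions (the text above names the options, not the numbers), and a real project would typically wrap the object in defineConfig() and apply the CI-only values conditionally.

```typescript
// playwright.config.ts (sketch, CI-oriented values)
const config = {
  workers: 1,      // serialize tests on resource-constrained CI runners
  retries: 2,      // assumption: the retry count is a team choice
  maxFailures: 10, // assumption: stop the run early after this many failures
  use: {
    // Record a trace only when a test is retried for the first time.
    trace: 'on-first-retry',
  },
};

export default config;
```

With retries enabled, a test that fails and then passes is reported as flaky rather than failed, which gives the threshold tracking described above a signal to count.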

Flakiness Triage Decision Matrix

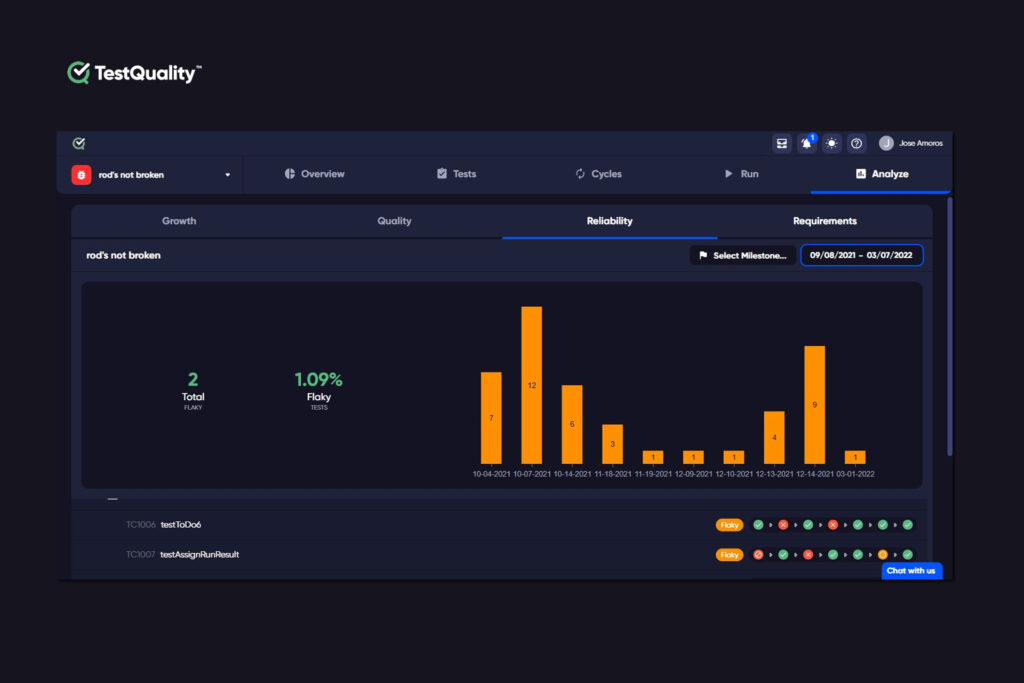

Operationalizing this triage at scale requires a management plane.

The standard workflow: configure Playwright's reporter to emit JUnit XML (reporter: [['junit', { outputFile: 'results.xml' }]] in playwright.config.ts), then run testquality upload_test_run to push results into a named TestQuality project and cycle.

Once results are ingested, TestQuality aggregates historical pass/fail across runs, surfaces tests that match the 4-in-20 threshold, and lets QA leads quarantine unstable tests at the management layer. TestQuality's "Test reliability" tab helps you identify flaky tests; in its graph, each test's flakiness is displayed as icons.

When a tester confirms a genuine defect (rather than a flake or expected change), they log it in TestQuality, and the GitHub or Jira integration syncs the defect record to the team's tracker, so developers only spend cycles on real application issues, not environmental noise.

Technical Deep Dive FAQ

Key Takeaways

The 2026 Playwright flakiness playbook in six lines.

Diagnose the pattern. Apply the fix. Operationalize the threshold.

Async state: Replace waitForTimeout() with expect.poll against the source of truth or page.waitForResponse() on the specific async payload.

Locator drift: Migrate to getByRole, getByText, getByLabel; enforce with linting; fall back to data-testid as a developer-QA contract.

Auth pollution: Bypass UI logins via storageState JSON files generated in global setup; declare role-specific state per test.

Environment drift: Pin CI to mcr.microsoft.com/playwright; configure maxDiffPixels, timezoneId, and locale for determinism.

AI agent flakiness: Watch for Shadow DOM blindness, re-auth loops, token-cost retry abandonment, and the documented sub-30% F1 ceiling on long-horizon agent runs.

Triage policy: Fix deterministic flakes; retry transient failures with trace: 'on-first-retry'; quarantine tests exceeding 4 failures in 20 runs.

Flakiness eradication isn't a Playwright config problem — it's a discipline problem. The teams that ship reliably in 2026 are the ones who built the decision tree before they needed it.

Start Free Today

Operationalize your flakiness triage — stop relitigating the same failures every sprint.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, tracking, and team collaboration. The TestQuality CLI ingests Playwright's JUnit XML output into a named project and cycle, surfaces historical flake patterns across runs, and makes the 4-in-20 quarantine threshold a one-click policy rather than a manual triage cost.

✦ Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost.

No credit card required on either platform.

Sources

- The Testing Academy, "Selenium Is Not Enough in 2026 – You Need Playwright +AI" — YouTube (2026)

- TestDino, "The Playwright reporting gap: why test reports don't scale" — TestDino (2026)

- Artem Bondari, "3 Reasons for Playwright Flaky Tests (and How To Fix It)" — @ArtemBondarQA (2026)

- Microsoft, "Trace viewer" — Playwright Official Docs

- Microsoft, "Playwright Parallelism & CI Documentation" — Playwright Official Docs (2026)

- Steve Kinney, "The Playwright Trace Viewer | Self-Testing AI Agents" — stevekinney.com (2026)

- Currents.dev, "State of Playwright AI Ecosystem in 2026" — Currents.dev (2026)

- Bug0, "6 most popular Playwright MCP servers for AI testing in 2026" — Bug0 (2026)

- Anton Angelov, "The Testing Frontier: Research Brief #13 — No AI Model Can Break 30% on Real Web Testing. Here Is Why." — LinkedIn (2026)

- Skyvern, "Playwright MCP Reviews and Alternatives 2025" — Skyvern (2026)

- Pramod Dutta, "Playwright MCP + LLM Architecture: Building AI-Augmented Test Automation That Actually Works" — ScrollTest.com (2026)