At a Glance

Playwright Test Agents and MCP — A 2026 Architecture Decision

Strategic guidance for engineering leaders evaluating agentic Playwright workflows

Definition: Playwright test agents are LLM-driven execution loops that interpret high-level intent via the Model Context Protocol (MCP), rather than executing hardcoded selectors.

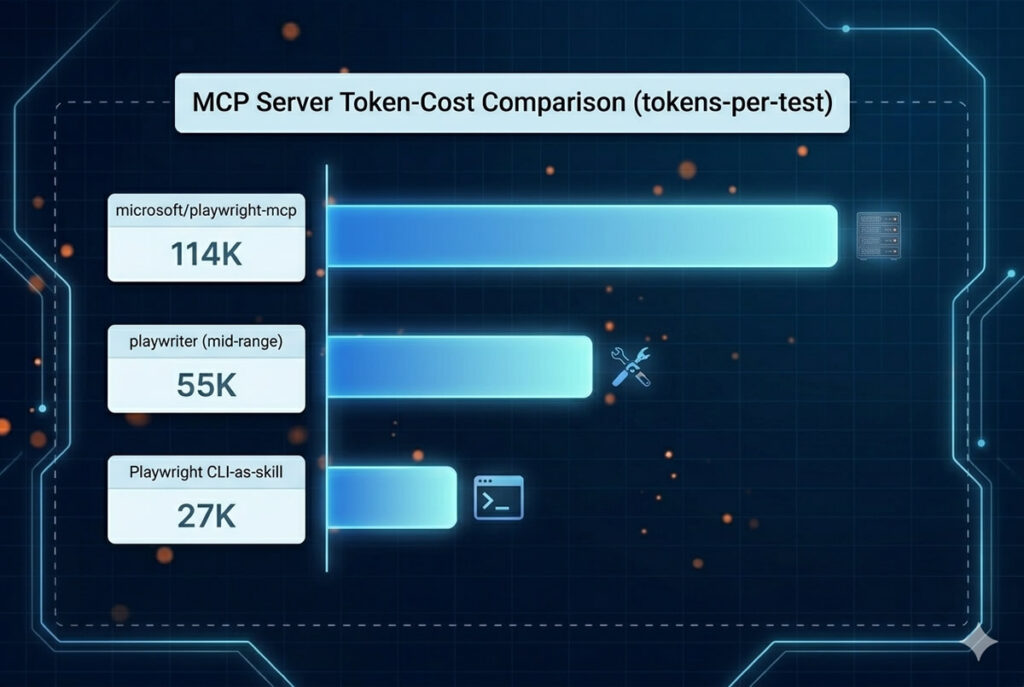

Token economics: Microsoft's MCP server consumes ~200–400 tokens per accessibility-tree snapshot, while full agent test runs can reach ~114K tokens vs. ~27K for CLI-skill workflows.

Readiness threshold: Practitioner guidance puts the adoption floor at roughly 200 stable tests — below that, agent complexity overhead exceeds the maintenance savings.

Test agents are not a replacement for a mature Playwright framework — they are a force-multiplier layered on top of one. The architectural decision is not whether to adopt them, but when, with which MCP server, and under what cost-and-quarantine guardrails.

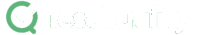

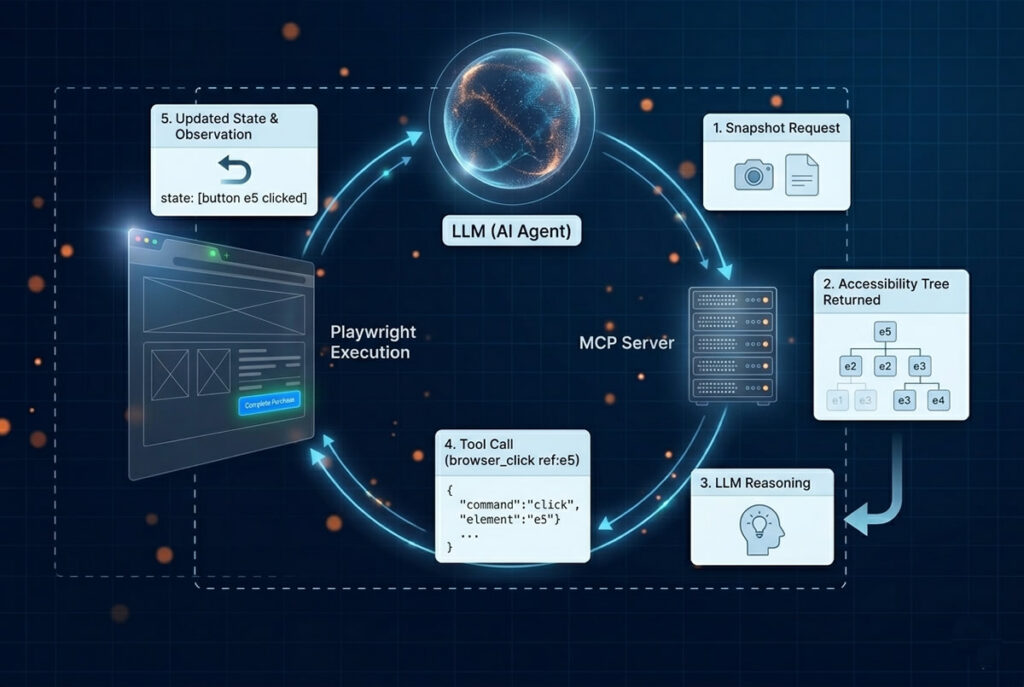

Playwright test agents are LLM-driven execution loops that wrap Playwright's browser automation in a goal-oriented reasoning layer. Instead of executing pre-written scripts, an agent receives high-level intent ("complete checkout and verify the success modal"), inspects the page's accessibility tree, and chooses which Playwright tool to invoke next. The Model Context Protocol (MCP) is the standardized bridge that exposes Playwright capabilities to the LLM and returns structured page context back. In 2026, MCP has consolidated the agentic-Playwright ecosystem around a single protocol, with Microsoft's reference server as the default and specialized servers competing on token efficiency. The architectural decision is no longer whether to adopt — it's how, when, and with which constraints.

What are Playwright test agents and how do they differ from traditional automation in 2026?

Playwright test agents differ from traditional automation in three structural ways: they are intent-driven rather than step-driven, they reason about page state through the accessibility tree, and they operate through a tool-use loop mediated by the Model Context Protocol — replacing rigid selectors with dynamic decision-making at runtime.

For engineers transitioning from deterministic frameworks, the conceptual delta is significant. A traditional Playwright script is procedural — it dictates the how. If a selector changes or an unexpected modal interrupts the flow, the script fails. A Playwright test agent dictates the what. Given the same checkout-verification task, the agent queries the page state, interprets the accessibility tree, decides which interaction sequence is appropriate, and re-plans when blocked. The accessibility tree is the unlock — it gives the LLM a semantic, low-token view of the page rather than forcing it to parse raw HTML.

Two patterns dominate 2026 production deployments. The first is the tri-agent orchestration loop popularized by Rahul Shetty — a Planner agent explores the application and emits a Markdown test plan, a Generator agent compiles that plan into Playwright TypeScript, and a Healer agent reruns failures to repair broken locators or strict-mode violations. The second is the two-stage decomposition described by Anton Angelov and Fanheng Kong — separating checklist generation from defect detection to mitigate the LLM's well-documented default-correctness bias.

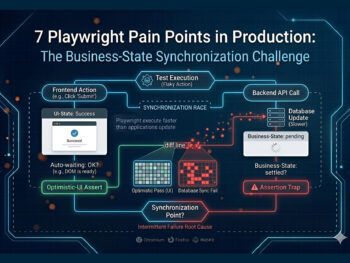

Readers seeking the underlying maintenance burdens that make agentic patterns attractive in the first place should reference our breakdown of Playwright pain points in production. The short version: brittle locators, async-state interleaving, and selector churn account for the bulk of script-level rework — and these are precisely the failure modes agentic execution targets.

How does the Model Context Protocol (MCP) architecture work with Playwright?

MCP is a client-server protocol. The LLM application (the host) runs an MCP client that connects to the Playwright MCP server. The server exposes Playwright capabilities (navigation, clicks, fills, screenshots, assertions) as discrete "tools." The LLM requests an accessibility-tree snapshot, decides on an action, invokes the tool, receives the updated state, and iterates.

The critical architectural advancement is the accessibility-tree-as-context pattern. Instead of flooding an LLM's context window with thousands of lines of raw HTML, the MCP server returns a structured semantic representation — buttons, inputs, headings, and ARIA roles, each tagged with a unique reference ID like e5. Agents navigate by reference ID rather than by visually-tuned selectors, which is both more deterministic and dramatically cheaper. Microsoft's playwright-mcp consumes roughly 200–400 tokens per snapshot, compared to the thousands a raw-DOM approach would require.
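To make the reference-ID idea concrete, here is a minimal sketch that models a snapshot as a flat list of nodes and resolves a target by role and accessible name. The `SnapshotNode` shape and the `findRef` helper are illustrative assumptions, not the format playwright-mcp actually emits.

```typescript
// Hypothetical, simplified model of an accessibility-tree snapshot.
// The real server's output format differs; this only illustrates the idea.
interface SnapshotNode {
  ref: string;  // unique reference ID, e.g. "e5"
  role: string; // ARIA role, e.g. "button"
  name: string; // accessible name
}

const snapshot: SnapshotNode[] = [
  { ref: "e1", role: "heading", name: "Your cart" },
  { ref: "e4", role: "textbox", name: "Promo code" },
  { ref: "e5", role: "button", name: "Checkout" },
];

// Resolve a target by semantics (role + name) and return its ref,
// or undefined when nothing in the snapshot matches.
function findRef(nodes: SnapshotNode[], role: string, name: string): string | undefined {
  return nodes.find((n) => n.role === role && n.name === name)?.ref;
}

const checkoutRef = findRef(snapshot, "button", "Checkout");
```

The agent then passes the resolved ref to a click tool instead of composing a CSS selector, which is why a three-node snapshot like this one costs far fewer tokens than the HTML it summarizes.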

The tool-use loop mechanics matter for understanding cost behavior. Each agent turn is: snapshot → LLM reasoning → tool call → execution → updated snapshot. A complex multi-step flow can easily reach 30+ turns, which compounds token usage quickly — a point we'll return to in the deployment section.

```typescript
// Traditional Playwright: explicit, procedural
await page.locator('#checkout-btn').click();
await expect(page.locator('.success-modal')).toBeVisible();

// Agentic Playwright via MCP: intent-driven, tool-use loop
const snapshot = await mcpClient.callTool('browser_snapshot', {});
await mcpClient.callTool('browser_click', { ref: 'e5' });
await mcpClient.callTool('browser_snapshot', {});
// LLM evaluates state, decides next action, repeats until goal reached
```

MCP became the consolidating protocol in 2026 precisely because it standardizes this negotiation. Teams can swap foundational models — Claude, GPT, Gemini — without rewriting the Playwright integration layer. The protocol boundary is the durable interface; the model behind it is interchangeable.
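The loop and the swappable-model boundary can be sketched together. This is a rough illustration under stated assumptions: `Model`, `ToolClient`, and `runAgent` are hypothetical names, and a real integration would sit on top of an MCP client library rather than these hand-written interfaces.

```typescript
// All names here (Action, Model, ToolClient, runAgent) are hypothetical.
type Action =
  | { kind: "tool"; name: string; args: Record<string, unknown> }
  | { kind: "done"; summary: string };

// Any LLM can sit behind this interface; the loop does not care which one.
interface Model {
  decide(goal: string, snapshot: string): Promise<Action>;
}

// Thin wrapper over whatever MCP client is in use.
interface ToolClient {
  call(name: string, args: Record<string, unknown>): Promise<string>;
}

// One turn = snapshot -> model reasoning -> tool call -> updated snapshot.
// The loop stops when the model reports "done" or maxTurns is reached.
async function runAgent(
  goal: string,
  model: Model,
  tools: ToolClient,
  maxTurns: number,
): Promise<{ done: boolean; turns: number }> {
  let snapshot = await tools.call("browser_snapshot", {});
  for (let turn = 1; turn <= maxTurns; turn++) {
    const action = await model.decide(goal, snapshot);
    if (action.kind === "done") return { done: true, turns: turn };
    await tools.call(action.name, action.args);
    snapshot = await tools.call("browser_snapshot", {});
  }
  return { done: false, turns: maxTurns };
}
```

Swapping models means providing a different `Model` implementation; `runAgent` and the tool names stay untouched, which is the durability argument made above.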

Which Playwright MCP server should your team adopt in 2026?

Most teams should start with microsoft/playwright-mcp — it has the broadest API coverage, the strongest documentation, and the most active community. Specialized servers like playwriter or the Playwright-CLI-as-skill pattern win on token efficiency and are worth considering for high-volume execution matrices or token-constrained agent platforms.

The default choice is microsoft/playwright-mcp. It maps the full Playwright surface area, ships with the accessibility-tree pattern built in, and receives upstream updates aligned with Playwright releases. The trade-off is breadth: exposing a wide tool surface means agents can spend context-window budget exploring tools they don't need.

A second architectural option is emerging from the high-throughput coding-agent ecosystem: invoking the Playwright CLI as an AI "skill" rather than maintaining a persistent stateful MCP server. Goodness Eboh at Currents.dev measured this trade-off directly — MCP-driven runs consumed roughly 114K tokens per test while CLI-skill workflows came in at 27K tokens for comparable coverage.

The implication: when an agent only needs to execute a test rather than reason iteratively about page state, the CLI-skill pattern can be more than four times cheaper in tokens (114K / 27K ≈ 4.2). Specialized servers like playwriter sit between these extremes: they preserve the MCP interface but prune aggressively to keep snapshots small.
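A quick calculation shows how the gap compounds. The per-test token figures are the ones quoted above; the run volume (500 tests per day over 30 days) is an assumed example, not a measured workload.

```typescript
// Per-test token figures quoted in the text above.
const MCP_TOKENS_PER_TEST = 114_000;
const CLI_TOKENS_PER_TEST = 27_000;

// Relative cost of an MCP-driven run versus a CLI-skill run.
const ratio = MCP_TOKENS_PER_TEST / CLI_TOKENS_PER_TEST; // ≈ 4.22

// Tokens saved by the CLI-skill pattern for a given run volume.
function tokensSaved(testsPerDay: number, days: number): number {
  return (MCP_TOKENS_PER_TEST - CLI_TOKENS_PER_TEST) * testsPerDay * days;
}

// Assumed volume: 500 test executions per day for 30 days.
const monthlySaving = tokensSaved(500, 30); // 87_000 * 500 * 30 = 1_305_000_000
```

Multiplying the saved tokens by your provider's per-token price turns this into a budget figure; that price is deliberately left out because it varies by model and contract.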

Playwright MCP Server Comparison (2026)

| Server / Pattern | Primary Use Case | Token Efficiency | Best For |
|---|---|---|---|
| microsoft/playwright-mcp | General-purpose agentic execution; reference implementation | Moderate: full API surface | Teams starting MCP adoption; broadest community support |
| Specialized MCP servers (e.g., playwriter) | Token-pruned accessibility-tree interactions | High: aggressive DOM pruning | High-volume agent runs; cost-constrained pipelines |
| Playwright CLI as "skill" | Coding agents invoking tests via CLI rather than stateful MCP | Very high: ~27K vs ~114K tokens per test | Claude Code, Cursor, and similar large-context coding agents |

Token figures sourced from Currents.dev's "State of Playwright AI Ecosystem in 2026" benchmark and Microsoft's official MCP documentation.

The decision rule is operational, not theoretical: if your agent is reasoning iteratively about page state, you need a stateful MCP server. If your agent is executing pre-planned tests, the CLI-skill pattern is meaningfully cheaper at scale.

How do you deploy production-grade Playwright test agents?

Production deployments require four guardrails: session persistence via storageState so agents don't burn tokens re-authenticating, hard caps on max_tokens and max_tool_iterations to prevent runaway costs, fallback LLM routing for API throttling, and proxy-layer secret management so credentials never enter the tool-use loop.

A naive agent deployment (API key, starting URL, no constraints) fails predictably. The two most common failure modes are infinite re-authentication loops, where the agent logs in again and again because session state isn't persisted, and runaway tool-iteration loops, where the agent gets stuck trying to click an element that isn't actionable. The fix for the first is storageState: a setup project authenticates once, writes cookies and localStorage to disk, and downstream agents inject that state. Stefan Judis's analysis demonstrates roughly five seconds saved per test, but the more meaningful saving is the token cost of every re-authentication turn that no longer happens. The fix for the second is a hard max_tool_iterations cap. For the full session-persistence pattern and other agent failure modes (Shadow DOM blindness, long-horizon tracking failures, re-authentication storms), see our Playwright flaky tests diagnostic playbook.
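A minimal configuration sketch of the setup-project pattern follows. The project names and the `.setup.ts` file-naming convention are assumptions chosen for illustration; the setup test itself is not shown and would log in and then call `page.context().storageState({ path })` to write the auth file.

```typescript
// playwright.config.ts (sketch; project names and file layout are assumptions)
import { defineConfig, devices } from "@playwright/test";

export default defineConfig({
  projects: [
    // Runs first and is expected to write playwright/.auth/user.json.
    { name: "setup", testMatch: /.*\.setup\.ts/ },
    {
      name: "chromium",
      use: {
        ...devices["Desktop Chrome"],
        // Every test in this project starts with the saved session injected.
        storageState: "playwright/.auth/user.json",
      },
      // Guarantees the auth file exists before any test in this project runs.
      dependencies: ["setup"],
    },
  ],
});
```

Because the saved state is injected before the first navigation, an agent started inside this project never sees a login page and never spends turns on it.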

Token-cost management is its own discipline. Joe Colantonio and Ben Fellows report engineering teams spending "hundreds of dollars per month per engineer" on premium model usage, with per-engineer tooling budgets reaching $250+. These aren't theoretical numbers — they're the operating reality for teams running agentic Playwright at scale. Capping max_tokens per session and max_tool_iterations per test are the two most effective hard guardrails. For the deeper rationale on context-window discipline as a reliability lever, see our context engineering framework for reliable AI agents.
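One way to enforce both caps is a small budget object that every agent turn must pass through. This is a sketch under assumptions: `RunBudget` is a hypothetical helper, and the limits used in the usage line are example values rather than recommendations.

```typescript
// Hypothetical per-test budget that enforces the two hard guardrails.
class RunBudget {
  private tokensUsed = 0;
  private iterations = 0;
  private readonly maxTokens: number;
  private readonly maxToolIterations: number;

  constructor(maxTokens: number, maxToolIterations: number) {
    this.maxTokens = maxTokens;
    this.maxToolIterations = maxToolIterations;
  }

  // Record one agent turn; throws as soon as either cap is exceeded.
  recordTurn(tokens: number): void {
    this.tokensUsed += tokens;
    this.iterations += 1;
    if (this.iterations > this.maxToolIterations) {
      throw new Error(`tool-iteration cap exceeded (${this.maxToolIterations})`);
    }
    if (this.tokensUsed > this.maxTokens) {
      throw new Error(`token cap exceeded (${this.maxTokens})`);
    }
  }

  // Tokens still available before the cap is hit.
  get remainingTokens(): number {
    return Math.max(0, this.maxTokens - this.tokensUsed);
  }
}

// Example values only: 16K tokens and 8 tool iterations per test.
const budget = new RunBudget(16_000, 8);
```

Failing loudly with a named error keeps a capped run distinguishable from an ordinary Playwright timeout when the results are triaged later.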

```jsonc
// Brittle: unbounded context, no persistence, no fallback
{
  "model": "claude-opus-4-7",
  "max_tokens": null,
  "tool_iterations": "unlimited",
  "auth": "login-per-test"
}

// Resilient: cost-bounded, persistent, with fallback routing
{
  "primary_model": "claude-opus-4-7",
  "fallback_model": "claude-haiku-4-5",
  "max_tokens_per_turn": 2000,
  "max_tool_iterations": 8,
  "storageState": "playwright/.auth/user.json",
  "secrets": { "source": "proxy_vault", "inline": false }
}
```

The PII boundary deserves explicit treatment when agents interact with production-derived data. Tumi's QA-architect work on production-log-mining emphasizes hashing PII locally before any payload reaches an external LLM API, an enterprise-data-privacy requirement that no agent architecture should treat as optional.
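As a rough illustration of local hashing, the sketch below replaces email addresses with salted SHA-256 tokens before a log line leaves the machine. The `hashPii` helper, the token prefix, and the email-only pattern are assumptions; real PII coverage needs a much broader rule set (names, phone numbers, account IDs).

```typescript
import { createHash } from "node:crypto";

// Matches simple email addresses only; an assumption for illustration.
const EMAIL = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Replace each email with a salted SHA-256 token. The same address always
// maps to the same token, so the LLM can still correlate events per user.
function hashPii(text: string, salt: string): string {
  return text.replace(EMAIL, (match) => {
    const digest = createHash("sha256")
      .update(salt + match.toLowerCase())
      .digest("hex");
    return `pii_${digest.slice(0, 12)}`;
  });
}

const safeLine = hashPii("checkout failed for Ana@Example.com", "local-salt");
```

Keeping the salt on the local side means the tokens cannot be reversed by a simple dictionary lookup on the provider side.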


When is your Playwright suite mature enough to adopt test agents?

The practitioner readiness floor is roughly 200 stable tests with normalized CI, deterministic locator strategies, and standardized reporting. Below that volume, the integration overhead of an MCP-backed agent network exceeds the maintenance burden agents are designed to relieve. Adopting agents on top of a brittle framework merely automates the brittleness.

The 200-test threshold reflects a clear cost-curve crossover that has emerged as practitioner consensus among senior QA architects working on agentic Playwright rollouts. At suites under 200 tests, the architectural complexity of MCP integration — server hosting, token-budget management, fallback routing, quarantine policies — outweighs the per-test maintenance savings. Past 200, agents start to compound: each additional test benefits from the same infrastructure investment, and the marginal cost of adding agentic coverage drops sharply.

Framework maturity prerequisites are non-negotiable. The locator strategy needs to be role-based and resilient (Playwright's getByRole-style locators) — agents that have to reason about CSS hierarchies waste turns. CI environments need to be normalized — agents amplify environmental noise rather than absorbing it. Reporting infrastructure needs to be unified — without a single source of truth for run results, agent-emitted failures and human-authored failures bifurcate, making triage impossible.

The widely-circulated practitioner claims of 30–40% faster test creation and 50–60% maintenance reduction should be read with appropriate skepticism. These figures are achievable, but only when the agent operates against a framework where setup, teardown, and data provisioning are already deterministic. In environments where backend fixtures are flaky, those gains evaporate — the agent ends up debugging your test data instead of your application.

The State of JS 2025 survey underscores the broader context: respondents report using an average of 4.4 testing tools concurrently, a fragmentation pattern that makes premature agent adoption particularly risky. Layer agents onto a stable foundation, not onto a multi-tool patchwork. For the broader picture of how this fits the modern delivery lifecycle, see our Agentic SDLC guide.

Adoption-readiness checklist:

- 200+ tests with stable, non-flaky pass rates over the last 90 days

- Role-based locators or equivalent semantic strategy in production

- CI runtime variance under 10% across runs

- Single reporting destination (e.g., TestQuality) for all Playwright JUnit XML output

- Documented test data provisioning that is deterministic per cycle

- Defined token-budget cap per test run and per engineer-month

- Quarantine policy in place for agent-authored tests separate from human-authored
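The checklist above can be expressed as a gate that reports which prerequisites are still missing. This is a sketch: the `SuiteMetrics` field names and the `readinessGaps` helper are hypothetical, and how each metric is measured is left to your own tooling.

```typescript
// Hypothetical metrics describing the current state of a Playwright suite.
interface SuiteMetrics {
  stableTests: number;                 // tests with stable pass rates over the last 90 days
  roleBasedLocators: boolean;
  ciRuntimeVariancePct: number;        // run-to-run variance, in percent
  singleReportingDestination: boolean;
  deterministicTestData: boolean;
  tokenBudgetDefined: boolean;
  agentQuarantinePolicy: boolean;
}

// Returns the unmet checklist items; an empty array means the suite is ready.
function readinessGaps(m: SuiteMetrics): string[] {
  const gaps: string[] = [];
  if (m.stableTests < 200) gaps.push("fewer than 200 stable tests");
  if (!m.roleBasedLocators) gaps.push("no role-based locator strategy");
  if (m.ciRuntimeVariancePct >= 10) gaps.push("CI runtime variance is 10% or higher");
  if (!m.singleReportingDestination) gaps.push("no single reporting destination");
  if (!m.deterministicTestData) gaps.push("test data provisioning is not deterministic");
  if (!m.tokenBudgetDefined) gaps.push("no token-budget cap defined");
  if (!m.agentQuarantinePolicy) gaps.push("no quarantine policy for agent-authored tests");
  return gaps;
}
```

Returning the list of gaps rather than a boolean gives the team a concrete backlog to clear before the first agent is introduced.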

What does the practitioner community say about Playwright test agents in 2026?

The 2026 practitioner consensus is broadly positive on agentic Playwright for accelerating test creation and reducing locator maintenance, but consistently skeptical of full autonomy claims. Senior engineers converge on a "constrained co-pilot" framing — agents are augmenting force-multipliers, not replacements for human architectural judgment.

The most rigorous adoption signal comes from Anton Angelov's "Testing Frontier" research, which evaluated leading models on real-world web testing. No model achieved an F1 score above 30%. GPT-5.1 hit 26.4% F1 while consuming 0.87M tokens over 30.3 turns. When humans supplied a written checklist (the "oracle setting"), Claude Sonnet 4.5 jumped to 49.2% F1 with the same execution engine, well above the 30% mark that no model cleared unaided. The practitioner implication is direct: agents are competent executors but unreliable planners. The framework that maximizes value separates checklist generation (human-led) from defect detection (agent-led).

Tom Piaggio's Autonoma analysis reinforces the economic motivation. Playwright test maintenance already consumes 30% of sprint capacity (versus 41% for Selenium), and the annual maintenance cost for a five-person QA team runs roughly $60,000 against Selenium's $82,500. Those numbers are why teams are evaluating agents at all — not because the technology is novel, but because the maintenance economics demand a change.

Joe Colantonio and Ben Fellows have documented the shift in the QA role explicitly: AI tools reach 80–90% locator accuracy and compress 3–4 hours of test authoring into 15–20 minutes, but the surplus capacity goes into review and architecture, not into eliminating the engineer. Independently, the Currents.dev "State of Playwright AI Ecosystem in 2026" analysis shows token economics, not capability, as the dominant production constraint, with MCP-driven workflows consuming roughly four times the tokens of CLI-skill alternatives for comparable coverage.

For the broader strategic context on how agentic patterns reshape the QA function, see our coverage of the shift to Agentic QA.

How do you operationalize agent test runs at scale?

Operationalizing agent test runs at scale means treating agent-emitted JUnit XML identically to human-authored JUnit XML in your management plane, while layering on agent-specific observability — token-cost-per-run tracking, hallucination-vs-regression triage, and quarantine policies for unreliable agent-authored tests. The TestQuality CLI handles the upload mechanics; the analytical layer differentiates the failure modes.

The operational pattern is unchanged at the protocol level. Whether a Playwright test is authored by an engineer or generated by an MCP-backed agent, it emits standard JUnit XML when the reporter is configured correctly — reporter: [['junit', { outputFile: 'results.xml' }]] in playwright.config.js. From there, the testquality upload_test_run CLI command pushes the results into a named project and cycle. TestQuality ingests the run, runs historical pattern detection, and surfaces trends. A tester then reviews failures, confirms which represent genuine defects (as opposed to agent hallucinations, transient flakes, or expected changes), and logs defects manually. Once a defect is logged, the GitHub and Jira integrations sync it to the team's tracker.
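For reference, a minimal reporter configuration might look like the sketch below. It is written as a TypeScript config, whereas the text above names `playwright.config.js`; the same `reporter` option applies to either file. The extra `list` reporter is an optional addition for console output, not something the upload step requires.

```typescript
// playwright.config.ts (sketch)
import { defineConfig } from "@playwright/test";

export default defineConfig({
  reporter: [
    // Optional: human-readable console output during the run.
    ["list"],
    // JUnit XML written to results.xml for upload to the management plane.
    ["junit", { outputFile: "results.xml" }],
  ],
});
```

The output file is identical in shape whether the spec was written by an engineer or generated by an agent, which is what lets both flow through one upload step.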

What changes with agents is the failure-mode taxonomy. Human-authored Playwright failures cluster around locator drift, async-state interleaving, and environmental flakiness. Agent-authored failures cluster around three distinct patterns: hallucinated assertions (the agent invented a verification step that doesn't match the acceptance criteria), tool-loop exhaustion (the agent hit max_tool_iterations before reaching the goal), and context-window degradation (the agent's reasoning quality drops as the conversation grows). These do not present as standard Playwright timeouts in the JUnit XML — they require metadata-level inspection of agent run logs to distinguish from genuine application regressions. Quarantine thresholds for agent-authored tests should be tracked separately from human-authored quarantines, because mixing them obscures both signal sets.
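A first-pass triage over agent run logs could look like the sketch below. Everything here is an assumption made for illustration: the `AgentRunLog` fields, the 80% context-usage threshold, and the order of the checks. Treat it as a starting heuristic, not a validated classifier.

```typescript
type FailureClass =
  | "tool-loop-exhaustion"
  | "hallucinated-assertion"
  | "context-window-degradation"
  | "possible-regression";

// Hypothetical metadata captured alongside each failed agent run.
interface AgentRunLog {
  toolIterations: number;
  maxToolIterations: number;
  contextTokens: number;                 // tokens in the conversation at failure time
  contextWindow: number;                 // model's context-window size
  assertionInAcceptanceCriteria: boolean; // does the failed check map to a documented criterion?
}

// Assumed threshold: above 80% context usage, reasoning quality is suspect.
const CONTEXT_PRESSURE_THRESHOLD = 0.8;

function classifyFailure(log: AgentRunLog): FailureClass {
  if (log.toolIterations >= log.maxToolIterations) return "tool-loop-exhaustion";
  if (!log.assertionInAcceptanceCriteria) return "hallucinated-assertion";
  if (log.contextTokens / log.contextWindow > CONTEXT_PRESSURE_THRESHOLD) {
    return "context-window-degradation";
  }
  // Nothing agent-specific explains the failure: hand it to a human tester.
  return "possible-regression";
}
```

Only failures that fall through to the last branch should reach the tester as candidate defects; the other three point at the agent rather than the application.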

Operationally: route all agent runs into a dedicated TestQuality cycle (e.g., agent-runs-Q1-2026), tag agent-authored tests with metadata distinguishing them from human-authored ones, track token-cost-per-run as a first-class metric, and apply quarantine policies that differ from the human-authored equivalent we cover in detail in the flaky tests diagnostic playbook. Distinguishing application flakiness from LLM context failure will be the central observability challenge of agentic Playwright in 2026, a topic we'll explore in depth in a forthcoming guide.
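A small sketch of the two agent-specific metrics follows: per-author quarantine thresholds and mean token cost per agent run. The `TestRun` shape and both threshold values are assumptions for illustration; the actual thresholds should come from your own failure history.

```typescript
type Author = "agent" | "human";

// Hypothetical record of one test execution.
interface TestRun {
  testId: string;
  author: Author;
  passed: boolean;
  tokens: number; // LLM tokens spent on this run (0 for human-authored tests)
}

// Assumed thresholds: agent-authored tests are quarantined at a lower failure rate.
const QUARANTINE_THRESHOLD: Record<Author, number> = { agent: 0.1, human: 0.2 };

// Returns the IDs of tests whose failure rate exceeds their author's threshold.
function quarantineCandidates(runs: TestRun[]): string[] {
  const stats = new Map<string, { author: Author; total: number; failed: number }>();
  for (const run of runs) {
    const entry = stats.get(run.testId) ?? { author: run.author, total: 0, failed: 0 };
    entry.total += 1;
    if (!run.passed) entry.failed += 1;
    stats.set(run.testId, entry);
  }
  return Array.from(stats.entries())
    .filter(([, s]) => s.failed / s.total > QUARANTINE_THRESHOLD[s.author])
    .map(([testId]) => testId);
}

// Mean tokens per agent-authored run; 0 when there are no agent runs.
function meanTokensPerAgentRun(runs: TestRun[]): number {
  const agentRuns = runs.filter((r) => r.author === "agent");
  if (agentRuns.length === 0) return 0;
  return agentRuns.reduce((sum, r) => sum + r.tokens, 0) / agentRuns.length;
}
```

Keeping the thresholds in one per-author table makes the split policy explicit and prevents an agent-heavy cycle from skewing the human-authored signal.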


Key Takeaways

What to remember when planning your agentic Playwright rollout

Six data-backed conclusions for engineering leaders

MCP is the consolidating protocol: Microsoft's playwright-mcp consumes ~200–400 tokens per accessibility-tree snapshot and remains the recommended starting point for most teams.

Token economics are the real constraint: Full MCP agent runs reach ~114K tokens per test versus ~27K for CLI-skill workflows — a 4x cost gap that compounds at scale.

The 200-test readiness floor is real: Below that suite size, MCP integration overhead exceeds the maintenance savings agents are designed to deliver.

Agents are competent executors, unreliable planners: No leading model exceeds 30% F1 on real-world web testing without a human-supplied checklist; with one, Claude Sonnet 4.5 reaches 49.2% F1.

Maintenance economics drive adoption: Playwright suites already consume ~30% of sprint capacity in maintenance; agent-assisted authoring compresses 3–4 hour tasks into 15–20 minutes with ~80–90% locator accuracy.

The management plane unifies, the quarantine policy splits: Agent and human-authored JUnit XML flow through the same TestQuality CLI pipeline, but agent-authored tests need separate quarantine thresholds because they fail differently.

Treat test agents like incredibly fast junior engineers — fast enough to compress hours into minutes, junior enough that the architecture, the checklist, and the quarantine policy still have to come from you.


Sources

- Rahul Shetty, "What are inbuilt Playwright Agents? Supercharge UI Automation with AI Agents" — Rahul Shetty Academy (2026)

- Microsoft / Playwright, "Playwright MCP" — GitHub: microsoft/playwright-mcp (2026)

- Goodness Eboh, "State of Playwright AI Ecosystem in 2026" — Currents.dev (2026)

- Joe Colantonio & Kareem, "AI Test Automation: Ship Twice as Fast with 10x Coverage" — TestGuild (2026)

- Joe Colantonio & Tumi, "AI Testing from Production Logs: Generate Smarter Regression Tests" — TestGuild (2026)

- Anton Angelov / Fanheng Kong, "The Testing Frontier: Research Brief #13 — No AI Model Can Break 30% on Real Web Testing" — LinkedIn / Automate The Planet (2026)

- Sandeep Panda (Bug0), "How to shard your Playwright tests: from 60 minutes to 8" — Bug0 (2026)

- Steve Kinney, "The Playwright Trace Viewer | Self-Testing AI Agents" — stevekinney.com (2026)

- Joe Colantonio & Ben Fellows, "Playwright, Cursor & AI in QA (How to Save Hours)" — TestGuild (2026)

- Stefan Judis, "How to Speed up your Playwright Tests with shared 'storageState'" — Stefan Judis YouTube (2026)

- Tom Piaggio (Autonoma), "Selenium vs Playwright vs Cypress (2026)" — Autonoma (2026)

- State of JS 2025, "Testing Tools Pain Points" — stateofjs.com (2025)