Agentic QA architecture is the technical framework that enables autonomous software testing without human authorship at each step. It consists of three interconnected layers: an Orchestration Layer that runs continuous Plan-Act-Verify reasoning loops to generate, execute, and validate test cases from plain-language requirements; an Integration Layer that connects product intent in Jira and GitHub directly to structured Gherkin output in your test management system; and a Self-Healing Execution Layer where AI-powered DOM selectors automatically adapt to UI changes rather than breaking. Together, these layers eliminate the two structural failure modes of traditional test automation (coverage debt and maintenance tax) by making test case generation and upkeep autonomous rather than human-gated. When all three layers operate together, teams eliminate the human bottleneck between a product requirement and a running test entirely. This guide unpacks how each layer operates at the compilation and orchestration levels.

According to McKinsey's 2025 State of AI survey, 62% of organizations are at least experimenting with AI agents, with high-performing enterprises differentiating themselves by fundamentally redesigning their entire software workflows. The difference between a brittle AI wrapper and a resilient, enterprise-grade autonomous software testing framework lies entirely in its architectural foundation.

Independently, Forrester renamed its testing category from "Continuous Automation Testing Platforms" to "Autonomous Testing Platforms" in its Q4 2025 Wave report, a signal that the industry has formally recognized the shift from scripted automation to autonomous execution as the new standard.

At a Glance

Key Architecture Takeaways

Stop writing scripts. Start orchestrating outcomes.

Agentic Orchestration Layer: Replaces static scripts with dynamic Plan-Act-Verify reasoning loops to autonomously manage test generation, prioritization, and execution.

Brain-to-Muscle Execution: By treating frameworks like Playwright as the "muscles" and the LLM as the "brain," teams keep LLM inference out of the test-execution path, so generated tests run deterministically at framework speed while coverage scales.

Maintenance Eradication: Self-healing DOM selectors automatically adjust to UI drift, bridging the gap between nondeterministic AI testing and reliable, enterprise-grade CI/CD pipelines.


What is an Agentic Orchestration Layer?

An Agentic Orchestration Layer is the centralized intelligence hub within an autonomous software testing framework. It sits directly above execution engines to continuously parse requirements, generate structured test scenarios, trigger automated execution, and autonomously interpret results without requiring human intervention.

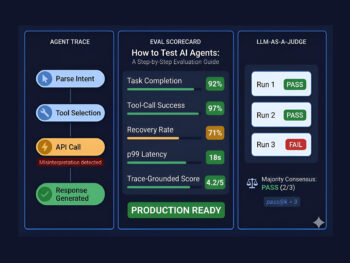

In a traditional setup, humans act as the orchestration layer — reading a Jira ticket, writing a script, and triggering a build. In a 2026 agentic architecture, tools like TestStory.ai assume this role. The orchestration layer decouples the intent (what needs to be tested) from the execution (how the browser clicks), allowing the AI to manage the holistic lifecycle of a test suite.

This architectural shift has a measurable operational consequence: test coverage is no longer gated on human availability. When a product manager merges an updated acceptance criterion at 11pm, the orchestration layer responds immediately — not on the next working morning. The pipeline never sleeps, and neither does coverage.

How do Reasoning Loops work in QA?

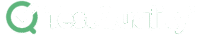

Reasoning Loops in QA operate on a continuous Plan-Act-Verify cycle. The AI agent plans by analyzing user stories, acts by coding executable test scripts, and verifies by evaluating the output against expected behaviors — allowing it to dynamically correct any test failures.

Historically, nondeterministic testing — where the same LLM prompt produces wildly different test scripts — has been the Achilles' heel of AI in QA. Reasoning loops solve this by anchoring the generation process to a strict architectural standard:

- Plan: The agent ingests unstructured acceptance criteria and maps out state dependencies, including implicit edge cases that an experienced QA engineer would catch but a junior might miss.

- Act: It writes deterministic, BDD-compliant Gherkin scenarios (Given/When/Then) — human-readable for product managers, machine-executable for CI/CD pipelines.

- Verify: It validates the syntax against the existing codebase. If a step definition is missing, the reasoning loop iterates and corrects the output before pushing to the repository.
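
The three steps above can be sketched as a small loop. This is a minimal illustration, not TestStory.ai's implementation: `KNOWN_STEPS`, `fake_generator`, and the semicolon-splitting `plan` function are hypothetical stand-ins, with `fake_generator` playing the role of the LLM call.

```python
import re
from dataclasses import dataclass

# Hypothetical registry of step definitions that already exist in the codebase.
KNOWN_STEPS = {
    "the cart contains 1 item",
    "the user submits the order",
    "an order confirmation is shown",
}

# Matches a Gherkin step line and captures the text after the keyword.
STEP_PATTERN = re.compile(r"^\s*(?:Given|When|Then|And|But)\s+(.+?)\s*$")


@dataclass
class LoopResult:
    scenario: str
    iterations: int
    passed: bool


def plan(acceptance_criteria: str) -> list[str]:
    """Plan: split raw criteria into behaviours (placeholder for real analysis)."""
    return [part.strip() for part in acceptance_criteria.split(";") if part.strip()]


def missing_steps(scenario: str) -> list[str]:
    """Verify: list the steps in the scenario that have no known step definition."""
    missing = []
    for line in scenario.splitlines():
        match = STEP_PATTERN.match(line)
        if match and match.group(1) not in KNOWN_STEPS:
            missing.append(match.group(1))
    return missing


def run_loop(criteria: str, generate, max_iterations: int = 3) -> LoopResult:
    """Run Plan once, then alternate Act and Verify until the scenario validates."""
    behaviours = plan(criteria)
    feedback: list[str] = []
    scenario = ""
    for iteration in range(1, max_iterations + 1):
        scenario = generate(behaviours, feedback)  # Act
        feedback = missing_steps(scenario)         # Verify
        if not feedback:
            return LoopResult(scenario, iteration, True)
    return LoopResult(scenario, max_iterations, False)


def fake_generator(behaviours: list[str], feedback: list[str]) -> str:
    """Stand-in for an LLM: the first draft uses an undefined step, the retry fixes it."""
    when_step = "the user submits the order" if feedback else "the user clicks buy"
    return "\n".join([
        "Scenario: Checkout with one item",
        "  Given the cart contains 1 item",
        f"  When {when_step}",
        "  Then an order confirmation is shown",
    ])


result = run_loop("user can check out; confirmation is shown", fake_generator)
print(result.iterations, result.passed)
```

The iteration cap matters here: a loop that never converges should surface as a failed result for human review rather than retry indefinitely.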

By maintaining a strict feedback loop, organizations can hold autonomous agents to measurable reliability targets, so that generated code actually compiles and runs predictably. Teams with mature agentic pipelines typically target a compilation rate above 94% and a regeneration frequency below 1.2 iterations per scenario; together, these two benchmarks indicate that the reasoning loop is converging rather than thrashing.
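
As a rough sketch, the two benchmarks could be tracked as follows. The definitions are assumptions made for illustration: compilation rate is taken as the share of scenarios whose final output compiled, and regeneration frequency as the mean number of loop iterations per scenario. `ScenarioRun` and `loop_health` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ScenarioRun:
    iterations: int  # how many Plan-Act-Verify passes the scenario needed
    compiled: bool   # whether the final scenario compiled against the codebase


def loop_health(runs, min_compile_rate=0.94, max_regen=1.2):
    """Summarise a batch of runs against the two target thresholds."""
    if not runs:
        raise ValueError("at least one scenario run is required")
    rate = sum(run.compiled for run in runs) / len(runs)
    regen = mean(run.iterations for run in runs)
    return {
        "compilation_rate": rate,
        "regeneration_frequency": regen,
        "healthy": rate > min_compile_rate and regen < max_regen,
    }


# Hypothetical batch: 19 scenarios passed first time, 1 needed a second pass.
batch = [ScenarioRun(1, True)] * 19 + [ScenarioRun(2, True)]
report = loop_health(batch)
print(report)
```

A batch that compiles well but needs many iterations per scenario would still be flagged, which is the thrashing case the benchmarks are meant to catch.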

How does the Integration Layer connect intent to execution?

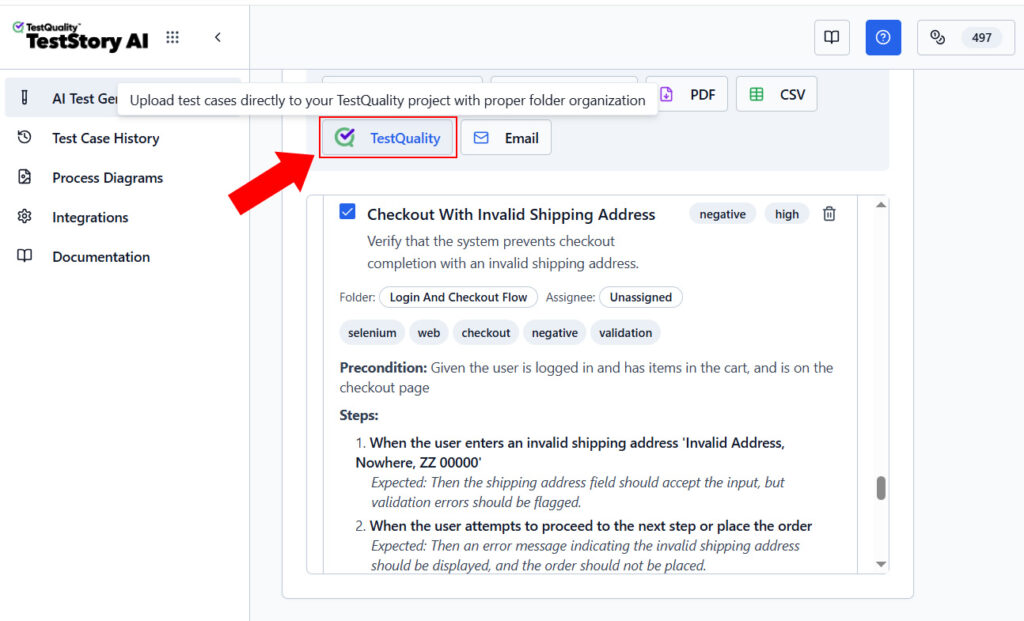

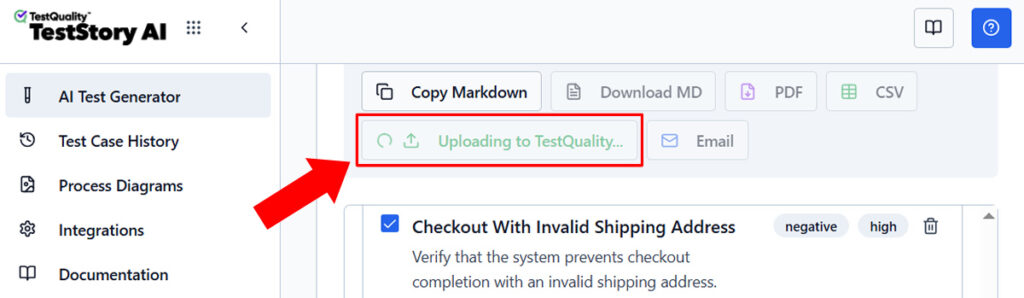

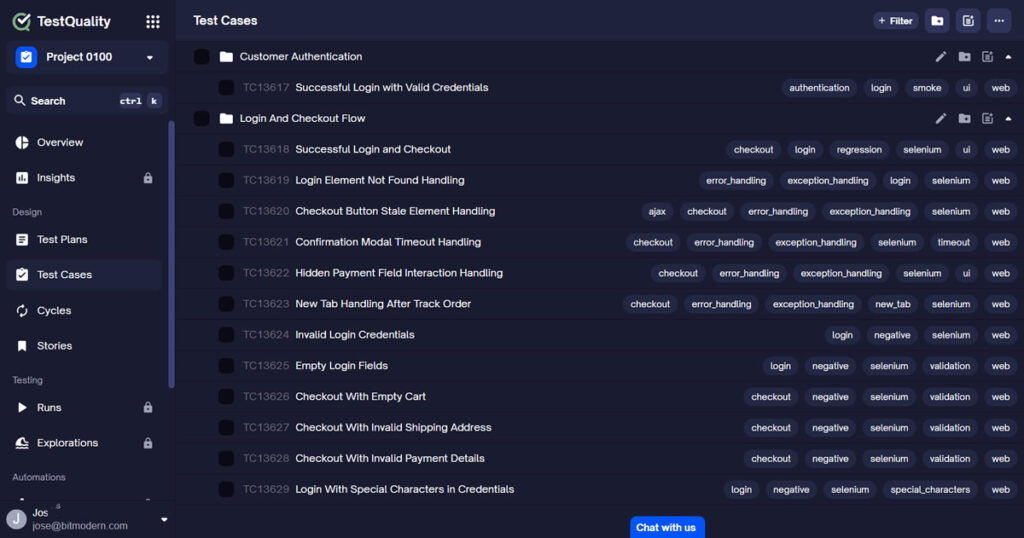

The Integration Layer bridges unstructured product intent and structured test management. It ingests Jira acceptance criteria and translates them into executable test cases, which can be delivered in formats ranging from Markdown text to CSV files.

The recommended workflow is to push them directly into TestQuality, which maintains bidirectional traceability and eliminates manual copy-pasting.

Because the Agentic Orchestration Layer integrates natively into developer workflows, it functions continuously in the background. When a Pull Request is opened in GitHub, the agent intercepts the payload, identifies the delta in the code, and autonomously cross-references the corresponding Jira user story. It then regenerates or updates the specific Gherkin scenarios affected by that commit.
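
The cross-referencing step might look something like the sketch below. It assumes a simplified, hypothetical payload dictionary (not GitHub's actual webhook schema), Jira keys embedded in the PR title or branch name, and a hand-written `Scenario` record that links each scenario to a story and the path prefixes it covers.

```python
import re
from dataclasses import dataclass

# Jira issue keys look like PROJECT-123.
JIRA_KEY = re.compile(r"\b([A-Z][A-Z0-9]+-\d+)\b")


@dataclass(frozen=True)
class Scenario:
    name: str
    story: str               # Jira key the scenario traces back to
    covers: tuple[str, ...]  # path prefixes the scenario exercises


def story_keys(pr_title: str, branch: str) -> list[str]:
    """Collect the distinct Jira keys mentioned in the PR title or branch name."""
    return sorted(set(JIRA_KEY.findall(f"{pr_title} {branch}")))


def affected_scenarios(payload: dict, scenarios: list[Scenario]) -> list[str]:
    """Return scenarios linked to the PR's story or touching its changed files."""
    keys = set(story_keys(payload["title"], payload["branch"]))
    changed = payload["changed_files"]
    hits = []
    for scenario in scenarios:
        touches_code = any(
            path.startswith(prefix)
            for path in changed
            for prefix in scenario.covers
        )
        if scenario.story in keys or touches_code:
            hits.append(scenario.name)
    return hits


# Hypothetical payload and scenario catalogue.
payload = {
    "title": "SHOP-142: rename checkout button",
    "branch": "feature/SHOP-142-checkout",
    "changed_files": ["web/checkout/button.tsx"],
}
catalogue = [
    Scenario("Checkout with one item", "SHOP-142", ("web/checkout/",)),
    Scenario("Login with valid password", "SHOP-90", ("web/auth/",)),
    Scenario("Cart totals", "SHOP-77", ("web/checkout/",)),
]
print(affected_scenarios(payload, catalogue))
```

Matching on both the story key and the changed paths is a deliberate choice in this sketch: the first catches scenarios tied to the requirement, the second catches scenarios that share code with it.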

The full architecture stack in a mature 2026 deployment looks like this:

- Product Layer: User stories and acceptance criteria live in Jira, Linear, or GitHub Issues.

- Agentic Layer: TestStory.ai reads those stories, runs the Plan-Act-Verify loop, and generates Gherkin scenarios.

- Management Layer: TestQuality stores, organizes, versions, and tracks test cases and execution results — with full audit trails and coverage analytics.

- Execution Layer: Playwright, Selenium, or Cypress runs the generated specifications deterministically.

- Pipeline Layer: GitHub Actions or another CI/CD tool, such as Jenkins, CloudBees, CircleCI, or Travis CI, orchestrates the full flow end-to-end.

Each layer does what it does best. The key insight is that the Agentic layer does not replace Playwright or TestQuality; it ensures both always have well-authored, current, coverage-complete inputs to work with.

Try It Now

See the Plan-Act-Verify loop in action — in under 60 seconds.

Paste any user story into TestStory.ai and watch the orchestration layer generate structured, Gherkin-formatted test cases instantly — covering happy paths, edge cases, and the failure scenarios your team would typically miss. Results sync directly into TestQuality for immediate execution and tracking. No account required to start.

No credit card required on either platform.

What are Self-Healing DOM Selectors?

Self-Healing DOM Selectors are AI-powered locators that automatically adapt test scripts when an application's interface changes. Instead of failing due to a renamed CSS class or shifted layout, the agent semantically identifies the target element to ensure continuous pipeline execution.

This is where the separation of "Brains" and "Muscles" becomes crucial. Playwright and Selenium remain the undisputed "muscles" of the execution layer — they are lightning-fast, support async architecture, and execute at scale. However, they lack semantic understanding.

When a developer changes a checkout button from `<button id="submit-order">` to `<button class="btn-primary checkout">`, a traditional Playwright script breaks immediately. The "Maintenance Tax" kicks in: a QA engineer must locate the failure, understand the DOM change, update the locator, and re-run the suite. In a team managing thousands of selectors across a complex application, this is not an occasional inconvenience; it is a structural drain consuming 30–50% of the automation budget.

In an agentic architecture, the LLM ("the brain") catches the failure, reads the updated DOM tree, semantically identifies the new checkout button by its purpose rather than its attribute, updates the locator, and passes the corrected script back to Playwright to re-run without any human intervention.
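
The healing step can be illustrated with a deliberately simple sketch. It uses only the Python standard library, and a token-overlap score stands in for the LLM's semantic matching, so it shows the shape of the flow rather than how any particular product resolves elements. `heal_locator`, `to_selector`, and the intent string are hypothetical.

```python
import re
from html.parser import HTMLParser


class ElementCollector(HTMLParser):
    """Collects buttons and links with their attributes and visible text."""

    def __init__(self):
        super().__init__()
        self.elements = []
        self._open = None

    def handle_starttag(self, tag, attrs):
        if tag in {"button", "a"}:
            self._open = {
                "tag": tag,
                "attrs": {key: value or "" for key, value in attrs},
                "text": "",
            }
            self.elements.append(self._open)

    def handle_data(self, data):
        if self._open is not None:
            self._open["text"] += data

    def handle_endtag(self, tag):
        if self._open is not None and tag == self._open["tag"]:
            self._open = None


def tokens(text: str) -> set[str]:
    """Lower-case alphanumeric tokens, used as a crude proxy for meaning."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def to_selector(element: dict) -> str:
    """Build a CSS selector from the element's id, else its classes, else its tag."""
    attrs = element["attrs"]
    if attrs.get("id"):
        return f"#{attrs['id']}"
    if attrs.get("class"):
        return element["tag"] + "".join(f".{name}" for name in attrs["class"].split())
    return element["tag"]


def heal_locator(html: str, broken_selector: str, intent: str):
    """Return a working selector for the element described by `intent`, or None."""
    collector = ElementCollector()
    collector.feed(html)
    # If the original selector still resolves, nothing needs healing.
    if any(to_selector(el) == broken_selector for el in collector.elements):
        return broken_selector
    wanted = tokens(intent)
    best, best_score = None, 0
    for el in collector.elements:
        have = tokens(" ".join(el["attrs"].values()) + " " + el["text"])
        score = len(wanted & have)
        if score > best_score:
            best, best_score = el, score
    return to_selector(best) if best else None


page = (
    '<nav><a class="nav-link" href="/cart">Cart</a></nav>'
    '<button class="btn-secondary">Continue shopping</button>'
    '<button class="btn-primary checkout">Place order</button>'
)
print(heal_locator(page, "#submit-order", "checkout place order button"))
```

In a real pipeline the returned selector would be written back into the test and the run retried; returning `None` when nothing matches leaves room to escalate to a human instead of guessing.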

Visual Regression and LLM-Based Testing: The Technical Layer

LLM-Driven Visual Regression

Traditional visual regression tools work by pixel comparison. They take a baseline screenshot and flag any pixel-level difference. The result is a high false-positive rate — a one-pixel font rendering difference triggers the same alert as a broken layout. Engineers spend hours reviewing noise for changes that are functionally irrelevant.

LLM-based visual testing evaluates screenshots semantically rather than pixel-by-pixel. The model understands that minor anti-aliasing differences are not defects, but a missing checkout button or a collapsed navigation menu is. Crucially, it can articulate what changed, why it matters, and whether a human should review it — transforming visual regression from a noisy alert system into an actionable signal.

When visual regression failures surface, whether flagged by LLM-based semantic analysis or automated Playwright assertions, TestQuality's test management layer ensures they never become orphaned tickets. Through native GitHub PR integration, every failure is automatically tied to the pull request that introduced it. Through bidirectional Jira linking, it carries a user story, a business context, and an assigned owner. The failure is not just detected; it is owned, traceable, and resolved within the same workflow where it was introduced.

Intelligent Test Prioritization

Not every test needs to run on every commit. An agentic QA system that understands the relationship between code changes, user stories, and test cases can determine which subset of your test suite is relevant for a given change. This is the practical implementation of risk-based testing, without manual tagging or configuration.

For QA teams with large test suites, intelligent prioritization can reduce pipeline execution time by 40 to 60 percent without reducing defect detection rates. The agent identifies which tests cover the changed code and triggers those first, while lower-priority regression tests are organized into separate TestQuality cycles and scheduled for off-peak runs. This is not manual triage. TestQuality's cycle and milestone structure makes it the natural home for this kind of prioritized test organization, giving teams a single place to see what is running now, what is queued, and what each result means in the context of the current release. The outcome is faster feedback on the changes that matter most, with full visibility into the testing process at every stage.
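
A minimal sketch of change-based selection, assuming a coverage map from each test to the source files it exercises is already available. The map, file names, and durations below are hypothetical, and the computed saving describes only this toy suite, not the 40 to 60 percent range cited above.

```python
def prioritize(changed_files, coverage_map, durations):
    """Split tests into those covering changed files and those safe to defer.

    Returns (run_now, deferred, saved), where `saved` is the fraction of total
    suite time avoided on this commit by deferring the unrelated tests.
    """
    changed = set(changed_files)
    run_now = sorted(
        test for test, files in coverage_map.items() if changed & set(files)
    )
    deferred = sorted(test for test in coverage_map if test not in run_now)
    full = sum(durations[test] for test in coverage_map)
    immediate = sum(durations[test] for test in run_now)
    saved = 1 - immediate / full if full else 0.0
    return run_now, deferred, saved


# Hypothetical coverage map (test -> files it exercises) and durations in seconds.
coverage_map = {
    "test_checkout": ["checkout.py", "cart.py"],
    "test_login": ["auth.py"],
    "test_search": ["search.py"],
}
durations = {"test_checkout": 120, "test_login": 60, "test_search": 120}

run_now, deferred, saved = prioritize(["cart.py"], coverage_map, durations)
print(run_now, deferred, round(saved, 2))
```

The deferred list corresponds to the lower-priority regression tests that would be scheduled for an off-peak cycle rather than dropped.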

The Efficiency Delta: Why Intent Drives Adoption

The architectural depth of an agentic workflow is completely reshaping how engineering teams adopt QA tools.

Technical benchmarks for 2026 show that Agentic workflows, particularly via TestStory.ai, achieve a 68% team activation rate, solving the common "Click Leakage" seen in traditional CI/CD tools. Compare this to the abysmal 0.04% click-through rate of generic AI test assistants that sit outside the developer's native ecosystem.

The efficiency delta is clear: when an AI tool requires a QA engineer to open a separate tab, paste a prompt, and manually migrate the output into their test management system, it is simply a slightly faster manual workflow — the human bottleneck is not eliminated, it is just moved one step to the right. When the architecture is native, operating silently between GitHub, Jira, and TestQuality, the bottleneck disappears entirely.

This is also why the 74% activation rate for technical referrals is meaningful. Engineers who discover TestStory.ai through GitHub integrations adopt it because it removes friction from their existing workflow. They do not have to change how they work. The tool improves what they already do.

What Agentic QA Means for QA Engineers in 2026

The practical question that follows from this architecture discussion is what it means for the engineers running QA workflows. Based on adoption patterns from teams already operating agentic pipelines, the role shifts rather than shrinks.

The work that disappears is mechanical: writing test cases from requirements, updating locators when UI changes, maintaining regression suites that drift out of coverage. This work consumed most available QA bandwidth in traditional teams — and it was the least intellectually engaging part of the job.

The work that expands is strategic: evaluating AI-generated coverage for gaps the model missed, designing test strategies for complex stateful system interactions, interpreting results in the context of real user behavior, and making architectural decisions about the testing stack itself. These are the skills that differentiate exceptional QA engineers, and they become the primary focus when the mechanical layer is automated.

Teams that have made this transition using TestQuality, integrated natively with GitHub and Jira, consistently report higher job satisfaction alongside improved coverage metrics. The QA role becomes what it should have always been: quality engineering, not test case transcription.

| Capability | Manual QA | Automated QA (Playwright / Selenium) | Agentic QA (TestStory.ai) |
|---|---|---|---|
| Test Case Creation | Human-authored, session-by-session | Human-authored scripts, recorded or coded | Autonomous: LLM generates from user stories |
| Gherkin / BDD Output | Manual: written by QA or BA | Manual: step definitions coded separately | Auto-generated in Given/When/Then format |
| Maintenance on UI Change | Manual re-test | Scripts break: manual fix required | Self-healing: agent updates locators & assertions |
| Edge Case Coverage | Dependent on engineer experience | Only what was explicitly scripted | Inferred from requirement semantics by LLM |
| Visual Regression | None: manual spot checks only | Pixel-diff: high false-positive rate | Semantic: LLM evaluates functional significance |
| GitHub Integration | None | CI trigger only: tests pre-written | PR-aware: generates tests on PR open (with TestQuality GH Integration) |
| Jira Traceability | Manual linking | Manual or custom integration | Native: bidirectional story-to-test linking via TestQuality |
| Coverage on New Features | Days after story completion | Days: script authoring required | Minutes: agent generates on story creation |
| Test Prioritization | Manual: risk judgement per engineer | Static: runs full suite every time | Intelligent: runs relevant subset, 40–60% faster pipelines |
| Scales with Velocity | Requires headcount | Partially: maintenance still manual | Yes: generation and maintenance are autonomous |


Key Takeaways

Agentic QA Architecture in 2026

Stop managing test debt. Start orchestrating autonomous coverage.

Three-layer architecture: Agentic QA operates across an Orchestration Layer (Plan-Act-Verify reasoning loops), an Integration Layer (Jira/GitHub to Gherkin output), and a Self-Healing Execution Layer (AI-powered DOM selectors that adapt automatically to UI changes).

Maintenance Tax eliminated: Self-healing DOM selectors automatically identify updated elements by purpose rather than attribute — no human intervention required when UI changes break traditional locators.

Pipeline speed: Intelligent test prioritization runs only the tests relevant to changed code, reducing pipeline execution time by 40–60% without reducing defect detection rates.

Coverage scales with velocity: The Orchestration Layer responds to requirement changes immediately — test coverage never waits on human availability or sprint cycles.

Maturity benchmarks: A compilation rate above 94% and a regeneration frequency below 1.2 iterations per scenario indicate a correctly functioning agentic pipeline.

When all three layers operate together, teams eliminate the human bottleneck between a product requirement and a running test entirely.

Start Free Today

Transition from script-writing to outcome-orchestration.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, tracking, and team collaboration. The gap between a user story and a passing test has always been a human bottleneck. In 2026, it does not have to be.

✦ Exclusive offer for TestQuality subscribers

Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost. Simply create a TestStory.ai account with the same email as your TestQuality account to activate automatically. Includes all Pro 500 features.

Not a TestQuality subscriber yet? Both platforms offer free plans — sign up for TestStory.ai to generate your first test cases, then connect your free TestQuality account to sync, execute, and track them without ever leaving your workflow.
