At a Glance

The Shift from Automated Testing to Autonomous AI Generation

Stop writing test cases. Start describing outcomes. Let the agent handle the rest.

Agentic QA replaces scripted test suites with autonomous AI agents that read user stories, generate Gherkin scenarios, and push executable test cases into your pipeline — without a human writing a single line of test code.

The gap between requirement and test script collapses from days to minutes when large language models handle the translation layer between product intent and test execution.

Technical teams using TestStory.ai report a 68% activation rate from first use, compared with 56.5% for teams arriving through generic paid search, which suggests that intent-matched tooling accelerates adoption.

Playwright and Selenium remain relevant as execution engines, but Agentic workflows sit above them, orchestrating generation, prioritization, and self-healing autonomously.

The benchmark for 2026 is not how fast an AI can write a script — it is how much test maintenance debt the agent eliminates.

Something fundamental broke in 2025: not a single framework or tool, but the entire mental model of how QA fits into a modern release cycle. According to McKinsey's 2025 State of AI survey, 88% of organizations now use AI in at least one business function, and 62% are already experimenting with AI agents. The testing domain is no exception to this acceleration. Teams that spent years building Selenium grids and Playwright suites found themselves in the same position: tests passing on Friday, users reporting regressions on Monday, and engineers spending more time fixing automation than building features.

The problem was never the execution layer. It was the gap between intent and automation — the human bottleneck between a product manager writing a user story and a QA engineer converting it into a test case. That gap is exactly what Agentic QA is designed to close.

This article explains why that shift is happening now, what it actually means in practice, and how teams are using tools like TestStory.ai to move from static automation to genuinely autonomous AI test generation.

Why 2026 Release Cycles Are Breaking Traditional Automation

Traditional automated testing was built for a world where software shipped quarterly. Scripts were written once, maintained occasionally, and executed on a predictable schedule. That model tolerated a five-day lag between requirement and test. It accepted that test suites would lag behind development by a sprint or two.

Modern CI/CD pipelines have eliminated that tolerance. Teams running trunk-based development with feature flags push to production dozens of times a day. Every push needs test coverage. Every coverage gap is a production risk. The arithmetic no longer works: you cannot manually author test cases faster than engineers write code.

Two specific failure modes have become unavoidable in 2026.

The Coverage Debt Spiral: As development velocity increases, test creation falls further behind. Teams accumulate coverage debt — areas of the application with no automated validation. Each sprint adds more surface area with no corresponding test investment. By the time the debt becomes visible, regression rates have already spiked.

The Maintenance Tax: Existing automation requires constant upkeep. UI changes, API modifications, and environmental drift break scripts that were working yesterday. Commonly cited industry estimates put 30 to 50 percent of test automation budgets into maintenance rather than new coverage. That ratio is unsustainable when your codebase evolves weekly.

Neither problem is solvable by hiring more QA engineers or buying faster test execution infrastructure. They are structural problems requiring a different approach to how tests are created and maintained in the first place.

What Is Agentic QA, and How Does It Differ From Test Automation?

The term Agentic QA gets used loosely, so it is worth being precise. An AI agent, in the technical sense, is a system that perceives its environment, takes actions toward a goal, and adjusts its behavior based on feedback without requiring explicit human instruction for each step.

Applied to software testing, an Agentic QA system can read a user story or acceptance criteria written in plain English, infer the test scenarios that need covering including edge cases, generate executable test cases in structured formats such as Gherkin or JSON, push those tests into your test management system or GitHub repository, and update or regenerate affected tests when requirements change.
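The last capability in that list, regenerating tests when requirements change, can be sketched in a few lines. This is a minimal illustration, not TestStory.ai's implementation: the `Story` and `AgentState` types, the `sync` function, and the `stub_generate` placeholder (which stands in for the LLM call) are all hypothetical names introduced here.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Story:
    key: str   # hypothetical story identifier, e.g. a Jira key
    text: str  # the plain-English user story


@dataclass
class AgentState:
    # story key -> fingerprint of the text the current tests were generated from
    fingerprints: Dict[str, str] = field(default_factory=dict)
    # story key -> generated scenarios
    tests: Dict[str, List[str]] = field(default_factory=dict)


def fingerprint(text: str) -> str:
    return hashlib.sha256(text.strip().encode("utf-8")).hexdigest()


def sync(stories: List[Story], state: AgentState,
         generate: Callable[[Story], List[str]]) -> List[str]:
    """Regenerate tests only for new or changed stories; return their keys."""
    regenerated = []
    for story in stories:
        current = fingerprint(story.text)
        if state.fingerprints.get(story.key) != current:
            state.tests[story.key] = generate(story)
            state.fingerprints[story.key] = current
            regenerated.append(story.key)
    return regenerated


def stub_generate(story: Story) -> List[str]:
    # Placeholder for the LLM call; a real agent would return inferred scenarios.
    return [f"Scenario: placeholder for {story.key}"]
```

Calling `sync` twice with unchanged stories regenerates nothing on the second pass; editing one story's text regenerates only that story's tests.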

This is categorically different from test automation, which executes pre-written scripts. It is also different from AI-assisted tools that suggest completions or flag potential issues — the agent operates end-to-end, not as a co-pilot for a human author.

The Spectrum from Automation to Agentic

It helps to think of this as a spectrum rather than a binary. At one end, a QA engineer writes every test case manually. Further along, tools like Playwright's code generator record interactions into scripts. Further still, AI assistants suggest test cases based on requirements. At the Agentic end, the system reads requirements, generates tests, executes them, reports results, and triggers regeneration when the application changes. The human defines the goal; the agent executes the workflow.

Most teams in 2026 sit somewhere in the middle of that spectrum. The opportunity, and the competitive pressure, is in moving toward the Agentic end, where the human bottleneck in test creation is eliminated.

How TestStory.ai Implements Agentic QA in Practice

TestStory.ai is the AI agent layer built into TestQuality's platform. It accepts unstructured input and produces structured, executable test output across three stages.

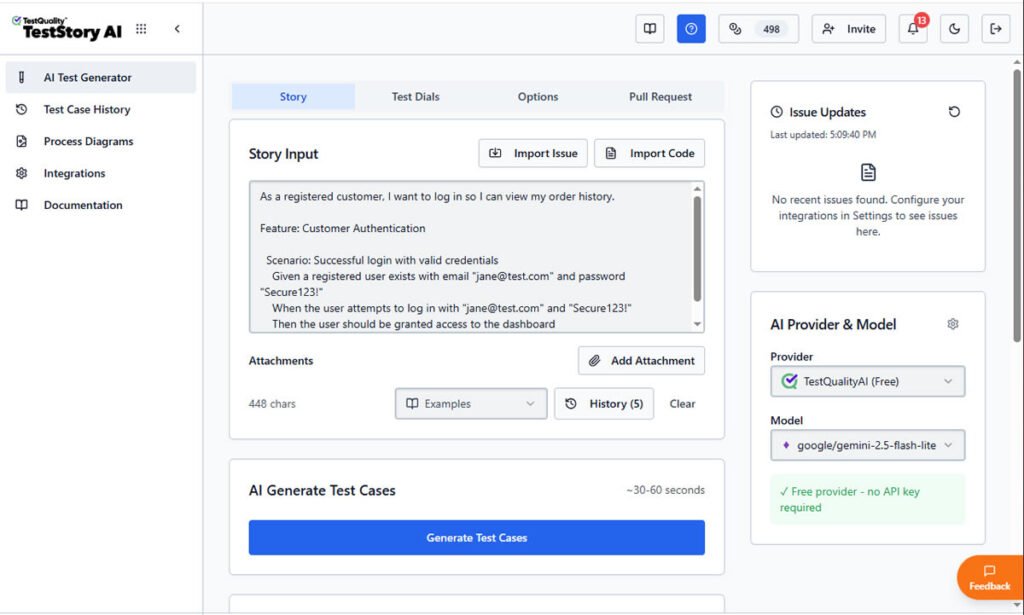

Stage 1: Ingestion — From User Story to Structured Intent

A QA agent using TestStory.ai begins with the same artifact your product team already produces: a user story, acceptance criteria, or feature description. No reformatting required. The agent's LLM layer parses the natural language input and extracts the underlying intent: what the feature is supposed to do, what states are possible, what failure modes exist, and what edge cases the description implies but does not explicitly state.

This is where Agentic tools separate from rule-based generators. A rule-based system produces tests for what the requirement says. An LLM-powered agent produces tests for what the requirement means, including the implicit scenarios an experienced QA engineer would catch but a junior might miss.
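One way to picture "structured intent" is as a small, validated record. The sketch below assumes the model is asked to reply in JSON with four fields mirroring the categories described above; the field names, the `StructuredIntent` type, and both helper functions are illustrative assumptions, not TestStory.ai's actual schema or prompts.

```python
import json
from dataclasses import dataclass
from typing import List

# Hypothetical field names, chosen to mirror the four categories in the text.
REQUIRED_FIELDS = ("behavior", "states", "failure_modes", "implied_edge_cases")


@dataclass(frozen=True)
class StructuredIntent:
    behavior: str
    states: List[str]
    failure_modes: List[str]
    implied_edge_cases: List[str]


def build_prompt(story_text: str) -> str:
    """Build the instruction sent to the model for one user story."""
    fields = ", ".join(REQUIRED_FIELDS)
    return (
        "Read the user story below and reply with a JSON object "
        f"containing exactly these keys: {fields}.\n\n"
        f"User story:\n{story_text.strip()}\n"
    )


def parse_intent(raw_reply: str) -> StructuredIntent:
    """Validate the model's JSON reply before anything downstream uses it."""
    data = json.loads(raw_reply)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"model reply is missing fields: {missing}")
    return StructuredIntent(
        behavior=str(data["behavior"]),
        states=[str(item) for item in data["states"]],
        failure_modes=[str(item) for item in data["failure_modes"]],
        implied_edge_cases=[str(item) for item in data["implied_edge_cases"]],
    )
```

Rejecting incomplete replies at this boundary keeps a malformed model response from silently producing a thin test suite.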

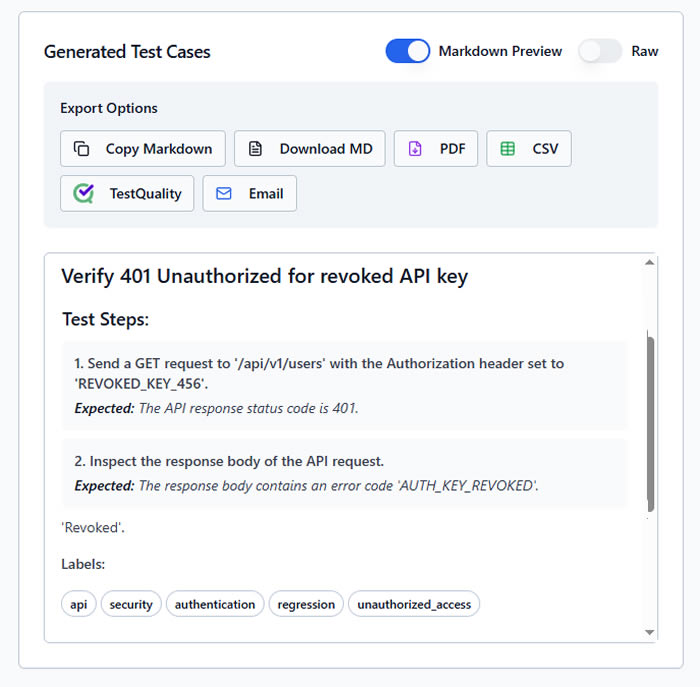

Stage 2: Generation — Gherkin-Based BDD Output

TestStory.ai outputs test cases in Gherkin syntax — the Given/When/Then format used by BDD frameworks like Cucumber and SpecFlow. This is a deliberate architectural choice. Gherkin scenarios are human-readable so product managers can validate coverage, machine-executable so CI/CD pipelines can run them directly, and framework-agnostic so they work with any BDD-compatible execution layer.

The generated scenarios are contextually aware, not templates with filled-in blanks. A user story about a payment flow generates tests for successful transactions, declined cards, network timeouts, and currency edge cases — not a generic CRUD test pattern.
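To show what Given/When/Then output looks like in practice, here is a small sketch that renders one scenario as Gherkin text. The `Scenario` type, the `render_feature` function, and the declined-card example are assumptions made for illustration; they are not output captured from TestStory.ai.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Scenario:
    name: str
    given: List[str]
    when: List[str]
    then: List[str]


def _render_steps(keyword: str, steps: List[str]) -> List[str]:
    # The first step uses the keyword; later steps in the same group use "And".
    return [
        f"    {keyword if index == 0 else 'And'} {text}"
        for index, text in enumerate(steps)
    ]


def render_feature(feature: str, scenarios: List[Scenario]) -> str:
    """Render scenarios as a Gherkin feature file body."""
    lines = [f"Feature: {feature}"]
    for scenario in scenarios:
        lines.append("")
        lines.append(f"  Scenario: {scenario.name}")
        lines.extend(_render_steps("Given", scenario.given))
        lines.extend(_render_steps("When", scenario.when))
        lines.extend(_render_steps("Then", scenario.then))
    return "\n".join(lines) + "\n"


declined = Scenario(
    name="Card is declined",
    given=["a cart totalling 40 dollars", "a card the issuer will decline"],
    when=["the shopper submits payment"],
    then=["the order is not placed", "a decline message is shown"],
)

print(render_feature("Checkout payment", [declined]))
```

The same text is readable by a product manager and parseable by a BDD runner, which is the property the Gherkin choice relies on.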

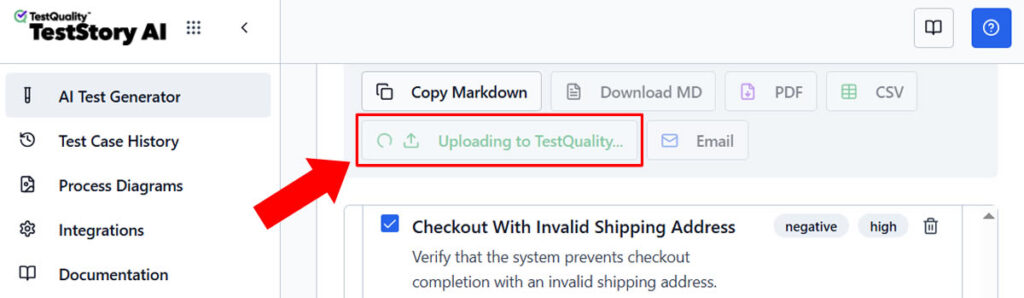

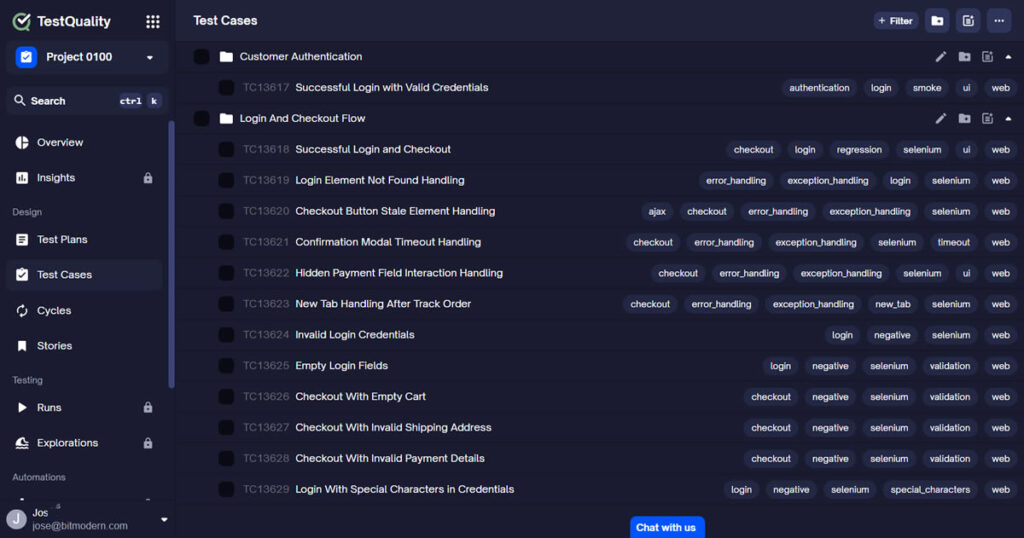

Stage 3: Integration — Direct into Your Existing Stack

Generated tests push directly into TestQuality's test management system, and from there into GitHub and Jira through native integrations. Tests appear as test cases in your management layer and as executable specifications in your test repository (no manual export, copy-paste, or reformatting required).

The GitHub integration deserves specific attention. When a pull request is opened, the agent can analyze the changed code, identify the affected user stories, and generate or update corresponding test cases automatically. Coverage gaps close at the moment they are created, not days later when someone remembers to write the tests.

This integration depth is why technical teams that discover TestStory.ai through developer channels activate at a 68 percent rate — the tool fits their existing workflow rather than demanding a parallel process.
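The pull-request step described above, going from changed code to affected user stories, can be approximated with a path-to-story mapping. This is a deliberately simple sketch: the `STORY_PATHS` table, the story keys, and the `affected_stories` function are hypothetical, and a real agent would presumably use richer signals than filename patterns.

```python
from fnmatch import fnmatchcase
from typing import Dict, List

# Hypothetical mapping from story keys to the source paths that implement them.
STORY_PATHS: Dict[str, List[str]] = {
    "PAY-101": ["src/checkout/*", "src/payments/*"],
    "AUTH-7": ["src/auth/*"],
}


def affected_stories(changed_files: List[str],
                     story_paths: Dict[str, List[str]]) -> List[str]:
    """Return the story keys whose path patterns match any changed file."""
    hits = set()
    for key, patterns in story_paths.items():
        if any(fnmatchcase(path, pattern)
               for path in changed_files
               for pattern in patterns):
            hits.add(key)
    return sorted(hits)
```

For a pull request touching `src/checkout/cart.py` and `README.md`, only the `PAY-101` story would be queued for test regeneration.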

Visual Regression and LLM-Based Testing: The Technical Layer

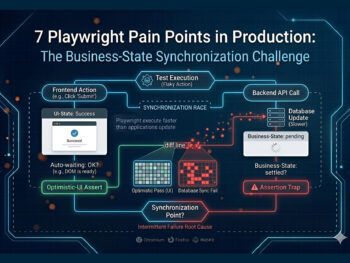

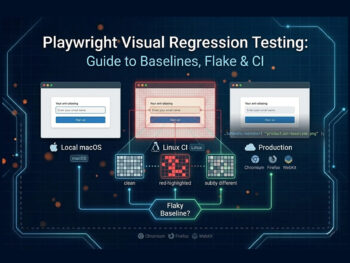

LLM-Driven Visual Regression

Traditional visual regression tools work by pixel comparison. They take a baseline screenshot and flag any pixel-level difference. This produces high false-positive rates: a one-pixel font rendering difference triggers the same alert as a broken layout. Engineers spend hours reviewing alerts for changes that are functionally irrelevant.
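The false-positive problem is easy to reproduce. The sketch below is a toy pixel comparison over small grayscale grids, not an LLM-based check and not any specific tool's algorithm; the `changed_pixels` function and the three sample images are invented for illustration.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale values, 0-255


def changed_pixels(baseline: Image, candidate: Image, tolerance: int = 0) -> int:
    """Count pixels whose difference from the baseline exceeds the tolerance."""
    if len(baseline) != len(candidate) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(baseline, candidate)
    ):
        raise ValueError("images must have identical dimensions")
    return sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > tolerance
    )


baseline = [[255, 255, 255], [0, 0, 0], [255, 255, 255]]        # dark "button" row
antialiased = [[255, 255, 255], [0, 4, 0], [255, 255, 255]]     # tiny rendering shift
missing_button = [[255, 255, 255], [255, 255, 255], [255, 255, 255]]

# A strict comparison raises an alert for both images.
strict_alerts = [changed_pixels(baseline, img) > 0
                 for img in (antialiased, missing_button)]
```

With `tolerance=0` both candidates trigger an alert, even though only one is a real defect. Raising the tolerance hides the anti-aliasing noise, but it is still a numeric threshold with no notion of what the changed pixels mean.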

LLM-based visual testing evaluates screenshots semantically rather than pixel-by-pixel. The model understands that minor anti-aliasing is not a defect, but a missing button in the checkout flow is. It can articulate what changed, why it matters, and whether a human should review it, making visual regression actionable rather than just noisy.

Intelligent Test Prioritization

Not every test needs to run on every commit. An Agentic QA system that understands the relationship between code changes, user stories, and test cases can determine which subset of your test suite is relevant for a given change. This is the practical implementation of risk-based testing, without manual tagging.

For teams with large test suites, this prioritization can reduce pipeline execution time by 40 to 60 percent without reducing defect detection rates. The agent knows which tests cover the changed code and runs those first.
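A minimal version of this selection logic, assuming a coverage map from test names to the source files each test exercises, might look like the following. The `prioritize` function, the map, and the test names are hypothetical; how a real agent derives the map is outside this sketch.

```python
from typing import Dict, List, Set


def prioritize(changed_files: Set[str],
               coverage: Dict[str, Set[str]]) -> List[str]:
    """Return tests that touch changed files, most overlapping first."""
    scored = [(len(files & changed_files), name)
              for name, files in coverage.items()]
    relevant = [(score, name) for score, name in scored if score > 0]
    # Sort by descending overlap, then by name for a stable order.
    relevant.sort(key=lambda item: (-item[0], item[1]))
    return [name for _, name in relevant]


coverage = {
    "test_checkout_happy_path": {"src/checkout/cart.py", "src/payments/charge.py"},
    "test_declined_card": {"src/payments/charge.py"},
    "test_login": {"src/auth/login.py"},
}
changed = {"src/payments/charge.py", "src/checkout/cart.py"}
selected = prioritize(changed, coverage)
```

Here `test_login` is skipped entirely because nothing it covers changed, which is where the pipeline time savings would come from.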

Manual QA vs. Automated QA vs. Agentic QA: A Technical Comparison

| Capability | Manual QA | Automated QA (Playwright / Selenium) | Agentic QA (TestStory.ai) |
|---|---|---|---|
| Test Case Creation | Human-authored, session-by-session | Human-authored scripts, recorded or coded | Autonomous: LLM generates from user stories |
| Gherkin / BDD Output | Manual: written by QA or BA | Manual: step definitions coded separately | Auto-generated in Given/When/Then format |
| Maintenance on UI Change | Manual re-test | Scripts break: manual fix required | Self-healing: agent updates locators and assertions |
| Edge Case Coverage | Dependent on engineer experience | Only what was explicitly scripted | Inferred from requirement semantics by LLM |
| GitHub Integration | None | CI trigger only: tests pre-written | PR-aware: generates tests on PR open (with the TestQuality GitHub integration) |
| Jira Traceability | Manual linking | Manual or custom integration | Native: bidirectional story-to-test linking (with the TestQuality integration) |
| Coverage on New Features | Days after story completion | Days: script authoring required | Minutes: agent generates on story creation |
| Scales with Velocity | Requires headcount | Partially: maintenance still manual | Yes: generation and maintenance are autonomous |

The comparison makes the architectural difference clear. Playwright and Selenium are not being replaced; they remain excellent execution engines. What Agentic QA adds is the generation layer above them: the system that decides what tests to write and keeps them current. TestStory.ai generates the Gherkin scenarios; your existing BDD framework executes them.

Where Playwright and Selenium Still Fit in an Agentic World

A common misreading of the Agentic QA narrative is that it replaces execution frameworks. It does not. Playwright in particular has become the de facto standard for browser automation: its async architecture, multi-browser support, and developer-friendly API make it genuinely excellent for reliable execution at scale.

The problem Playwright cannot solve is upstream from execution. Someone still has to write the tests it runs. Someone still has to update them when the application changes. Playwright's code generator records interactions, but recording is not the same as understanding: it captures what the user did, not what the application is supposed to do.

In an Agentic workflow, the stack looks like this. The product layer holds user stories and acceptance criteria in Jira, Linear, or GitHub Issues. The Agentic layer is where TestStory.ai reads those stories and generates Gherkin scenarios. The management layer is TestQuality, which stores, organizes, and tracks test cases and results. The execution layer is Playwright, Selenium, or Cypress, which runs the generated specifications. The pipeline layer is GitHub Actions or your CI/CD tool, which orchestrates the full flow.

Each layer does what it does best. The Agentic layer ensures Playwright always has well-authored, current, coverage-complete tests to execute.
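The handoff between the Agentic layer and the execution layer comes down to binding each Gherkin step to code. The toy runner below illustrates that binding with a regex registry; it is not Cucumber, SpecFlow, or any real BDD framework, and the `step` decorator, `run_scenario` function, and step bodies are all made up for this sketch. In a real stack, the step bodies would drive Playwright or Selenium rather than a dictionary.

```python
import re
from typing import Callable, List, Tuple

# Registry of (compiled pattern, step function) pairs.
_STEPS: List[Tuple[re.Pattern, Callable[..., None]]] = []


def step(pattern: str):
    """Register a function as the definition for steps matching the pattern."""
    def register(func):
        _STEPS.append((re.compile(pattern), func))
        return func
    return register


def run_scenario(lines: List[str], context: dict) -> None:
    """Execute each step line by dispatching to the first matching definition."""
    for line in lines:
        text = re.sub(r"^(Given|When|Then|And|But)\s+", "", line.strip())
        for regex, func in _STEPS:
            match = regex.fullmatch(text)
            if match:
                func(context, *match.groups())
                break
        else:
            raise LookupError(f"no step definition for: {text}")


@step(r"a cart totalling (\d+) dollars")
def given_cart(context, amount):
    context["total"] = int(amount)


@step(r"the card is declined")
def when_declined(context):
    context["status"] = "declined"


@step(r"the order is not placed")
def then_not_placed(context):
    assert context["status"] == "declined"
```

A generated scenario that uses a step with no matching definition fails loudly with `LookupError`, which is the signal that the execution layer needs a new binding.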

The Ecosystem Advantage: Why Integration Depth Determines Value

Isolated AI test generation tools exist in the market. You paste in a user story, receive test cases, and copy them somewhere useful. This is marginally better than writing tests from scratch, but it does not address the structural problem; it just makes one step in the manual workflow slightly faster.

The value of Agentic QA compounds when the agent is embedded in your existing workflow rather than sitting adjacent to it.

GitHub Integration: When a PR is opened against a story with changed acceptance criteria, the agent detects the delta and generates updated test cases automatically. Coverage is maintained at the commit level, not the sprint level.

Jira Integration: Test cases maintain bidirectional links to the Jira stories that generated them. When a story is updated, affected tests are flagged. When a test fails, the link back to the requirement clarifies business impact and ownership immediately.

TestQuality's Management Layer: Generated tests live in a structured management system, not a flat file or a spreadsheet. Version history, coverage analytics, execution reporting, and audit trails make AI-generated tests actionable at the team and organizational level.

This integration depth is why the platform's 74 percent activation rate for technical referrals is meaningful. Engineers who find TestStory.ai through GitHub integrations adopt it because it removes friction from their existing workflow. It does not ask them to change how they work; it improves what they already do.
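The bidirectional story-to-test linking described above can be modeled as two lookups over the same set of links. This is a sketch of the data structure only, under the assumption that each test traces to exactly one story; `TraceabilityIndex` and its methods are hypothetical names, not the TestQuality or Jira API.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Set


class TraceabilityIndex:
    """Keeps story-to-test and test-to-story lookups in sync."""

    def __init__(self) -> None:
        self._tests_by_story: Dict[str, Set[str]] = defaultdict(set)
        self._story_by_test: Dict[str, str] = {}

    def link(self, story_key: str, test_id: str) -> None:
        self._tests_by_story[story_key].add(test_id)
        self._story_by_test[test_id] = story_key

    def tests_to_flag(self, updated_story: str) -> List[str]:
        # Forward direction: a story changed, so which tests need review?
        return sorted(self._tests_by_story.get(updated_story, set()))

    def requirement_for(self, failed_test: str) -> Optional[str]:
        # Reverse direction: a test failed, so which requirement is at risk?
        return self._story_by_test.get(failed_test)
```

The forward lookup serves the "story updated" case and the reverse lookup serves the "test failed" case, so neither direction requires scanning every link.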

Starting with AI Test Case Generation: The Free Builder

The practical question for most QA teams is not whether Agentic QA is theoretically superior to manual workflows. The question is how to start without a six-month implementation project.

TestQuality's Free AI Test Case Builder is the entry point: an AI test case generator, free to use online, that lets you paste in a user story or feature description and receive Gherkin-formatted test cases in under 60 seconds. No account required to try it. No integration setup needed to evaluate the output quality.

The free builder produces the same LLM-powered output that feeds the full TestStory.ai agentic workflow. Teams typically start here, validate the output against their own requirements, and then evaluate the full platform with GitHub and Jira integrations enabled.

This is also a practical way to address coverage debt incrementally. Start with the user stories your team is actively working on this sprint. Generate test cases using the free builder. Evaluate the coverage against what your team would have written manually. The quality gap is immediately visible, and so is the speed difference.

Try It Now

Generate your first AI test cases in under 60 seconds.

Paste in any user story and TestStory.ai generates structured, Gherkin-formatted test cases instantly — covering happy paths, edge cases, and failure scenarios your team would typically miss. No account required to try it.

No credit card required on either platform.

What Agentic QA Means for QA Engineers in 2026

The honest question that follows from this discussion is what happens to QA engineers when AI agents can generate and maintain test cases autonomously. Based on adoption patterns from teams already running Agentic workflows, the role shifts rather than shrinks.

The work that disappears is mechanical: writing test cases from requirements, updating locators when UI changes, maintaining regression test suites that drift out of coverage. This work consumed most available QA bandwidth in traditional teams — and it was the least intellectually engaging part of the job.

The work that expands is strategic: evaluating AI-generated coverage for gaps the model missed, designing test strategies for complex system interactions, interpreting results in the context of user behavior, and making architectural decisions about the testing stack. These are the skills that differentiate exceptional QA engineers, and they become the primary focus when the mechanical work is automated.

Teams that have made this transition consistently report higher job satisfaction alongside improved coverage metrics. The QA role becomes what it should have always been: quality engineering, not test case transcription.

Getting Started with Agentic QA: A Practical Path

The fastest path to adoption is a targeted proof of concept on a single feature area, not a full platform migration. Here is the sequence that produces the fastest demonstrable results.

Step 1: Select a sprint's worth of user stories, ideally a feature area with known coverage gaps or high regression frequency.

Step 2: Run them through the Free AI Test Case Builder. Review the generated Gherkin scenarios against what your team would have written. Note the edge cases the model caught that you would have missed.

Step 3: Load the generated tests into TestQuality and link them to your Jira stories. Measure the time required versus manual authoring for the same coverage.

Step 4: Enable the GitHub integration and observe how the next PR against those stories triggers automatic test updates. This is the moment most teams decide to expand beyond the pilot.

Step 5: Extend to the full sprint backlog once the workflow is validated and the team is comfortable with the output quality.

This sequence typically produces measurable results — coverage improvement, time savings, or both — within two weeks. That timeline is short enough to justify the experiment without organizational commitment, and the results are clear enough to build the case for broader adoption.

The Bottom Line: Agentic QA Is Not the Future of Testing; It Is the Present

The shift from automated testing to Agentic QA is already underway in the teams that ship the most software at the highest quality. The question for QA leaders in 2026 is not whether to make this transition, but how quickly to move and where to start.

The architectural answer is clear: autonomous AI agents that read requirements, generate Gherkin-formatted test cases, integrate with GitHub and Jira natively, and maintain coverage as the application evolves. That is what TestStory.ai is built to do, and the Free AI Test Case Builder lets any team experience the output quality without any infrastructure commitment.

The gap between a user story and a passing test case has always been a human bottleneck. In 2026, it does not have to be.

Start Free Today

Stop managing test debt.

Start running an Agentic QA workflow.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams, then syncs them directly into TestQuality for execution, tracking, and team collaboration.

✦ Exclusive offer for TestQuality subscribers

Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost. Simply create a TestStory.ai account with the same email as your TestQuality account to activate automatically. Includes all Pro 500 features.

Not a TestQuality subscriber yet? Both platforms offer free plans — sign up for TestStory.ai to generate your test cases, then connect your free TestQuality account to sync, execute, and track them without ever leaving your workflow.

No credit card required on either platform.