AI test automation frameworks are transforming how teams build, execute, and maintain test suites by embedding intelligence directly into the testing workflow.

- NLP modules convert plain-language requirements into executable test cases, narrowing the gap between business stakeholders and technical teams and accelerating test creation cycles.

- Auto-test generation capabilities analyze application behavior to autonomously create comprehensive test scenarios, expanding coverage to edge cases that manual approaches typically miss.

- Self-healing mechanisms automatically update test scripts when UI elements change, reducing the maintenance overhead that can consume up to 50% of test automation budgets.

- Native CI/CD integration ensures AI-powered tests execute seamlessly within DevOps pipelines, providing real-time quality feedback without slowing deployment velocity.

Start small with a pilot framework implementation, prove ROI on a single project, then scale AI testing capabilities across your organization.

Building an AI test automation framework requires more than bolting AI features onto existing test suites. It demands rethinking test automation architecture and how intelligence flows through every layer of your testing ecosystem. According to McKinsey's State of AI survey, 78% of organizations now use AI in at least one business function, a jump from 55% a year earlier. The testing domain is no exception to this acceleration.

Traditional frameworks handle execution well enough. They run scripts, report results, and integrate with pipelines. But they lack the cognitive layer that separates reactive testing from proactive quality assurance. AI in testing introduces capabilities that change what frameworks can accomplish: understanding intent from requirements, generating tests from behavior patterns, predicting defect-prone areas, and healing broken tests without human intervention.

The shift toward AI-powered frameworks for QA automation reflects a broader industry recognition that manual test creation can't scale alongside modern development velocity. When teams deploy multiple times daily, the bottleneck moves from execution speed to test creation and maintenance. That's precisely where AI modules deliver their highest value.

What Exactly Is an AI Test Automation Framework?

An AI test automation framework extends traditional framework architecture by embedding machine learning models, natural language processing engines, and predictive analytics into the core testing infrastructure. The system learns, adapts, and improves without constant human reconfiguration.

Terminology often gets muddied in marketing speak. Adding an AI assistant to a traditional framework does not create an AI-native architecture. True AI frameworks for QA automation process data at multiple levels, from test design through execution analysis, creating feedback loops that continuously optimize testing effectiveness.

A traditional framework executes what you tell it. An AI framework understands what you need, suggests what you might have missed, and fixes what breaks. The intelligence is structural, woven into how the framework operates rather than sprinkled on top as a feature.

How Traditional Frameworks Differ from AI-Native Architectures

Standard automation frameworks follow deterministic paths. You write scripts, define assertions, configure environments, and execute. The framework faithfully reproduces your instructions. When applications change, scripts break. When requirements evolve, you manually update tests.

AI-native architectures introduce probabilistic reasoning. They assess which tests matter most for a given code change. They identify redundant coverage and recommend pruning. They learn from execution patterns to predict flaky tests before they cause pipeline failures. This intelligence emerges from training on your specific application behavior, not generic assumptions.

The practical impact shows up in maintenance metrics. Organizations typically dedicate 30–50% of testing resources to maintaining and updating test scripts. AI-native frameworks with self-healing capabilities can reduce this burden. Understanding test automation fundamentals provides essential context for appreciating how AI transforms these baseline capabilities.

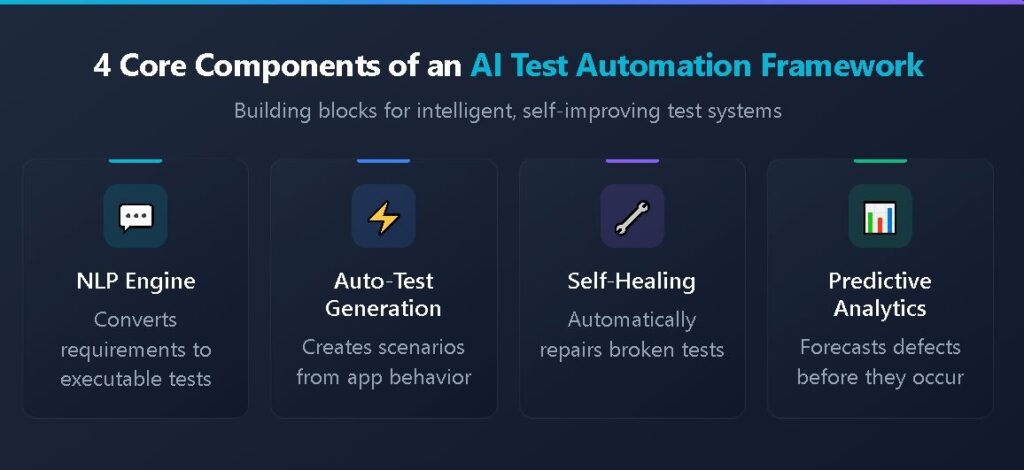

What Are the Core Components of an AI-Driven Framework?

Building an effective AI test automation framework requires assembling several specialized modules that work together as an integrated system. Each component handles specific intelligence tasks while sharing data and insights with other modules.

The architecture typically layers AI capabilities on top of traditional execution engines rather than replacing them entirely. This preserves compatibility with existing test assets while adding cognitive features that enhance every aspect of the testing lifecycle.

The NLP Engine: Converting Requirements to Tests

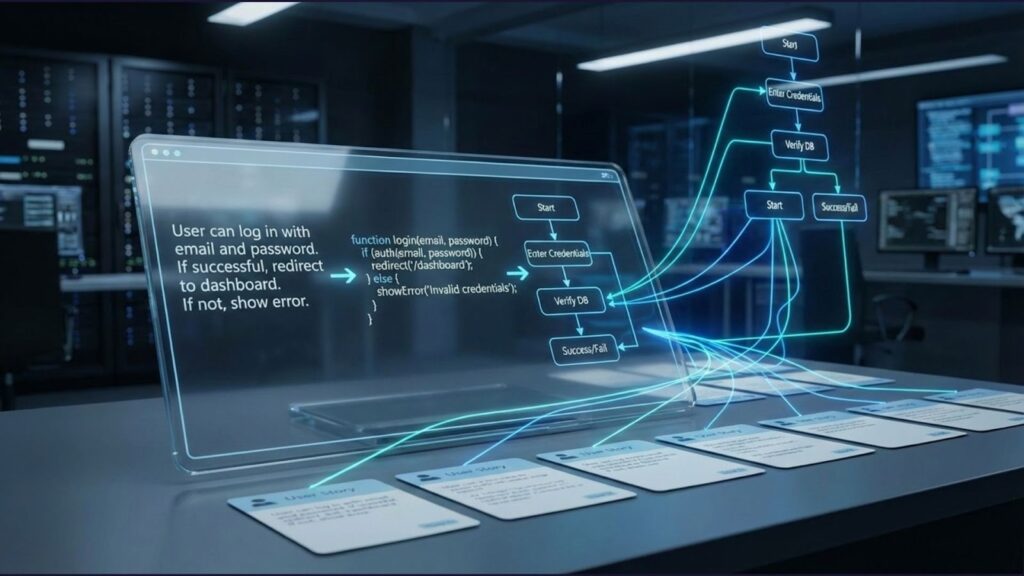

Natural Language Processing forms the gateway between human intent and automated execution. NLP modules parse requirements documents, user stories, and feature descriptions to extract testable conditions. Advanced implementations can distinguish between functional requirements, acceptance criteria, and edge case specifications within the same document.

The processing pipeline typically includes tokenization to break text into meaningful units, named entity recognition to identify system components and actions, and semantic analysis to understand relationships between elements. The output feeds directly into test case generators that translate understood requirements into executable specifications.
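To make the three pipeline stages concrete, here is a deliberately simplified Python sketch. It assumes a hypothetical, hand-written vocabulary (`COMPONENTS`, `ACTIONS`) in place of a trained named entity recognition model, and it reduces semantic analysis to pairing every action with every component in the same sentence. Production NLP modules rely on trained models; this only illustrates how the stages hand data to one another.

```python
import re
from dataclasses import dataclass

# Hypothetical domain vocabulary standing in for a trained NER model.
COMPONENTS = {"account", "email", "login page", "password"}
ACTIONS = {"enter", "lock", "reset", "submit", "verify"}


@dataclass
class ExtractedCondition:
    action: str
    component: str
    sentence: str


def tokenize(sentence: str) -> list[str]:
    """Tokenization: lowercase word tokens, punctuation dropped."""
    return re.findall(r"[a-z]+", sentence.lower())


def recognize_entities(tokens: list[str]) -> tuple[list[str], list[str]]:
    """Entity recognition by dictionary lookup (a gazetteer), not a learned model."""
    text = " ".join(tokens)
    components = [c for c in sorted(COMPONENTS) if re.search(rf"\b{re.escape(c)}\b", text)]
    actions = [t for t in tokens if t in ACTIONS]
    return actions, components


def extract_conditions(requirement: str) -> list[ExtractedCondition]:
    """Split into sentences, then relate each action to each component in that sentence."""
    conditions = []
    for sentence in re.split(r"(?<=[.!?])\s+", requirement.strip()):
        actions, components = recognize_entities(tokenize(sentence))
        for action in actions:
            for component in components:
                conditions.append(ExtractedCondition(action, component, sentence))
    return conditions
```

For the requirement "The user can reset the password. The system must lock the account after five failures.", the sketch yields two conditions, (reset, password) and (lock, account), each of which a downstream generator could turn into a test step.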

The quality of NLP output depends heavily on training data and domain adaptation. Generic NLP models struggle with technical documentation. Effective implementations fine-tune models on your organization's specific terminology, document formats, and writing conventions. This customization improves extraction accuracy.

Auto-Test Generation: Creating Scenarios from Behavior

Automatic test generation modules observe application behavior to create test scenarios without explicit human specification. These systems analyze user interactions, API call patterns, state transitions, and error conditions to identify paths worth testing.

Generation approaches fall into two categories. Model-based generation derives tests from formal specifications or behavioral models extracted from code analysis. Exploration-based generation mimics intelligent user behavior, systematically probing the application while recording interactions that exercise meaningful functionality.
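Model-based generation can be illustrated with a small state-transition model. The sketch below assumes a hypothetical checkout model written by hand as a dictionary and applies one common strategy, transition coverage: for every transition, it builds the shortest event sequence that reaches the source state (found by breadth-first search) and then fires that transition. Real tools extract such models from code or specifications rather than from a hand-written dictionary.

```python
from collections import deque

# A behavioral model: state -> list of (event, next_state) transitions.
Model = dict[str, list[tuple[str, str]]]

# Hypothetical checkout model written by hand for illustration.
CHECKOUT_MODEL: Model = {
    "cart": [("checkout", "payment")],
    "payment": [("pay_ok", "confirmed"), ("pay_fail", "payment"), ("cancel", "cart")],
    "confirmed": [],
}


def shortest_prefixes(model: Model, start: str) -> dict[str, list[str]]:
    """Breadth-first search: shortest event sequence reaching each reachable state."""
    prefixes: dict[str, list[str]] = {start: []}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for event, nxt in model.get(state, []):
            if nxt not in prefixes:
                prefixes[nxt] = prefixes[state] + [event]
                queue.append(nxt)
    return prefixes


def transition_coverage_tests(model: Model, start: str) -> list[list[str]]:
    """One test per reachable transition: reach its source state, then fire the event."""
    prefixes = shortest_prefixes(model, start)
    tests = []
    for state, transitions in model.items():
        if state not in prefixes:
            continue  # unreachable from start: a model issue to report, not a test
        for event, _ in transitions:
            tests.append(prefixes[state] + [event])
    return tests
```

Calling `transition_coverage_tests(CHECKOUT_MODEL, "cart")` yields four event sequences, one per transition: `["checkout"]`, `["checkout", "pay_ok"]`, `["checkout", "pay_fail"]`, and `["checkout", "cancel"]`. A test harness would then replay each sequence against the application and assert the expected end state.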

The sophistication lies in avoiding trivial or redundant tests. Naive generation produces thousands of scenarios that add execution time without improving defect detection. Intelligent generators assess coverage contribution, prioritize risk areas, and deduplicate similar paths. Teams using AI-powered test generation tools often report meaningful coverage improvements while reducing total test count through intelligent deduplication.
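One way to assess coverage contribution and deduplicate is greedy set cover: keep the test that adds the most not-yet-covered items, repeat, and drop tests whose marginal contribution is zero. This sketch assumes each generated test already reports the set of coverage items (branches, paths, or requirement IDs) it exercises; the test names are illustrative, and the greedy choice is a heuristic rather than a guaranteed minimal suite.

```python
def select_by_marginal_coverage(candidates: dict[str, set[str]]) -> list[str]:
    """Greedy set cover over generated tests.

    candidates: test name -> coverage items it exercises.
    Returns the kept tests in selection order; the rest are redundant.
    """
    covered: set[str] = set()
    remaining = dict(candidates)
    selected: list[str] = []
    while remaining:
        # max() returns the first test with the largest gain, so ties keep input order.
        name = max(remaining, key=lambda n: len(remaining[n] - covered))
        gain = remaining.pop(name) - covered
        if not gain:
            break  # every remaining test adds nothing new
        selected.append(name)
        covered |= gain
    return selected


generated = {
    "t1": {"login", "logout"},
    "t2": {"login"},
    "t3": {"login", "logout", "reset"},
    "t4": {"search"},
}
kept = select_by_marginal_coverage(generated)
```

Here `t1` and `t2` are dropped because `t3` already covers everything they exercise, so four generated tests shrink to two with no loss of coverage.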

Self-Healing Mechanisms: Autonomous Maintenance

Self-healing is one of the most immediately valuable capabilities in AI-driven test automation. When UI elements change identifiers, locations, or structures, traditional tests fail. Self-healing frameworks detect these failures, analyze the application's current state, and automatically update locators or assertions to restore test functionality.

The healing process uses multiple strategies. Visual comparison identifies elements that look the same despite changed technical attributes. Semantic analysis finds elements performing equivalent functions. Historical pattern matching predicts likely changes based on past application evolution.

Not all healing is appropriate. Frameworks must distinguish between benign changes worth healing and substantive changes that indicate genuine defects. Sophisticated implementations present healing candidates for human approval rather than autonomously modifying tests, maintaining human oversight while reducing manual effort.
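The combination of similarity scoring and human approval described above can be sketched as follows. This is a minimal illustration under stated assumptions: elements are plain dictionaries of DOM attributes, the attribute weights and both thresholds are hypothetical values, and only attribute matching is used (no visual or historical signals). Strong matches are healed, mid-range matches are queued for review, and weak matches are reported as failures that may indicate a genuine defect.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical attribute weights; real tools also weigh visual and positional signals.
WEIGHTS = {"id": 0.3, "text": 0.25, "name": 0.2, "aria-label": 0.15, "tag": 0.1}


@dataclass
class HealingDecision:
    status: str  # "healed", "needs_review", or "no_match"
    element: Optional[dict]
    score: float


def similarity(fingerprint: dict, candidate: dict) -> float:
    """Weighted share of the stored attributes that the candidate still matches."""
    total = sum(w for attr, w in WEIGHTS.items() if attr in fingerprint)
    if total == 0:
        return 0.0
    matched = sum(
        w for attr, w in WEIGHTS.items()
        if attr in fingerprint and candidate.get(attr) == fingerprint[attr]
    )
    return matched / total


def heal(fingerprint: dict, candidates: list[dict],
         auto_threshold: float = 0.8, review_threshold: float = 0.5) -> HealingDecision:
    """Pick the closest element on the current page and decide how to treat the match."""
    if not candidates:
        return HealingDecision("no_match", None, 0.0)
    best = max(candidates, key=lambda c: similarity(fingerprint, c))
    score = similarity(fingerprint, best)
    if score >= auto_threshold:
        return HealingDecision("healed", best, score)
    if score >= review_threshold:
        # Plausible but uncertain: hold for human approval instead of rewriting the test.
        return HealingDecision("needs_review", best, score)
    # Nothing close enough: treat as a real failure, possibly a genuine defect.
    return HealingDecision("no_match", None, score)
```

Setting `auto_threshold` above 1.0 routes every candidate to human review, which matches the more conservative approval-first policy.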

Predictive Analytics: Forecasting Quality Outcomes

Predictive modules analyze historical test data, code changes, and defect patterns to forecast where problems will likely occur. This intelligence enables risk-based testing strategies that focus effort where it delivers maximum defect-finding value.

Common predictions include defect probability scores for changed code areas, flakiness likelihood for test cases based on environmental factors, and execution time estimates that enable optimal parallel distribution. These predictions transform testing from comprehensive-but-wasteful to targeted-and-efficient.
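Flakiness prediction usually starts from simple historical signals. The sketch below computes one such signal: among commits where a test ran more than once, the share on which it both passed and failed without any code change. It assumes CI history is available as `(commit_sha, passed)` pairs per test; the 0.2 threshold is an arbitrary illustrative value, and a predictive module would typically combine several features rather than rely on this score alone.

```python
from collections import defaultdict


def flakiness_score(runs: list[tuple[str, bool]]) -> float:
    """Share of re-run commits on which the test both passed and failed.

    runs: (commit_sha, passed) pairs from CI history for a single test.
    """
    by_commit: dict[str, list[bool]] = defaultdict(list)
    for commit, passed in runs:
        by_commit[commit].append(passed)
    reruns = [results for results in by_commit.values() if len(results) >= 2]
    if not reruns:
        return 0.0  # no evidence either way
    mixed = sum(1 for results in reruns if len(set(results)) == 2)
    return mixed / len(reruns)


def rank_flaky(history: dict[str, list[tuple[str, bool]]],
               threshold: float = 0.2) -> list[tuple[str, float]]:
    """Tests at or above the threshold, most flaky first."""
    scores = [(name, flakiness_score(runs)) for name, runs in history.items()]
    return sorted((s for s in scores if s[1] >= threshold), key=lambda s: s[1], reverse=True)
```

A test that fails consistently on the same commit scores zero here, because repeatable failures are more likely real regressions than flakiness.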

The predictive layer connects testing intelligence to development workflows. When developers see defect probability scores for their commits, they can address issues before formal testing begins. This shift-left effect accelerates quality feedback loops beyond what reactive testing approaches achieve.

How Does NLP Transform Test Case Creation?

Natural Language Processing expands who can create automated tests and helps test suites scale faster. By accepting requirements in human language rather than code syntax, NLP modules democratize test creation while maintaining technical rigor in execution.

NLP systems understand context, infer implicit requirements, and identify ambiguities that might otherwise cause downstream defects. They function as intelligent intermediaries between business intent and technical implementation.

From User Stories to Executable Tests

The most compelling NLP application converts user stories directly into test cases. A story like "As a customer, I want to reset my password so I can regain account access" contains implicit test scenarios: successful reset flow, invalid email handling, expired link behavior, and rate limiting for repeated attempts.

Advanced NLP systems extract these scenarios without explicit enumeration. They understand that password reset implies email verification, that email verification implies expiration windows, and that security features imply protection against abuse patterns. This inferential capability expands coverage beyond literally stated requirements.
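The inference chain above (password reset implies email verification, which implies expiration windows) can be modeled as a traversal over an implication graph. In this sketch both the graph (`IMPLIES`) and the scenario templates (`SCENARIOS`) are hypothetical, hand-written domain knowledge, and it assumes an earlier NLP step has already mapped the user story to the concept `"password reset"`.

```python
# Hypothetical domain knowledge: concept -> concepts it implies.
IMPLIES = {
    "password reset": ["email verification", "abuse protection"],
    "email verification": ["link expiration", "invalid email handling"],
    "abuse protection": ["rate limiting"],
}

# Hypothetical scenario templates attached to individual concepts.
SCENARIOS = {
    "password reset": ["successful reset flow"],
    "link expiration": ["expired reset link is rejected"],
    "invalid email handling": ["reset requested for unknown email"],
    "rate limiting": ["repeated reset requests are throttled"],
}


def implied_concepts(root: str) -> list[str]:
    """Depth-first traversal collecting every concept reachable from the root."""
    seen: set[str] = set()
    order: list[str] = []
    stack = [root]
    while stack:
        concept = stack.pop()
        if concept in seen:
            continue  # guards against cycles in the implication graph
        seen.add(concept)
        order.append(concept)
        # Push children reversed so they are visited in their listed order.
        stack.extend(reversed(IMPLIES.get(concept, [])))
    return order


def scenarios_for(root: str) -> list[str]:
    """Expand a story concept into the test scenarios it implies."""
    return [s for c in implied_concepts(root) for s in SCENARIOS.get(c, [])]
```

Starting from `"password reset"`, the traversal reaches all four scenarios from the user story example, including rate limiting, which the story never states explicitly.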

The generated tests maintain bidirectional traceability to source requirements. When requirements change, affected tests are flagged for review or automatic regeneration. When tests fail, the connection to business requirements clarifies the impact and priority.
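Bidirectional traceability comes down to two indexes kept in sync, plus a way to detect requirement changes. The sketch below assumes requirements are identified by IDs such as `REQ-1` (hypothetical) and detects changes by comparing SHA-256 hashes of whitespace-normalized requirement text captured when each test was first linked.

```python
import hashlib
from collections import defaultdict


def text_hash(text: str) -> str:
    # Normalize whitespace so reformatting alone does not count as a change.
    return hashlib.sha256(" ".join(text.split()).encode("utf-8")).hexdigest()


class TraceabilityIndex:
    def __init__(self) -> None:
        self.req_to_tests: dict[str, set[str]] = defaultdict(set)
        self.test_to_reqs: dict[str, set[str]] = defaultdict(set)
        self.req_hashes: dict[str, str] = {}

    def link(self, req_id: str, req_text: str, test_id: str) -> None:
        """Record the link in both directions and remember the requirement's baseline."""
        self.req_to_tests[req_id].add(test_id)
        self.test_to_reqs[test_id].add(req_id)
        self.req_hashes.setdefault(req_id, text_hash(req_text))

    def tests_to_review(self, current_reqs: dict[str, str]) -> set[str]:
        """Tests linked to requirements whose text changed since they were linked."""
        flagged: set[str] = set()
        for req_id, text in current_reqs.items():
            baseline = self.req_hashes.get(req_id)
            if baseline is not None and baseline != text_hash(text):
                flagged |= self.req_to_tests[req_id]
        return flagged

    def requirements_for(self, test_id: str) -> set[str]:
        """Reverse lookup that explains the business impact of a failing test."""
        return set(self.test_to_reqs.get(test_id, set()))
```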

Handling Ambiguous Requirements

Human-written requirements frequently contain ambiguities that cause downstream testing gaps. NLP analysis detects these ambiguities and either resolves them through contextual inference or escalates them for clarification before test generation proceeds.

Consider a requirement stating "the system should respond quickly." NLP analysis flags this as ambiguous, lacking quantified performance criteria. Depending on configuration, the system might infer a default threshold from similar requirements, prompt for specific latency targets, or generate parameterized tests that accept variable thresholds.
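A rule-based version of this ambiguity check is easy to sketch. It assumes a hypothetical list of vague qualifiers and treats a requirement as quantified if it contains a number followed by a time or percentage unit; a real NLP module would use learned models rather than a word list. The `triage` function mirrors two of the options described above: fall back to an inferred default, or escalate for clarification.

```python
import re
from typing import Optional

# Hypothetical vague qualifiers; a team would tune this list to its own documents.
VAGUE_TERMS = {"quickly", "fast", "responsive", "soon", "efficiently", "user-friendly"}

# A number followed by a time or percentage unit counts as a quantified criterion.
QUANTIFIED = re.compile(
    r"\b\d+(?:\.\d+)?\s*(?:ms|s|sec|seconds?|milliseconds?|%)(?!\w)", re.IGNORECASE
)


def find_ambiguities(requirement: str) -> list[str]:
    """Vague terms in a requirement that has no quantified criterion."""
    if QUANTIFIED.search(requirement):
        return []
    words = set(re.findall(r"[a-z-]+", requirement.lower()))
    return sorted(words & VAGUE_TERMS)


def triage(requirement: str, inferred_default_ms: Optional[float] = None):
    """Decide whether to generate tests, use an inferred default, or escalate."""
    vague = find_ambiguities(requirement)
    if not vague:
        return ("generate", None)
    if inferred_default_ms is not None:
        # e.g. a threshold inferred from similar, already-quantified requirements
        return ("use_default", inferred_default_ms)
    return ("escalate", vague)
```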

Identifying unclear requirements during test planning highlights specification issues early enough for correction. Testing becomes a forcing function for requirement quality rather than a downstream consumer of whatever documents exist.

Why Is Automated Test Generation a Game-Changer?

Manual test creation can't scale to match continuous delivery velocity. Development teams deploying multiple times daily need test coverage that automatically expands as features grow. Automated generation closes this gap by creating tests at the speed of development rather than the pace of manual analysis.

The change is structural. Traditional testing models assume a fixed budget of human attention allocated across test creation, execution, and maintenance. Automated generation reallocates that budget toward review, optimization, and strategic planning while AI handles the volume.

Expanding Coverage to Edge Cases

Human testers naturally focus on expected paths and obvious failure modes. Edge cases, unusual combinations, and boundary conditions often escape attention. AI generation approaches systematically explore these areas that manual testing typically misses.

Combinatorial analysis identifies parameter interactions worth testing. State machine exploration finds unexpected paths through application workflows. Boundary analysis generates tests at threshold values where off-by-one errors hide. The resulting test suites can expose defects that have survived years of manual testing.
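Boundary analysis is the most mechanical of these techniques. For an input accepted on an inclusive integer range, classic boundary value analysis tests each limit together with its immediate neighbors on both sides, which is where off-by-one errors show up. The sketch assumes a single integer field; combinatorial and state-machine exploration need considerably more machinery.

```python
def boundary_values(low: int, high: int) -> list[int]:
    """Classic boundary value analysis for an inclusive integer range [low, high]."""
    if low > high:
        raise ValueError("low must not exceed high")
    # Each limit plus its neighbors; a set removes overlaps in narrow ranges.
    return sorted({low - 1, low, low + 1, high - 1, high, high + 1})


def boundary_cases(low: int, high: int) -> list[tuple[int, bool]]:
    """Pair each boundary value with whether the input should be accepted."""
    return [(v, low <= v <= high) for v in boundary_values(low, high)]
```

For a quantity field that accepts 1 to 10, `boundary_cases(1, 10)` produces six cases: 0 and 11 should be rejected, while 1, 2, 9, and 10 should be accepted.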

Coverage expansion must balance thoroughness against execution feasibility. Intelligent generators assess marginal coverage contribution and prioritize high-value scenarios. The goal is not exhaustive testing but optimal testing within practical constraints.

Reducing Time-to-Coverage for New Features

New feature development creates urgent testing needs. AI generation can produce initial test coverage within hours rather than days, letting development and testing proceed in parallel rather than sequentially.

The speed advantage compounds across releases. Each feature increment comes with automatically generated test coverage, so over time the cumulative coverage advantage of AI-assisted teams over manual-only approaches can grow substantially.

Framework Component Comparison

Understanding how different AI capabilities contribute to testing outcomes helps prioritize implementation investments. The following table summarizes the key components of AI frameworks for QA automation, their primary benefits, and typical implementation complexity:

| Component | Primary Benefit | Implementation Complexity |
|---|---|---|
| NLP Test Generation | Converts requirements to tests automatically | Medium |
| Self-Healing | Reduces maintenance effort | Medium |
| Predictive Analytics | Enables risk-based test prioritization | High |
| Visual AI Testing | Catches UI defects humans miss | Low |
| Intelligent Execution | Optimizes parallel distribution | Medium |
| Behavior Analysis | Generates tests from usage patterns | High |

Implementation complexity reflects both technical requirements and organizational change management. Components requiring significant workflow changes face higher adoption barriers regardless of technical simplicity.

5 Steps to Implement Your AI Test Automation Framework

Successful implementation follows a deliberate progression from foundation to advanced capabilities. Rushing to sophisticated features before establishing solid fundamentals produces fragile systems that fail under production pressure.

Step 1: Assess Current State and Define Goals: Document existing automation coverage, maintenance costs, and pain points. Identify specific outcomes that would justify investment, such as reduced maintenance hours, expanded coverage percentages, and faster test creation cycles. Quantified goals enable meaningful success measurement.

Step 2: Select Framework Components Based on Priorities: Match AI capabilities to your highest priority pain points. If maintenance consumes excessive effort, prioritize self-healing. If test creation bottlenecks development, focus on NLP generation. Avoid implementing everything simultaneously.

Step 3: Build Integration Infrastructure: AI modules require data flows from existing systems. Establish connections to requirements repositories, version control, CI/CD pipelines, and test management platforms. These integrations enable the information exchange that powers AI capabilities.

Step 4: Pilot with a Contained Scope: Select a single project or application for initial implementation. This contained scope allows thorough learning without organization-wide disruption. Document what works, what fails, and what needs adjustment before broader rollout.

Step 5: Scale Based on Validated Results: Expand successful patterns to additional teams and projects. Use pilot learnings to streamline subsequent implementations. Build internal expertise that accelerates adoption across the organization.

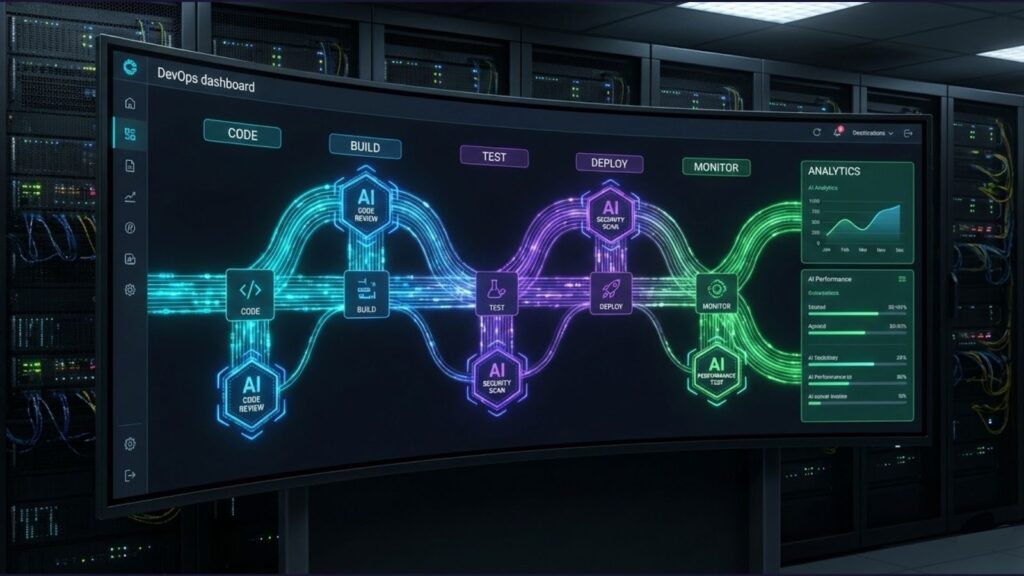

How Do You Integrate AI Frameworks with CI/CD Pipelines?

Test automation architecture must fit seamlessly within DevOps workflows to deliver value at development speed. Integration points span the entire pipeline from code commit through production deployment.

The most effective integrations automatically trigger AI analysis. Code commits initiate impact analysis that identifies affected tests. Completed builds launch optimized test execution. Failed tests trigger self-healing evaluation. This automation removes manual steps that would otherwise slow pipeline flow.
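Commit-triggered impact analysis can be sketched as a lookup from changed files to the tests that exercised them. This assumes a per-test coverage map recorded on an earlier run (test name to set of source paths) and a list of changed paths, which in a Git-based pipeline could come from `git diff --name-only`. When a changed file is not covered by any test, its impact is unknown, so the sketch conservatively falls back to the full suite; ignoring `.md` files is an illustrative choice.

```python
def affected_tests(changed_files: list[str], coverage_map: dict[str, set[str]],
                   ignored_suffixes: tuple[str, ...] = (".md",)) -> set[str]:
    """Tests whose recorded coverage touches a changed file.

    coverage_map: test name -> source paths the test exercised on an earlier run.
    """
    relevant = {f for f in changed_files if not f.endswith(ignored_suffixes)}
    covered_anywhere = set().union(*coverage_map.values())
    if relevant - covered_anywhere:
        # A changed file no test has ever touched: impact unknown, so run everything.
        return set(coverage_map)
    return {test for test, files in coverage_map.items() if files & relevant}
```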

Pipeline Configuration Considerations

AI modules often require more computational resources than traditional test execution. Container orchestration, parallel execution clusters, and cloud scaling accommodate these demands without blocking deployment pipelines.

Feedback timing matters. AI analysis that delivers results hours after a commit loses value for developers who have moved on to other tasks. Pipeline architecture must balance thoroughness against latency, for example by running quick AI checks immediately and deferring comprehensive analysis to later stages.
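One way to balance thoroughness against latency is to split tests into an immediate tier that fits a feedback time budget and a deferred tier for later pipeline stages. The sketch assumes each test already has a risk score (for example, from the predictive layer) and an estimated duration in seconds, both hypothetical inputs here. It ranks tests greedily by risk per second, a simple heuristic rather than an optimal solution.

```python
def split_tiers(tests: dict[str, tuple[float, float]],
                budget_s: float) -> tuple[list[str], list[str]]:
    """Split tests into an immediate tier within the time budget and a deferred tier.

    tests: name -> (risk score, estimated duration in seconds).
    """
    # Highest risk per second first; max() guards against zero-duration estimates.
    order = sorted(tests, key=lambda n: tests[n][0] / max(tests[n][1], 1e-9), reverse=True)
    immediate: list[str] = []
    deferred: list[str] = []
    used = 0.0
    for name in order:
        _, duration = tests[name]
        if used + duration <= budget_s:
            immediate.append(name)
            used += duration
        else:
            deferred.append(name)
    return immediate, deferred
```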

Teams achieving mature integration between test management and CI/CD report faster deployment cycles while maintaining quality standards. The key is treating testing as an accelerator rather than a gate.

Handling AI-Specific Pipeline Challenges

AI components introduce failure modes unfamiliar to traditional automation. Model inference can time out, drift between training data and current application behavior can degrade prediction accuracy, and resource contention can cause unpredictable latency. Pipeline design must accommodate these possibilities.

Graceful degradation strategies prevent AI failures from blocking deployments entirely. When AI modules fail, pipelines should fall back to traditional execution rather than halting. Monitoring and alerting ensure AI health issues receive attention without creating deployment emergencies.
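Graceful degradation can be as simple as wrapping the AI call in a timeout and falling back to the traditional suite on any failure. In this sketch, `ai_select` stands in for a hypothetical AI test-selection function, and the 30-second default timeout is an assumption to tune per pipeline. A hung model call is left running in its worker thread instead of blocking the build, and a warning is logged so the AI health issue still gets attention.

```python
import concurrent.futures as cf
import logging
from typing import Callable, Sequence

log = logging.getLogger("ai-pipeline")


def select_tests_with_fallback(ai_select: Callable[[list[str]], Sequence[str]],
                               all_tests: list[str],
                               timeout_s: float = 30.0) -> tuple[list[str], str]:
    """Use the AI selector if it answers in time; otherwise run the full suite."""
    pool = cf.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(ai_select, all_tests)
    try:
        selected = future.result(timeout=timeout_s)
        if not selected:
            raise ValueError("AI selector returned no tests")
        return list(selected), "ai"
    except Exception as exc:  # timeout, model error, or empty answer
        log.warning("AI test selection unavailable (%r); running full suite", exc)
        return list(all_tests), "fallback"
    finally:
        # Do not wait for a hung model call; the pipeline moves on either way.
        pool.shutdown(wait=False)
```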

Frequently Asked Questions

What skills do QA teams need to implement AI test automation frameworks? Teams benefit from a foundational understanding of machine learning concepts, though deep expertise isn't required for most implementations. More critical is domain knowledge about your application and testing requirements, which helps configure AI modules effectively. Basic programming skills remain valuable for custom integrations and override scenarios.

How long does it take to see ROI from AI testing investments? Self-healing and visual testing capabilities typically show returns quickly through reduced maintenance effort. NLP-based test generation and predictive analytics require a longer runway to accumulate sufficient training data and workflow integration for measurable impact. Start with quick wins while building toward strategic capabilities.

Can AI frameworks work with existing test automation assets? Yes, AI frameworks typically layer on top of existing automation infrastructure rather than replacing it. Your current Selenium, Playwright, or other framework tests remain functional while AI modules add intelligence. This approach protects automation investments while progressively enhancing capabilities.

What are the biggest risks when implementing AI test automation? Over-reliance on AI recommendations without human oversight can introduce subtle quality gaps. Training AI on unrepresentative data produces biased test generation. Underestimating integration complexity leads to implementation delays. Mitigate these risks through phased rollout, continuous validation, and maintaining human review checkpoints.

Transform Your Testing with Intelligent Automation

The shift toward AI test automation frameworks is arguably the most significant evolution in testing methodology since automation itself. Organizations that build these capabilities now can establish advantages in quality and delivery speed that late adopters will struggle to match.

Implementation success depends on viewing AI as a strategic capability rather than a tactical tool. The frameworks, integrations, and processes you establish today determine your testing capacity for years ahead. Investment in intelligent infrastructure compounds as AI capabilities mature.

TestQuality's AI-driven QA platform combines intelligent test creation through TestStory.ai with comprehensive test management, native GitHub and Jira integration, and seamless CI/CD connectivity. Our QA Agents work alongside your team to generate, execute, and maintain tests at the pace modern development demands. Start your free trial today and experience how AI transforms testing from bottleneck to accelerator.