Gherkin's structured, human-readable format gives it a decisive edge when working with AI-powered testing tools.

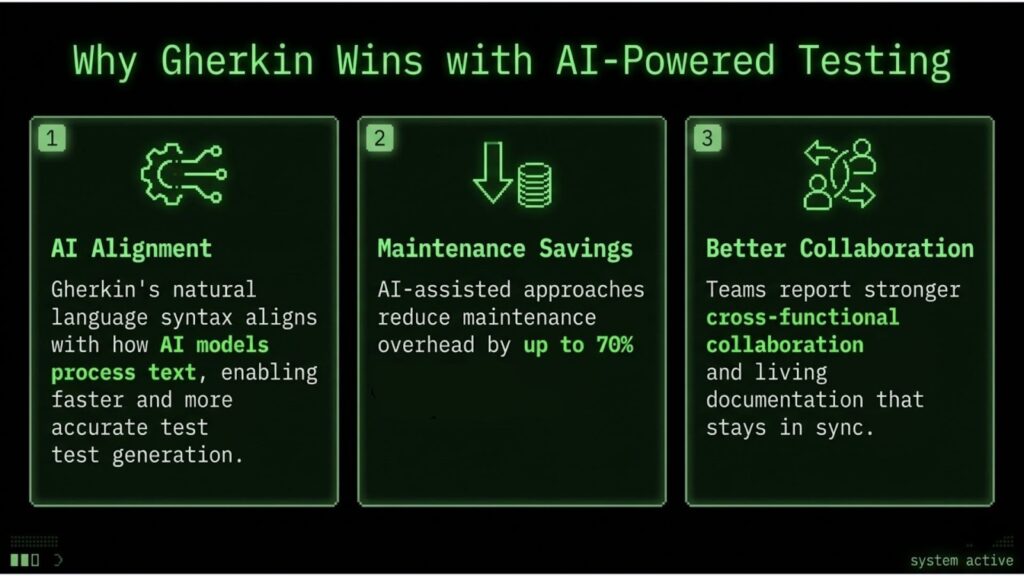

- AI models trained on natural language patterns tend to parse Gherkin's Given-When-Then syntax more reliably than procedural test scripts, which speeds up test generation and simplifies maintenance.

- Test script maintenance consumes up to 50% of QA engineering time, and AI-assisted approaches have cut that overhead by up to 70%.

- Teams adopting BDD with Gherkin report stronger cross-functional collaboration and living documentation that stays synchronized with actual system behavior.

- Hybrid strategies combining Gherkin for behavior validation with traditional scripts for performance and API testing deliver the strongest coverage.

Start evaluating your test suite structure now: AI-powered QA is becoming the industry standard, and your test format determines how well these tools can assist you.

The debate over Gherkin vs traditional testing has taken an unexpected turn. What started as a philosophical argument about collaboration and readability has become a practical question with measurable consequences: which test format works better with the AI tools that teams depend on?

Seventy-two percent of QA professionals now use AI for test case and script generation. That number will keep climbing. The question is whether your existing test suite can actually leverage these capabilities or whether your format choice is creating an invisible ceiling on what AI can do for your team.

Modern test management demands more than just passing builds. Teams need living documentation, rapid feedback loops, and tests that adapt as requirements evolve. The structural differences between Gherkin and traditional scripts create different outcomes when AI enters the workflow.

What Separates Gherkin vs Traditional Testing?

Before addressing AI compatibility, understanding the core distinctions between Gherkin vs traditional testing clarifies why the differences matter for automated tooling.

The Structure Question

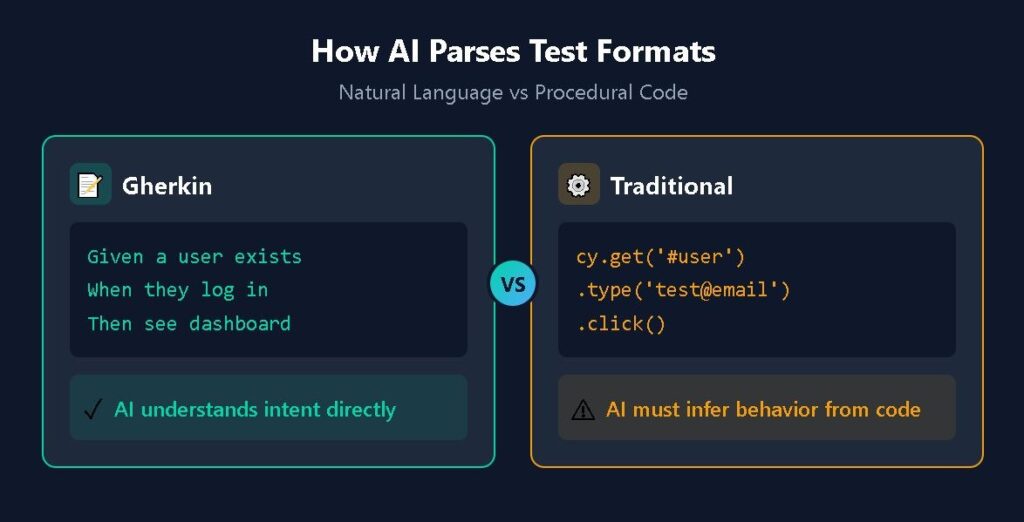

Gherkin uses a declarative, behavior-focused syntax built around the Given-When-Then pattern. Each scenario describes what the system should do from a user perspective without specifying implementation details. A login test might read: "Given a registered user exists, When they enter valid credentials, Then they should see their dashboard."

Traditional test scripts take a procedural approach. The same login test becomes a sequence of specific commands: locate the username field by CSS selector, input the value, locate the password field, input the value, click the submit button, wait for navigation, assert the dashboard element exists.

The Gherkin version communicates intent. The scripted version communicates the mechanism. Both can validate the same functionality, but they encode information differently.

Why This Distinction Matters for Teams

Gherkin scenarios function as executable specifications that non-technical stakeholders can read, review, and contribute to. When a product manager sees "Given a premium subscriber, When they access exclusive content, Then they should see the full article," they can verify whether that matches the actual requirement.

Traditional scripts require translation. Someone technical must interpret cy.get('[data-testid="content-body"]').should('be.visible') and explain what it validates. That translation step adds a communication layer that slows feedback and introduces opportunities for misalignment.

For teams practicing behavior-driven development, this communication advantage often justifies the investment in Gherkin adoption. The BDD vs scripted tests debate has historically centered on this collaboration benefit.

How Does AI Parse Gherkin vs Scripted Tests?

The arrival of AI tools that can read and write Gherkin has shifted the conversation. AI models process these formats differently, with significant implications for test generation, maintenance, and analysis.

Natural Language Advantages

Large language models excel at understanding natural language patterns. Gherkin's structured English syntax aligns closely with how these models process text. The Given-When-Then format provides consistent semantic markers that help AI systems:

- Identify preconditions (Given statements)

- Recognize user actions (When statements)

- Extract expected outcomes (Then statements)
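To make those semantic markers concrete, here is a minimal Python sketch that groups a scenario's steps into preconditions, actions, and outcomes. It assumes one scenario per input string and treats And/But as continuing the previous keyword, which mirrors Gherkin's convention. It is not a full Gherkin parser (no tags, data tables, or doc strings), and the names `ParsedScenario` and `parse_scenario` are hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ParsedScenario:
    """Steps of one scenario, grouped by their Given/When/Then role."""
    name: str
    given: list = field(default_factory=list)  # preconditions
    when: list = field(default_factory=list)   # user actions
    then: list = field(default_factory=list)   # expected outcomes


# Matches a step line and captures its keyword and the step text.
STEP_RE = re.compile(r"^\s*(Given|When|Then|And|But)\s+(.*\S)\s*$")


def parse_scenario(text: str) -> ParsedScenario:
    scenario = ParsedScenario(name="")
    current = None  # attribute name of the last primary keyword seen
    for line in text.splitlines():
        stripped = line.strip()
        if stripped.startswith("Scenario:"):
            scenario.name = stripped[len("Scenario:"):].strip()
            continue
        match = STEP_RE.match(line)
        if not match:
            continue  # skip blank lines, comments, and anything else
        keyword, body = match.groups()
        if keyword in ("Given", "When", "Then"):
            current = keyword.lower()
        elif current is None:
            # And/But continue the previous keyword, so they cannot come first.
            raise ValueError(f"{keyword!r} step before any Given/When/Then: {body}")
        getattr(scenario, current).append(body)
    return scenario


login = """
Scenario: Registered user logs in
  Given a registered user exists
  When they enter valid credentials
  And they submit the login form
  Then they should see their dashboard
"""

parsed = parse_scenario(login)
print(parsed.given)  # preconditions
print(parsed.when)   # actions
print(parsed.then)   # outcomes
```

The "And" step lands under `when` because it follows a When step, which is exactly the kind of structural cue that procedural code does not expose.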

When you ask an AI tool to generate additional test scenarios, it can parse existing Gherkin files and produce new scenarios that follow the same patterns and vocabulary. The semantic structure acts as a template that guides generation.

Traditional scripts present different challenges. AI must infer intent from implementation code, which contains noise from framework-specific syntax, selector strategies, and assertion libraries. The actual behavior being tested gets buried under technical machinery.

Generating New Tests from Existing Patterns

Consider the practical task of expanding test coverage. With a well-structured Gherkin feature file, AI can recognize patterns like "Given I am logged in as [role]" and automatically suggest scenarios for roles you haven't covered. It can identify that you're testing successful paths and propose corresponding failure scenarios.
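A simplified Python sketch of that gap analysis follows. It assumes a hypothetical `KNOWN_ROLES` list and a fixed step wording ("Given I am logged in as ..."); an AI tool would infer both far more flexibly. The drafts deliberately leave the Then step as a Gherkin comment, because the expected outcome for each role still needs a human decision.

```python
import re

# Hypothetical roles the application supports; in a real project these would
# come from requirements or access-control configuration.
KNOWN_ROLES = ["admin", "editor", "viewer", "guest"]

# Captures the role in steps like "Given I am logged in as an admin".
ROLE_RE = re.compile(r"Given I am logged in as (?:an? )?(\w+)")


def covered_roles(feature_text: str) -> set:
    """Return every role that already appears in a login precondition."""
    return set(ROLE_RE.findall(feature_text))


def draft_missing_role_scenarios(feature_text: str, action: str) -> list:
    """Draft one scenario skeleton per known role the feature does not cover."""
    covered = covered_roles(feature_text)
    drafts = []
    for role in KNOWN_ROLES:
        if role in covered:
            continue
        drafts.append(
            f"Scenario: {action.capitalize()} as {role}\n"
            f"  Given I am logged in as {role}\n"
            f"  When I {action}\n"
            f"  # TODO: add the Then step describing what a {role} should see"
        )
    return drafts


feature = """
Feature: Account settings
  Scenario: Admin opens settings
    Given I am logged in as an admin
    When I open the settings page
    Then I should see the user management panel

  Scenario: Viewer opens settings
    Given I am logged in as a viewer
    When I open the settings page
    Then I should see a read-only view
"""

for draft in draft_missing_role_scenarios(feature, "open the settings page"):
    print(draft, end="\n\n")
```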

With traditional scripts, the AI must first reverse-engineer what behavior the code validates before it can suggest extensions. This extra translation step introduces errors and limits the quality of generated tests.

Teams using AI in test script writing report higher accuracy and faster iteration when working with Gherkin-based test suites. The format gives AI clearer context about your specific application domain.

Which Approach Wins on Maintainability?

Maintainability determines long-term test suite viability. The Capgemini World Quality Report found that script maintenance consumes up to 50% of test engineering time. Teams using AI-based testing tools have reduced that maintenance burden by up to 70%.

The Gherkin vs traditional testing comparison on maintainability reveals consistent patterns:

| Factor | Gherkin/BDD | Traditional Scripts |
|---|---|---|
| Readability | Plain English scenarios readable by entire team | Requires technical knowledge to interpret |
| Change impact | Behavior changes affect scenarios; implementation changes stay in step definitions | Any change may require script updates |
| Duplication | Reusable step definitions reduce redundancy | Copy-paste tendencies create maintenance debt |
| Documentation sync | Scenarios serve as living documentation | Tests and documentation diverge over time |
| AI maintenance support | Strong alignment with natural language AI tools | Requires more human interpretation for AI assistance |

The Declarative Advantage

Traditional scripts often become brittle because they encode implementation details. When a button's CSS class changes, dozens of scripts may need to be updated. Gherkin isolates these details in step definitions, so the scenario "When I submit the form" remains stable while only the underlying automation code adapts.

This architectural separation creates a natural fit for AI-assisted maintenance. Tools can modify step definitions based on UI changes without touching scenarios that stakeholders have reviewed and approved. The abstraction layer that makes Gherkin more accessible to humans also makes it more tractable for AI systems.
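The separation between scenario text and step definitions can be sketched in plain Python. Frameworks such as Cucumber or behave provide this mapping in practice; the `step` decorator, `run_step` dispatcher, and `FakePage` driver below are hypothetical stand-ins so the example runs without a browser. Note that each selector appears in exactly one place.

```python
import re

# Registry of (compiled pattern, function) pairs, filled in by the decorator.
STEP_DEFINITIONS = []


def step(pattern):
    """Register the decorated function as the automation for matching step text."""
    def register(func):
        STEP_DEFINITIONS.append((re.compile(f"^{pattern}$"), func))
        return func
    return register


# Implementation details live only in the step layer.
USERNAME_FIELD = "[data-testid='username']"
SUBMIT_BUTTON = "[data-testid='login-submit']"


class FakePage:
    """Records interactions instead of driving a real browser."""

    def __init__(self):
        self.fields = {}
        self.clicked = []

    def fill(self, selector, value):
        self.fields[selector] = value

    def click(self, selector):
        self.clicked.append(selector)


@step(r"I enter (\S+) as my username")
def enter_username(page, username):
    page.fill(USERNAME_FIELD, username)


@step(r"I submit the form")
def submit_form(page):
    page.click(SUBMIT_BUTTON)


def run_step(page, step_text):
    """Dispatch one step (keyword already stripped) to its definition."""
    for pattern, func in STEP_DEFINITIONS:
        match = pattern.match(step_text)
        if match:
            func(page, *match.groups())
            return
    raise LookupError(f"No step definition matches: {step_text!r}")


page = FakePage()
for text in ["I enter alice as my username", "I submit the form"]:
    run_step(page, text)
print(page.fields, page.clicked)
```

If the submit button's selector changes, only `SUBMIT_BUTTON` is edited; the step text "I submit the form" and every scenario that uses it stay untouched.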

Five Advantages of Gherkin in AI-Powered Testing

For teams evaluating whether to adopt or expand Gherkin usage, these advantages become more prominent as AI tooling matures:

- Superior test generation accuracy: AI models trained on natural language patterns produce higher-quality Gherkin scenarios, with fewer hallucinations, than they do when generating procedural code. The constrained vocabulary and consistent structure reduce ambiguity.

- Faster requirement-to-test conversion: Pasting a user story into an AI tool and receiving executable Gherkin scenarios happens in seconds. Converting requirements to traditional scripts requires intermediate translation steps that slow the process and introduce errors.

- Self-documenting test suites: Effective Gherkin scenarios serve triple duty as requirements documentation, test specifications, and acceptance criteria. This eliminates the documentation drift that plagues traditional approaches where tests and documentation live in separate systems.

- Reduced cognitive load for maintenance: When a scenario fails, understanding what broke requires reading a sentence rather than parsing code. This accessibility extends to AI tools, which can explain failures in business terms rather than technical jargon.

- Natural integration with QA agents: AI-powered QA agents operate most effectively with structured, behavior-focused inputs. These agents can autonomously navigate Gherkin scenarios, expanding coverage and identifying gaps without constant human guidance.

How Does TestStory.ai Transform a User Story into an Executable Reality?

To understand the impact of an AI-powered QA workflow, let’s trace a standard requirement through the platform. We'll start with the common, yet often under-specified, authentication example shared above to demonstrate how a user story moves through TestStory.ai's agentic pipeline directly into TestQuality.

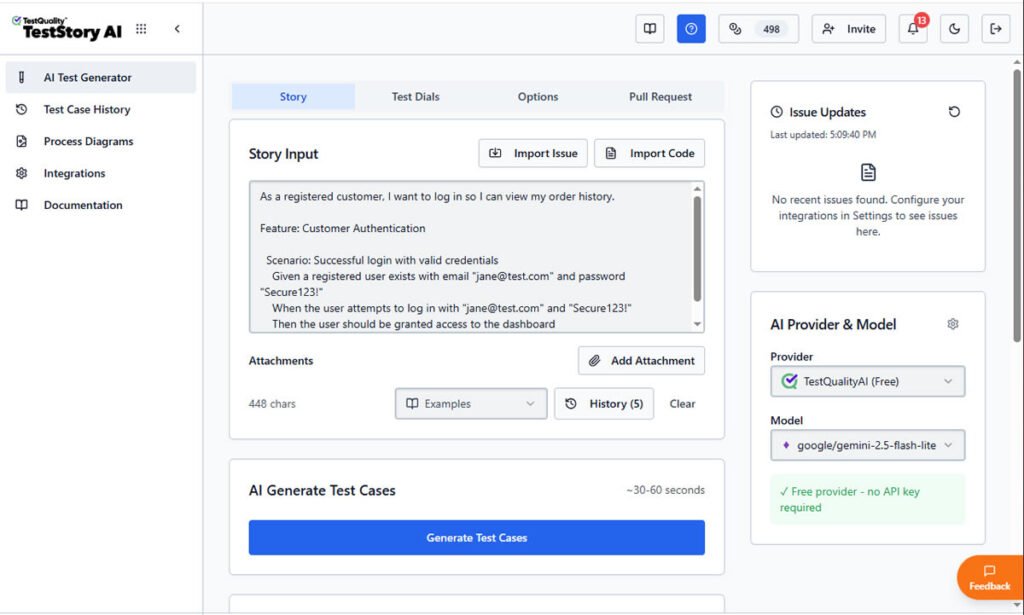

Step 1: Contextual Input for Gherkin Acceptance Criteria

The process begins by defining the human intent. In the main input field of TestStory.ai, we paste our primary user story:

"As a registered customer, I want to log in so I can view my order history."

At this stage, most traditional tools would simply store this requirement as a static text string. However, TestStory.ai treats it as a logical blueprint for generating Gherkin acceptance criteria. By adjusting the Test Dials, we can instruct the AI Agent to go beyond a simple "Happy Path."

We can guide it to think like a Senior SDET by automatically incorporating boundary values, security validations, and edge cases into the Gherkin scenarios.
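Gherkin's own Scenario Outline construct is one common way to express boundary values compactly. The Python sketch below shows how an outline expands into one concrete scenario per Examples row. The lockout policy (the account locks after 5 failed attempts) and the step wording are hypothetical, and this illustrates the Gherkin construct only, not how TestStory.ai generates scenarios internally.

```python
import re

OUTLINE = """\
Scenario Outline: Login after <failures> failed attempts
  Given a registered customer with <failures> recent failed login attempts
  When they enter valid credentials
  Then they should see <result>"""

# Hypothetical policy for illustration: the account locks after 5 failed attempts,
# so 4 and 5 sit on either side of the boundary.
EXAMPLES = [
    {"failures": "0", "result": "their order history"},
    {"failures": "4", "result": "their order history"},
    {"failures": "5", "result": "an account locked message"},
]

PLACEHOLDER_RE = re.compile(r"<(\w+)>")


def expand_outline(outline, examples):
    """Return one concrete Scenario per Examples row, filling each <placeholder>."""
    template = outline.replace("Scenario Outline:", "Scenario:", 1)
    # A KeyError means a placeholder has no matching column in the Examples row.
    return [PLACEHOLDER_RE.sub(lambda m: row[m.group(1)], template) for row in examples]


for scenario in expand_outline(OUTLINE, EXAMPLES):
    print(scenario, end="\n\n")
```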

Step 2: Generating Executable Gherkin Scenarios

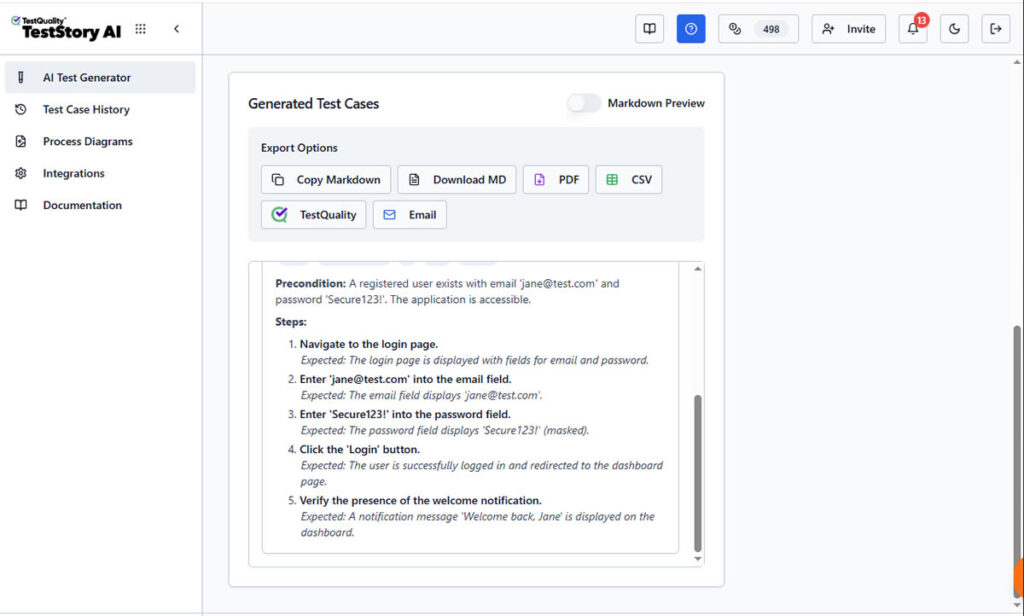

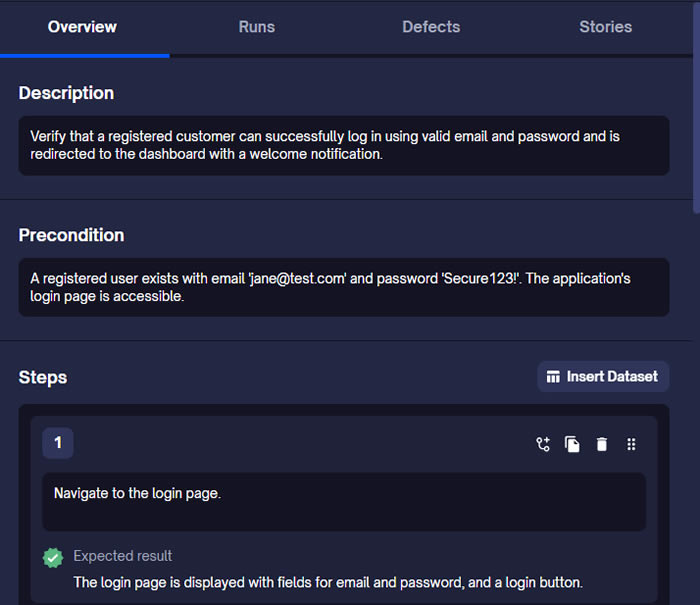

Once "Generate Test Cases" is triggered, TestStory.ai produces structured steps with clear Preconditions and Expected Results.

The AI parses the story to create structured acceptance criteria in Gherkin format. As shown in the screenshots, the platform doesn't just "write" steps; it builds an executable specification.

The AI identifies the necessary preconditions (Given), the specific user triggers (When), and the verifiable outcomes (Then). This ensures that your Gherkin acceptance criteria examples are declarative and behavior-focused, following 2026 industry best practices.

Unlike a static document, this output is ready for the "last mile" of testing. The interface provides immediate Export Options, allowing you to download the Gherkin as Markdown, PDF, or CSV, share it by email, or, most importantly, sync it directly to TestQuality as your test management stack.
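As an illustration of what a CSV export of Gherkin steps can look like, here is a short Python sketch using the standard `csv` module. The column layout (`scenario`, `keyword`, `step`) is an assumption made for this example; TestStory.ai's actual export format may differ.

```python
import csv
import io

# Assumed column layout for this illustration only.
COLUMNS = ["scenario", "keyword", "step"]


def scenarios_to_csv(scenarios):
    """Flatten {scenario name: [(keyword, step text), ...]} into CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(COLUMNS)
    for name, steps in scenarios.items():
        for keyword, text in steps:
            writer.writerow([name, keyword, text])
    return buffer.getvalue()


generated = {
    "Successful login": [
        ("Given", "a registered customer exists"),
        ("When", "they enter valid credentials"),
        ("Then", "they should see their order history"),
    ],
    "Wrong password": [
        ("Given", "a registered customer exists"),
        ("When", "they enter an incorrect password"),
        ("Then", "they should see an invalid credentials message"),
    ],
}
print(scenarios_to_csv(generated))
```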

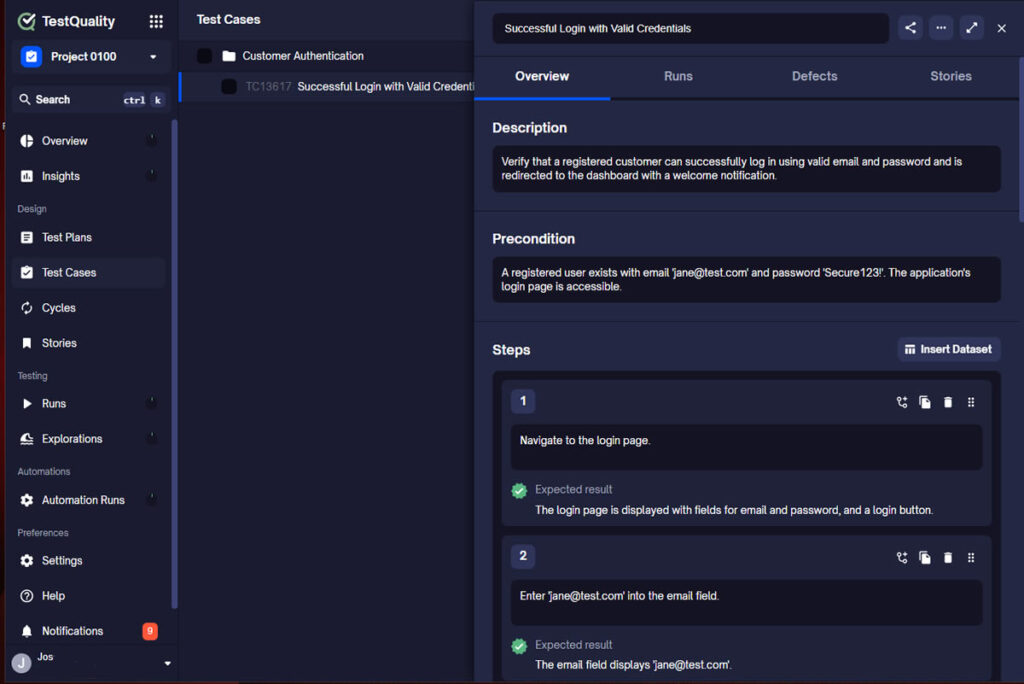

Step 3: Synchronizing Gherkin Scenarios to TestQuality

By selecting the TestQuality export button, we instantly synchronize the AI-generated scenario with our live TestQuality project and file it into the proper folder. As shown in the final view, the Gherkin story is now a formal Test Case within TestQuality, fully populated with:

- Traceability: Linked back to the original User Story requirement.

- Structured Steps: Ready for manual execution or automation mapping.

- Organization: Automatically filed into the correct "Customer Authentication" folder.

What was once a high-level user story is now a collection of formal, version-controlled test cases ready for execution.
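The traceability idea behind that synchronization can be sketched in a few lines of Python: map each requirement to the test cases linked to it and flag requirements with no coverage. The `LinkedTestCase` record and the requirement IDs are hypothetical and do not reflect TestQuality's actual data model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkedTestCase:
    """Hypothetical test-case record for illustration; not TestQuality's schema."""
    title: str
    folder: str
    requirement_id: str  # traceability link back to the originating user story


def traceability_matrix(requirement_ids, test_cases):
    """Map each requirement ID to the titles of the test cases linked to it."""
    matrix = {req: [] for req in requirement_ids}
    for case in test_cases:
        # Cases that point at an unknown requirement still show up, as their own key.
        matrix.setdefault(case.requirement_id, []).append(case.title)
    return matrix


def uncovered(matrix):
    """Requirements with no linked test cases."""
    return [req for req, titles in matrix.items() if not titles]


requirements = ["US-101", "US-102"]  # hypothetical requirement IDs
cases = [
    LinkedTestCase("Successful login", "Customer Authentication", "US-101"),
    LinkedTestCase("Locked account after failed attempts", "Customer Authentication", "US-101"),
]
matrix = traceability_matrix(requirements, cases)
print(matrix)
print(uncovered(matrix))
```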

When Do Traditional Scripts Still Make Sense?

The BDD vs scripted tests comparison isn't one-sided. Traditional scripts maintain clear advantages in specific contexts that teams shouldn't ignore.

Performance and Load Testing

Performance tests measure system behavior under specific technical conditions. Scripted tests that directly control request timing, connection pools, and resource utilization provide necessary precision. Expressing "simulate 10,000 concurrent users with 50ms think time" in Gherkin adds abstraction without adding value.
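For contrast, here is the kind of explicit control a performance script offers, as a minimal Python `asyncio` sketch. `fake_request` is a hypothetical placeholder for a real HTTP call, and the numbers are scaled far down from the 10,000-user example; in practice teams usually reach for dedicated load-testing tools such as k6, Gatling, or Locust. The point is that concurrency and think time are ordinary parameters here, with no natural-language layer in between.

```python
import asyncio
import random
import statistics
import time


async def fake_request():
    """Hypothetical stand-in for an HTTP call; swap in a real client in practice."""
    await asyncio.sleep(random.uniform(0.005, 0.015))


async def virtual_user(iterations, think_time_s, latencies):
    """One simulated user: request, record latency, pause for think time, repeat."""
    for _ in range(iterations):
        start = time.perf_counter()
        await fake_request()
        latencies.append(time.perf_counter() - start)
        await asyncio.sleep(think_time_s)


async def run_load(users, iterations, think_time_s):
    """Run all virtual users concurrently and return every request latency in seconds."""
    latencies = []
    await asyncio.gather(
        *(virtual_user(iterations, think_time_s, latencies) for _ in range(users))
    )
    return latencies


if __name__ == "__main__":
    # Scaled down from "10,000 concurrent users" so the sketch finishes quickly.
    results = asyncio.run(run_load(users=100, iterations=3, think_time_s=0.05))
    print(f"requests: {len(results)}")
    print(f"median latency: {statistics.median(results) * 1000:.1f} ms")
```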

Low-Level API Validation

API contract testing often requires validating specific response structures, header values, and error codes. While Gherkin can describe API behavior at a high level, detailed contract verification benefits from direct assertion code that maps closely to the technical specification.
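A minimal Python sketch of the kind of direct contract assertions described above. The required status, header, and field types are a hypothetical contract invented for illustration; a real suite would derive them from your API specification (for example an OpenAPI document) and would typically use a schema validator rather than hand-written checks.

```python
# Hypothetical contract for an order-details endpoint, invented for this example.
EXPECTED_STATUS = 200
REQUIRED_HEADERS = {"content-type": "application/json"}
REQUIRED_FIELDS = {"id": str, "total_cents": int, "items": list}


def contract_violations(status, headers, body):
    """Return every way a response breaks the contract; an empty list means it passes."""
    problems = []
    if status != EXPECTED_STATUS:
        problems.append(f"status {status} != {EXPECTED_STATUS}")
    # HTTP header names are case-insensitive, so compare them in lowercase.
    normalized = {name.lower(): value for name, value in headers.items()}
    for name, expected in REQUIRED_HEADERS.items():
        actual = normalized.get(name)
        # startswith() allows parameters such as "; charset=utf-8".
        if actual is None or not actual.startswith(expected):
            problems.append(f"header {name!r} is {actual!r}, expected {expected!r}")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in body:
            problems.append(f"missing field {field!r}")
            continue
        value = body[field]
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        wrong_bool = isinstance(value, bool) and expected_type is not bool
        if wrong_bool or not isinstance(value, expected_type):
            problems.append(
                f"field {field!r} is {type(value).__name__}, expected {expected_type.__name__}"
            )
    return problems


headers = {"Content-Type": "application/json; charset=utf-8"}
print(contract_violations(200, headers, {"id": "A-1", "total_cents": 4599, "items": []}))
print(contract_violations(404, headers, {"id": "A-1", "total_cents": "45.99"}))
```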

Exploratory Automation Scripts

Quick automation scripts written during exploratory testing sessions don't need stakeholder review or long-term maintenance. The overhead of structuring these as Gherkin scenarios exceeds their benefit when the goal is rapid investigation rather than permanent test assets.

Legacy System Integration

Systems with minimal documentation sometimes require test scripts that essentially document discovered behavior through assertion code. Converting these findings to Gherkin scenarios adds effort without improving the information capture.

The practical answer for most teams involves hybrid strategies. Use Gherkin for user-facing behavior validation where collaboration and documentation matter. Use traditional scripts for technical validation where precision and control matter.

Try It Now

See the Plan-Act-Verify loop in action in under 60 seconds.

Paste any user story into TestStory.ai and watch the orchestration layer instantly generate structured, Gherkin-formatted test cases covering happy paths, edge cases, and the failure scenarios your team would typically miss. Results sync directly into TestQuality for immediate execution and tracking. No account required to start.

No credit card required on either platform.

The Future of AI in Test Script Writing

The trajectory of AI testing points toward increasingly autonomous QA workflows. Rather than AI assisting human testers, we're moving toward AI agents that execute complete testing workflows with human oversight focused on strategic decisions rather than tactical execution.

This evolution favors test formats that AI can confidently manipulate. Gherkin's structure provides the semantic richness that enables AI to understand intent, not just syntax. As these tools mature, the gap between what AI can do with Gherkin versus traditional scripts will widen.

Teams investing in test infrastructure today should consider how their format choices position them for this future. A test suite that AI can extend, maintain, and analyze autonomously delivers compounding returns as tooling improves.

For teams exploring AI-powered test generation, free AI test case builders demonstrate how quickly natural language requirements convert to structured Gherkin scenarios. The barrier to trying behavior-driven approaches has never been lower.

FAQ

What is the main difference between Gherkin and traditional test scripts?

Gherkin uses a declarative, natural language syntax (Given-When-Then) that describes expected system behavior from a user perspective. Traditional test scripts use procedural code that specifies exactly how to interact with system components. Gherkin focuses on what the software should do, while traditional scripts focus on how to test it.

Can AI generate traditional test scripts as effectively as Gherkin scenarios?

AI models produce higher-quality output when generating Gherkin scenarios because the format's structured English syntax aligns with how large language models process natural language. Traditional script generation requires AI to produce framework-specific code with correct selectors and assertions, which introduces more opportunities for errors. Teams report needing less human review and correction when generating Gherkin compared to procedural code.

Should my team switch entirely from traditional scripts to Gherkin?

A hybrid approach often delivers the best results. Use Gherkin for user-facing functionality where stakeholder collaboration, living documentation, and AI-assisted generation add value. Retain traditional scripts for performance testing, low-level API validation, and technical scenarios where precise control matters more than readability. The goal is matching the format to the testing need rather than enforcing uniformity.

How does Gherkin improve test maintenance over time?

Gherkin separates business-readable scenarios from technical implementation in step definitions. When UI elements change, only step definitions need updates while scenarios remain stable. This isolation reduces maintenance scope and enables AI tools to handle routine updates automatically. Traditional scripts often embed implementation details throughout, requiring changes across multiple files when the application evolves.

Start Building Smarter Tests Today

Choosing between Gherkin vs traditional testing ultimately depends on your team's priorities, existing infrastructure, and willingness to adopt new workflows. Both approaches can produce reliable software when implemented thoughtfully.

However, the momentum clearly favors Gherkin for teams investing in AI-augmented QA. The format's natural language structure, semantic clarity, and separation of concerns align precisely with how modern AI tools process and generate test content. Teams report improved collaboration, reduced maintenance overhead, and faster feedback cycles after adopting BDD practices.

Many organizations successfully migrate portions of their test suites from procedural scripts to behavior-driven specifications as they recognize the benefits. Starting with high-visibility features that benefit from stakeholder collaboration often demonstrates value quickly.

TestQuality provides an AI-powered QA platform that unifies Gherkin-based testing with comprehensive test management, seamless GitHub and Jira integration, and QA agents that assist throughout the testing lifecycle. Start your free trial and experience how structured behavior specifications transform your testing workflow.