AI test case generators are spreading across QA teams, with Gartner predicting that 80% of engineers will need to upskill by 2027.

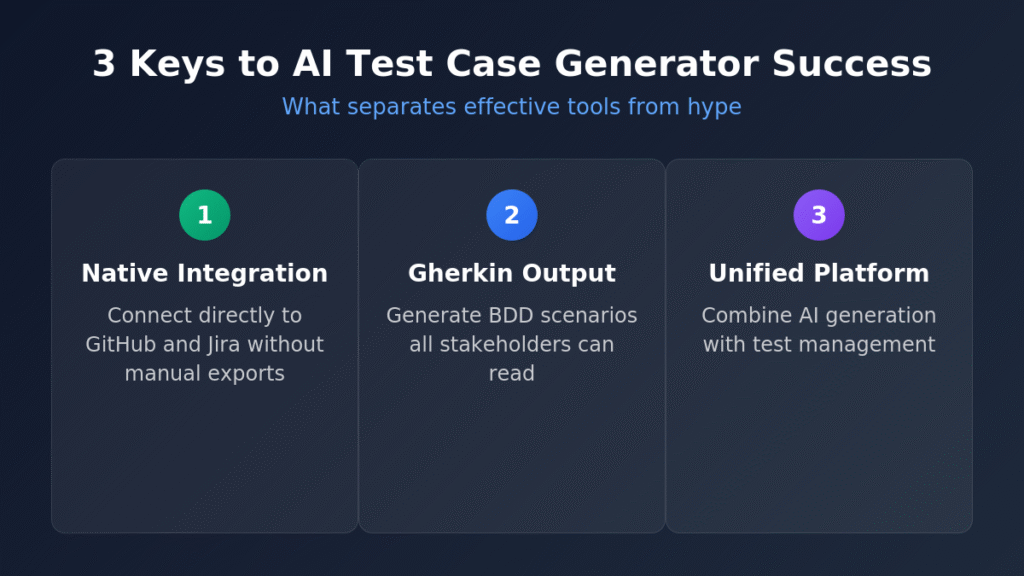

- The best AI test case builders combine natural language processing with deep DevOps integration, outputting tests in formats like Gherkin that both technical and business stakeholders understand.

- Free and freemium options have matured, making AI-powered test generation accessible to teams of all sizes without enterprise-level budgets.

- Integration depth matters more than feature count; tools that connect natively to GitHub and Jira eliminate workflow friction.

Prioritize platforms that unify AI test generation with test management rather than tools that fragment your QA workflow.

Writing test cases manually has always been the bottleneck in software delivery. You spend hours translating requirements into structured scenarios, only to watch those same tests break the moment someone refactors a login flow or redesigns a checkout page. According to McKinsey, 88% of organizations now deploy AI in at least one business function. For QA teams, that adoption is accelerating around one capability in particular: intelligent test case generation that converts requirements into executable scenarios without the tedious manual translation.

An AI test case generator interprets your user stories, acceptance criteria, and feature descriptions to automatically produce comprehensive test coverage. The result is more thorough testing in less time, with scenarios that often catch boundary conditions human testers overlook. For teams already stretched thin, that efficiency gain isn't optional anymore.

What Makes an AI Test Case Generator Worth Using in 2026?

The market for AI testing tools has exploded, which means quality varies wildly. Some platforms bolt AI features onto legacy test automation and call it innovation. Others built intelligence into their architecture from day one. Understanding the difference saves you from expensive migrations later.

Effective AI test case builders share several characteristics that separate genuine productivity gains from marketing hype. First, they understand context. A tool that generates generic login tests for every authentication feature isn't useful. The best generators analyze your specific requirements, identify the unique business logic, and produce scenarios that actually validate what matters for your application.

Second, modern tools output tests in formats teams already use. Gherkin syntax has become the lingua franca of behavior-driven development because it bridges technical implementation and business requirements. When your AI test case generator outputs Given-When-Then scenarios, product managers can review them, developers can implement step definitions, and QA engineers can execute them. Everyone speaks the same language.

Third, integration depth matters more than feature count. A brilliant AI engine trapped inside a standalone tool creates friction. The platforms gaining traction in 2026 connect directly to GitHub, Jira, and CI/CD pipelines without requiring manual import/export cycles. When requirements change in your issue tracker, your test cases should automatically reflect those changes.

How Do AI Test Case Builders Transform QA Workflows?

The workflow transformations from adopting test case generation AI compound over time in ways that productivity numbers alone don't capture.

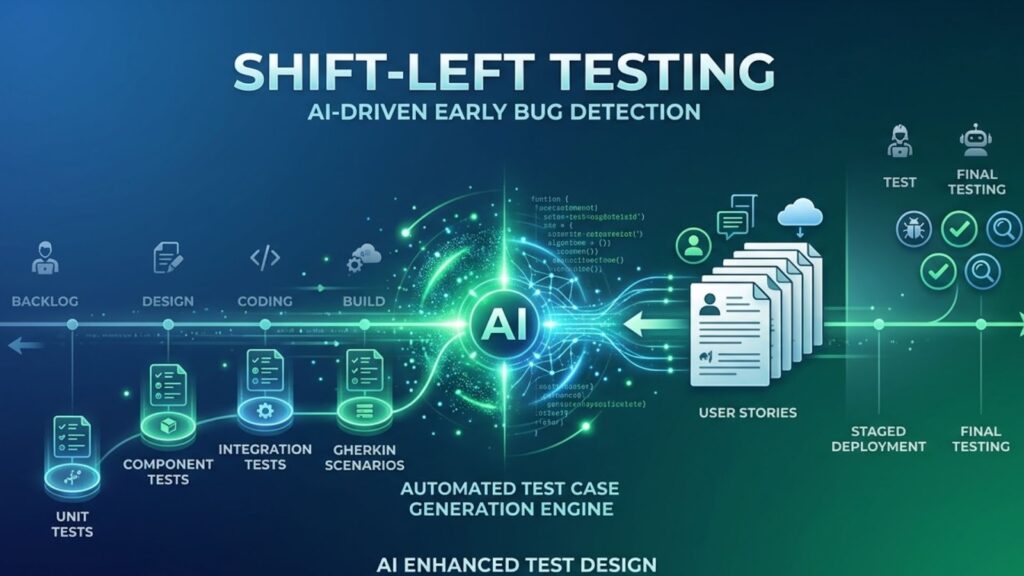

Shifting from Reactive to Proactive Testing

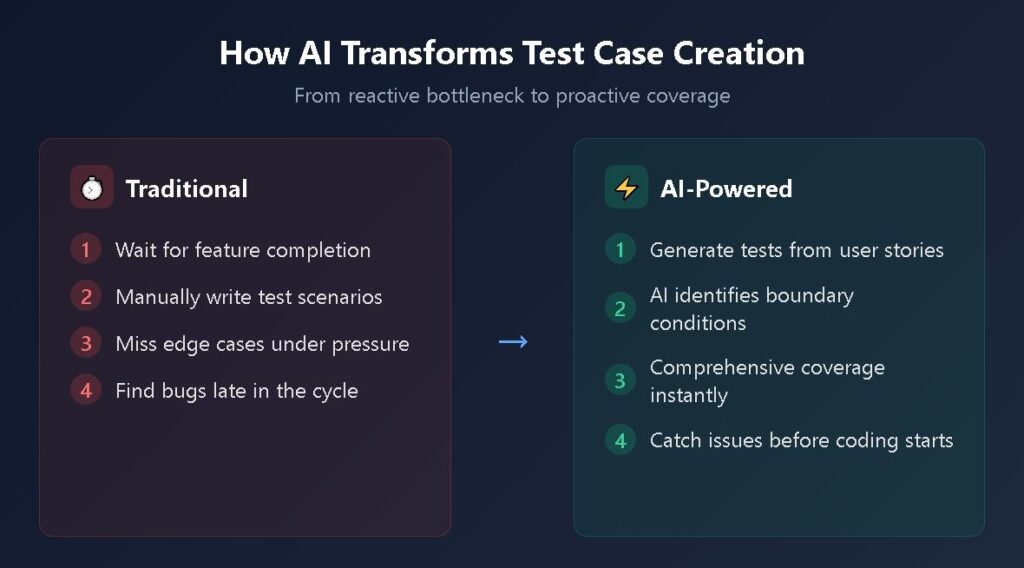

Traditional QA happens after development completes a feature. Testers receive a build, explore functionality, document bugs, and hope developers have time to fix issues before release. AI test generation flips this sequence. When product managers write user stories, an AI test case generator can immediately produce test scenarios. Developers see expected behaviors before writing code. Edge cases surface during planning rather than in production.

This shift aligns perfectly with shift-left testing principles. Defects caught during requirements review cost a fraction as much to fix as bugs discovered in production. Teams that generate test cases from user stories at sprint planning start development with clearer expectations and fewer surprise failures late in the cycle.

Eliminating Coverage Gaps

Human testers focus on scenarios they understand and use cases they've encountered before. That's natural but limiting. AI tools systematically analyze requirements to identify boundary conditions, input combinations, and error handling paths that manual approaches miss. A login feature test suite generated by AI might include rate-limiting scenarios, session timeout handling, password complexity validation, and concurrent session management that a rushed tester might skip.
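To make "boundary conditions" concrete, here is a minimal Python sketch of boundary value analysis, one systematic technique a generator (or a careful human) can apply. It assumes a hypothetical password policy of 8 to 64 characters; the limits and the `is_valid_length` stand-in are illustrative, not taken from any tool.

```python
# Boundary value analysis for a length rule, sketched for a hypothetical
# password policy of 8 to 64 characters (the limits are assumptions).
MIN_LEN, MAX_LEN = 8, 64


def boundary_lengths(lo: int, hi: int) -> list[int]:
    """Return the classic probes: just below, at, and just above each limit."""
    return [lo - 1, lo, lo + 1, hi - 1, hi, hi + 1]


def is_valid_length(length: int) -> bool:
    """Stand-in for the real validation rule under test."""
    return MIN_LEN <= length <= MAX_LEN


for n in boundary_lengths(MIN_LEN, MAX_LEN):
    expected = "accept" if is_valid_length(n) else "reject"
    print(f"password of {n} chars -> expect {expected}")
```

Six probes cover both edges of the rule, which is where off-by-one mistakes such as `<` versus `<=` tend to hide.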

The coverage improvement is especially pronounced for complex features with numerous interdependencies. Checkout flows, multi-step wizards, and permission-based interfaces have exponential scenario combinations. AI handles that complexity without fatigue.
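The combinatorial growth is easy to see in code. This sketch uses hypothetical checkout parameters; the point is only that the number of full combinations is the product of each parameter's option count, so every new parameter multiplies the total.

```python
import itertools
import math

# Hypothetical checkout options; parameter names and values are illustrative.
checkout_options = {
    "payment": ["card", "paypal", "gift_card", "invoice"],
    "shipping": ["standard", "express", "pickup"],
    "coupon": [True, False],
    "account": ["guest", "registered"],
    "currency": ["USD", "EUR", "GBP"],
}

# Every full combination of one value per parameter (the Cartesian product).
all_cases = list(itertools.product(*checkout_options.values()))
print(len(all_cases))  # 144, i.e. 4 * 3 * 2 * 2 * 3

# The total is the product of the option counts; adding one more
# three-valued parameter would triple it.
assert len(all_cases) == math.prod(len(v) for v in checkout_options.values())
```

In practice, teams rarely execute every combination; techniques such as pairwise testing select a much smaller subset that still covers every pair of parameter values.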

Reducing Maintenance Burden

Test maintenance often consumes more time than test creation. When UI elements change, locators break. When workflows evolve, scenarios become obsolete. Modern AI test case generators address this through self-healing capabilities and intelligent adaptation. Rather than requiring manual updates for every application change, these tools automatically suggest modifications, update locators, and flag scenarios that need human review.
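Vendors implement self-healing differently, and the details are usually proprietary. The sketch below shows only the general idea under simplifying assumptions: a page is modeled as a list of attribute dictionaries, a locator tries a primary attribute and then ranked fallbacks, and every fallback hit is logged for human review. `HealingLocator` and the attribute names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Toy page model: each element is a dict of attributes. Real tools query a live
# DOM; this model and the attribute names below are simplifying assumptions.
Element = dict[str, str]


@dataclass
class HealingLocator:
    """Try a primary locator, then ranked fallbacks, and log every heal for review."""
    primary: tuple[str, str]            # (attribute, value), e.g. ("id", "login-btn")
    fallbacks: list[tuple[str, str]]    # alternatives in order of preference
    review_log: list[str] = field(default_factory=list)

    def find(self, page: list[Element]) -> Optional[Element]:
        for attr, value in [self.primary, *self.fallbacks]:
            matches = [el for el in page if el.get(attr) == value]
            if len(matches) == 1:  # accept only an unambiguous match
                if (attr, value) != self.primary:
                    # Healed: record it so a human can confirm the new locator.
                    self.review_log.append(
                        f"{self.primary} not found; healed via {(attr, value)}"
                    )
                return matches[0]
        return None  # nothing matched unambiguously; let the caller fail the step


# After a redesign the button's id changed, but its test hook and label did not.
page = [{"id": "signin-button", "data-test": "login", "text": "Log in"}]
locator = HealingLocator(
    primary=("id", "login-btn"),
    fallbacks=[("data-test", "login"), ("text", "Log in")],
)
button = locator.find(page)
print(button["id"] if button else "not found")  # signin-button
print(locator.review_log)
```

Logging the heal instead of silently passing is the design choice that matters: it keeps a human in the loop for the "flag scenarios that need review" step.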

Top AI Test Case Generators for QA Teams in 2026

The following tools represent the current state of the art in intelligent test generation. Each approaches the problem differently, making the right choice dependent on your existing tech stack and team composition.

TestQuality with TestStory.ai

TestQuality combines AI-powered test generation through TestStory.ai with unified test management designed for modern DevOps workflows. TestStory.ai converts user stories, requirements, and feature descriptions into comprehensive test cases in seconds, outputting in Gherkin/BDD format that integrates with existing test management. The platform's dual-native integration with both GitHub and Jira eliminates the manual export/import cycles that plague standalone generators. QA Agents assist throughout the workflow, from test creation through execution and analysis, providing an active intelligence layer rather than passive documentation.

Best for: Teams wanting AI test generation unified with test management in a single platform, especially those using GitHub or Jira workflows.

Testsigma

Testsigma converts plain English descriptions into automated test scripts across web, mobile, and API platforms. The natural language processing engine interprets instructions like "verify the shopping cart updates when removing an item" and generates executable test code. Teams without deep automation expertise find this accessibility valuable. The platform includes self-healing for locator changes and integrates with common CI/CD tools.

Common among: Teams transitioning from manual to automated testing without dedicated automation engineers.

Katalon Platform

Katalon combines AI test generation with an automation suite covering web, API, mobile, and desktop testing. The AI copilot assists with test case creation from requirements while the broader platform handles execution, reporting, and maintenance. Enterprise teams appreciate the unified approach, though smaller organizations sometimes find the feature set overwhelming for their needs.

Common among: Large organizations wanting an all-in-one solution for test generation through execution.

Qodo Gen

Qodo Gen, from Qodo (formerly CodiumAI), focuses specifically on developer-written unit and integration tests. The tool analyzes function behavior, examines implementation logic, and generates test suites covering meaningful scenarios rather than trivial assertions. For teams practicing test-driven development or building test coverage for existing codebases, Qodo's code-aware approach produces higher quality results than generic generators.

Common among: Development teams writing unit tests in languages like Python, JavaScript, and Java.

CloudQA

CloudQA generates test cases from live URLs, UI mockups, or wireframes using Google Cloud Vision AI for element recognition. The visual approach suits teams that work from design specifications before development completes. Output includes structured test documentation ready for manual execution or automation framework integration.

Common among: Teams with strong design processes who want to generate tests early from mockups.

How Do These AI Test Case Generators Compare?

Understanding the differences between AI test generation approaches helps teams select tools that match their specific workflow requirements and technical environment.

| Tool | Primary Approach | Output Format | Integration Strength | Free Tier |
| --- | --- | --- | --- | --- |
| TestQuality + TestStory.ai | User story to Gherkin | BDD/Gherkin scenarios | GitHub & Jira dual-native | Yes |
| Testsigma | Natural language to scripts | Executable automation | CI/CD pipelines | Limited |
| Katalon | AI-assisted full lifecycle | Multiple formats | Enterprise DevOps | Basic |
| Qodo Gen | Code analysis to unit tests | Native test frameworks | IDEs | Yes |
| CloudQA | Visual AI from mockups | Test documentation | Design tools | Yes |

What Features Should You Prioritize in an AI Test Case Builder?

Evaluating tools requires looking beyond demo scenarios to real-world usage patterns. Consider these criteria before committing to any platform.

Native DevOps Integration: The generator should connect directly to your issue tracking and source control. If you live in GitHub and Jira, your testing tools should speak those languages fluently. Manual export/import cycles defeat the efficiency gains AI provides. Platforms with dual-native integration eliminate context switching between test generation and test management.

BDD and Gherkin Support: Writing tests in Gherkin syntax keeps scenarios readable for non-technical stakeholders while remaining executable by automation frameworks. Tools that output Given-When-Then scenarios integrate smoothly with Cucumber, SpecFlow, and similar BDD frameworks.
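For readers new to BDD tooling, the sketch below mimics how runners such as Cucumber connect Gherkin text to code: each step definition is registered under a pattern, and captured groups become arguments. The `step` decorator and `run_step` function are a toy registry written for illustration, not the API of Cucumber, SpecFlow, or any Python BDD library.

```python
import re
from typing import Callable

# Toy step registry: (compiled pattern, step function) pairs.
STEPS: list[tuple[re.Pattern[str], Callable[..., None]]] = []
context: dict[str, object] = {}  # shared state between steps of one scenario


def step(pattern: str):
    """Register the decorated function as the definition for a step pattern."""
    def register(func: Callable[..., None]) -> Callable[..., None]:
        STEPS.append((re.compile(pattern), func))
        return func
    return register


@step(r"a user with (valid|invalid) credentials")
def given_user(kind: str) -> None:
    context["valid"] = kind == "valid"


@step(r"the user logs in")
def when_login() -> None:
    context["logged_in"] = context["valid"]


@step(r"the user (sees|does not see) their order history")
def then_history(outcome: str) -> None:
    assert context["logged_in"] == (outcome == "sees")


def run_step(line: str) -> None:
    # Strip the Gherkin keyword, then dispatch to the first fully matching definition.
    text = re.sub(r"^(Given|When|Then|And|But)\s+", "", line.strip())
    for pattern, func in STEPS:
        match = pattern.fullmatch(text)
        if match:
            func(*match.groups())
            return
    raise LookupError(f"No step definition matches: {text!r}")


for line in ["Given a user with valid credentials",
             "When the user logs in",
             "Then the user sees their order history"]:
    run_step(line)
print("scenario passed")
```

Because the Gherkin text only names behaviors, the same three lines stay valid even if the step functions behind them are rewritten.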

Edge Case Detection: The whole point of AI generation is catching scenarios humans miss. Evaluate whether the tool identifies boundary conditions, negative test cases, and error handling paths automatically rather than only translating happy paths you already documented.
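One way to check edge case coverage rather than take it on faith is to tag generated scenarios by category and count them. This sketch assumes a team convention of `@happy`, `@negative`, and `@boundary` tags (Gherkin supports tags, but these particular names are not standard) and reports any category with no scenarios.

```python
# Assumed team convention for scenario categories (not a Gherkin standard).
EXPECTED = {"@happy", "@negative", "@boundary"}


def tag_coverage(feature_text: str) -> dict[str, int]:
    """Count scenarios carrying each expected tag; feature-level tags are inherited.

    Only "Scenario:" and "Scenario Outline:" headers are recognized in this sketch.
    """
    counts = {tag: 0 for tag in sorted(EXPECTED)}
    feature_tags: list[str] = []
    pending: list[str] = []
    for raw in feature_text.splitlines():
        line = raw.strip()
        if line.startswith("@"):
            pending.extend(line.split())
        elif line.startswith("Feature:"):
            feature_tags, pending = pending, []
        elif line.startswith(("Scenario:", "Scenario Outline:")):
            for tag in set(feature_tags + pending):
                if tag in counts:
                    counts[tag] += 1
            pending = []
        elif line.startswith("Examples:"):
            pending = []  # tags on Examples blocks are ignored here
    return counts


def missing_categories(feature_text: str) -> list[str]:
    return [tag for tag, n in tag_coverage(feature_text).items() if n == 0]


sample = """
Feature: Customer login
  @happy
  Scenario: Registered customer logs in
  @negative
  Scenario: Wrong password is rejected
"""
print(missing_categories(sample))  # ['@boundary']
```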

Unified Test Management: Standalone generators create workflow fragmentation. The most effective platforms combine AI test generation with test management capabilities, keeping generated scenarios, manual tests, and automated results in a single system of record.

How Does Test Case Generation AI Handle Gherkin and BDD?

Teams practicing behavior-driven development need generators that understand the methodology's conventions. Effective AI test case generators for BDD workflows do more than format output as Given-When-Then statements. They produce scenarios that follow best practices: declarative rather than imperative, focused on single behaviors, using domain language that stakeholders recognize.

The connection between AI generation and BDD testing matters because poorly written Gherkin creates maintenance headaches regardless of who wrote it. AI tools trained on quality examples produce scenarios that describe behaviors without coupling to implementation details. When your login test says "Given a user with valid credentials" rather than "Given I navigate to the login page and enter the username in the username field," you've created a durable specification that survives UI changes.
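A crude lint can help reviewers catch imperative steps like the navigate-and-enter example above before they reach the suite. The word list here is an assumption to tune for your project; it flags candidates for review rather than proving a step is bad.

```python
import re

# Words that usually signal UI mechanics rather than behavior (an assumed list).
UI_TERMS = re.compile(
    r"\b(click|clicks|navigate|navigates|type|types|enter|enters|field|button|page|dropdown)\b",
    re.IGNORECASE,
)


def imperative_steps(steps: list[str]) -> list[str]:
    """Return the steps that mention UI mechanics and deserve a second look."""
    return [s for s in steps if UI_TERMS.search(s)]


declarative = "Given a user with valid credentials"
imperative = ("Given I navigate to the login page and enter the username "
              "in the username field")
print(imperative_steps([declarative, imperative]))
```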

Modern AI generators also understand scenario outlines and data-driven testing patterns. Instead of producing dozens of nearly identical scenarios for different input combinations, they generate parameterized templates that efficiently exercise multiple cases. This approach keeps test suites manageable as applications grow.
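Scenario Outline expansion itself is mechanical: each row of the Examples table fills the `<placeholder>` names in the template steps, yielding one concrete scenario per row. The sketch below reproduces that substitution in Python; the outline and its password-length rows are hypothetical.

```python
import re

# Template steps of a hypothetical Scenario Outline.
OUTLINE_STEPS = [
    "Given a user whose password is <length> characters long",
    "When they register",
    "Then the password is <result>",
]
# The Examples table: one dict per row, keyed by column name.
EXAMPLES = [
    {"length": "7", "result": "rejected"},
    {"length": "8", "result": "accepted"},
    {"length": "64", "result": "accepted"},
    {"length": "65", "result": "rejected"},
]


def expand(steps: list[str], row: dict[str, str]) -> list[str]:
    # Replace every <name> with the row's value for that column.
    return [re.sub(r"<(\w+)>", lambda m: row[m.group(1)], s) for s in steps]


for row in EXAMPLES:
    print(expand(OUTLINE_STEPS, row)[0])
```

One three-step template plus a four-row table stands in for four separate scenarios, and adding a case means adding one row.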

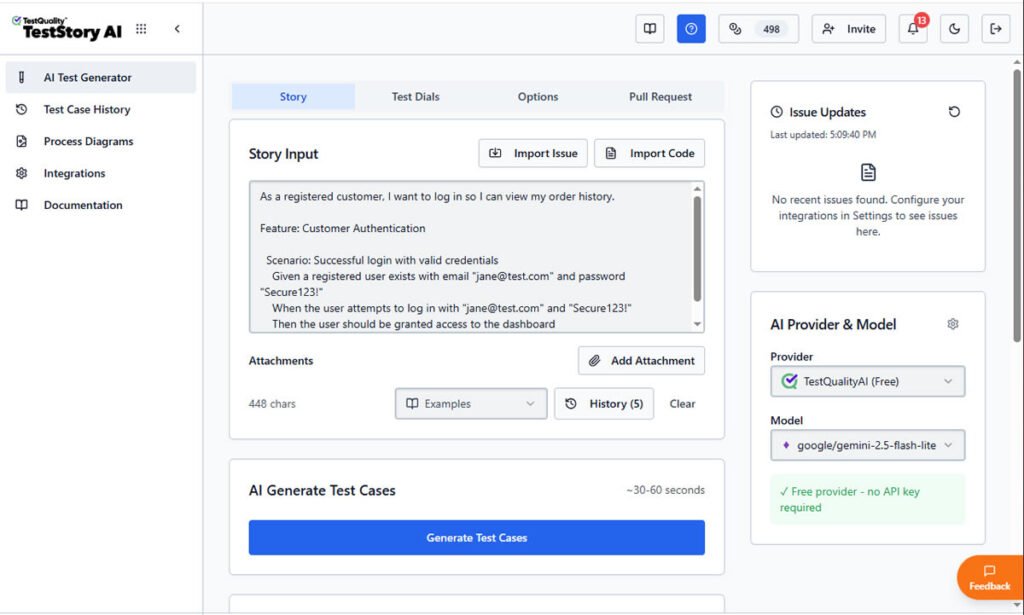

How Does TestStory.ai Transform a User Story into an Executable Reality?

To understand the impact of an AI-powered QA workflow, let’s trace a standard requirement through the platform. We'll start with the common, yet often under-specified, authentication story shown above and move it through TestStory.ai's agentic pipeline directly into TestQuality.

Step 1: Contextual Input for Gherkin Acceptance Criteria

The process begins by defining the human intent. In the main input field of TestStory.ai, we paste our user story:

"As a registered customer, I want to log in so I can view my order history."

At this stage, most traditional tools would simply store this requirement as a static text string. However, TestStory.ai treats it as a logical blueprint for generating Gherkin acceptance criteria. By adjusting the Test Dials, we can instruct the AI Agent to go beyond a simple "Happy Path."

We can guide it to think like a Senior SDET by automatically incorporating boundary values, security validations, and edge cases into the Gherkin scenarios.
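Treating a story as a blueprint starts with separating its parts. The regex-based sketch below splits a story in the common "As a ..., I want ..., so that ..." form into role, goal, and benefit. It is an illustrative assumption about how such parsing could work, not a description of TestStory.ai's internals.

```python
import re
from typing import NamedTuple

# Illustrative pattern for the "As a ..., I want ..., so (that) ..." story form.
STORY = re.compile(
    r"As an? (?P<role>.+?), I want (?:to )?(?P<goal>.+?),? so (?:that )?(?P<benefit>.+?)\.?$",
    re.IGNORECASE,
)


class Story(NamedTuple):
    role: str
    goal: str
    benefit: str


def parse_story(text: str) -> Story:
    # Tolerate surrounding whitespace and quotation marks.
    match = STORY.match(text.strip().strip('"'))
    if match is None:
        raise ValueError(f"Not in 'As a ..., I want ..., so that ...' form: {text!r}")
    return Story(**match.groupdict())


story = parse_story("As a registered customer, I want to log in so I can view my order history.")
print(story)
```

Each part suggests different tests: the role drives preconditions, the goal drives the trigger, and the benefit names the outcome to verify.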

Step 2: Generating Executable Gherkin Scenarios

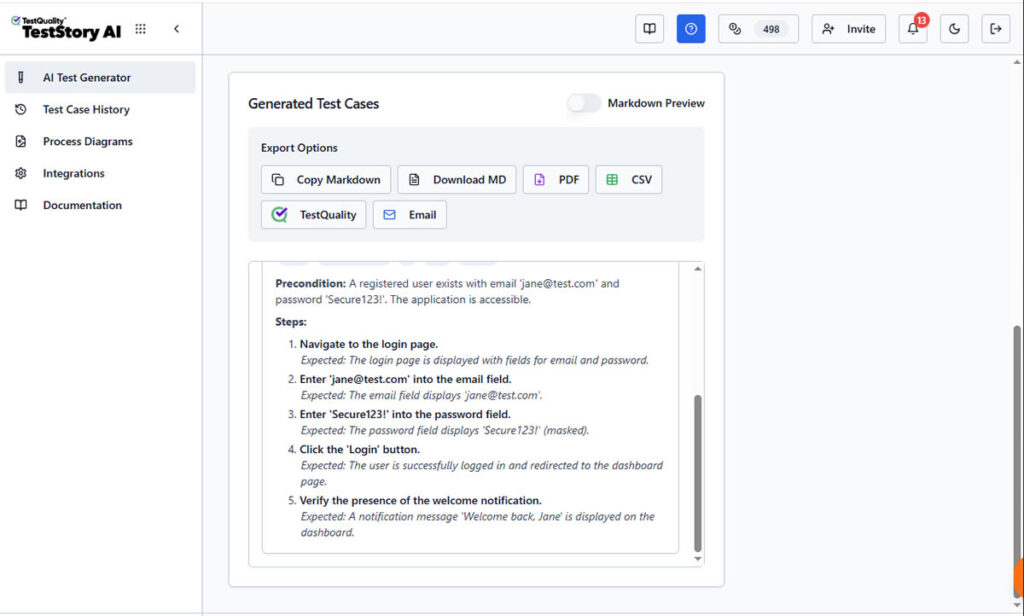

Once "Generate Test Cases" is triggered, TestStory.ai produces structured steps with clear Preconditions and Expected Results.

The AI parses the story into structured Gherkin acceptance criteria. As shown in the screenshots, the platform doesn't just "write" steps; it builds an executable specification.

The AI identifies the necessary preconditions (Given), the specific user triggers (When), and the verifiable outcomes (Then). This structure keeps the resulting Gherkin acceptance criteria declarative and behavior-focused, in line with current BDD best practices.
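The Given/When/Then mapping follows a simple Gherkin rule: `And` and `But` steps take the keyword of the step before them. The sketch below groups a hypothetical login scenario (not actual TestStory.ai output) into preconditions, triggers, and outcomes.

```python
from typing import Optional


def group_steps(lines: list[str]) -> dict[str, list[str]]:
    """Group step text under Given/When/Then; And/But inherit the previous keyword."""
    groups: dict[str, list[str]] = {"Given": [], "When": [], "Then": []}
    current: Optional[str] = None
    for line in lines:
        keyword, _, text = line.strip().partition(" ")
        if keyword in groups:
            current = keyword
        elif keyword in ("And", "But"):
            if current is None:
                raise ValueError(f"{keyword!r} step has no preceding Given/When/Then")
        else:
            raise ValueError(f"Unrecognized step keyword: {keyword!r}")
        groups[current].append(text)
    return groups


scenario = [
    "Given a registered customer with valid credentials",
    "And the customer has at least one past order",
    "When the customer logs in",
    "Then the customer's order history is displayed",
    "But no other customer's orders are visible",
]
for keyword, steps in group_steps(scenario).items():
    print(keyword, steps)
```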

Unlike a static document, this output is ready for the "last mile" of testing. The interface provides immediate Export Options: you can download the Gherkin as Markdown, PDF, or CSV, share it by email, or, most importantly, sync it directly to TestQuality as your test management platform.
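For teams that take the CSV route into another tool, the conversion can be as simple as one row per step. The column names in this sketch are hypothetical; TestStory.ai's actual CSV layout may differ, so check it against your target tool's import format.

```python
import csv
import io


def scenario_to_csv(title: str, steps: list[str]) -> str:
    """Flatten one scenario into CSV text: a header row, then one row per step."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["Test Case", "Step #", "Step"])  # hypothetical column names
    for number, step in enumerate(steps, start=1):
        writer.writerow([title, number, step])
    return buffer.getvalue()


output = scenario_to_csv("Registered customer views order history", [
    "Given a registered customer with valid credentials",
    "When the customer logs in",
    "Then the customer's order history is displayed",
])
print(output)
```

Python's `csv` module quotes any field containing a comma, quote, or line break, so steps with punctuation survive the round trip.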

Step 3: Synchronizing Gherkin Scenarios to TestQuality

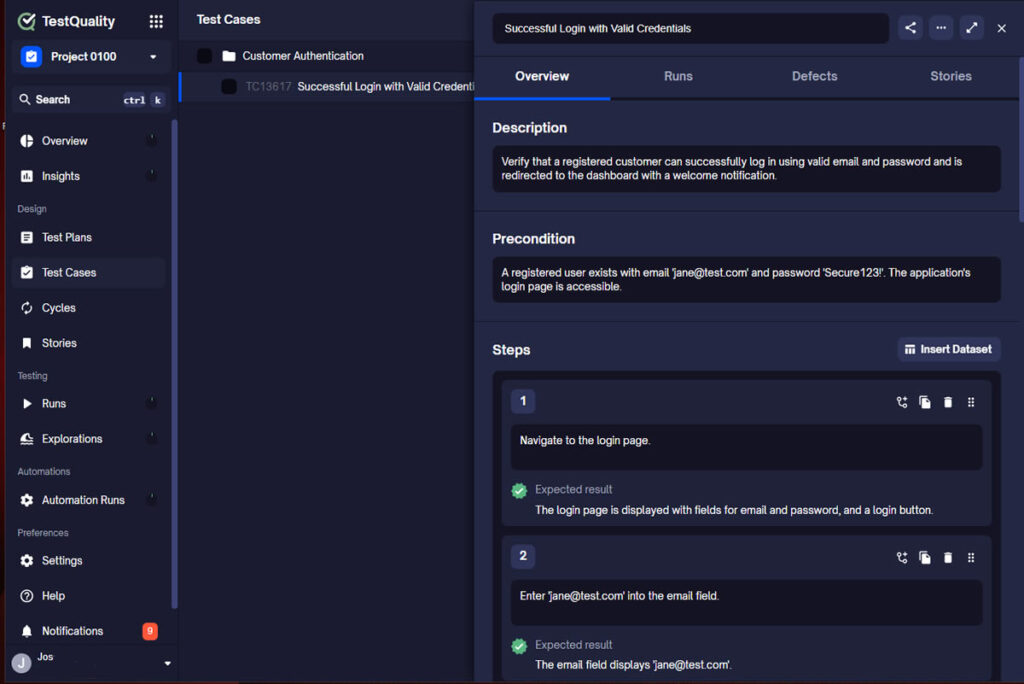

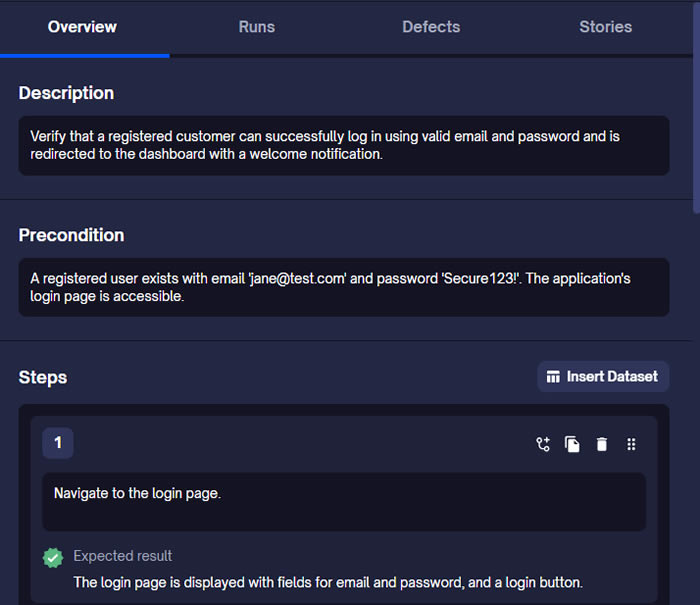

Selecting the TestQuality export button instantly synchronizes the AI-generated scenario with our live TestQuality project, complete with the proper folder organization. As shown in the final view, the Gherkin scenario is now a formal test case within TestQuality, fully populated with:

- Traceability: Linked back to the original User Story requirement.

- Structured Steps: Ready for manual execution or automation mapping.

- Organization: Automatically filed into the correct "Customer Authentication" folder.
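Traceability is what makes the synced cases auditable: because each case records its source requirement, untested requirements can be found mechanically. The dataclass, field names, and requirement IDs below are hypothetical and do not reflect TestQuality's data model or API.

```python
from dataclasses import dataclass, field


@dataclass
class TraceableCase:
    """A test case that remembers which requirement it was generated from."""
    title: str
    requirement_id: str  # link back to the originating user story
    folder: str
    steps: list[str] = field(default_factory=list)


def untested_requirements(requirement_ids: list[str], cases: list[TraceableCase]) -> list[str]:
    """Return requirements that no test case links back to, in input order."""
    covered = {case.requirement_id for case in cases}
    return [rid for rid in requirement_ids if rid not in covered]


cases = [
    TraceableCase("Registered customer logs in", "STORY-101", "Customer Authentication"),
    TraceableCase("Wrong password is rejected", "STORY-101", "Customer Authentication"),
]
print(untested_requirements(["STORY-101", "STORY-102"], cases))  # ['STORY-102']
```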

What was once a high-level user story is now a collection of formal, version-controlled test cases ready for execution.

FAQ

What's the difference between an AI test case generator and traditional test automation?

Traditional test automation executes tests you've already written. An AI test case generator creates the test scenarios itself by analyzing requirements, user stories, or application behavior. You still need execution infrastructure, but the time-consuming work of documenting scenarios shifts from humans to AI.

Can AI test case generators completely replace manual test case writing?

Not entirely. AI excels at systematic coverage, edge case identification, and repetitive scenario generation. Complex business logic, exploratory testing insights, and scenarios requiring deep domain expertise still benefit from human judgment. The best approach combines AI-generated baseline coverage with human-crafted scenarios for critical functionality.

How do AI test case builders handle Gherkin and BDD workflows?

Quality AI generators output scenarios in Given-When-Then format that integrates directly with BDD frameworks like Cucumber. The best tools produce declarative, behavior-focused scenarios rather than imperative scripts coupled to implementation details, creating durable specifications that business stakeholders can review and validate.

Accelerate Your Testing with AI-Powered QA

The gap between teams using AI test case builders and those relying on manual approaches keeps widening. According to Gartner, 80% of the engineering workforce will need to upskill through 2027 as generative AI reshapes software engineering roles. Teams that wait will find themselves competing against organizations shipping faster with higher quality.

TestQuality combines AI-powered test generation through TestStory.ai with unified test management designed for modern DevOps workflows. Our free AI test case builder generates Gherkin scenarios from user stories instantly, while native GitHub and Jira integrations keep everything synchronized without manual effort. QA Agents assist throughout the workflow, from test creation through execution and analysis. Start your free trial and experience what an AI-powered QA platform delivers for teams serious about shipping quality software faster.