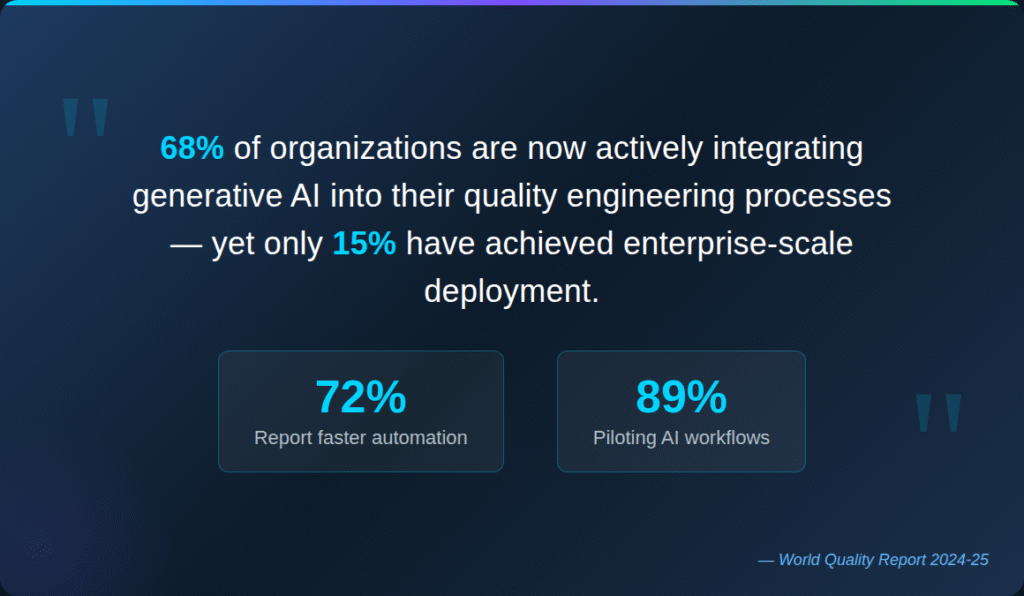

AI test management software has evolved from experimental technology to essential QA infrastructure, with 68% of organizations actively integrating generative AI into their quality engineering processes.

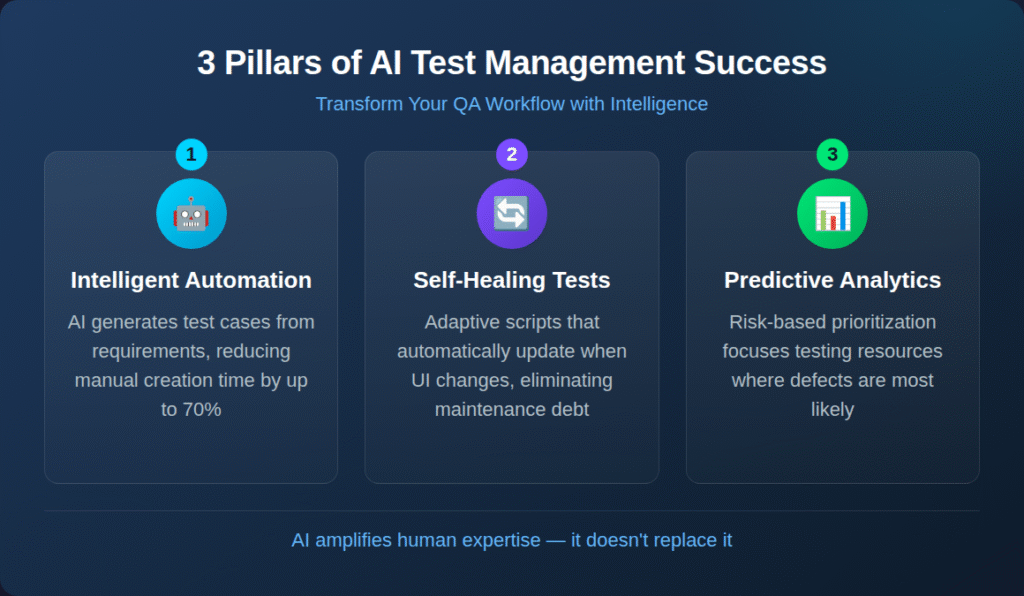

- Intelligent test case generation reduces manual creation time while improving coverage of edge cases and scenarios that human testers might miss.

- Self-healing capabilities and predictive analytics shift testing from reactive defect-finding to proactive quality assurance.

- Successful implementation requires aligning AI tools with existing DevOps workflows rather than forcing process changes to accommodate new technology.

- Organizations that treat AI as an amplifier for human expertise rather than a replacement achieve the strongest ROI and team adoption.

Start evaluating AI test management tools based on integration depth and workflow compatibility, not just feature lists.

Nearly 90% of organizations are now piloting or deploying AI-augmented testing workflows, yet only 15% have achieved enterprise-scale deployment. This gap between ambition and execution reveals a truth about AI test management software: success depends less on the technology itself and more on how teams integrate it into existing quality assurance practices. The organizations closing this gap treat AI as an intelligence layer that enhances human decision-making rather than a replacement for skilled testers.

Seventy-two percent of teams report faster automation processes after integrating generative AI, with test case generation and defect analysis leading adoption. Manual test creation, which once consumed half of the testing time for many teams, is a prime candidate for that acceleration. But velocity means nothing without accuracy and relevance. Understanding what AI-powered test management actually delivers helps teams separate genuine capability from marketing promises.

What Is AI Test Management Software?

AI test management software combines traditional test organization capabilities with machine learning algorithms that automate, optimize, and predict across the testing lifecycle. These platforms analyze patterns in code, requirements, and historical test data to generate intelligent recommendations and automate routine tasks.

Traditional test management tools serve as documentation systems. They store test cases, track execution results, and generate reports. Test case management AI transforms these passive repositories into active intelligence layers that participate in the testing process. It changes how QA teams allocate their time and expertise.

AI-powered platforms analyze application requirements and automatically generate test scenarios covering functional paths, edge cases, and negative testing conditions. They monitor test execution patterns to identify unreliable tests that waste resources. They predict which code changes carry the highest defect risk, enabling teams to focus limited testing time where it matters most.
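Here is a minimal Python sketch of one common heuristic for spotting "unreliable tests" in execution history. A test that both passes and fails on the same commit is flagged as flaky, because the code under test did not change between those runs. The `(test_name, commit_sha, passed)` record shape is an assumption made for this example. Commercial platforms typically combine more signals than this.

```python
from collections import defaultdict

def find_flaky_tests(runs):
    """Flag tests that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples
    (a hypothetical record shape). Different outcomes on identical code
    suggest flakiness rather than a real regression.
    """
    outcomes = defaultdict(set)  # (test, commit) -> set of observed outcomes
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    flaky = {test for (test, _), seen in outcomes.items() if len(seen) > 1}
    return sorted(flaky)

runs = [
    ("test_login", "a1b2", True),
    ("test_login", "a1b2", False),     # same code, different result: flaky
    ("test_checkout", "a1b2", False),
    ("test_checkout", "a1b2", False),  # consistently failing: likely a real defect
    ("test_search", "c3d4", True),
]
print(find_flaky_tests(runs))  # ['test_login']
```

A consistently failing test is deliberately *not* flagged. It points to a defect rather than to test unreliability.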

The Evolution from Automation to Intelligence

First-generation test automation focused on execution speed. Record a test, replay it faster than manual execution, and scale across environments. Second-generation tools added scripting flexibility and integration with CI/CD pipelines. The current generation introduces genuine intelligence that learns, adapts, and improves.

This trajectory aligns with broader enterprise AI adoption. Industry analysts predict that by 2028, 75% of enterprise software engineers will use AI code assistants. Testing tools follow a similar adoption curve as organizations recognize that AI-generated code requires AI-augmented validation.

Agile methodologies demand faster feedback cycles. DevOps practices require testing embedded throughout delivery pipelines. AI-generated code from tools like GitHub Copilot produces unprecedented volumes of code requiring validation. QA management tools must evolve to match these realities or become bottlenecks rather than enablers.

Executive Summary

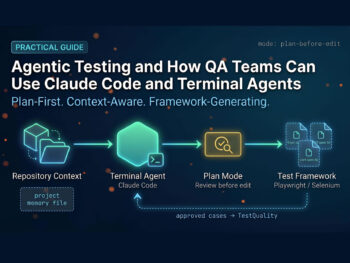

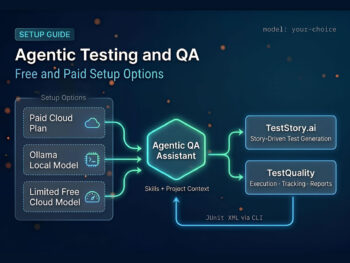

The Shift from Legacy "AI-Assist" to Agentic QA

Stop debugging scripts; start managing agents.

If your QA strategy in 2026 relies on writing prompts to generate brittle code, you are simply trading manual test writing for manual test maintenance. The industry is rapidly pivoting away from legacy AI-assist tools, which act as glorified autocomplete engines, toward Agentic QA.

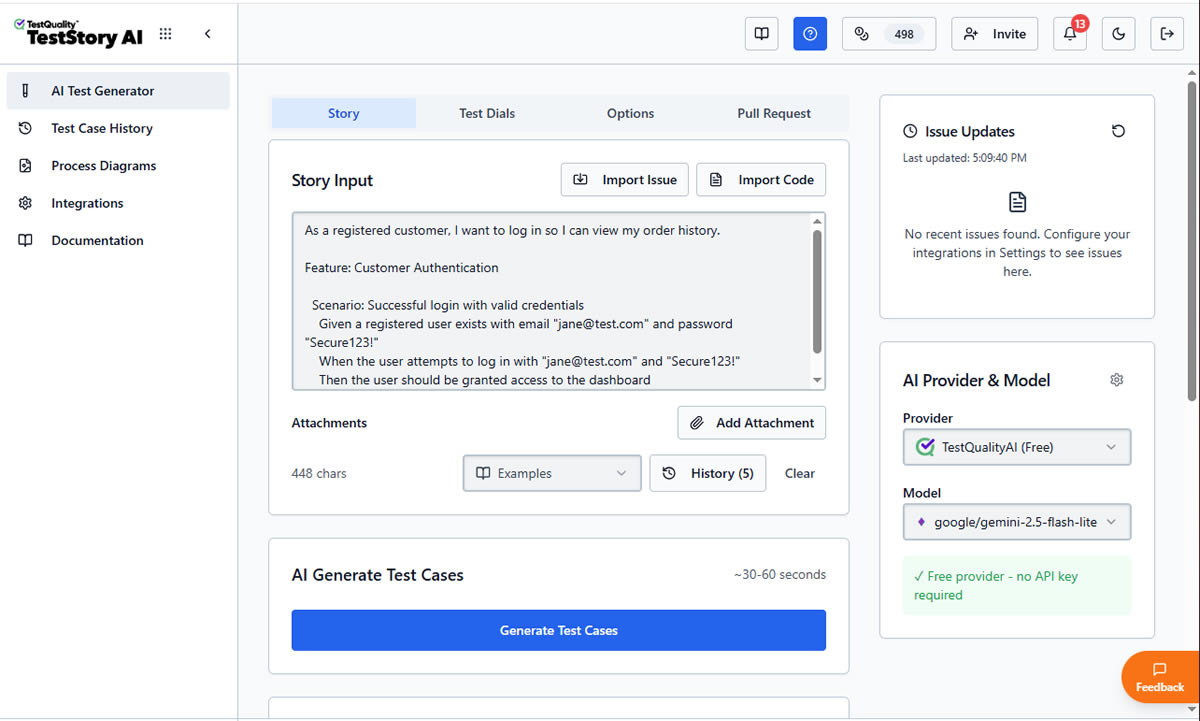

Evaluators must recognize the critical difference: legacy test management forces QA teams to act as manual translation layers. Even first-generation 'AI copilots' require constant prompt-engineering, manual mapping to Gherkin criteria, and babysitting to ensure requirements trace back to Jira or GitHub. Agentic platforms like TestStory.ai bypass this entirely. They don't just autocomplete steps; they ingest your system context to architect, generate, and map production-ready test suites autonomously in under 30 seconds.

The Agentic Benchmark: Why TestStory.ai Replaces Generic AI Generators

Most AI test case generators are structurally "dumb." They lack specific control mechanisms, resulting in bloated, un-executable test suites. We built TestStory.ai to solve the engineering friction points of test design and coverage validation:

Deep Context Extraction (Zero-Prompting): TestStory natively connects to Jira, GitHub, and Linear. It ingests your Epics, User Stories, and PRs, translating them into comprehensive test cases instantly.

Diagram-to-Test Autonomy: Don't write requirements if you already have the architecture mapped. TestStory autonomously parses complex process diagrams (Visio, Lucidchart, PNG, PDF), understanding UML, BPMN, ERD, and System Architecture to instantly map out state transitions, edge cases, and strict acceptance criteria.

Precision Control via "Test Dials": We replaced generic prompt engineering with deterministic controls. Engineers use "Test Dials" and reusable "Preset Packs" to rigidly define test scope, target audiences, and specific test types (e.g., Smoke, Regression, Integration), ensuring strict alignment with your existing QA strategy.

IDE & Dev Workflow Native (MCP Integration): TestStory's MCP (Model Context Protocol) architecture plugs the agent directly into your development environment. Trigger TestStory's QA logic natively inside Claude, Cursor, and VSCode/Copilot, passing the output seamlessly into test management systems like TestQuality.

Enterprise Data Sovereignty: Your proprietary logic is never used to train our base models. TestStory allows you to utilize your own AI provider keys, ensuring strict compliance and zero data leakage.

The benchmark for 2026 isn't how fast an AI can write a script; it's how much test maintenance debt the agent eliminates.


How Does AI Transform Test Case Management?

AI capabilities in test management span the entire testing lifecycle, from initial test design through execution, maintenance, and analysis. Understanding each capability helps teams identify where AI delivers the most value for their workflows.

Intelligent Test Case Generation

The most immediate impact of AI in testing comes from automated test case creation. Natural language processing enables platforms to analyze requirements documents, user stories, and even Figma mockups to generate comprehensive test scenarios. What once required hours of manual documentation now happens in minutes.

These AI systems understand context in ways that rule-based automation cannot. A login feature requirement triggers test case generation covering valid credentials, invalid passwords, account lockouts, session timeouts, password complexity rules, and multi-factor authentication flows. The AI recognizes patterns from training data and applies them to new requirements.

AI-powered test case builders allow teams to customize generation parameters. Teams can specify test depth, target audience, and output format, including Gherkin syntax for BDD workflows. The result is draft test cases that capture domain expertise while eliminating blank-page syndrome that slows down test creation.
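The platforms above don't publish their internal test case formats. The following Python sketch therefore assumes a hypothetical `GeneratedCase` structure and shows how draft cases such as the login scenarios described earlier could be rendered as Gherkin for BDD review. The lockout threshold and step wording are illustrative only.

```python
from dataclasses import dataclass, field

@dataclass
class GeneratedCase:
    """Hypothetical shape for one AI-drafted test case."""
    title: str
    given: list[str]
    when: list[str]
    then: list[str]
    tags: list[str] = field(default_factory=list)

def to_gherkin(feature: str, cases: list[GeneratedCase]) -> str:
    """Render draft cases as a Gherkin feature file for BDD review."""
    lines = [f"Feature: {feature}"]
    for case in cases:
        lines.append("")
        if case.tags:
            lines.append("  " + " ".join(f"@{tag}" for tag in case.tags))
        lines.append(f"  Scenario: {case.title}")
        for keyword, steps in (("Given", case.given), ("When", case.when), ("Then", case.then)):
            for i, step in enumerate(steps):
                # Gherkin convention: additional steps of the same kind use "And".
                lines.append(f"    {keyword if i == 0 else 'And'} {step}")
    return "\n".join(lines)

cases = [
    GeneratedCase("Valid credentials",
                  given=["a registered user"],
                  when=["they sign in with the correct password"],
                  then=["they land on the dashboard"],
                  tags=["smoke"]),
    GeneratedCase("Account lockout after repeated failures",
                  given=["a registered user"],
                  when=["they enter a wrong password 5 times"],  # illustrative threshold
                  then=["the account is locked", "a lockout email is sent"],
                  tags=["negative"]),
]
print(to_gherkin("Login", cases))
```

Keeping generated cases as structured data and rendering them late allows the same drafts to be exported as Gherkin, spreadsheets, or test management records.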

Self-Healing Tests and Predictive Analytics

Traditional automated tests break when application interfaces change. A button moves, a field name updates, or a workflow restructures, and suddenly test suites fail not because of defects but because of test maintenance debt. Self-healing capabilities address this fragility.

AI test management software with self-healing identifies elements through multiple attributes rather than single locators. When one identifier changes, the system recognizes the element through alternative characteristics and automatically updates tests. Teams spend less time fixing broken tests and more time investigating actual defects.
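The multi-attribute matching idea can be sketched in a few lines of Python. This version assumes each UI element has already been extracted as a dictionary of attributes. The attribute weights and the 0.5 acceptance threshold are hypothetical tuning values. Real self-healing engines generally use richer signals, such as DOM position and neighboring elements.

```python
# Hypothetical weights: attributes that rarely change count more.
WEIGHTS = {"id": 3.0, "name": 2.0, "text": 2.0, "tag": 1.0, "css_class": 1.0}

def similarity(recorded: dict, candidate: dict) -> float:
    """Weighted fraction of the recorded attributes the candidate still matches."""
    total = sum(WEIGHTS[k] for k in recorded if k in WEIGHTS)
    if total == 0:
        return 0.0
    matched = sum(WEIGHTS[k] for k, v in recorded.items()
                  if k in WEIGHTS and candidate.get(k) == v)
    return matched / total

def heal_locator(recorded: dict, candidates: list[dict], threshold: float = 0.5):
    """Return the best-matching candidate, or None if nothing is close enough."""
    best = max(candidates, key=lambda c: similarity(recorded, c), default=None)
    if best is None or similarity(recorded, best) < threshold:
        return None
    return best

recorded = {"id": "submit-btn", "name": "submit", "text": "Sign in",
            "tag": "button", "css_class": "btn-primary"}
after_redesign = {"id": "login-submit", "name": "submit", "text": "Sign in",
                  "tag": "button", "css_class": "btn-primary"}  # only the id changed
cancel_button = {"id": "cancel-btn", "name": "cancel", "text": "Cancel",
                 "tag": "button", "css_class": "btn-secondary"}
print(heal_locator(recorded, [after_redesign, cancel_button])["id"])  # login-submit
```

The threshold matters. If it is set too low, a test may silently "heal" onto the wrong element. That is one reason healed locators should still be surfaced for human review.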

Predictive analytics extends intelligence into planning. By analyzing historical defect patterns, code complexity, and change frequency, AI algorithms predict which modules carry the highest risk. This intelligence enables risk-based test prioritization where limited testing resources focus on areas most likely to contain defects. Organizations implementing risk-based prioritization report reductions in regression testing cycles while maintaining or improving defect detection rates.
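The article doesn't specify how platforms compute risk, and production tools may use trained models. As a transparent stand-in, the Python sketch below combines the three signals named above (historical defects, code complexity, change frequency) into a weighted, min-max normalized score per module. The module data and weights are hypothetical.

```python
def risk_scores(modules: dict[str, dict[str, float]],
                weights: dict[str, float]) -> dict[str, float]:
    """Weighted sum of min-max normalized signals, scaled to 0..1 per module."""
    scores = {name: 0.0 for name in modules}
    for signal, weight in weights.items():
        values = [stats[signal] for stats in modules.values()]
        lo, hi = min(values), max(values)
        span = (hi - lo) or 1.0  # if all modules are equal on a signal, it contributes 0
        for name, stats in modules.items():
            scores[name] += weight * (stats[signal] - lo) / span
    total = sum(weights.values())
    return {name: s / total for name, s in scores.items()}

modules = {  # hypothetical per-module history
    "payments": {"past_defects": 12, "complexity": 45, "changes_30d": 20},
    "search":   {"past_defects": 3,  "complexity": 20, "changes_30d": 5},
    "profile":  {"past_defects": 1,  "complexity": 10, "changes_30d": 2},
}
weights = {"past_defects": 0.5, "complexity": 0.2, "changes_30d": 0.3}  # assumed

scores = risk_scores(modules, weights)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)  # ['payments', 'search', 'profile']
```

A learned model would replace the hand-picked weights with ones fitted to the organization's own defect history. This is why the quality of that history matters.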

Risk-Based Test Prioritization

Not all tests carry equal value. Some validate critical business flows; others check edge cases unlikely to fail. AI analyzes test execution history, code coverage, and defect correlations to rank tests by their likelihood of catching issues. This intelligence proves essential in CI/CD pipelines where testing time is limited.

Risk-based prioritization transforms regression testing from exhaustive verification to targeted validation. Instead of running every test on every build, teams execute the subset most relevant to recent changes. The approach accelerates feedback without sacrificing confidence, provided the AI models receive sufficient historical data for accurate predictions.
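The change-aware selection described here can be sketched as follows, under two simplifying assumptions. First, each test's historical failure rate stands in for its "likelihood of catching issues." Second, a file-level coverage map is available. The `CaseHistory` fields and all sample values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class CaseHistory:
    """Hypothetical per-test history pulled from past CI runs."""
    name: str
    covered_files: set[str]
    runs: int
    failures: int
    duration_s: float

    @property
    def failure_rate(self) -> float:
        # A test with no history is treated as maximally risky, so it sorts first.
        return self.failures / self.runs if self.runs else 1.0

def select_tests(history, changed_files, budget_s):
    """Pick change-relevant tests, riskiest first, within a time budget."""
    relevant = [t for t in history if t.covered_files & changed_files]
    relevant.sort(key=lambda t: t.failure_rate, reverse=True)
    selected, used = [], 0.0
    for t in relevant:
        # Greedy: skip a test that would overflow the budget, keep trying the rest.
        if used + t.duration_s <= budget_s:
            selected.append(t.name)
            used += t.duration_s
    return selected

history = [
    CaseHistory("test_checkout_total", {"cart.py", "pricing.py"}, 200, 18, 40),
    CaseHistory("test_apply_coupon", {"pricing.py"}, 150, 3, 25),
    CaseHistory("test_profile_avatar", {"profile.py"}, 100, 9, 30),
    CaseHistory("test_cart_badge", {"cart.py"}, 120, 1, 15),
]
print(select_tests(history, {"pricing.py", "cart.py"}, budget_s=60))
# ['test_checkout_total', 'test_cart_badge']
```

The caveat in the paragraph above applies directly. With little history, every failure rate is noisy, and the ranking is only as good as the data behind it.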

What Benefits Does AI Test Management Deliver?

Organizations implementing test case management AI report measurable improvements across multiple dimensions. These benefits compound over time as AI models learn from organizational testing patterns.

Accelerated test creation is the most immediate benefit. AI-powered testing platforms can reduce the manual effort required for test design, with some teams achieving up to 70% time savings on test creation tasks.

Improved test coverage emerges from AI's ability to identify scenarios humans overlook. Machine learning models trained on millions of test cases recognize patterns and edge cases that experience alone might miss. AI-generated tests tend to include boundary conditions, negative paths, and error handling that manual processes sometimes skip.
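To make "boundary conditions" concrete, here is classic boundary value analysis for an inclusive integer range. This is a deterministic testing technique, not a machine learning method. It illustrates the kind of cases an AI generator is expected to propose. The 8 to 64 character password-length rule is a hypothetical example.

```python
def boundary_values(lo: int, hi: int) -> dict[str, list[int]]:
    """Boundary value analysis for an inclusive integer range [lo, hi]."""
    if lo > hi:
        raise ValueError("lo must not exceed hi")
    # On-boundary and just-inside values, kept only if they lie within the range.
    valid = sorted({v for v in (lo, lo + 1, hi - 1, hi) if lo <= v <= hi})
    # Just-outside values that the system should reject.
    return {"valid": valid, "invalid": [lo - 1, hi + 1]}

# Hypothetical rule: passwords must be 8 to 64 characters long.
print(boundary_values(8, 64))  # {'valid': [8, 9, 63, 64], 'invalid': [7, 65]}
```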

Reduced maintenance overhead follows from self-healing capabilities and intelligent test analysis. Rather than manually updating dozens of broken tests after UI changes, AI automatically handles routine maintenance. Teams redirect this saved effort toward higher-value testing activities.

Faster feedback cycles result from optimized test selection and parallel execution. When AI prioritizes tests based on code change impact, teams receive relevant feedback faster. Critical issues surface within minutes rather than hours, enabling rapid iteration without sacrificing quality gates.
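For the parallel execution side, one simple and widely used approach is to balance test shards by historical duration. The sketch below uses the greedy "longest processing time first" heuristic: sort tests from slowest to fastest, then always assign the next test to the currently least-loaded worker. The heuristic is fast but not guaranteed optimal. The test names and durations are hypothetical.

```python
import heapq

def shard_by_duration(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Greedy LPT sharding: assign each test (slowest first) to the least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (current load in seconds, worker index)
    shards: list[list[str]] = [[] for _ in range(workers)]
    for name, secs in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        load, idx = heapq.heappop(heap)        # least-loaded worker so far
        shards[idx].append(name)
        heapq.heappush(heap, (load + secs, idx))
    return shards

durations = {"test_checkout": 30, "test_search": 20, "test_profile": 20,
             "test_login": 10, "test_logout": 10}  # seconds, from prior runs
print(shard_by_duration(durations, workers=2))
# [['test_checkout', 'test_login', 'test_logout'], ['test_search', 'test_profile']]
```

Wall-clock feedback time is bounded by the slowest shard (50 s here instead of 90 s serially). Combining sharding with the change-aware selection described earlier compounds the savings.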

Enhanced collaboration between technical and non-technical stakeholders improves when AI generates tests in natural language or Gherkin format. Business analysts can review and validate test scenarios without deep technical expertise, ensuring tests align with actual requirements rather than developers' assumptions.

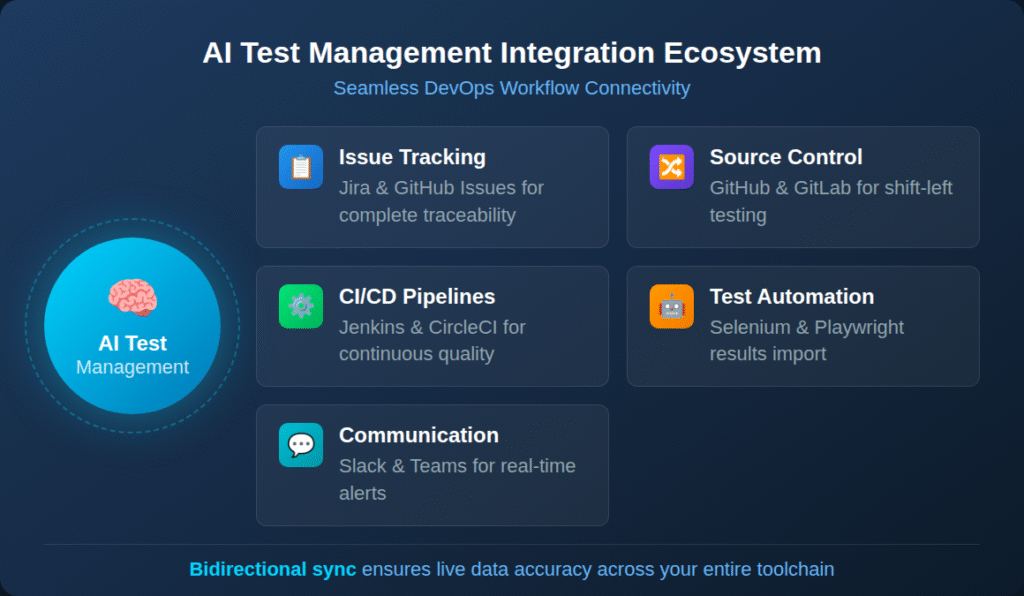

How Do QA Management Tools Integrate with DevOps Workflows?

Integration depth separates effective QA management tools from disconnected point solutions. The most valuable platforms embed seamlessly into existing development ecosystems rather than requiring workflow changes to accommodate testing.

The following table outlines key integration categories and their impact on testing effectiveness:

| Integration Type | Purpose | Business Impact |
|---|---|---|
| Issue Tracking (Jira, GitHub Issues) | Link tests to requirements and defects | Complete traceability from requirement to validation |
| Source Control (GitHub, GitLab) | Trigger tests on code changes, link results to PRs | Shift-left testing with immediate feedback |
| CI/CD Pipelines (Jenkins, CircleCI) | Automate test execution in deployment workflows | Continuous quality gates without manual intervention |
| Test Automation Frameworks (Selenium, Playwright) | Import automated test results | Unified reporting across manual and automated testing |
| Communication Tools (Slack, Teams) | Real-time test result notifications | Faster response to failures and quality issues |

Bidirectional synchronization matters more than simple import/export capabilities. When test status is updated in the management platform, that status should be reflected immediately in linked Jira issues or GitHub pull requests. This live synchronization eliminates the data staleness that undermines confidence in test management data.
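One concrete illustration of the "push" half of this synchronization is reporting a test run's outcome onto a GitHub commit. The sketch below builds, but does not send, a request to GitHub's REST endpoint for creating a commit status (`POST /repos/{owner}/{repo}/statuses/{sha}`). That endpoint accepts the states `error`, `failure`, `pending`, and `success`. The internal outcome names, the `qa/test-run` context label, and the repository values are hypothetical. A full bidirectional sync would also need the reverse path (for example, webhooks) and conflict handling.

```python
import json
import urllib.request

# Hypothetical internal outcomes mapped to the states GitHub's commit status API accepts.
STATE_MAP = {"passed": "success", "failed": "failure", "running": "pending", "blocked": "error"}

def build_status_request(owner: str, repo: str, sha: str, outcome: str,
                         token: str, run_url: str) -> urllib.request.Request:
    """Build a POST that sets a commit status reflecting a test run outcome."""
    payload = {
        "state": STATE_MAP[outcome],
        "target_url": run_url,            # link back to the run in the test platform
        "description": f"Test run {outcome}",
        "context": "qa/test-run",         # hypothetical status label shown on the PR
    }
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{owner}/{repo}/statuses/{sha}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}",
                 "Accept": "application/vnd.github+json"},
        method="POST",
    )

req = build_status_request("acme", "shop", "abc123", "failed",
                           token="YOUR_TOKEN", run_url="https://qa.example.com/runs/42")
# urllib.request.urlopen(req)  # would send it; requires a real token and repository
```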

Native integration also enables AI-driven test planning that considers the full development context. AI can analyze code changes in pull requests, correlate them with historical defect patterns, and recommend specific test cases for validation. This intelligence requires deep integration rather than surface-level connections.

What Challenges Should Teams Anticipate?

Honest assessment of AI testing limitations helps teams set realistic expectations and plan effective implementations. The technology delivers genuine value but isn't a panacea for all testing challenges.

Data quality dependencies create the most common stumbling block. AI models learn from historical data, so organizations with inconsistent test naming, incomplete results tracking, or siloed testing records provide poor training material. Trust in the data and models is a broader concern as well: the World Quality Report 2025 found that 67% of organizations cite data privacy risks as a top challenge, while 60% express concerns about AI hallucination and reliability.
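Before feeding test history into any AI tool, a quick audit can reveal the naming and tracking problems described above. This Python sketch assumes records arrive as dictionaries with hypothetical `name` and `result` keys. It groups names that differ only in case or punctuation and counts records with no recorded result.

```python
import re
from collections import defaultdict

def normalize(name: str) -> str:
    """Collapse case and punctuation so near-duplicate test names compare equal."""
    return re.sub(r"[^a-z0-9]+", "_", name.lower()).strip("_")

def audit(records: list[dict]) -> dict:
    """Report inconsistent test names and records missing a result."""
    groups: dict[str, set[str]] = defaultdict(set)
    missing = 0
    for record in records:
        groups[normalize(record["name"])].add(record["name"])
        if record.get("result") is None:
            missing += 1
    inconsistent = {key: sorted(names) for key, names in groups.items() if len(names) > 1}
    return {"inconsistent_names": inconsistent, "missing_results": missing,
            "total": len(records)}

records = [  # hypothetical export from a test management system
    {"name": "Login - valid password", "result": "pass"},
    {"name": "login_valid_password", "result": "fail"},
    {"name": "Checkout total", "result": None},
]
print(audit(records))
```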

Skills gaps persist despite tool advancement. Half of organizations report a lack of sufficient AI/ML expertise in their QA teams. While AI-powered tools reduce coding requirements, teams still need the capability to evaluate AI-generated outputs, configure generation parameters, and integrate tools into existing workflows.

Integration complexity challenges organizations with diverse technology stacks. Legacy test management systems, custom automation frameworks, and unique CI/CD configurations may not connect easily with modern AI platforms. Teams should evaluate integration depth during tool selection rather than assuming all platforms connect equally well.

Over-reliance risks emerge when teams trust AI outputs without validation. AI-generated tests require human review to ensure relevance, accuracy, and alignment with actual requirements. The most effective approaches position AI as a collaborator that handles routine tasks while humans provide judgment, context, and strategic direction.

How to Evaluate and Implement AI Test Management Software

Successful implementation begins with clear identification of current pain points. Teams drowning in test maintenance benefit most from self-healing capabilities. Those struggling with test creation velocity should prioritize generation features. Organizations lacking visibility need platforms with strong analytics and reporting.

Start with pilot projects that demonstrate value without organizational risk. Select a specific project or team, implement AI capabilities in isolation, and measure results against baseline metrics. This approach builds internal expertise while validating tool fit before broader rollout.

Prioritize workflow compatibility over feature counts during evaluation. The most capable tool means nothing if it requires abandoning existing processes that work. Evaluate how naturally each platform fits into current development and testing workflows, including existing tool integrations.

Plan for change management beyond technical implementation. Test case management transformation affects how testers spend their time, how they collaborate with developers, and how organizations measure testing effectiveness. Successful implementations address these human factors alongside technical configuration.

Measure outcomes, not activities. Track defect escape rates, time to feedback, test maintenance effort, and coverage metrics rather than simple adoption statistics. These outcome-focused measurements reveal whether AI implementation actually improves quality rather than just changing how work gets done.
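A pilot's outcome metrics can be compared against a baseline with very little code. This sketch uses the common definition of defect escape rate: defects found in production divided by all defects found. It also reports median commit-to-result feedback time and test maintenance hours. The field names and all figures are hypothetical placeholders, not reported results.

```python
from dataclasses import dataclass
from statistics import median

@dataclass
class PeriodMetrics:
    defects_caught_pre_release: int
    defects_escaped: int            # defects found in production
    feedback_minutes: list[float]   # commit-to-test-result latency per build
    maintenance_hours: float        # time spent repairing broken tests

    @property
    def escape_rate(self) -> float:
        total = self.defects_caught_pre_release + self.defects_escaped
        return self.defects_escaped / total if total else 0.0

def compare(baseline: PeriodMetrics, pilot: PeriodMetrics) -> dict[str, tuple]:
    """Return (baseline, pilot) pairs for each outcome metric."""
    return {
        "escape_rate": (baseline.escape_rate, pilot.escape_rate),
        "median_feedback_min": (median(baseline.feedback_minutes),
                                median(pilot.feedback_minutes)),
        "maintenance_hours": (baseline.maintenance_hours, pilot.maintenance_hours),
    }

baseline = PeriodMetrics(40, 10, [30, 45, 60], 20.0)  # placeholder numbers
pilot = PeriodMetrics(45, 5, [12, 15, 20], 8.0)       # placeholder numbers
print(compare(baseline, pilot))
```

The same baseline period should be measured before the pilot starts. Otherwise, any improvement cannot be attributed to the AI tooling.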

FAQ

What is the difference between AI test management software and traditional test management tools?

Traditional test management tools simply store test cases, track execution results, and generate reports. AI test management software adds an intelligence layer that actively participates in testing through automated test generation, self-healing test maintenance, predictive analytics for defect risk, and test prioritization based on code change analysis.

How much does AI test management software reduce test creation time?

Organizations implementing AI-assisted test generation typically report reductions in time spent writing test cases, with some teams reporting savings of up to 70% on test creation tasks. The actual savings depend on implementation maturity, data quality, and how well the AI tools integrate with existing workflows. Teams see the largest gains when AI handles routine test documentation while testers focus on strategic test design and exploratory testing.

Can AI-generated test cases replace human testers?

AI-generated test cases augment rather than replace human testers. While AI excels at generating comprehensive coverage, identifying patterns, and handling routine maintenance, human judgment remains essential for validating test relevance, interpreting ambiguous requirements, performing exploratory testing, and ensuring tests align with actual business needs.

What should teams prioritize when selecting AI test management software?

Integration depth with existing development tools should take priority over feature lists. Evaluate how naturally each platform connects with your issue trackers, source control systems, CI/CD pipelines, and automation frameworks. Also assess the quality of AI-generated outputs for your specific domain, the learning curve for your team's skill level, and the vendor's track record for keeping AI capabilities current with technological advancement.

Elevate Your QA Strategy with Intelligent Test Management

The shift toward AI test management software signals a change in how organizations approach quality assurance. Teams that embrace this evolution position themselves to handle accelerating development velocity, increasing code complexity, and rising quality expectations.

The most successful implementations integrate AI deeply into existing workflows, maintain human oversight of AI-generated outputs, and focus on outcomes rather than activities. These organizations treat AI as an active intelligence layer that amplifies human expertise rather than replacing it.

Ready to experience AI-powered test management that integrates seamlessly with your GitHub and Jira workflows? TestQuality's QA Agents help you create, run, and analyze test cases automatically from a chat interface or agentic workflows. Start your free trial and discover how AI-driven test management accelerates software quality for both human and AI-generated code.