At a Glance

The State of AI-Generated Code in 2026

The verification gap is the defining engineering problem of the agentic era.

75.3% of multi-agent failures stem from the planner-coder gap — semantic breakdown during handoff from planning to coding agents. (arXiv 2510.10460, 2025)

75.17% of failures in the MAST dataset are silent gray errors: code that passes compilation and superficial checks but violates intended business logic. (MAST / arXiv 2503.13657, NeurIPS 2025)

Adding explicit verification phases yields a +15.6% absolute improvement in agentic task success rates. (MAST / arXiv 2503.13657, NeurIPS 2025)

Properly orchestrated systems show 3.2x lower failure rates than unstructured multi-agent environments. (Galileo, 2025)

95% of enterprise GenAI pilots fail to deliver measurable financial returns. (MIT NANDA, 2025)

10.83 issues per AI-authored pull request versus 6.45 for human-written code, roughly 1.7x as many. (CodeRabbit, Dec 2025)

"The agentic SDLC converts AI speed into verifiable outcomes — replacing unstructured generation with closed-loop, human-orchestrated development pipelines."

What Is the Agentic SDLC?

The agentic software development lifecycle is a structured engineering methodology where autonomous AI agents handle planning, code generation, and verification under human orchestration. It replaces unstructured generation with context engineering and closed-loop validation, ensuring AI-produced code meets defined business logic and quality standards before it reaches production.

In a traditional software development lifecycle, humans execute every phase: writing requirements, building code, creating tests, and approving deployments. Generative AI disrupted this by creating a volume problem — AI agents can generate code at machine speed, but verification has remained bottlenecked at human pace. The agentic SDLC solves this by formalizing how specialized AI agents interact across the entire pipeline. It establishes a builder-validator chain where agents do not just produce code — they independently review, test, and iterate on each other's outputs. In the near future, TestQuality will act as the orchestration layer in this paradigm, connecting product planning in Jira, code execution in GitHub, and autonomous QA verification into a single closed-loop system.

Why Does Traditional SDLC Break Down With AI-Generated Code?

Traditional software development processes assume the entity writing code understands its broader business context. AI models do not carry that understanding natively. Retrofitting AI generation into a legacy SDLC without an orchestration and verification layer produces a predictable failure pattern: fast output, slow trust, and compounding technical debt.

The data confirms the scale of this breakdown. MIT's 2025 "GenAI Divide" study, based on analysis of 300 AI deployments, found that 95% of enterprise GenAI pilot programs fail to deliver measurable financial returns (ComplexDiscovery), not because models are incapable, but because organizations generate at AI speed without verifying at the same pace. A December 2025 CodeRabbit analysis of 470 open-source GitHub pull requests found that AI-authored PRs averaged 10.83 issues compared to 6.45 in human-written code (TechRadar). That is roughly 1.7x as many issues per PR, including a 75% rise in logic and correctness errors, so the total defect load grows with adoption.
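The 1.7x figure follows directly from the two averages quoted above. The short check below reproduces it and shows how the extra issue load grows with the number of AI-authored PRs. The monthly PR volumes are hypothetical values chosen for illustration and do not come from the CodeRabbit analysis.

```python
# Averages quoted from the CodeRabbit analysis cited above.
AI_ISSUES_PER_PR = 10.83
HUMAN_ISSUES_PER_PR = 6.45


def defect_ratio(ai: float = AI_ISSUES_PER_PR, human: float = HUMAN_ISSUES_PER_PR) -> float:
    """Return how many times more issues an AI-authored PR carries."""
    return ai / human


def extra_issues(ai_prs: int) -> float:
    """Additional issues compared with the same number of human-written PRs."""
    return ai_prs * (AI_ISSUES_PER_PR - HUMAN_ISSUES_PER_PR)


if __name__ == "__main__":
    print(f"ratio: {defect_ratio():.2f}x")
    # Hypothetical monthly volumes of AI-authored PRs (illustrative only).
    for prs_per_month in (50, 200, 800):
        print(prs_per_month, round(extra_issues(prs_per_month), 1))
```

The per-PR ratio stays constant; what grows with adoption is the absolute number of additional issues that someone, or something, has to review.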

Corroborating this at the system level, UC Berkeley's MAST research analyzed over 1,600 annotated multi-agent execution traces and found that most failures manifest as silent gray errors: outputs that pass superficial checks such as code compilation but contain runtime bugs because they fail to validate against actual business logic (arXiv 2503.13657). Stack Overflow's 2025 Developer Survey found that 66% of developers cite "AI solutions that are almost right, but not quite" as their primary frustration, with 45.2% reporting that debugging AI-generated code is more time-consuming than writing it themselves (ADTmag). Legacy test management tools built for human-paced, manual workflows have no mechanism to absorb this failure class at scale.

| Dimension | Traditional SDLC | Agentic SDLC | Vibe Coding |
|---|---|---|---|
| Planning Method | Manual Jira tickets, human-authored specs | Agent-assisted parsing with codebase context mapping | Unstructured prompts, no formal spec |
| Execution Model | Human developers writing every line | Context engineering with constrained, bounded agents | LLM autocomplete with no architectural boundaries |
| QA Approach | Manual QA and static script automation | Builder-validator chains with trajectory-level evaluation | "LGTM": if it compiles, it ships |
| Failure Mode | Human error and reviewer fatigue | Edge-case logic gaps caught early in the pipeline | Silent gray errors: code compiles, business logic is wrong |
| Human Role | Manual builder executing every task | System orchestrator setting constraints and reviewing outcomes | Babysitter debugging unpredictable output |
| Verification Speed | Human-paced review cycles | Autonomous validation running in parallel with CI/CD | No structured verification; review is ad hoc |
| Technical Debt Pattern | Accumulates gradually from human oversight gaps | Contained by self-improving feedback loops and agentic memory | Accumulates rapidly: high churn, duplicated code blocks |
| Reliability Outcome | High reliability at human speed | 3.2x lower failure rate vs. unorchestrated systems (Galileo, 2025) | Low: high defect rate, high debugging cost |

What Are the Three Stages of a Properly Orchestrated Agentic SDLC?

A properly structured agentic SDLC does not deploy uncoordinated AI agents against a problem and hope for coherence. It applies tightly scoped, specialized agents across three sequential stages — each with defined inputs, outputs, and verification checkpoints. Skipping any stage reintroduces the verification gap that makes AI-generated code unreliable at scale.

Stage 1: Agentic Planning — Closing the Planner-Coder Gap

The most consequential failure point in AI-generated software development occurs before a single line of code is written. Research published on arXiv in 2025 (arXiv 2510.10460) identified the planner-coder gap as the root cause of 75.3% of multi-agent code generation failures, occurring when semantic alignment breaks down during the handoff from planning agents to coding agents. A product manager writes a high-level Jira requirement; an AI coding agent attempts to execute it without access to the architectural history or established design decisions embedded in the codebase. The gap between intent and execution produces code that is structurally plausible but logically misaligned.

In an agentic SDLC, planning is an active, agent-assisted process. Planning agents parse incoming requirements, cross-reference them against agentic memory of the existing codebase, and generate heavily constrained execution plans before any coding agent receives a prompt. This bridges the gap between human intent and machine execution by embedding architectural context directly into the instruction set passed to GitHub.
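One way to picture a "heavily constrained execution plan" is as a small data structure that travels with the prompt. The sketch below is an assumption about what such a plan could contain, not a description of any specific product: `Requirement`, `ExecutionPlan`, `build_plan`, and the `design_memory` dictionary are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Requirement:
    ticket_id: str
    summary: str
    acceptance_criteria: Tuple[str, ...]
    modules: Tuple[str, ...]  # parts of the codebase the ticket touches


@dataclass(frozen=True)
class ExecutionPlan:
    ticket_id: str
    summary: str
    allowed_modules: Tuple[str, ...]
    constraints: Tuple[str, ...]
    acceptance_criteria: Tuple[str, ...]

    def to_prompt(self) -> str:
        """Render the plan as the instruction block handed to a coding agent."""
        lines = [f"Ticket {self.ticket_id}: {self.summary}"]
        lines.append("Only modify: " + ", ".join(self.allowed_modules))
        lines += [f"Constraint: {c}" for c in self.constraints]
        lines += [f"Must satisfy: {a}" for a in self.acceptance_criteria]
        return "\n".join(lines)


def build_plan(req: Requirement, design_memory: Dict[str, List[str]]) -> ExecutionPlan:
    """Attach stored design decisions for each touched module to the plan."""
    if not req.acceptance_criteria:
        # Without criteria there is nothing for a validator to check later.
        raise ValueError(f"{req.ticket_id} has no acceptance criteria")
    constraints: List[str] = []
    for module in req.modules:
        constraints.extend(design_memory.get(module, []))
    return ExecutionPlan(
        ticket_id=req.ticket_id,
        summary=req.summary,
        allowed_modules=req.modules,
        constraints=tuple(constraints),
        acceptance_criteria=req.acceptance_criteria,
    )
```

Rejecting a ticket with no acceptance criteria at planning time is a deliberate choice in this sketch: it forces the handoff to carry something verifiable before any code is generated.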

Stage 2: Agentic Execution — Context Engineering Over Vibe Coding

Vibe coding — the practice of feeding conversational prompts into a general-purpose LLM and accepting whatever code emerges — generates output that looks functional at a surface level and fails in production at the business logic level. It fails because AI models fill the gaps in their instructions with statistically likely but contextually wrong assumptions about the codebase.

Context engineering replaces this pattern. Instead of relying on the model's generalized training, orchestration platforms inject structured, localized data directly into the agent's operating environment: Abstract Syntax Tree (AST) parses of the existing codebase, dependency graphs, historical design decisions stored in agentic memory, and RAG-retrieved patterns from similar past implementations. The coding agent then operates within defined architectural boundaries. When a GitHub pull request is submitted, it reflects the actual system — not a statistically plausible hallucination of it.
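As a small illustration of the AST-based part of context engineering, the sketch below uses Python's standard `ast` module to extract function signatures and docstrings from existing source, producing a compact summary that could be placed in an agent's context. The `SOURCE` snippet and the `summarize_signatures` helper are hypothetical; a real pipeline would also need dependency graphs and retrieval, which are out of scope here. It requires Python 3.9 or later for `ast.unparse`.

```python
import ast
from typing import List

# Hypothetical existing code that the agent must respect.
SOURCE = '''
def apply_discount(total: float, code: str) -> float:
    """Apply a promo code; never discount below zero."""
    return total

class Cart:
    def add(self, sku: str, qty: int = 1) -> None:
        pass
'''


def summarize_signatures(source: str) -> List[str]:
    """Return one line per function: name, argument names, return type, docstring."""
    tree = ast.parse(source)
    summary = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            args = ", ".join(arg.arg for arg in node.args.args)
            returns = ast.unparse(node.returns) if node.returns else "Any"
            line = f"{node.name}({args}) -> {returns}"
            doc = ast.get_docstring(node)
            if doc:
                # Keep only the first docstring line to stay compact.
                line += f"  # {doc.splitlines()[0]}"
            summary.append(line)
    return summary


if __name__ == "__main__":
    for entry in summarize_signatures(SOURCE):
        print(entry)
```

The point of the summary is that the agent sees the signatures and documented invariants that actually exist, instead of guessing at them.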

Stage 3: Agentic Verification — Builder-Validator Chains and Trajectory Evaluation

Verification is where the agentic SDLC delivers its most measurable return. UC Berkeley's MAST research demonstrated that introducing an additional high-level task objective verification step, one focused on business logic rather than just code compilation, yielded a +15.6% absolute improvement in task success rates (Tim Williams). It also revealed that existing verifiers performing only superficial checks consistently miss the failure class that matters most.

This is why the builder-validator chain enforces strict separation between the agent that produces code and the agent that tests it. The validator agent operates with no access to the builder's reasoning — it evaluates only the final output against the original Jira acceptance criteria. Trajectory-level evaluation goes further, examining the step-by-step reasoning path the builder agent used to arrive at its solution and identifying logical deviations at the decision point, not after they reach production. For a deep dive into the QA layer of this system, see our foundational guide to the shift to agentic QA beyond automated testing to autonomous AI generation in 2026.
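The separation described above can be sketched as a loop in which the validator receives only the built artifact and the acceptance criteria, never the builder's reasoning. Everything here is a toy under stated assumptions: `Criterion`, `BuildResult`, `run_chain`, and `toy_builder` are hypothetical names, and the "artifact" is a plain Python function standing in for generated code.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Toy "code under test": days since purchase -> is a refund allowed?
Artifact = Callable[[int], bool]


@dataclass(frozen=True)
class Criterion:
    description: str
    check: Callable[[Artifact], bool]


@dataclass(frozen=True)
class BuildResult:
    artifact: Artifact
    reasoning: str  # stays with the builder; the validator never sees it


def validate(artifact: Artifact, criteria: List[Criterion]) -> List[str]:
    """Return descriptions of every criterion the artifact fails."""
    failures = []
    for criterion in criteria:
        try:
            passed = criterion.check(artifact)
        except Exception:
            passed = False  # a crashing artifact counts as a failure
        if not passed:
            failures.append(criterion.description)
    return failures


def run_chain(build: Callable[[List[str]], BuildResult],
              criteria: List[Criterion],
              max_rounds: int = 3) -> Tuple[Artifact, int]:
    """Alternate build and validate until all criteria pass or rounds run out."""
    feedback: List[str] = []
    for round_number in range(1, max_rounds + 1):
        result = build(feedback)
        feedback = validate(result.artifact, criteria)  # output only
        if not feedback:
            return result.artifact, round_number
    raise RuntimeError(f"unresolved after {max_rounds} rounds: {feedback}")


# Hypothetical acceptance criteria for a 30-day refund window.
CRITERIA = [
    Criterion("Refund allowed within 30 days", lambda f: f(10) is True),
    Criterion("Refund rejected after 30 days", lambda f: f(45) is False),
]


def toy_builder(feedback: List[str]) -> BuildResult:
    """First attempt ignores the window; the retry reacts to validator feedback."""
    if not feedback:
        return BuildResult(lambda days: True, "assumed refunds are always allowed")
    return BuildResult(lambda days: days <= 30, "added the 30-day window")
```

Calling `run_chain(toy_builder, CRITERIA)` returns the corrected artifact on the second round, because the first attempt fails the "rejected after 30 days" criterion.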

How Does Agentic Testing Catch Silent Semantic Errors?

Agentic testing catches silent semantic errors by evaluating AI-generated code against original business requirements — not just syntactic correctness. Builder-validator chains use trajectory-level analysis to reconstruct the agent's decision path and identify where logical intent diverged from implementation before the code merges to production.
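A very reduced view of trajectory-level analysis is to compare the steps an agent was planned to take with the steps it actually took and report the first point where they differ. The sketch below assumes trajectories can be represented as ordered lists of step labels; the labels and the `first_divergence` helper are hypothetical, and real trajectory evaluation would inspect far richer traces.

```python
from typing import Optional, Sequence


def first_divergence(planned: Sequence[str], actual: Sequence[str]) -> Optional[int]:
    """Return the index of the first step where actual departs from planned."""
    for index, (expected, observed) in enumerate(zip(planned, actual)):
        if expected != observed:
            return index
    if len(planned) != len(actual):
        # One trajectory is a strict prefix of the other.
        return min(len(planned), len(actual))
    return None  # identical trajectories


# Hypothetical step labels for a refund-window ticket.
PLANNED = ["parse_ticket", "load_refund_policy", "implement_window_check", "write_tests"]
ACTUAL = ["parse_ticket", "implement_window_check", "write_tests"]

if __name__ == "__main__":
    index = first_divergence(PLANNED, ACTUAL)
    if index is not None:
        print(f"diverged at step {index}: expected {PLANNED[index]!r}")
```

In this example the agent skipped loading the refund policy, so the divergence is reported at the decision point itself and not at the later symptom.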

UC Berkeley's MAST dataset, comprising 1,600+ annotated multi-agent execution traces, found that 75.17% of failures manifest as silent gray errors: outputs that do not trigger explicit system failures and are therefore not immediately visible through standard compilation or unit test pipelines (arXiv 2503.13657). A syntax error halts the pipeline immediately and announces itself. A silent gray error compiles perfectly, passes every unit test, and executes the wrong business logic in production, often for days before it surfaces through user behavior or downstream data anomalies.

Traditional test management tools are blind to this failure class because they verify only what the human explicitly instructs them to check. Agentic testing, such as the pipeline enabled by TestStory.ai within TestQuality, autonomously generates test cases derived from the original Jira requirements and acceptance criteria — not from the resulting code. By comparing actual execution behavior against the semantic intent of the feature, the agentic verification layer surfaces logical deviations before they merge. For guidance on structuring this at the pipeline level, see our guide on the LLM regression testing pipeline.
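A minimal illustration of a silent gray error, and of why a test derived from the requirement catches it where a test derived from the code does not. The business rule ("a discount may never exceed 50% of the order total") and all function names are hypothetical, chosen only to make the pattern concrete.

```python
def apply_discount(total: float, discount: float) -> float:
    """Generated implementation: runs without error, but omits the 50% cap."""
    return total - discount


def code_derived_test() -> bool:
    """A shallow test written by looking at the code; it passes."""
    return apply_discount(100.0, 10.0) == 90.0


def requirement_derived_test() -> bool:
    """A test written from the acceptance criterion; it exposes the gap."""
    # Rule (hypothetical): the customer always pays at least 50% of the total.
    return apply_discount(100.0, 80.0) >= 50.0


if __name__ == "__main__":
    print("code-derived test passes:", code_derived_test())
    print("requirement-derived test passes:", requirement_derived_test())
```

Both tests exercise the same function without any exception. Only the second one encodes the intent of the feature, which is why it is the one that fails.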

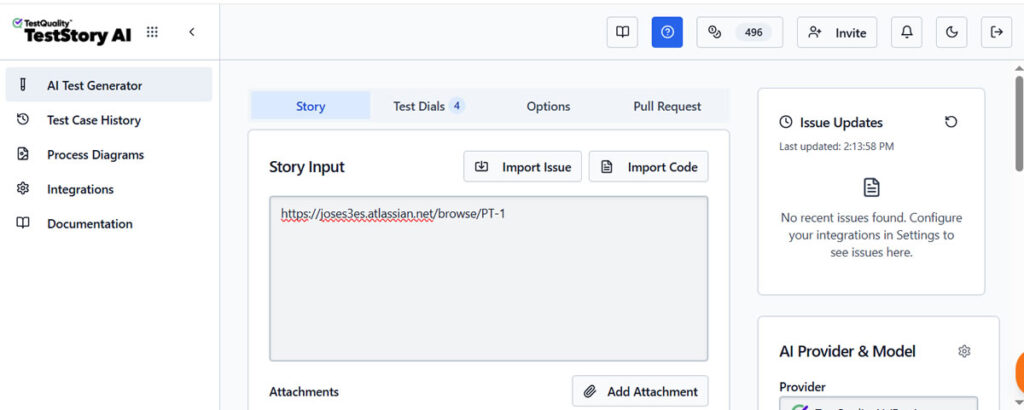

TestStory.ai in action: the agent fetches and reasons about the Jira issue context.

A Jira issue URL is pasted directly into TestStory.ai, with no requirement document, no manual test plan, and no scripted input template. The agent fetches the issue context from Jira autonomously, interprets the feature scope, and begins reasoning about the full risk surface the issue introduces.

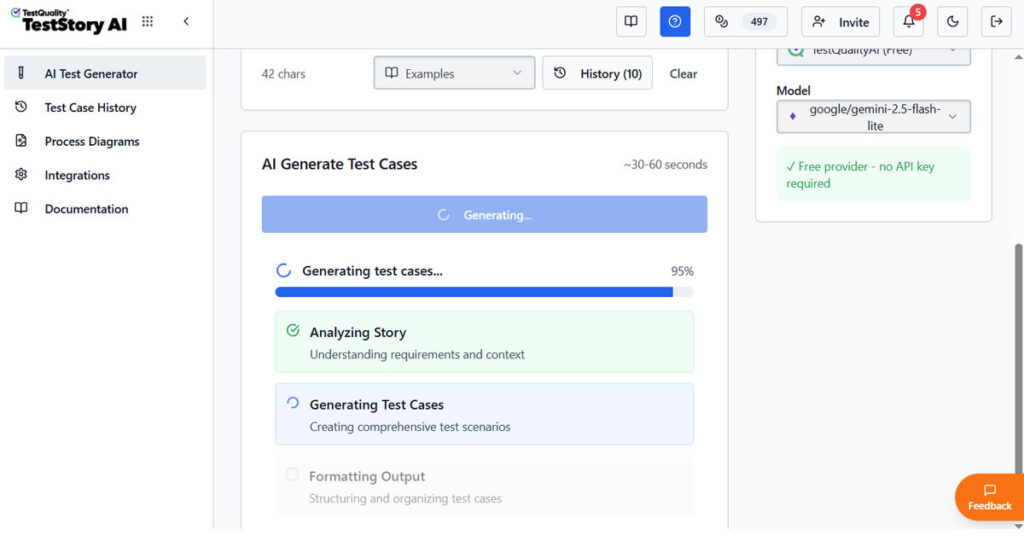

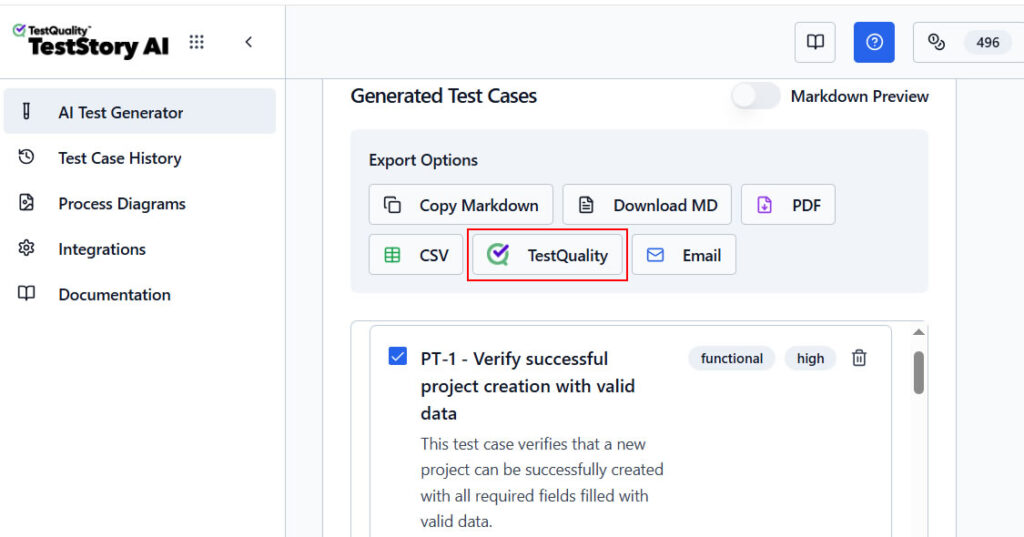

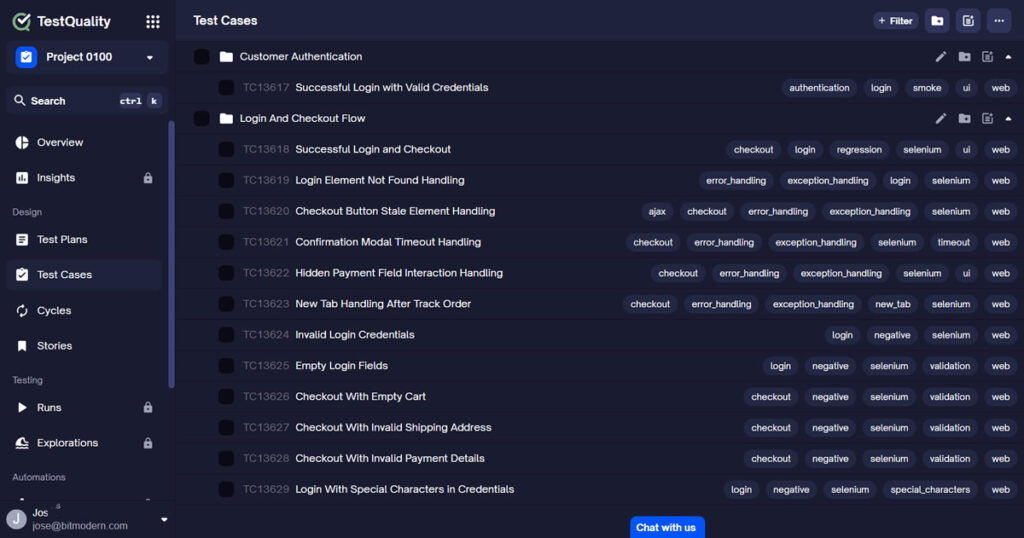

Story-based test case generation produces test cases that can be exported into TestQuality for execution runs, cycles, or analysis.

With test cases generated, a single click exports the full output into TestQuality, where the real test management workflow begins. From there, teams can execute the test cases within a dedicated test cycle, log new Jira issues directly from a failed result, comment on the originating Jira issue, or reassign it to another team member. The entire quality loop, from Jira issue to test execution to resolution, closes without leaving the integrated workflow.

What Is the Human Role in an Agentic SDLC?

The human role in an agentic SDLC shifts from manual builder to system orchestrator. Instead of writing code, engineers define architectural boundaries, set acceptance criteria, and review high-level validation outcomes. This shift dramatically reduces time spent debugging AI output and redirects engineering attention toward system-level judgment and quality governance.

This distinction matters because the alternative, expecting developers to manually review every AI-generated pull request without structured tooling, reintroduces exactly the bottleneck the agentic SDLC is designed to eliminate. Analysis from Galileo reports that properly orchestrated systems experience 3.2x lower failure rates compared to systems lacking formal orchestration frameworks (Galileo AI). That reliability multiplier comes not from more powerful models, but from the structural separation between generation and verification, and from the governance layer that coordinates both.

Properly orchestrated agentic systems do not aim to reduce the number of engineers. They aim to redirect engineering attention away from repetitive validation tasks and toward the decisions that only humans can make: what to build, how to bound the agents building it, and what quality thresholds define a successful outcome. TestQuality's native integration with Jira and GitHub enforces this separation structurally — every pull request triggers automated validation before any human reviewer opens it, making the human review step higher-signal and faster.

Stop Babysitting AI-Generated Code

Implement builder-validator chains before silent errors reach production.

TestStory.ai generates structured test cases from your Jira acceptance criteria and syncs them into TestQuality for execution, tracking, and team review — automatically, on every pull request.

Get 500 TestStory.ai credits monthly with your TestQuality subscription →

How Does TestQuality Unify the Agentic SDLC?

TestQuality acts as the orchestration layer that connects the three stages of the agentic SDLC into a single closed-loop system. It bridges Jira-based planning, GitHub-based code execution, and autonomous QA verification — ensuring that AI-generated code is evaluated against defined business requirements at every pipeline stage, not just at merge time.

Legacy test management platforms were designed for a world where humans write code and tests at similar speeds. They treat AI as a bolt-on feature to a fundamentally manual workflow. TestQuality was built natively for the agentic era: when a product manager creates a Jira ticket, TestQuality's AI layer — TestStory.ai — immediately begins building the verification criteria before the first coding agent receives its prompt. When code agents push pull requests to GitHub, TestQuality triggers the builder-validator chain and runs those pre-built test cases against the new output, flagging logical deviations before any human reviewer opens the PR.

Through agentic memory, TestQuality continuously refines its testing strategies based on historical failure patterns. When a specific type of silent gray error is caught in one sprint's code, the system stores the trajectory that produced it and screens for the same logical pattern in subsequent pull requests — without requiring manual rule-writing. This self-improving loop is what distinguishes an agentic QA layer from a conventional automated testing suite. To understand the infrastructure that powers these capabilities, see our Agentic QA Architecture guide.
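The store-and-screen loop described above can be sketched with a small in-memory structure. This is an assumption about the general pattern, not a description of how TestQuality implements it: `FailureMemory`, its methods, and the step labels are hypothetical, and matching is reduced to an ordered-subsequence check over step labels.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Sequence, Tuple


def contains_in_order(trajectory: Sequence[str], pattern: Sequence[str]) -> bool:
    """True if every step of pattern appears in trajectory, in the same order."""
    remaining = iter(trajectory)
    # `step in remaining` advances the iterator, which enforces ordering.
    return all(step in remaining for step in pattern)


@dataclass
class FailureMemory:
    """Remembers trajectories that produced failures and screens new ones."""
    _patterns: Dict[Tuple[str, ...], str] = field(default_factory=dict)

    def record(self, trajectory: Sequence[str], failure: str) -> None:
        self._patterns[tuple(trajectory)] = failure

    def screen(self, trajectory: Sequence[str]) -> List[str]:
        """Return past failures whose trajectory pattern recurs in this one."""
        return [
            failure
            for pattern, failure in self._patterns.items()
            if contains_in_order(trajectory, pattern)
        ]


if __name__ == "__main__":
    memory = FailureMemory()
    # Hypothetical failure caught in an earlier sprint.
    memory.record(
        ["read_ticket", "skip_currency_check", "write_code"],
        "Amounts compared across currencies",
    )
    new_pr = ["read_ticket", "load_context", "skip_currency_check", "write_code", "open_pr"]
    print(memory.screen(new_pr))
```

No rule was written by hand here: the pattern that is screened for is simply the trajectory that produced the earlier failure.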

Key Takeaways

The Agentic SDLC: What Engineering Teams Need to Know in 2026

Verification architecture — not generation speed — determines whether AI-generated code delivers value or debt.

75.3% of multi-agent failures trace to the planner-coder gap (arXiv 2510.10460), and 75.17% of failures in the MAST dataset are silent gray errors that pass compilation and unit tests while executing incorrect business logic (MAST / arXiv 2503.13657, NeurIPS 2025). Closing both requires structured context injection before generation and independent trajectory-level evaluation after it.

Adding explicit verification phases delivers a +15.6% absolute improvement in agentic task success (MAST / arXiv 2503.13657), making the builder-validator chain one of the highest-ROI architectural decisions in the agentic SDLC, not an optional quality enhancement.

Properly orchestrated systems achieve 3.2x lower failure rates than unstructured multi-agent environments (Galileo, 2025) — a reliability multiplier that comes from governance architecture, not from more powerful models.

AI-authored pull requests carry a 1.7x defect premium — 10.83 issues per PR versus 6.45 for human-written code (CodeRabbit, Dec 2025). This rate scales with adoption; it only becomes manageable when an autonomous verification layer absorbs it before human review.

95% of enterprise GenAI pilots fail to return measurable ROI (MIT NANDA, 2025) — not because models are insufficient but because organizations generate at AI speed without verifying at the same pace. The agentic SDLC closes that gap structurally, not incrementally.

"The agentic SDLC converts AI speed into verifiable outcomes — replacing unstructured generation with closed-loop, human-orchestrated development pipelines."

Start Free Today

Transition from script-writing to outcome-orchestration.

TestStory.ai generates structured test cases from your user stories, acceptance criteria, or architecture diagrams — then syncs them directly into TestQuality for execution, tracking, and team collaboration.

✦ Get 500 TestStory.ai credits every month included with your TestQuality subscription — no extra cost.

Try TestStory.ai Free → Start TestQuality Free →

No credit card required on either platform.