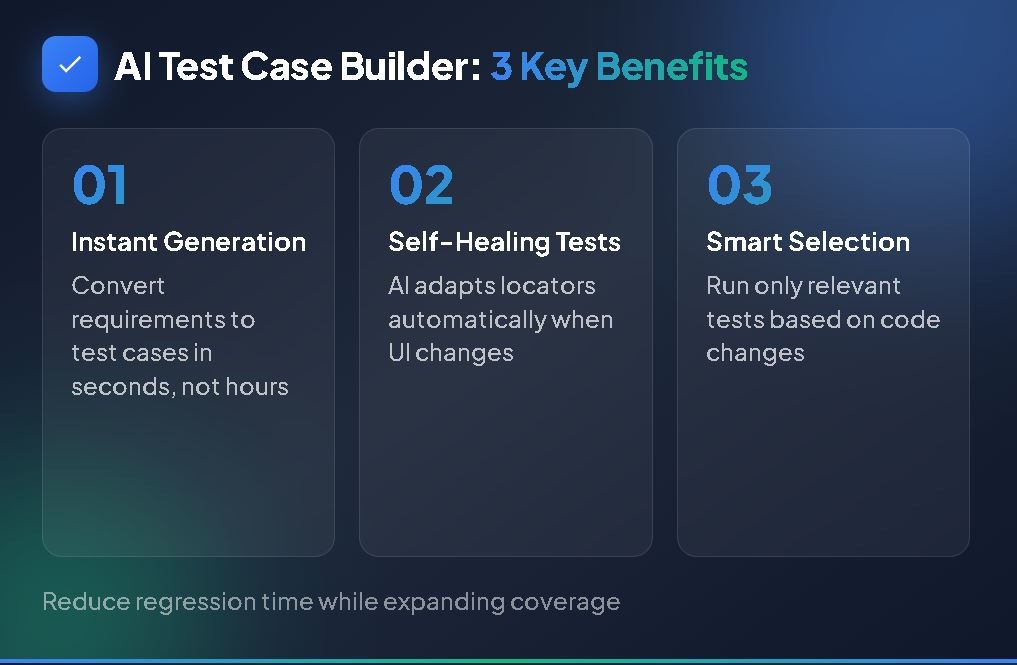

An AI test case builder can reduce regression testing time while improving test coverage and catching defects earlier in the development cycle.

- AI-powered test generation converts requirements into executable test cases in seconds, easing the bottleneck of manual test creation that consumes a significant portion of each sprint.

- Self-healing capabilities automatically update test locators when UI elements change, reducing maintenance overhead for most teams.

- Intelligent test selection ensures only relevant tests run after code changes, avoiding the resource drain of executing entire regression suites for every deployment.

- Teams that integrate AI QA testing into their workflows report faster release cycles without sacrificing quality or increasing headcount.

Start treating AI as a testing partner rather than a replacement for human judgment, and your regression cycles will never look the same.

Regression testing is the safety net that catches bugs before they reach production. It's also one of the most time-consuming phases of the software development lifecycle. According to the World Quality Report 2025, 89% of organizations are now actively pursuing generative AI in their quality engineering practices, with test case design and requirements refinement leading adoption use cases. Teams are desperate for faster ways to validate that new code doesn't break existing functionality.

The traditional approach to regression testing involves manually creating test cases, updating them as features evolve, and running increasingly bloated test suites against every release. This process doesn't scale. Development teams ship code faster than QA teams can write tests. Features change faster than test cases can be updated. Either you slow down releases to maintain test quality, or you accept coverage gaps that let defects slip through.

An AI test case builder changes how development teams operate. Instead of humans manually translating requirements into test scenarios, AI analyzes your specifications and generates comprehensive test coverage in seconds.

What Makes Regression Testing So Time-Consuming?

The challenge isn't running tests. Modern CI/CD pipelines can execute thousands of tests in minutes. The real drain comes from everything that happens before and after test execution.

Test creation consumes weeks, not hours. QA engineers spend significant portions of their sprints translating user stories and acceptance criteria into structured test cases. Each scenario requires identifying preconditions, defining test steps, specifying expected outcomes, and considering edge cases. Multiply this effort across hundreds of features, and you understand why test backlogs never shrink.

Maintenance compounds over time. Software applications aren't static. Every UI change, API modification, or workflow adjustment potentially breaks existing tests. Maintenance can consume up to 60% of testing time in some organizations, which means more effort goes into keeping existing tests working than into writing new ones. That's time spent fixing tests rather than expanding coverage or exploring new functionality.

Flaky tests erode confidence. Tests that pass sometimes and fail others create noise that masks real failures. QA engineers waste hours investigating false positives, only to discover that timing issues or environment inconsistencies caused the failure. This noise trains teams to ignore test results, defeating the purpose of automation.

Full regression runs take too long. As test suites grow, execution time expands proportionally. Running every test after every code change might be thorough, but it's also impractical. Teams face a constant tension between thoroughness and speed, often compromising on coverage to meet deployment windows.

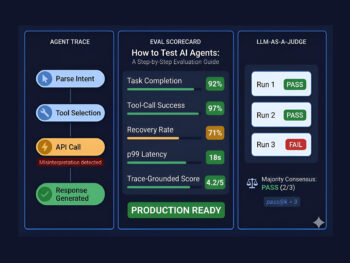

How Does an AI Test Case Builder Accelerate Regression Workflows?

An AI test case builder addresses each of these pain points by automating the repetitive, pattern-matching work that consumes QA resources. The technology doesn't replace human testers. Instead, it amplifies their capabilities by handling the mechanical aspects of test creation and maintenance while humans focus on strategy, edge cases, and exploratory testing.

Generating Test Cases from Requirements in Seconds

The most immediate impact comes from automating test case creation itself. AI systems analyze requirements documents, user stories, API specifications, and acceptance criteria to automatically generate comprehensive test scenarios. Natural language processing enables the AI to extract testable conditions from written requirements, identifying what the system should do under various circumstances.

Consider a typical user story for a login feature. A human tester might spend an hour documenting test cases for valid credentials, invalid passwords, account lockouts, session management, and remember-me functionality. An AI test case builder ingests the same requirements and produces equivalent coverage in seconds. The AI recognizes patterns from thousands of similar features and includes edge cases that human testers might overlook during initial test design.

The output formats matter for practical adoption. Modern AI testing tools produce results in formats compatible with manual execution or automation frameworks: plain text descriptions, Gherkin syntax for BDD workflows, or executable scripts for popular testing tools like Selenium and Playwright. Teams can generate test cases with AI that integrate directly into their existing test management systems, without the overhead of format conversion.
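To make the output-format point concrete, here is a minimal sketch of how a structured test case might be rendered as Gherkin. The `GeneratedCase` shape and the `to_gherkin` helper are hypothetical names chosen for illustration; they do not describe any particular tool's API or export format.

```python
from dataclasses import dataclass


@dataclass
class GeneratedCase:
    """A structured test case, as a generator might emit it (hypothetical shape)."""
    title: str
    given: list[str]
    when: list[str]
    then: list[str]


def to_gherkin(feature: str, cases: list[GeneratedCase]) -> str:
    """Render structured test cases as the text of a Gherkin feature file."""
    lines = [f"Feature: {feature}"]
    for case in cases:
        lines.append("")
        lines.append(f"  Scenario: {case.title}")
        groups = (("Given", case.given), ("When", case.when), ("Then", case.then))
        for keyword, steps in groups:
            for i, step in enumerate(steps):
                # The first step in a group uses its keyword; later steps use "And".
                lines.append(f"    {keyword if i == 0 else 'And'} {step}")
    return "\n".join(lines)


login_case = GeneratedCase(
    title="Login with valid credentials",
    given=["a registered user on the login page"],
    when=["the user submits a valid email and password"],
    then=["the dashboard is displayed", "a session is created"],
)
print(to_gherkin("Login", [login_case]))
```

Keeping the test case as structured data and rendering it at the end is one way the same scenario could feed a BDD workflow, a manual test plan, or a script generator.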

Self-Healing Tests That Reduce Maintenance Overhead

Historically, UI changes broke automated tests at scale. A developer renames a button class from "submit-btn" to "action-submit," and dozens of test scripts fail immediately. QA engineers spend hours updating selectors, re-running tests, and validating that fixes work across all affected scenarios. This maintenance burden compounds with every sprint as applications evolve.

AI QA testing platforms use machine learning to automatically adapt tests when application interfaces change. Self-healing capabilities recognize UI changes and adjust locators without human intervention. The AI examines the DOM structure, visual positioning, accessibility attributes, and contextual relationships to identify elements even after modifications.
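The idea can be illustrated with a deliberately simplified sketch. Commercial platforms typically rely on trained models and richer signals; the version below swaps in a plain weighted attribute match, and the weights, threshold, and function names are all assumptions made for this example. Elements are modeled as dictionaries rather than real DOM nodes.

```python
from typing import Optional

# Attribute weights are illustrative assumptions, not values from any real tool.
WEIGHTS = {"id": 3.0, "aria_label": 2.5, "text": 2.0, "tag": 1.0, "css_class": 0.5}


def similarity(fingerprint: dict, candidate: dict) -> float:
    """Weighted fraction of the stored fingerprint that the candidate still matches."""
    total = sum(WEIGHTS[k] for k in fingerprint if k in WEIGHTS)
    matched = sum(
        WEIGHTS[k]
        for k, value in fingerprint.items()
        if k in WEIGHTS and candidate.get(k) == value
    )
    return matched / total if total else 0.0


def heal_locator(fingerprint: dict, dom: list[dict], threshold: float = 0.6) -> Optional[dict]:
    """Return the best-matching element, or None if nothing is similar enough."""
    best = max(dom, key=lambda el: similarity(fingerprint, el), default=None)
    if best is not None and similarity(fingerprint, best) >= threshold:
        return best
    return None


# Fingerprint recorded when the test last passed, before the class was renamed.
saved = {"tag": "button", "text": "Submit", "aria_label": "Submit order", "css_class": "submit-btn"}
page = [
    {"tag": "button", "text": "Cancel", "aria_label": "Cancel order", "css_class": "cancel-btn"},
    {"tag": "button", "text": "Submit", "aria_label": "Submit order", "css_class": "action-submit"},
]
healed = heal_locator(saved, page)
print(healed["css_class"] if healed else "no confident match")
```

The threshold matters: returning `None` when no candidate is similar enough lets the test fail loudly instead of silently clicking the wrong element.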

According to Gartner's Market Guide for AI-Augmented Software-Testing Tools, 80% of enterprises will have integrated AI-augmented testing tools into their software engineering toolchains by 2027. Self-healing is among the capabilities driving this adoption, as it directly addresses the maintenance burden that has historically limited automation ROI. Teams redirecting maintenance time toward coverage expansion see compounding benefits over successive releases.

Intelligent Test Selection and Prioritization

Running every test after every code change is wasteful. When a developer modifies the checkout workflow, tests validating the login page are irrelevant. Traditional automation lacks the intelligence to distinguish between affected and unaffected tests, so teams either run everything or manually curate test subsets for each release.

AI-powered testing tools understand code relationships and make intelligent decisions about which tests to execute. Change impact analysis examines the modified code paths and selects only the tests that validate affected functionality. A developer changing the payment processing module triggers tests related to checkout, invoicing, and order confirmation while skipping unrelated tests for user profiles or search functionality.
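A minimal sketch of change impact analysis, assuming you already have a map from each test to the source files it exercises (for example, derived from per-test coverage data). The file paths, test names, and the `select_tests` helper are hypothetical, and real tools may combine this with static analysis or learned models rather than a simple set intersection.

```python
def select_tests(changed_files: set[str], coverage_map: dict[str, set[str]]) -> list[str]:
    """Return the tests whose covered files overlap the changed files, sorted by name.

    If any changed file is unknown to the coverage map, fall back to the full
    suite, since the impact of that change cannot be determined.
    """
    known_files = set().union(*coverage_map.values())
    if not changed_files <= known_files:
        return sorted(coverage_map)
    return sorted(test for test, files in coverage_map.items() if files & changed_files)


coverage_map = {
    "test_checkout": {"payments/processor.py", "cart/cart.py"},
    "test_invoicing": {"payments/processor.py", "billing/invoice.py"},
    "test_order_confirmation": {"payments/processor.py", "orders/confirm.py"},
    "test_user_profile": {"users/profile.py"},
    "test_search": {"search/index.py"},
}

# A change to the payment module selects checkout, invoicing, and order confirmation.
print(select_tests({"payments/processor.py"}, coverage_map))
```

The fallback to the full suite is a conservative design choice: skipping tests is only safe when the mapping actually covers the code that changed.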

This intelligent selection delivers measurable results. Teams implementing AI in CI/CD QA pipelines commonly report reductions of 50% in regression testing time without sacrificing defect detection. The tests that run are the tests that matter, providing faster feedback loops while maintaining comprehensive validation of affected areas.

What Does an AI-Powered Regression Workflow Look Like?

Understanding the theoretical benefits is valuable, but practical application matters more. Here's how an AI-enhanced regression workflow differs from traditional approaches at each stage of the testing lifecycle.

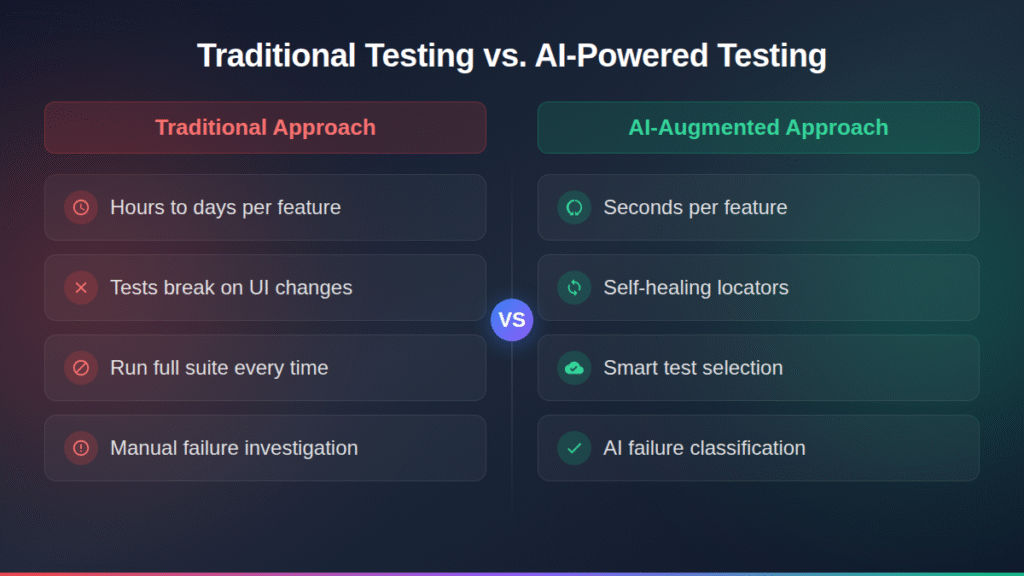

The following table compares traditional regression workflows against AI-augmented approaches across key activities:

| Workflow Stage | Traditional Approach | AI-Augmented Approach |
| --- | --- | --- |
| Test Case Creation | QA engineers manually write test cases from requirements (hours to days per feature) | AI generates test scenarios from requirements in seconds, humans review and refine |
| Coverage Analysis | Manual review of test coverage, often incomplete or outdated | AI identifies untested code paths and automatically generates missing test cases |
| Test Selection | Run full regression suite or manually select relevant tests | AI analyzes code changes and automatically selects affected tests |
| Test Maintenance | Manual updates when UI changes break locators (significant hours per sprint) | Self-healing tests automatically adjust to UI modifications |
| Failure Analysis | Engineers manually investigate each failure (time-intensive per failure) | AI classifies failures, identifies patterns, and suggests root causes rapidly |
| Execution Timing | Full suite runs overnight, feedback arrives next day | Targeted tests run immediately, feedback in minutes |

Setting aside the speed benefits, AI changes what teams test, when they test, and how they respond to failures. Engineers spend less time on mechanical tasks and more time on the strategic thinking that actually improves software quality.

When developers create pull requests, AI systems can analyze the changed code and automatically generate relevant test cases before the code merges. These generated tests become part of the review process, ensuring coverage exists from the start rather than as an afterthought. Teams using version control integrations can trigger AI generation directly from issue creation, maintaining traceability between requirements and tests throughout the development lifecycle.

5 Ways AI QA Testing Transforms Regression Cycles

Beyond the core capabilities of test generation, self-healing, and intelligent selection, AI QA testing introduces several additional transformations that compound efficiency gains over time.

1. Continuous test generation keeps coverage aligned with development velocity. Traditional test creation can't keep pace with agile sprints. By the time QA finishes writing tests for the last release, developers have already shipped three more features. AI generation eliminates this lag. As requirements evolve, AI produces updated test scenarios that reflect current functionality rather than historical snapshots.

2. Pattern recognition identifies defect-prone code areas. AI systems analyze historical test results, code change patterns, and defect distributions to predict where bugs are most likely to occur. This risk-based testing focuses resources on high-impact areas rather than distributing effort uniformly across the application. Teams catch critical defects earlier while spending less time testing stable, low-risk modules.

3. Cross-browser and cross-device testing scales without multiplicative effort. Validating functionality across Chrome, Firefox, Safari, Edge, and mobile browsers traditionally required separate test runs with configuration-specific adjustments. AI efficiently generates test variations covering multiple browsers, devices, and configurations without requiring testers to manually create and maintain separate test suites for each environment.

4. Natural language interfaces democratize test creation. Not every team has deep automation expertise. AI tools that accept natural language inputs enable product managers, business analysts, and junior testers to contribute test scenarios without writing code. This democratization expands the pool of contributors while maintaining consistency through AI-enforced standards.

5. Failure classification reduces investigation time. When tests fail, determining whether the failure indicates a genuine bug, a test infrastructure problem, or an environmental issue requires investigation. AI failure classification analyzes test logs, compares results against historical patterns, and automatically categorizes failures. What previously took significant time per failure can be resolved much faster, accelerating the feedback loop from failure detection to resolution.
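As a rough illustration of the failure classification described in the last item, the sketch below uses a rule-based stand-in: log patterns plus recent pass/fail history. Production tools are more likely to use trained classifiers; the patterns, labels, and the `classify_failure` function here are assumptions for the example and should be tuned against your own logs.

```python
import re

# Illustrative patterns only; not an exhaustive or tool-specific list.
ENVIRONMENT_PATTERNS = [r"connection refused", r"timed? ?out", r"503 service unavailable"]
TEST_ISSUE_PATTERNS = [r"NoSuchElementException", r"StaleElementReferenceException"]


def classify_failure(log: str, recent_results: list[bool]) -> str:
    """Label a failed run as 'environment', 'test_issue', 'flaky', or 'likely_bug'.

    recent_results holds earlier outcomes for the same test (True means passed).
    """
    if any(re.search(p, log, re.IGNORECASE) for p in ENVIRONMENT_PATTERNS):
        return "environment"
    if any(re.search(p, log, re.IGNORECASE) for p in TEST_ISSUE_PATTERNS):
        return "test_issue"
    # Mixed pass/fail history with no other signal suggests an intermittent test.
    if recent_results and 0 < sum(recent_results) < len(recent_results):
        return "flaky"
    return "likely_bug"


print(classify_failure("ConnectionError: connection refused by db:5432", [True, True]))
print(classify_failure("AssertionError: expected total 42.00, got 41.00", [True, True, True]))
```

Even a coarse first pass like this can route failures to the right person, leaving engineers to investigate only the runs labeled as likely bugs.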

When Should Teams Adopt AI for Automated Test Cases?

Not every team will see identical benefits from automated test cases generated by AI. The technology delivers the strongest returns in specific contexts that align with AI capabilities and address genuine workflow bottlenecks.

- Applications requiring extensive regression validation benefit immediately. If your regression suite contains hundreds or thousands of tests, the maintenance burden alone justifies AI adoption. AI generates and maintains comprehensive regression suites that would overwhelm manual approaches.

- Teams practicing continuous delivery need test creation that matches development velocity. When you deploy multiple times per day, manual test creation becomes the rate limiter. AI generation keeps pace with frequent releases and constant change.

- Organizations migrating from legacy systems gain immediate coverage. Existing manual test cases can be efficiently converted to automated test cases through AI analysis and generation. Teams starting from scratch get comprehensive coverage faster than traditional approaches allow.

- QA teams lacking automation expertise benefit from natural language interfaces. You don't need specialized engineers to build coverage when AI accepts requirements in plain English and produces executable tests. The barrier to automation drops.

However, AI testing isn't a silver bullet. Applications with highly specialized domain logic, unusual user interactions, or security-sensitive workflows still require human oversight and manual test design. The best implementations combine AI efficiency with human judgment about edge cases and business logic nuances. Experienced testers review and refine AI-generated tests rather than accepting them blindly.

Start with a pilot project targeting high-volume, repetitive test areas before scaling AI testing across your organization. Prove ROI on a single project, measure improvements in test creation time and maintenance effort, then expand systematically based on demonstrated value.

FAQ

How much time can an AI test case builder actually save on regression testing?

Teams commonly report 50% reductions in regression testing time after implementing AI-powered test generation and intelligent test selection. The savings come from faster test creation (seconds instead of hours), reduced maintenance overhead from self-healing capabilities, and smarter test selection that avoids running irrelevant tests. Individual results vary based on test suite size, application complexity, and how effectively teams integrate AI into their existing workflows.

Do AI-generated test cases replace human testers?

No. AI handles repetitive, pattern-matching tasks like generating test scenarios from requirements, maintaining locators, and selecting relevant tests for execution. Human testers focus on strategic activities: exploratory testing, usability evaluation, edge case discovery, and test strategy design. The most effective implementations combine AI efficiency with human judgment about business logic and context that AI can't fully understand.

What types of projects benefit most from AI test case generation?

Projects with extensive regression suites, frequent releases, or continuous delivery requirements see the strongest returns. Applications featuring common patterns like authentication, search, e-commerce flows, and data management align well with AI pattern recognition capabilities. Organizations migrating from legacy systems or building new applications with limited existing test coverage can accelerate their quality initiatives with AI-assisted test generation.

How do AI test case builders handle changing requirements?

Modern AI systems adapt to requirement changes by automatically regenerating affected test cases. When product managers update user stories or acceptance criteria, AI can produce corresponding test cases that reflect modifications without requiring complete rewrites. This adaptability addresses one of the most persistent pain points in test automation: keeping tests aligned with evolving application functionality.

Turn Your Regression Bottleneck into a Competitive Advantage

Regression testing doesn't have to be the phase that slows every release. An AI test case builder transforms test creation from a manual, time-intensive process into an automated, scalable capability that keeps pace with modern development velocity. The teams that adopt AI-powered testing now will ship faster, catch more defects, and redirect QA resources toward strategic quality initiatives rather than mechanical test maintenance.

TestQuality brings these AI capabilities into a unified platform that integrates seamlessly with GitHub, Jira, and your existing CI/CD workflows. With QA Agents that drive test creation and analysis through an intuitive chat interface, you can generate comprehensive test cases from requirements and accelerate quality for both human and AI-generated code. Start your free trial and experience how AI-powered test management reduces regression time while expanding coverage.