AI test case generation has shifted from experimental pilot programs to essential QA infrastructure, enhancing how teams approach test design and execution.

- Organizations implementing AI-powered test creation report faster test case development and improved edge-case coverage that manual processes typically miss.

- Agentic AI workflows allow QA teams to orchestrate intelligent systems rather than write every test scenario from scratch.

- Successful adoption requires quality input data, clear requirements documentation, and consistent human oversight to validate AI-generated scenarios.

Teams that delay AI testing adoption risk falling behind competitors that already ship higher-quality software on faster release cycles.

Writing and maintaining test cases has always been one of the most time-consuming responsibilities in software development. QA engineers spend countless hours translating requirements into test scenarios, covering edge cases, and updating test suites as features evolve. According to Gartner's 2025 strategic trends report, AI-native software engineering is transforming every phase of the development lifecycle, and testing is no exception.

What Is AI Test Case Generation?

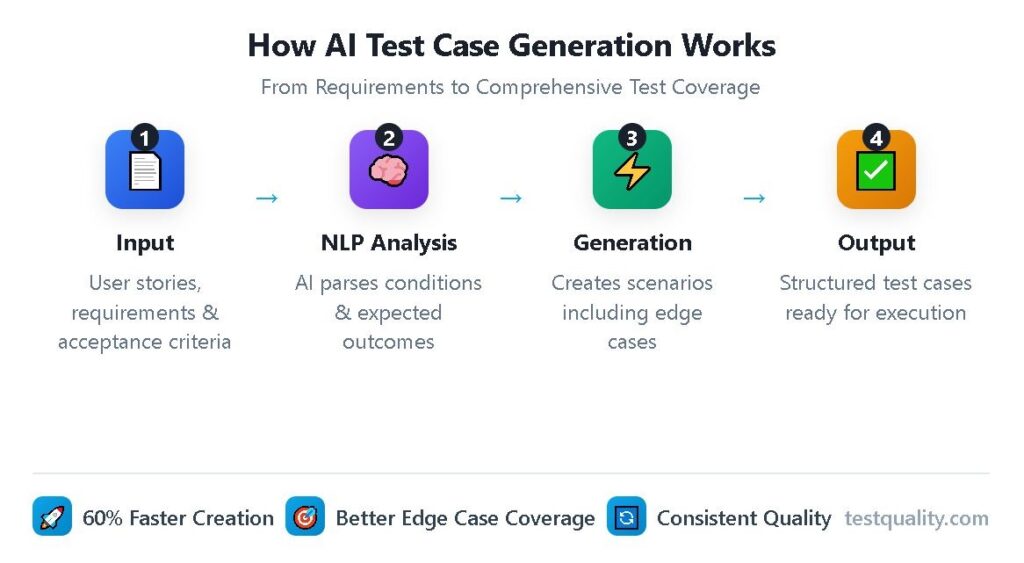

AI test case generation uses machine learning algorithms and natural language processing to automatically create test scenarios from various inputs, including requirements documents, user stories, acceptance criteria, and existing codebases. Unlike traditional automated test execution, which runs pre-written tests, AI-powered generation actually creates the test cases themselves.

Traditional test automation accelerates execution but still requires humans to manually design every scenario. AI generation handles both the thinking and the writing, producing structured test cases that cover happy paths, negative scenarios, boundary conditions, and edge cases that human testers might deprioritize or overlook entirely.

Modern AI test generation tools analyze application functionality through multiple lenses. They parse natural language requirements to identify testable conditions. They examine code structure to understand data flows and potential failure points. They learn from historical defect data to predict where bugs are most likely to appear. The result is test coverage that would take human teams weeks to produce.

How Do AI Test Generation Tools Actually Work?

The technical foundation of AI-powered test creation combines several machine learning approaches working in concert. Understanding this process helps QA teams set realistic expectations and prepare their workflows for successful AI integration.

Natural Language Processing in Action

The first stage involves ingesting and interpreting available documentation. AI systems use NLP to extract testable conditions from written requirements, acceptance criteria, and user stories. When a product owner writes "users should receive an error message when submitting an empty form," the AI identifies the action (submit empty form), the condition (empty fields), and the expected outcome (error message).

This parsing capability extends beyond simple if-then statements. Advanced models understand context, recognize implied requirements, and identify unstated assumptions that experienced testers would naturally question. The quality of input directly impacts output quality, which is why organizations with well-documented requirements see the best results from AI test generation.
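To make the action/condition/outcome decomposition concrete, here is a deliberately simple, rule-based sketch. It is not how production tools work: they rely on trained language models rather than a regular expression. The sentence shape (`<actor> should <outcome> when <action>`) and the names `TestableCondition` and `extract_condition` are hypothetical, and the condition ("empty form") stays embedded in the action text.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Assumed sentence shape: "<actor> should <expected outcome> when <action>."
# Real tools use trained language models; this regex only illustrates the idea.
REQUIREMENT_PATTERN = re.compile(
    r"^(?P<actor>.+?)\s+should\s+(?P<outcome>.+?)\s+when\s+(?P<action>.+?)\.?$",
    re.IGNORECASE,
)


@dataclass
class TestableCondition:
    actor: str
    action: str   # the trigger, including its condition (e.g. "empty form")
    outcome: str  # the expected, verifiable result


def extract_condition(requirement: str) -> Optional[TestableCondition]:
    """Split one requirement sentence into actor, action, and expected outcome."""
    match = REQUIREMENT_PATTERN.match(requirement.strip())
    if match is None:
        return None  # sentence does not follow the assumed shape
    return TestableCondition(
        actor=match.group("actor"),
        action=match.group("action"),
        outcome=match.group("outcome"),
    )


cond = extract_condition(
    "Users should receive an error message when submitting an empty form."
)
print(cond)
# TestableCondition(actor='Users', action='submitting an empty form', outcome='receive an error message')
```

Sentences that do not fit the pattern return `None`, which is exactly the kind of gap that makes well-structured requirements matter.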

Pattern Recognition and Scenario Generation

Once the AI understands what needs testing, it applies pattern recognition to generate comprehensive scenarios. Models trained on large collections of existing test cases learn to apply established test design techniques: boundary value analysis, equivalence partitioning, decision table testing, and state transition testing.

The system generates not just obvious test cases but variations that address:

- Boundary conditions at minimum and maximum values

- Invalid input handling across multiple data types

- Concurrent user scenarios and race conditions

- Error recovery and exception handling paths

- Integration points between system components

This systematic generation helps teams reach a level of coverage completeness that manual approaches struggle to achieve consistently. Teams using automated test case creation report discovering scenarios they would have missed entirely through traditional methods.
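Two of the techniques named above, boundary value analysis and equivalence partitioning, are mechanical enough to sketch directly. The example below assumes a hypothetical integer field `quantity` that accepts values from 1 to 100; the `TestCase` type and function names are illustrative, not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TestCase:
    name: str
    value: int
    expect_valid: bool


def boundary_cases(field: str, minimum: int, maximum: int) -> list[TestCase]:
    """Boundary value analysis: just below, at, and just above each limit."""
    values = (minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1)
    return [TestCase(f"{field}={v}", v, minimum <= v <= maximum) for v in values]


def partition_cases(field: str, minimum: int, maximum: int) -> list[TestCase]:
    """Equivalence partitioning: one representative value per input class."""
    return [
        TestCase(f"{field} below range", minimum - 10, False),
        TestCase(f"{field} in range", (minimum + maximum) // 2, True),
        TestCase(f"{field} above range", maximum + 10, False),
    ]


for case in boundary_cases("quantity", 1, 100) + partition_cases("quantity", 1, 100):
    print(case.name, "valid" if case.expect_valid else "invalid")
```

An AI generator applies the same logic across many fields and combines it with the other variation types in the list, which is where the coverage gains come from.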

Executive Summary

The Shift from Legacy "AI-Assist" to Agentic QA

Stop debugging scripts; start managing agents.

If your QA strategy in 2026 relies on writing prompts to generate brittle code, you are simply trading manual test writing for manual test maintenance. The industry is rapidly pivoting away from legacy AI-assist tools, which act as glorified autocomplete engines, toward Agentic QA.

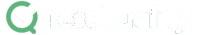

Evaluators must recognize the critical difference: legacy test management forces QA teams to act as manual translation layers. Even first-generation 'AI copilots' require constant prompt engineering, manual mapping to Gherkin criteria, and babysitting to ensure requirements trace back to Jira or GitHub. Agentic platforms like TestStory.ai bypass this entirely. They don't just autocomplete steps; they ingest your system context to architect, generate, and map production-ready test suites autonomously in under 30 seconds.

The Agentic Benchmark: Why TestStory.ai Replaces Generic AI Generators

Most AI test case generators are structurally "dumb." They lack specific control mechanisms, resulting in bloated, un-executable test suites. We built TestStory.ai to solve the engineering friction points of test design and coverage validation:

Deep Context Extraction (Zero-Prompting): TestStory natively connects to Jira, GitHub, and Linear. It ingests your Epics, User Stories, and PRs, translating them into comprehensive test cases instantly.

Diagram-to-Test Autonomy: Don't write requirements if you already have the architecture mapped. TestStory autonomously parses complex process diagrams (Visio, Lucidchart, PNG, PDF), understanding UML, BPMN, ERD, and System Architecture to instantly map out state transitions, edge cases, and strict acceptance criteria.

Precision Control via "Test Dials": We replaced generic prompt engineering with deterministic controls. Engineers use "Test Dials" and reusable "Preset Packs" to rigidly define test scope, target audiences, and specific test types (e.g., Smoke, Regression, Integration), ensuring strict alignment with your existing QA strategy.

IDE & Dev Workflow Native (MCP Integration): TestStory's MCP architecture plugs the agent directly into your development environment. Trigger TestStory's QA logic natively inside Claude, Cursor, and VSCode/Copilot, passing the output seamlessly into test management systems like TestQuality.

Enterprise Data Sovereignty: Your proprietary logic is never used to train our base models. TestStory allows you to utilize your own AI provider keys, ensuring strict compliance and zero data leakage.

The benchmark for 2026 isn't how fast an AI can write a script; it's how much test maintenance debt the agent eliminates.


What Are the Benefits of Test Automation AI?

The measurable advantages of test automation AI extend across efficiency, coverage, and quality metrics. Organizations adopting these capabilities see improvements that compound over time.

Dramatic Time Savings: Research demonstrated 60% acceleration in test case generation, reducing average time per test case from approximately one hour to nineteen minutes. Teams with mature implementations often report even greater efficiency gains as AI becomes fully integrated into established workflows.

Expanded Test Coverage: AI systematically identifies scenarios that human testers might deprioritize under time pressure. Edge cases, unusual input combinations, and complex state transitions receive the same attention as primary functionality. This comprehensive coverage catches defects earlier in the development cycle when fixes cost less.

Consistent Quality Standards: Manual test case creation varies based on individual tester experience, available time, and cognitive load. AI generates tests with uniform structure, consistent terminology, and standardized formatting. This consistency improves test suite maintainability and makes onboarding new team members easier.

Continuous Learning and Adaptation: Modern AI QA tools improve over time by analyzing test execution results. When tests fail or reveal defects, the system adjusts its generation patterns to prioritize similar scenarios in future cycles. This feedback loop creates progressively smarter test suites.
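One simple way to picture this feedback loop is an exponential moving average of failures per scenario category, which then decides how the next batch of generated tests is split. This is a hypothetical sketch; the class name, the `alpha` and `prior` parameters, and the category labels are assumptions, and commercial tools may weight results quite differently.

```python
from collections import defaultdict


class FailureWeightedPrioritizer:
    """Hypothetical feedback loop: scenario categories whose tests fail more
    often receive a larger share of the next generation cycle."""

    def __init__(self, alpha: float = 0.3, prior: float = 0.1):
        self.alpha = alpha  # how strongly the newest result overrides history
        self.failure_rate = defaultdict(lambda: prior)

    def record(self, category: str, failed: bool) -> None:
        # Exponential moving average of pass (0.0) / fail (1.0) outcomes.
        old = self.failure_rate[category]
        signal = 1.0 if failed else 0.0
        self.failure_rate[category] = (1 - self.alpha) * old + self.alpha * signal

    def allocate(self, categories: list[str], budget: int) -> dict[str, int]:
        """Split a budget of new test cases across categories by failure weight."""
        if not categories:
            return {}
        weights = {c: self.failure_rate[c] for c in categories}
        total = sum(weights.values())
        if total == 0:
            weights = {c: 1.0 for c in categories}  # no signal yet: split evenly
            total = float(len(categories))
        shares = {c: int(budget * w / total) for c, w in weights.items()}
        # Give any rounding remainder to the highest-weight category.
        top = max(weights, key=weights.get)
        shares[top] += budget - sum(shares.values())
        return shares


prioritizer = FailureWeightedPrioritizer(alpha=0.5)
prioritizer.record("boundary", failed=True)
prioritizer.record("happy_path", failed=False)
print(prioritizer.allocate(["boundary", "happy_path"], budget=20))
```

A higher `alpha` reacts faster to recent defects but forgets history sooner; the prior keeps categories with no data from being starved entirely.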

Reduced Maintenance Overhead: Self-healing capabilities automatically adapt tests to application changes. When UI elements shift or API responses update, AI-powered systems detect the changes and adjust test steps accordingly, reducing the manual maintenance burden that traditionally consumes a substantial portion of automation budgets.
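The core idea behind self-healing can be sketched without a browser: record several attributes of an element when the test is written, and if the primary locator stops matching, fall back to the others and update the primary. The page model, class names, and attribute set below are hypothetical; real tools typically score similarity across many more attributes instead of requiring exact matches.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Element:
    element_id: str
    text: str
    css_class: str


@dataclass
class HealingLocator:
    """Hypothetical self-healing locator: try the recorded id first, then fall
    back to other attributes captured when the test was authored."""
    element_id: str
    text: str
    css_class: str
    heal_log: list[str] = field(default_factory=list)

    def find(self, page: list[Element]) -> Optional[Element]:
        strategies = [
            ("id", lambda e: e.element_id == self.element_id),
            ("text", lambda e: e.text == self.text),
            ("css_class", lambda e: e.css_class == self.css_class),
        ]
        for name, matches in strategies:
            hits = [e for e in page if matches(e)]
            if len(hits) != 1:
                continue  # missing or ambiguous: try the next strategy
            found = hits[0]
            if name != "id":
                # Record the repair and update the primary locator for next time.
                self.heal_log.append(
                    f"id {self.element_id!r} -> {found.element_id!r} (matched by {name})"
                )
                self.element_id = found.element_id
            return found
        return None  # nothing matched unambiguously: fail instead of guessing


# After a redesign the submit button's id changed, but its text did not.
page = [
    Element("btn-submit-v2", "Submit", "primary"),
    Element("btn-cancel", "Cancel", "secondary"),
]
locator = HealingLocator("btn-submit", "Submit", "primary")
print(locator.find(page).element_id)
print(locator.heal_log)
```

Accepting only unambiguous matches is a deliberate choice: a "healed" test that clicks the wrong element is worse than one that fails visibly, and the log gives reviewers a trail of every automatic repair.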

How Do AI QA Tools Integrate Into Modern Workflows?

Effective AI QA tools operate within existing development ecosystems rather than requiring teams to abandon established processes. The integration points span the entire software delivery pipeline.

The following table illustrates how AI capabilities map to different workflow stages:

| Workflow Stage | Traditional Approach | AI-Enhanced Approach |
|---|---|---|
| Requirements Analysis | Manual review and test planning | Automated test case generation from user stories |
| Test Design | Testers write individual scenarios | AI generates comprehensive scenario sets with edge cases |
| Test Data Creation | Manual data preparation or scripted generation | Intelligent data synthesis matching real-world patterns |
| Test Execution | Scheduled batch runs | Prioritized execution based on risk and change impact |
| Results Analysis | Manual defect triage | Automated root cause analysis and defect prediction |
| Test Maintenance | Manual updates when features change | Self-healing scripts that adapt automatically |

CI/CD integration is a critical capability. AI test generation tools connect directly with Jenkins, GitHub Actions, GitLab CI, and similar platforms. When code commits trigger pipeline execution, AI systems can generate targeted tests covering the changed functionality, execute them immediately, and provide results within minutes.
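Targeting tests at changed functionality usually comes down to two steps: find the tests that touch the changed files, then order them by risk. The sketch below assumes a hypothetical test-to-path mapping and hypothetical risk scores; in a real pipeline the mapping might come from coverage data, and the changed-file list from a command such as `git diff --name-only origin/main...HEAD`.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping from each test to the source paths it exercises.
# In practice this could come from coverage data or a tool's impact analysis.
TEST_COVERAGE = {
    "test_checkout_total": ["src/cart/*.py", "src/pricing/tax.py"],
    "test_login_lockout": ["src/auth/*.py"],
    "test_currency_rounding": ["src/pricing/*.py"],
}

# Hypothetical risk scores, e.g. derived from historical failure frequency.
RISK = {
    "test_checkout_total": 0.9,
    "test_login_lockout": 0.4,
    "test_currency_rounding": 0.7,
}


def select_tests(changed_files: list[str]) -> list[str]:
    """Return tests touching any changed file, highest risk first."""
    impacted = [
        test
        for test, patterns in TEST_COVERAGE.items()
        if any(fnmatchcase(path, pattern) for path in changed_files for pattern in patterns)
    ]
    return sorted(impacted, key=lambda t: RISK.get(t, 0.0), reverse=True)


print(select_tests(["src/pricing/tax.py"]))
```

Running the highest-risk impacted tests first means the most likely failures surface earliest in the pipeline, which is what keeps feedback within minutes.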

For teams building strategic test plans in the AI era, these integrations enable continuous quality validation without manual intervention. The Gartner prediction that 90% of software engineers will orchestrate AI-driven processes by 2028 reflects this shift toward intelligent automation throughout the development lifecycle.

Teams looking to explore AI-powered test creation can start with free AI test case builders, which generate comprehensive test scenarios from user stories and requirements without extensive setup or configuration.

What Does Test Case Automation Look Like in Practice?

Real-world implementations demonstrate how test case automation powered by AI delivers measurable results across different contexts. While specific outcomes vary based on organization size, existing processes, and implementation maturity, certain patterns emerge consistently.

E-commerce Platform Migrations: Retail organizations migrating legacy systems typically face enormous test coverage requirements across product configurations, payment flows, and inventory scenarios. AI generation commonly compresses test creation timelines from months to weeks while surfacing edge cases around currency conversions, tax calculations, and regional variations that manual planning might overlook.

API Testing for Financial Services: Fintech teams using AI to generate API test cases frequently cover scenarios that would be impractical to write manually. Boundary tests for transaction amounts, authentication failure handling, timeout scenarios, and security vulnerability checks can be systematically generated rather than relying on individual tester intuition about what might go wrong.

Mobile Application Release Cycles: Development teams practicing frequent releases often struggle to maintain adequate regression coverage. AI-generated tests can reduce this burden while actually expanding scenario coverage, allowing teams to redirect effort toward exploratory testing and user experience validation where human judgment adds the most value.

Healthcare Compliance Validation: Regulatory requirements in healthcare demand exhaustive documentation and audit trails. AI test generation produces test cases with proper traceability to requirements, often improving regulatory examination readiness while reducing preparation overhead.
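Traceability of this kind is typically reported as a requirements traceability matrix. A minimal sketch, assuming each generated test case carries a list of hypothetical requirement IDs (`REQ-101` and so on): it maps requirements to tests and surfaces the two gaps auditors care about, requirements with no tests and tests that trace to no known requirement.

```python
def build_traceability(
    requirements: list[str], test_links: dict[str, list[str]]
) -> tuple[dict[str, list[str]], list[str], list[str]]:
    """Return (matrix, uncovered requirements, orphan tests)."""
    matrix: dict[str, list[str]] = {req: [] for req in requirements}
    orphans: list[str] = []
    for test_id, linked in test_links.items():
        known = [req for req in linked if req in matrix]
        for req in known:
            matrix[req].append(test_id)
        if not known:
            orphans.append(test_id)  # test traces to no known requirement
    uncovered = [req for req, tests in matrix.items() if not tests]
    return matrix, uncovered, orphans


matrix, uncovered, orphans = build_traceability(
    ["REQ-101", "REQ-102", "REQ-103"],
    {"TC-1": ["REQ-101"], "TC-2": ["REQ-101", "REQ-102"], "TC-3": ["REQ-999"]},
)
print(uncovered, orphans)
```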

These implementations share common success factors: quality input documentation, clear acceptance criteria, integration with existing tools, and human oversight of generated output.

What Challenges Should Teams Expect?

Adopting AI test case generation involves navigating predictable obstacles that organizations should anticipate and plan for.

- Input Quality Dependency: AI systems produce output proportional to input quality. Teams with sparse documentation, ambiguous requirements, or inconsistent acceptance criteria will see correspondingly inconsistent test generation results. Improving requirements documentation often delivers better ROI than tool optimization.

- Over-Reliance Risk: Treating AI output as final without human review leads to blind spots in test coverage. Experienced testers must validate generated scenarios against business logic, user behavior patterns, and domain-specific risks that AI may not fully understand.

- Integration Complexity: Connecting AI tools with existing test management platforms, CI/CD systems, and defect tracking solutions requires technical effort. Organizations should evaluate integration capabilities carefully before committing to specific tools.

- Skill Evolution Requirements: Gartner research indicates 80% of engineering teams will need upskilling to work effectively with AI through 2027. QA professionals must develop new competencies around prompt engineering, AI output validation, and strategic test orchestration.

- Cultural Resistance: Some team members view AI testing tools as threats rather than enablers. Successful implementations frame AI as augmenting human expertise rather than replacing it, emphasizing how automation frees testers for high-value activities.
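Because of the input quality dependency, some teams screen acceptance criteria before feeding them to a generator. The checker below is a hypothetical sketch: the vague-term list and the three rules are assumptions to adapt to your own requirements style guide, not a standard.

```python
import re

# Hypothetical word list; tune it to your own requirements style guide.
VAGUE_TERMS = ("fast", "user-friendly", "appropriate", "robust", "as needed", "etc")


def lint_criterion(text: str) -> list[str]:
    """Flag wording that tends to produce inconsistent AI-generated tests."""
    issues = []
    lowered = text.lower()
    for term in VAGUE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            issues.append(f"vague term: {term!r}")
    if not re.search(r"\b(should|must|shall)\b", lowered):
        issues.append("no explicit expected outcome (should/must/shall)")
    if re.search(r"\b(limit|maximum|minimum|within)\b", lowered) and not re.search(r"\d", text):
        issues.append("bound mentioned without a concrete value")
    return issues


for criterion in (
    "The page should load fast.",
    "Users must see an error message within 2 seconds.",
    "Uploads must stay within the size limit.",
):
    print(criterion, "->", lint_criterion(criterion) or "ok")
```

Checks like these are cheap to run in a pull request or backlog review, and fixing flagged criteria is often simpler than tuning the generator.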

Understanding how AI transforms software testing helps teams prepare for these challenges and develop realistic implementation roadmaps.

Frequently Asked Questions

Can AI completely replace human testers in 2026?

No. AI excels at generating comprehensive test scenarios and automating repetitive tasks, but human judgment remains essential for test planning, exploratory testing, and validating that generated tests align with actual business requirements. The most effective implementations combine AI efficiency with human expertise.

How much does AI test case generation typically cost?

Costs vary based on tool selection, team size, and usage volume. Many platforms offer free tiers for initial exploration, with paid plans scaling based on generated test case volume or team seats. ROI often turns positive within the first few months as time savings compound across multiple development cycles.

What types of testing benefit most from AI generation?

Functional testing, regression testing, and API testing see the strongest results from AI generation. These areas involve repetitive scenario patterns that AI recognizes. Exploratory testing, usability testing, and highly specialized domain testing still benefit more from human-led approaches, though AI can assist with test data preparation and scenario suggestions.

How do AI-generated tests integrate with existing test automation frameworks?

Many modern AI test generation tools output tests compatible with popular frameworks, including Selenium, Playwright, Cypress, and JUnit. Generated tests can typically run within existing CI/CD pipelines without infrastructure changes. Some platforms also support Gherkin/BDD output formats for teams practicing behavior-driven development.
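For BDD teams, the last step is rendering a generated scenario as a Gherkin feature file that Cucumber-style runners can parse. The `GeneratedTest` structure below is a hypothetical intermediate form, not any vendor's export format; the output uses the standard Gherkin keywords `Feature`, `Scenario`, `Given`, `When`, `Then`, and `And`.

```python
from dataclasses import dataclass


@dataclass
class GeneratedTest:
    """Hypothetical intermediate form for one AI-generated scenario."""
    title: str
    given: list[str]
    when: list[str]
    then: list[str]


def to_gherkin(feature: str, tests: list[GeneratedTest]) -> str:
    """Render scenarios as a Gherkin feature file."""
    lines = [f"Feature: {feature}", ""]
    for test in tests:
        lines.append(f"  Scenario: {test.title}")
        for keyword, steps in (("Given", test.given), ("When", test.when), ("Then", test.then)):
            for i, step in enumerate(steps):
                # Gherkin convention: follow-on steps of the same kind use "And".
                lines.append(f"    {keyword if i == 0 else 'And'} {step}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


print(to_gherkin("Contact form", [
    GeneratedTest(
        title="Submitting an empty form shows an error",
        given=["the contact form is open"],
        when=["the user submits the form without filling in any fields"],
        then=["an error message is displayed", "the form is not sent"],
    ),
]))
```

Keeping an intermediate structure like this separate from the output format is what lets the same generated scenario be emitted as Gherkin, a Playwright test, or a test management record.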

Start Building Smarter Test Cases Today

The transformation of test case generation through AI is one of the most impactful shifts in QA practice since automated test execution. The path forward involves starting with well-defined use cases, typically high-volume repetitive test scenarios, and expanding systematically as teams develop comfort with AI-augmented workflows. Human expertise remains essential for strategic test planning, edge case validation, and quality governance.

TestQuality's AI-powered QA platform leverages intelligent agents to drive test workflows from creation through execution and maintenance. Start your free trial and experience how AI transforms test case generation from a bottleneck into a competitive advantage.