At a Glance

Free vs. Enterprise: The 2026 Decision Framework

Not all Jira AI integrations are built the same. Most are wrappers. One is a reasoning agent.

Best Free/Hybrid Agent: TestStory.ai, with native Gherkin generation, one-click sync to TestQuality, Test Dials and Preset Packs on all plans, and 25 free credits/month for any team (500/month free for TestQuality subscribers via the TQ 500 plan). No procurement required.

Best Enterprise-Native: Xray, built on Atlassian Forge, lives entirely inside your Jira instance and meets strict data-residency compliance requirements. No free tier.

The Hidden Risk: Thin OpenAI wrappers inside Jira generate plausible-looking tests with no context awareness — inflating your test suite and compounding maintenance debt within six months.

The difference between an AI test assistant and an AI test agent is the difference between autocomplete and autonomous reasoning. Only one of them scales.

For teams searching for an AI test case generator for Jira in 2026, TestStory.ai is the top pick for free and hybrid deployments. It offers native reasoning capabilities, one-click sync to TestQuality, and 25 free credits per month for any team (500/month for TestQuality subscribers via the TQ 500 plan) with no enterprise contract required. For organizations that need strict data residency natively inside Atlassian, Xray remains the default enterprise answer.

Quick Summary: Which Path Should You Take?

Choose a Free/Hybrid Agent (like TestStory.ai) if you need immediate, context-aware test generation from Jira stories, rely on BDD frameworks, and want to eliminate the copy-paste tax between your issue tracker and test management tool — without upfront procurement.

Choose an Enterprise Legacy System (like Xray or TestRail) if you are managing a decade-old test repository, require strict on-premise data residency, or need deep integration with legacy CI/CD pipelines where custom LLM hosting is a hard regulatory requirement.

What is the Real Problem With Jira AI Test Generators?

Let's be direct: roughly half the tools currently labeled "AI Test Generators" on the Atlassian Marketplace are thin OpenAI API wrappers tied to a webhook. They read a user story's description, pass it to an LLM, and return ten generic, happy-path scenarios. That is not intelligent testing — it is expensive autocomplete.

This matters because a tool that lacks context about your actual application architecture generates tests that cover nothing of value. Teams end up spending more time reviewing, refactoring, and deleting these outputs than they would have spent writing tests from scratch. If you are navigating away from generic roundups of top AI tools for software testing in 2026, you are already asking the right question: which tools actually integrate with your Agile workflows, and which just simulate doing so?

The only way to evaluate these tools objectively is to look past the marketing claims and examine the underlying architecture.

How Do You Evaluate a Jira AI Test Generator in 2026?

Rather than running through standard feature checklists, the following five criteria reflect how these tools behave inside a real QA pipeline — and where each one breaks down under load.

Integration Architecture: Atlassian Forge vs. Bi-directional Sync

Does the tool live entirely inside Jira using Atlassian Forge, or does it operate via a bi-directional sync with an external test management platform? Native Forge apps offer stronger data residency controls, but synced platforms typically deliver better UI/UX for complex test execution and cross-project reporting.

Agentic Capability

Is the tool autocompleting text, or is it parsing acceptance criteria, checking existing coverage, and generating modular steps without human prompt engineering? As covered in the architecture breakdown of Agentic QA, a true agent operates on reasoning loops, not zero-shot text generation triggered by a button click.
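To make the contrast with a single zero-shot call concrete, here is a minimal sketch of a plan, act, verify loop in Python. It is an illustration under stated assumptions, not any vendor's implementation: `plan`, `verify`, `run`, and the `Generator` type are hypothetical names, the generator is a stand-in for whatever model call a tool makes, and the keyword check is deliberately crude.

```python
from typing import Callable, Optional

# Stand-in for a model call: takes one criterion, returns one test title.
Generator = Callable[[str], str]


def plan(acceptance_criteria: str) -> list[str]:
    """Plan: one work item per non-empty criterion line."""
    items = (line.strip(" -\t") for line in acceptance_criteria.splitlines())
    return [item for item in items if item]


def verify(criterion: str, test: str) -> bool:
    """Verify: crude check that the test mentions the criterion's longer words."""
    keywords = [w for w in criterion.lower().split() if len(w) > 4]
    return all(w in test.lower() for w in keywords)


def run(acceptance_criteria: str, generate: Generator,
        max_attempts: int = 3) -> dict[str, Optional[str]]:
    """Generate one test per criterion, keeping only output that passes verify."""
    results: dict[str, Optional[str]] = {}
    for criterion in plan(acceptance_criteria):
        results[criterion] = None
        for _ in range(max_attempts):
            candidate = generate(criterion)   # Act
            if verify(criterion, candidate):  # Verify
                results[criterion] = candidate
                break
    return results


criteria = """
- user sees an error for an expired link
- reset email arrives within five minutes
"""
accepted = run(criteria, lambda c: f"Verify that {c}")
rejected = run(criteria, lambda c: "Test case 1")
```

The point of the structure is that unverified output never reaches the results: a generator that ignores the criterion leaves that entry as `None` instead of adding a plausible-looking test.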

Gherkin Export

Can the tool natively output tests in BDD format — Given/When/Then syntax — ready for Cucumber or SpecFlow pipelines? This is a non-negotiable requirement for teams operating in behavior-driven development environments.
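As a rough illustration of what "native" Gherkin output means, the sketch below renders a structured scenario into Given/When/Then text that a Cucumber-style runner could read. The `Scenario` class, `render_feature` function, and the password-reset example are all hypothetical and are not taken from any of the tools compared here.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """One BDD scenario; the field names are illustrative."""
    name: str
    given: list[str]
    when: list[str]
    then: list[str]


def _steps(keyword: str, steps: list[str]) -> list[str]:
    # The first step takes the keyword; later steps in the group use "And".
    return [f"    {keyword if i == 0 else 'And'} {s}" for i, s in enumerate(steps)]


def render_feature(feature: str, scenarios: list[Scenario]) -> str:
    """Render scenarios as a Gherkin feature file body."""
    lines = [f"Feature: {feature}"]
    for sc in scenarios:
        lines.append("")
        lines.append(f"  Scenario: {sc.name}")
        lines += _steps("Given", sc.given)
        lines += _steps("When", sc.when)
        lines += _steps("Then", sc.then)
    return "\n".join(lines) + "\n"


expired_link = Scenario(
    name="Reject an expired password reset link",
    given=["a user has requested a password reset",
           "the reset link is older than 24 hours"],
    when=["the user opens the reset link"],
    then=["an expiry message is shown", "no password form is displayed"],
)
feature_text = render_feature("Password reset", [expired_link])
print(feature_text)
```

Output in this shape can be saved as a `.feature` file without manual reformatting, which is the practical test of whether an export is pipeline-ready.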

Hallucination Rate in Generation

How frequently does the AI invent UI elements, API endpoints, or database states that do not exist in the Jira ticket or your known application state? A tool that hallucinates technical requirements based on vague user stories is not an asset — it is a liability that plants defects inside your test suite.

Test Case Maintenance Debt

Does the tool analyze existing coverage before generating new tests, or does it blindly append dozens of redundant scenarios to every epic? Tools that prioritize volume over deduplication create a six-month time bomb inside your test repository.

The 2026 Jira AI Test Generator Comparison

Here is how the leading contenders stack up across integration architecture, pricing, agentic reasoning, and setup complexity.

| Tool | Jira Integration Type | Free Tier | Agentic Capability | Ease of Setup |
|---|---|---|---|---|
| TestStory.ai | Bi-directional Sync | 25 credits/mo (free) · 500/mo (TQ 500, free for TestQuality subscribers) | High (Reasoning) | Very Easy |
| Xray | Native (Atlassian Forge) | None (Paid Only) | Medium (Assistant) | Complex |
| TestRail | Plugin / API Sync | None (Paid Only) | Low (Template Gen) | Moderate |
| Testmo | API / Webhook | 21-Day Trial | Low (Wrapper) | Easy |

Try It Now

See the difference between a wrapper and a reasoning agent — in under 60 seconds.

Paste any Jira user story into TestStory.ai and watch it generate structured, Gherkin-formatted test cases instantly — covering happy paths, edge cases, and the failure scenarios your team would typically miss. Results sync directly into TestQuality for immediate execution and tracking. No account required to start.

No credit card required on either platform.

How Does Each Tool Perform Under Real QA Conditions?

TestStory.ai — Best Overall & Best Free Tier

TestStory.ai stands apart because it treats test generation as a workflow problem rather than a text generation problem. Backed by TestQuality, it functions as a dedicated QA agent — trained on decades of testing best practices — that understands the full lifecycle of a test suite from story to execution.

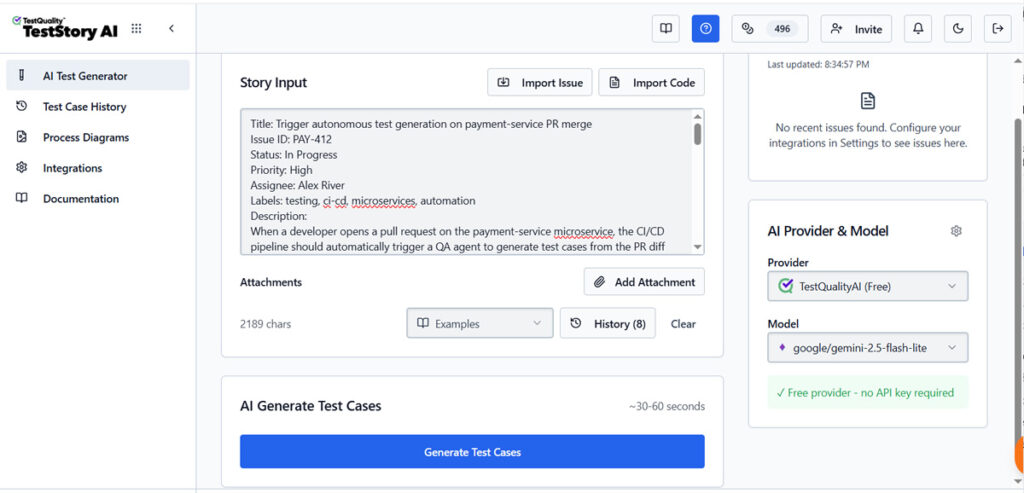

The workflow is worth walking through precisely, because it is where the architectural difference becomes tangible. TestStory accepts a broader range of inputs than any other tool in this comparison: user stories, issues, epics, source code and repos, and process diagrams (Visio, Lucidchart, PDF, PNG — including Flowcharts, Swimlanes, UML, BPMN, State Machines, and 19+ diagram types). It imports issues directly from Jira, GitHub, Linear, or ClickUp — no copy-pasting into a prompt box — and an active Story Alert system monitors those integrations for new or updated issues, notifying the team instantly so test generation stays current with the ticket, not a sprint behind.

Once a story is ingested, Test Dials let teams configure the generation strategy precisely: choose test types (Functional, Security, Performance, Accessibility, Integration, Unit), coverage depth (Basic through Exhaustive), and complexity level. Pre-built Preset Packs for Smoke, Accessibility, and Security testing are available on all plans including free, letting QA leads apply a consistent strategy without re-configuring dials for every session. This is the Plan-Act-Verify architecture described in the Agentic QA architecture guide, not a zero-shot generation call.

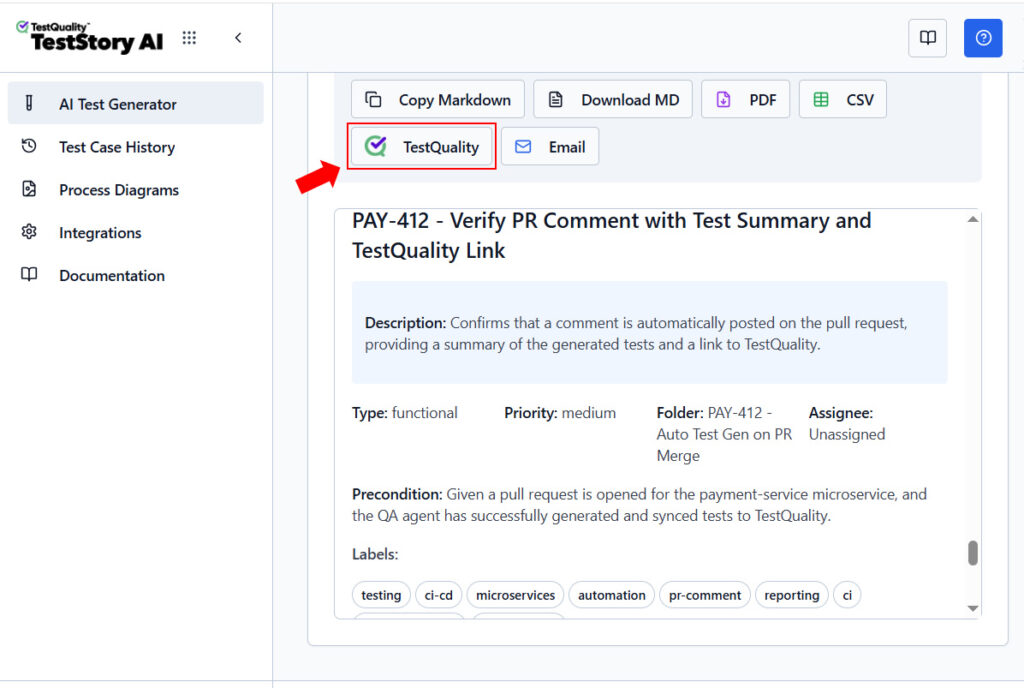

Generated tests can be exported immediately as CSV, PDF, or Markdown, shared by email, or synced to a test management platform.

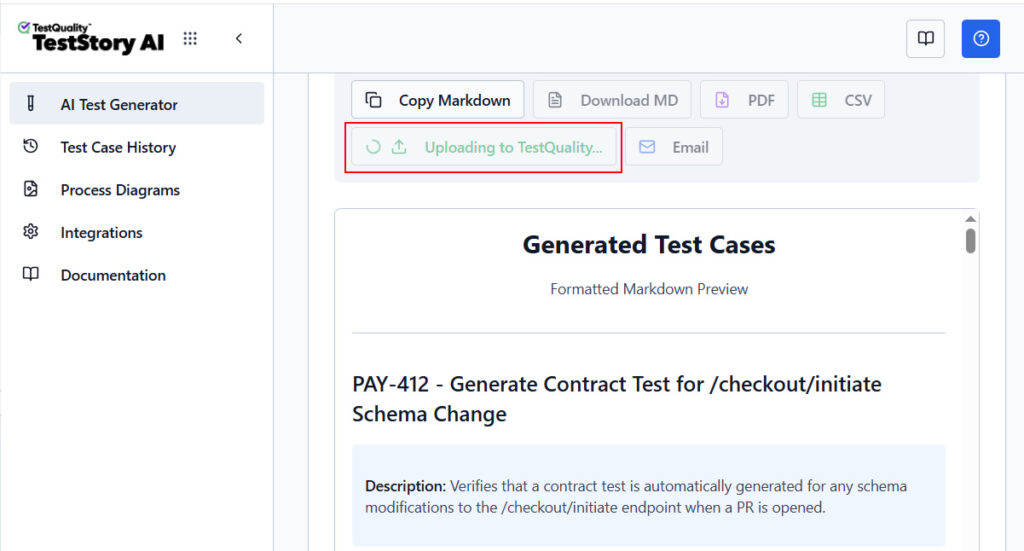

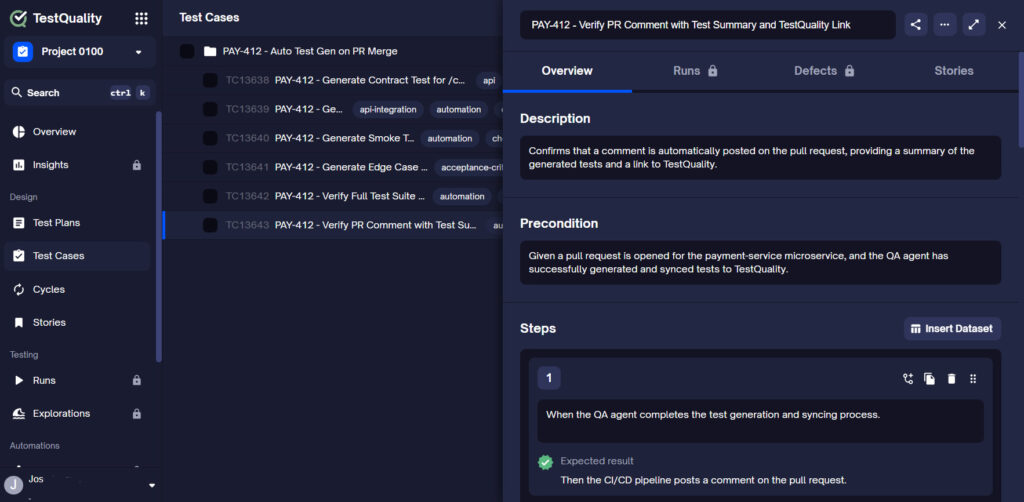

For teams using TestQuality, the sync is one click: choose your project inside TestStory and the test cases appear instantly in the TestQuality test repository.

For teams on TestRail or Zephyr, the same direct sync is available. Note that auto-sync, where every generated test is pushed to the platform automatically, requires at least the Pro 100 plan ($5/month); the free and TQ 500 plans support manual, on-demand upload.

Once tests are in TestQuality, the second half of the workflow begins. Teams organize tests into runs, cycles, and milestones, execute them manually or via CI, and track pass/fail trends through the Insights dashboard. When a test fails and a defect needs to be filed, TestQuality creates the issue and links it directly to the originating Jira ticket or GitHub Pull Request — completing a fully closed loop between story, test, execution, and defect, as documented in the TestQuality Jira integration.

The Gherkin export is production-ready throughout. It writes formatted Given/When/Then syntax that maps directly to your Cucumber or SpecFlow repositories — not freeform prose that still requires human reformatting before it reaches CI/CD.

Pricing and Plan Options

The free plan provides 25 credits/month, enough to evaluate the tool on real stories, with no credit card required. TestQuality subscribers receive the TQ 500 plan free: 500 credits/month including all Pro 500 features, activated by linking accounts via the same email. Enterprise and compliance-sensitive teams can bring their own AI provider keys (OpenAI, Anthropic Claude, OpenRouter) from the Pro 100 plan upward, keeping data within their chosen provider's policies. And for teams building AI-native development workflows, TestStory's MCP integration supports conversational test generation — "vibe testing" — directly inside Claude, Cursor, VS Code/Copilot, Antigravity, and Codex.

Xray — Best Native Enterprise Solution

Xray has long been the heavyweight in Jira-native test management. Built entirely on Atlassian Forge, it lives within your Jira instance — meaning test data never leaves the Atlassian cloud. For organizations with strict infosec requirements, that constraint alone makes Xray the default answer.

Its AI features, introduced over the past year, assist QA engineers in formulating preconditions and mapping standard test steps directly within Jira issue views. However, Xray's AI is fundamentally an assistant, not an agent: it requires manual prompting, oversight, and review at every step. There is no autonomous reasoning loop.

The other structural problem is Jira bloat. Because Xray stores tests as dedicated Jira issue types (Test, Test Set, Test Execution), aggressive AI generation rapidly inflates the database. Teams report performance sluggishness at scale — a direct consequence of using an issue tracker as a test repository without built-in deduplication. There is no free tier; enterprise marketplace pricing applies from day one.

TestRail — Built for a Different Era

TestRail remains one of the most widely used standalone test management platforms. Its Jira integration plugin allows teams to surface TestRail runs directly inside Jira issues, and a recent generative AI layer can produce test cases from ticket descriptions.

If your team is already deeply embedded in the TestRail ecosystem with years of historical test data, the new AI capabilities are a welcome modernization that avoids a full platform migration. It is also worth noting that TestStory.ai supports direct sync to TestRail — so teams can use TestStory's superior generation layer while keeping TestRail as their execution repository, without forcing a platform switch.

The limitations become apparent at scale. TestRail's AI integration feels additive rather than foundational: it was bolted onto an architecture that predates LLM-era QA. The hallucination rate increases significantly with complex, multi-system epics, where the model lacks sufficient context to avoid inventing technical requirements. Like Xray, there is no free tier.

Testmo — A Test Runner, Not a Test Agent

Testmo is a fast, highly unified test management platform praised for its modern UI and its consolidated view of manual tests, automated tests, and CI/CD pipelines. Its Jira deep-linking is solid, and teams that want a lean tool outside of Jira, with clean bidirectional references, will appreciate the setup experience.

Where it falls short is agentic depth. Testmo's AI test generation relies on standard API webhooks rather than a deeply integrated reasoning framework. It does not autonomously parse Jira user stories, check existing coverage, or architect test suites without manual direction. For teams specifically seeking autonomous generation from Jira tickets, Testmo currently lags behind TestStory.ai's built-in reasoning capabilities. Its 21-day trial — rather than a sustained free tier — also limits how thoroughly a team can evaluate it before committing.

What is Test Case Maintenance Debt and Why Does It Matter?

Test Case Maintenance Debt is one of the most consistently underestimated costs of AI test generation. The pattern is predictable: a junior QA engineer with a basic ChatGPT-style integration inside Jira generates 30 tests for a 3-point user story. Velocity charts go up. The team looks productive.

Six months later, a developer alters a core UI component and 400 autogenerated tests fail simultaneously. The team spends weeks manually reviewing failures — only to discover that the majority were poorly constructed hallucinations that never tested meaningful application behavior to begin with.

Agentic systems like TestStory.ai mitigate this at the architectural level. Before generating a new test, a reasoning agent queries the existing repository to determine whether modifying an existing test is a better approach than creating a new one. This is the difference between a tool that scales with your product and one that collapses under its own weight.
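The "update or create" decision can be sketched in a few lines of Python. This is a simplified illustration, assuming test titles are compared by plain string similarity using the standard library's `difflib`. The function names and the 0.8 threshold are hypothetical, and a production system would likely compare steps and coverage rather than titles alone.

```python
from difflib import SequenceMatcher
from typing import Optional


def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(title.lower().split())


def closest_existing(candidate: str, existing: list[str]) -> tuple[Optional[str], float]:
    """Return the most similar existing title and its similarity ratio (0.0 to 1.0)."""
    best_title, best_score = None, 0.0
    for title in existing:
        score = SequenceMatcher(None, normalize(candidate), normalize(title)).ratio()
        if score > best_score:
            best_title, best_score = title, score
    return best_title, best_score


def decide(candidate: str, existing: list[str], threshold: float = 0.8) -> str:
    """Prefer updating a near-duplicate over appending a new test."""
    match, score = closest_existing(candidate, existing)
    if match is not None and score >= threshold:
        return f"update: {match}"
    return "create"


repository = [
    "Login fails with expired password",
    "Checkout applies discount code",
]
near_duplicate = decide("Login fails with an expired password", repository)
new_test = decide("Export report as PDF", repository)
```

Even a check this simple changes the growth curve of a repository: a near-duplicate is routed to an existing test instead of becoming another entry to maintain.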

How Do Enterprise Tools Handle Hallucination Rate in Generation?

When an AI test generator integrates directly with Jira, it only knows what is in the ticket. If a product manager writes a vague user story — "Make the dashboard load faster" — a basic wrapper will hallucinate specific technical requirements to fill its prompt. It might generate a test step reading "Verify the Redis cache invalidates within 200ms" even if your stack does not use Redis.

Enterprise-grade tools must evaluate their own output quality before presenting results to the user. This is the same continuous self-correction requirement explored in the LLM regression evaluation framework: an AI test generator without a built-in evaluation layer that checks generated steps against actual Jira acceptance criteria is a liability, not an accelerator.
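A minimal sketch of such an evaluation layer, assuming a plain-text heuristic: treat quantities (such as "200ms") and capitalized names after the first word as "specifics", and flag any that do not appear in the ticket. The regular expression, function names, and heuristic are illustrative assumptions and do not describe how any tool in this comparison works.

```python
import re

# Quantities such as "200ms" or "3 seconds"; the unit list is illustrative only.
QUANTITY = re.compile(r"\b\d+(?:\.\d+)?\s?(?:ms|seconds|minutes|MB|GB)\b")


def specifics(step: str) -> set[str]:
    """Collect quantities and capitalized names (after the first word) from a step."""
    found = {m.group(0) for m in QUANTITY.finditer(step)}
    for word in step.split()[1:]:
        token = word.strip(".,;:()\"'")
        if token[:1].isupper():
            found.add(token)
    return found


def ungrounded(step: str, ticket_text: str) -> set[str]:
    """Return specifics in the step that never appear in the ticket text."""
    haystack = re.sub(r"\s+", "", ticket_text.lower())
    return {s for s in specifics(step)
            if s.lower().replace(" ", "") not in haystack}


ticket = "Make the dashboard load faster"
flagged = ungrounded("Verify the Redis cache invalidates within 200ms", ticket)
clean = ungrounded("Verify the dashboard renders", ticket)
```

With the vague dashboard story above, both "Redis" and "200ms" are flagged because the ticket mentions neither, while a step that only restates the ticket passes. A flagged step could be dropped or sent back for regeneration before anyone reviews it.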

This is precisely where thin wrapper tools fail at scale — and where reasoning agents that operate on a Plan-Act-Verify loop prove their architectural advantage.


The Conclusion

The Atlassian tooling landscape has fundamentally shifted. The category of "AI Test Generator for Jira" now spans everything from genuine reasoning agents to sophisticated-looking wrappers that generate noise at scale. The distinction matters: one category reduces your QA overhead, the other multiplies it.

For teams that need immediate, context-aware test generation without a procurement cycle, TestStory.ai's free tier and one-click Jira sync offer a genuine on-ramp. For organizations with hard compliance requirements demanding that data never leave Atlassian infrastructure, Xray remains the enterprise default — with the understanding that its AI capabilities are assistive, not autonomous.

The copy-paste tax between Jira and your test documentation is a solved problem. The only question is which tool is doing the reasoning behind the generation.

Start Free Today

Stop copying and pasting between Jira and your test docs.

TestStory.ai generates structured, Gherkin-formatted test cases from your Jira user stories and acceptance criteria — then syncs them directly into TestQuality for execution, tracking, and team collaboration. The gap between a Jira ticket and a passing test has always been a human bottleneck. In 2026, it does not have to be.

✦ Exclusive offer for TestQuality subscribers

Get 500 TestStory.ai credits every month included with your TestQuality subscription — at no extra cost. Simply create a TestStory.ai account with the same email as your TestQuality account to activate automatically. Includes all Pro 500 features.

Not a TestQuality subscriber yet? Both platforms offer free plans — sign up for TestStory.ai to generate your first test cases, then connect your free TestQuality account to sync, execute, and track them without ever leaving your workflow.
