Human testers aren't being replaced; they're being repositioned as quality strategists who guide AI-powered workflows.

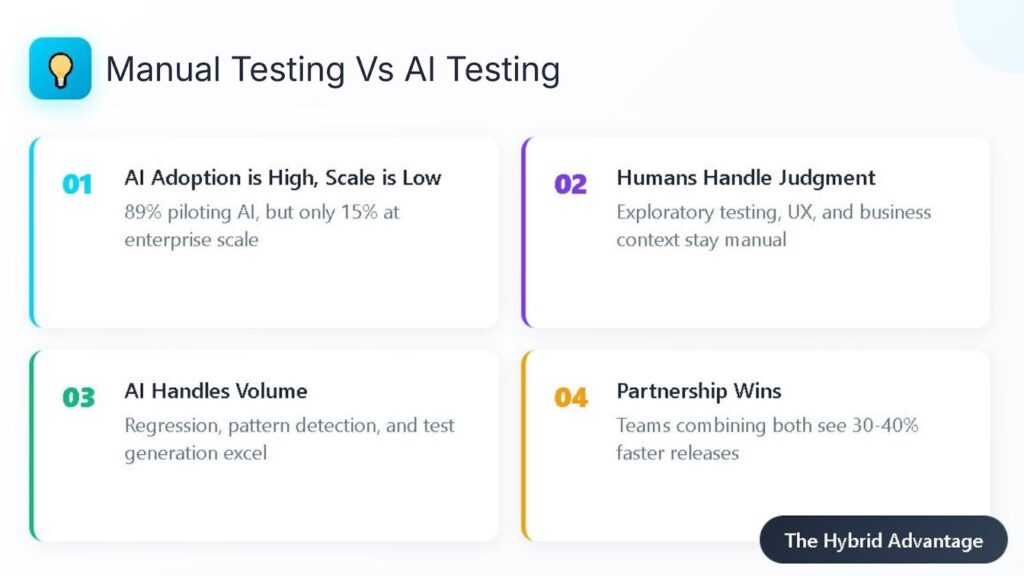

- 89% of organizations are piloting or deploying Gen AI in quality engineering, yet only 15% have achieved enterprise-scale deployment.

- AI excels at repetitive regression testing, pattern recognition, and test generation, while humans dominate exploratory testing, UX evaluation, and business context.

- The teams succeeding with manual and AI testing aren't choosing sides; they're building partnerships where AI handles volume and humans handle judgment.

Stop asking whether AI will take your job, and start asking how AI makes you the most valuable tester your organization has ever seen.

Nearly nine out of ten organizations are now actively pursuing generative AI in their quality engineering practices, yet only 15% have actually scaled it across their enterprise. That gap tells you everything you need to know about where we really stand with manual testing vs AI testing.

The anxiety is real. QA professionals watched test automation reshape the industry over the past decade, and now AI promises to automate the automation itself. But the data tells a different story than the doom-and-gloom predictions. Teams that treat AI as a replacement for human judgment are struggling. Teams that treat it as an amplifier are thriving.

The real debate isn't about replacement; it's about a shift in what test management actually requires. The role of human testers is evolving from executing test cases to building quality strategies that leverage both human insight and machine efficiency. AI in QA changes everything about how work gets done while changing nothing about why human judgment matters.

What Does Manual Testing vs AI Testing Really Mean?

Let's clear up what we're actually comparing. The phrase "manual testing vs AI testing" gets thrown around as if the two were opposing forces competing for the same territory. They're not.

Manual testing involves human testers directly interacting with software to evaluate functionality, usability, and behavior. Testers bring contextual understanding, creative thinking, and the ability to recognize when something "feels wrong" even when it technically passes all checks. They spot issues that stem from ambiguous requirements, unexpected user behaviors, and edge cases that nobody documented.

AI-driven QA encompasses machine learning systems that analyze code, generate test cases, execute automated tests, and maintain test scripts when applications change. These tools process massive datasets, identify patterns in historical defects, and operate at speeds no human team could match. By handling repetitive verification tasks, they reduce testers' cognitive load.

The real question is where each approach delivers maximum value and where their combined strengths create something neither achieves alone.

The False Binary Problem

Industry conversations often frame this issue as a competition. Will AI replace manual testers? Should teams invest in AI or hire more QA engineers? This framing misses the point entirely.

Consider how software development itself has evolved. Developers didn't stop writing code when IDEs introduced autocomplete. They didn't abandon their craft when compilers started automatically optimizing performance. Instead, these tools elevated what developers could accomplish. The same principle applies to testing. AI handles the mechanical aspects of quality assurance so human testers can focus on judgment-intensive work that actually requires human cognition.

Where Does AI in QA Actually Excel?

AI brings undeniable strengths to quality assurance workflows. Understanding these capabilities helps teams deploy AI where it delivers maximum impact rather than forcing it into roles where human judgment performs better.

Regression Testing at Scale

Regression testing consumes enormous resources in traditional QA workflows. Any code change can break existing functionality, so teams must re-verify behavior that already worked. AI in QA eases this burden by intelligently selecting which tests matter most for a specific code change.

Machine learning models analyze code dependencies, historical defect patterns, and test execution data to prioritize high-risk tests. Instead of running everything, AI-powered systems execute targeted test suites that maximize defect detection while minimizing execution time. Teams using AI in CI/CD report 30–40% faster release cycles compared to manual-heavy pipelines.
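Vendors rarely publish how their models pick tests, so the following is not any product's algorithm. It's a minimal heuristic sketch of change-based test prioritization, assuming you already have per-test coverage data (which source files each test exercises) and a historical failure rate for each test. `RegressionTest` and `prioritize` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionTest:
    name: str
    covered_files: frozenset  # source files this test exercises (assumed to come from coverage data)
    failure_rate: float       # historical fraction of runs that failed, 0.0 to 1.0


def prioritize(tests, changed_files, budget):
    """Return up to `budget` test names for a change, highest estimated risk first."""
    changed = set(changed_files)
    scored = []
    for test in tests:
        overlap = len(test.covered_files & changed)
        if overlap == 0:
            continue  # the test exercises none of the changed files, so skip it this run
        # More overlap with the change and a worse failure history both raise the score.
        score = overlap * (1.0 + test.failure_rate)
        scored.append((score, test.name))
    # Sort by score descending, then by name so ties are deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:budget]]


suite = [
    RegressionTest("test_checkout", frozenset({"cart.py", "payment.py"}), 0.10),
    RegressionTest("test_login", frozenset({"auth.py"}), 0.30),
    RegressionTest("test_refund", frozenset({"payment.py"}), 0.50),
]
print(prioritize(suite, ["payment.py"], budget=2))  # ['test_refund', 'test_checkout']
```

A real system would learn its weights from data rather than use a fixed formula, and someone still has to decide whether skipping the unselected tests is an acceptable risk.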

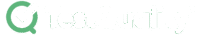

Test Generation from Requirements

Creating comprehensive test cases from user stories traditionally requires experienced testers who understand both the application domain and testing methodology. AI test generation tools now parse requirements documents and automatically produce test scenarios that cover functional paths, edge cases, and boundary conditions.

This capability doesn't eliminate the need for human review. Generated tests still require validation against business context that AI can't fully grasp. But faster test creation frees testers to focus on refining and extending coverage rather than starting from scratch.
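AI generators combine language understanding with classic test-design techniques. The sketch below shows only the deterministic part, boundary-value analysis. It assumes a requirement such as "quantity must be between 1 and 99" has already been parsed into an inclusive integer range. `boundary_cases` is a hypothetical helper, and its output is exactly the kind of draft a tester would still review.

```python
def boundary_cases(field, low, high):
    """Boundary-value analysis for a requirement like "field must be between low and high" (inclusive)."""
    if low > high:
        raise ValueError("low must not exceed high")
    candidates = [low - 1, low, low + 1, high - 1, high, high + 1]
    cases, seen = [], set()
    for value in candidates:
        if value in seen:
            continue  # narrow ranges (e.g. low == high) would otherwise repeat values
        seen.add(value)
        cases.append({"field": field, "value": value, "expect_valid": low <= value <= high})
    return cases


for case in boundary_cases("quantity", 1, 99):
    print(case)
```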

Executive Summary

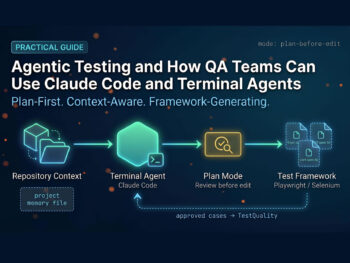

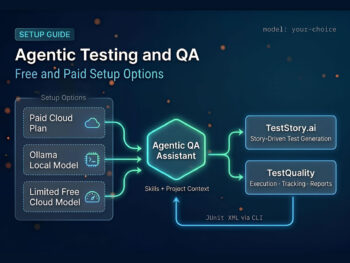

The Shift from Legacy "AI-Assist" to Agentic QA

Stop debugging scripts; start managing agents.

If your QA strategy in 2026 relies on writing prompts to generate brittle code, you are simply trading manual test writing for manual test maintenance. The industry is rapidly pivoting away from legacy AI-assist tools, which act as glorified autocomplete engines, toward Agentic QA.

Evaluators must recognize the critical difference: legacy test management forces QA teams to act as manual translation layers. Even first-generation "AI copilots" require constant prompt engineering, manual mapping to Gherkin criteria, and babysitting to ensure requirements trace back to Jira or GitHub. Agentic platforms like TestStory.ai bypass this entirely. They don't just autocomplete steps; they ingest your system context to architect, generate, and map production-ready test suites autonomously in under 30 seconds.

The Agentic Benchmark: Why TestStory.ai Replaces Generic AI Generators

Most AI test case generators are structurally "dumb." They lack specific control mechanisms, resulting in bloated, un-executable test suites. We built TestStory.ai to solve the engineering friction points of test design and coverage validation:

Deep Context Extraction (Zero-Prompting): TestStory natively connects to Jira, GitHub, and Linear. It ingests your Epics, User Stories, and PRs, translating them into comprehensive test cases instantly.

Diagram-to-Test Autonomy: Don't write requirements if you already have the architecture mapped. TestStory autonomously parses complex process diagrams (Visio, Lucidchart, PNG, PDF), understanding UML, BPMN, ERD, and System Architecture to instantly map out state transitions, edge cases, and strict acceptance criteria.

Precision Control via "Test Dials": We replaced generic prompt engineering with deterministic controls. Engineers use "Test Dials" and reusable "Preset Packs" to rigidly define test scope, target audiences, and specific test types (e.g., Smoke, Regression, Integration), ensuring strict alignment with your existing QA strategy.

IDE & Dev Workflow Native (MCP Integration): TestStory's MCP architecture plugs the agent directly into your development environment. Trigger TestStory's QA logic natively inside Claude, Cursor, and VSCode/Copilot, passing the output seamlessly into test management systems like TestQuality.

Enterprise Data Sovereignty: Your proprietary logic is never used to train our base models. TestStory allows you to utilize your own AI provider keys, ensuring strict compliance and zero data leakage.

The benchmark for 2026 isn't how fast an AI can write a script; it's how much test maintenance debt the agent eliminates.


Self-Healing Test Maintenance

Traditional automated test suites become fragile as applications evolve. A renamed button, relocated element, or modified workflow structure breaks tests that worked perfectly yesterday. Maintenance overhead often consumes more time than test creation itself.

AI-powered self-healing capabilities recognize UI changes and automatically update locators and test steps. When a button class changes from "submit-btn" to "action-submit," intelligent systems detect the visual and contextual similarity and adapt without human intervention. This capability maintains test suite validity while reducing the maintenance burden.
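Vendors don't publish their healing algorithms, so this is a hypothetical sketch. Elements are plain dictionaries rather than real DOM nodes, Python's standard-library `difflib.SequenceMatcher` stands in for "visual and contextual similarity," and the 0.4/0.6 weights and 0.6 threshold are arbitrary assumptions. It reuses the `submit-btn` to `action-submit` rename described above.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Ratio in [0, 1] of how alike two strings are."""
    return SequenceMatcher(None, a, b).ratio()


def find_element(elements, locator, threshold=0.6):
    """Return (element, healed). `healed` is True when a fallback match replaced the stored locator."""
    # 1. Try the stored locator exactly as recorded.
    for el in elements:
        if el["tag"] == locator["tag"] and el["class"] == locator["class"]:
            return el, False
    # 2. Fall back to the most similar element with the same tag.
    best, best_score = None, 0.0
    for el in elements:
        if el["tag"] != locator["tag"]:
            continue
        # Visible text tends to survive refactors better than class names, so weight it higher.
        score = 0.4 * similarity(el["class"], locator["class"]) + 0.6 * similarity(el["text"], locator["text"])
        if score > best_score:
            best, best_score = el, score
    if best is not None and best_score >= threshold:
        return best, True
    return None, False


page = [
    {"tag": "button", "class": "action-submit", "text": "Submit order"},
    {"tag": "button", "class": "cancel-btn", "text": "Cancel"},
]
stored = {"tag": "button", "class": "submit-btn", "text": "Submit order"}
element, healed = find_element(page, stored)
print(element["class"], healed)  # action-submit True
```

Returning the `healed` flag instead of silently rewriting the test leaves room for a human to confirm the new match before it's saved.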

What Are the Real Manual QA Limitations AI Solves?

Acknowledging manual QA limitations isn't an attack on human testers. It's recognizing that certain tasks drain human energy without adding commensurate value. Automating those tasks creates space for testers to do work that actually requires their expertise.

Speed and Scale Constraints

Human testers work at human speed. This limitation matters as development cycles accelerate and release frequencies multiply. Teams practicing continuous deployment need testing that matches their velocity, and manual execution can't keep pace with multiple daily releases.

AI extends testing capacity without proportionally expanding headcount. Automated systems execute tests across browsers, devices, and configurations simultaneously. What would require an army of manual testers completes in minutes through parallel automated execution.
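Real test runners and device grids handle this fan-out for you. The sketch below only illustrates the pattern using the standard library's `concurrent.futures`. `run_matrix` and `fake_run` are hypothetical names, and `fake_run` simulates a browser session (including one pretend failure) instead of launching one.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def run_matrix(run_one, browsers, viewports, max_workers=4):
    """Run `run_one(browser, viewport)` for every combination in parallel; return {config: passed}."""
    configs = list(product(browsers, viewports))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order, so outcomes line up with configs.
        outcomes = list(pool.map(lambda cfg: run_one(*cfg), configs))
    return dict(zip(configs, outcomes))


def fake_run(browser, viewport):
    """Hypothetical stand-in for a real browser session; pretends one combination fails."""
    return not (browser == "webkit" and viewport == "mobile")


results = run_matrix(fake_run, ["chromium", "firefox", "webkit"], ["desktop", "mobile"])
failures = [cfg for cfg, passed in results.items() if not passed]
print(f"{len(results)} configurations, failures: {failures}")
```

Threads suit this case because real browser sessions spend most of their time waiting on I/O, so the wall-clock time approaches that of the slowest single configuration rather than the sum of all of them.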

Consistency Across Repetition

Humans make mistakes. Fatigue sets in during repetitive tasks. Attention wanders. These biological realities affect everyone who performs monotonous work over extended periods.

Automated systems maintain perfect consistency regardless of how many times they execute the same test. They don't skip steps when tired or make assumptions based on previous runs. For verification tasks that require identical execution every time, automation delivers reliability that manual testing can't match.

Pattern Recognition Across Data Sets

Analyzing test results, defect histories, and code coverage reports for patterns exceeds human cognitive capacity when dealing with large-scale applications. AI systems process these datasets and identify correlations that would take human analysts weeks or months to discover.

Predictive defect detection uses historical data to forecast which code areas are most likely to contain bugs. This intelligence guides testing focus toward high-risk components rather than spreading effort uniformly across the codebase.
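Production tools usually train a classifier on this kind of history. To keep the idea visible, the sketch below substitutes a simple hand-weighted score built from two signals commonly associated with defect-prone code: past defect density and recent churn. `ModuleHistory`, `risk_ranking`, and the `churn_weight` of 0.5 are assumptions, not a published model.

```python
from dataclasses import dataclass


@dataclass
class ModuleHistory:
    name: str
    past_defects: int    # defects traced to this module in earlier releases
    recent_commits: int  # churn during the current release window
    kloc: float          # size in thousands of lines of code


def risk_ranking(modules, churn_weight=0.5):
    """Order modules from most to least likely to contain new defects (hand-weighted heuristic)."""
    def score(m):
        density = m.past_defects / max(m.kloc, 0.1)  # guard against division by zero for tiny modules
        return density + churn_weight * m.recent_commits
    return [m.name for m in sorted(modules, key=score, reverse=True)]


history = [
    ModuleHistory("billing", past_defects=12, recent_commits=8, kloc=4.0),
    ModuleHistory("search", past_defects=3, recent_commits=2, kloc=6.0),
    ModuleHistory("auth", past_defects=5, recent_commits=12, kloc=2.5),
]
print(risk_ranking(history))  # ['auth', 'billing', 'search']
```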

Where Does AI Software QA Fall Short?

Here's where the honest conversation begins. AI software for QA has real limitations that prevent it from replacing human judgment. Recognizing these gaps explains why the hybrid approach succeeds where pure automation fails.

The following table breaks down where AI struggles and where human testers excel:

| Testing Dimension | AI Limitation | Human Advantage |
| --- | --- | --- |
| Exploratory Testing | Can't follow hunches or pursue unexpected observations | Discovers issues through creative investigation and intuition |
| Business Context | Analyzes technical specifications without understanding business implications | Translates technical findings into business risk assessments |
| User Experience Evaluation | Verifies functional correctness without judging usability | Recognizes confusing flows, emotional friction, and accessibility gaps |
| Requirements Ambiguity | Fails when specifications are incomplete or contradictory | Interprets intent and makes reasonable assumptions |
| Novel Scenarios | Limited to patterns learned from training data | Imagines unprecedented failure modes and attack vectors |
| Ethical Considerations | Can't evaluate fairness, bias, or societal implications | Applies moral judgment to algorithmic decisions |

The Context Problem

AI systems operate within boundaries defined by their training data and programmed logic. They excel at recognizing patterns they've encountered before and struggle with genuinely novel situations. Software applications constantly introduce new features, workflows, and integrations that AI hasn't seen.

A human tester working on a healthcare application understands HIPAA compliance requirements, clinical workflow expectations, and patient safety considerations. This domain knowledge informs testing decisions in ways that generalized AI models can't replicate. The tester knows which edge cases matter most because they understand the real-world consequences of failure.

The Creativity Gap

Exploratory testing requires creativity, intuition, and the ability to follow interesting threads wherever they lead. It's the testing equivalent of investigative journalism, starting with curiosity and pursuing unexpected discoveries.

AI cannot explore. It executes predefined paths with exceptional reliability but lacks the cognitive flexibility to wonder "what happens if I try this?" Human testers bring the imaginative capacity that uncovers issues nobody anticipated. They think like users, malicious actors, and confused first-time visitors simultaneously.

The Emotional Intelligence Requirement

Can an algorithm tell when a checkout page feels unintuitive? Can it evaluate emotional friction in a user journey? These judgments require empathy for human users and an understanding of psychological responses, and AI fundamentally has neither.

User experience testing remains firmly in the human domain. A button that functions correctly may still frustrate users through poor placement, confusing labels, or unexpected behavior. Human testers recognize these issues because they experience software as humans themselves.

Why Human Testers Are More Essential Than Ever

Here's the counterintuitive truth about manual testing vs AI testing: AI's rise makes human testers more valuable, not less. The responsibilities shift, the skill requirements evolve, but the need for human judgment intensifies.

5 Reasons Human Testers Matter More in AI-Driven Workflows

1. AI-Generated Code Requires Human Validation

Development teams increasingly use AI coding assistants that produce code at unprecedented speeds. This code requires validation. Human-in-the-loop testing ensures that AI-generated code and unit tests actually work as intended before reaching production. Without human oversight, AI-generated defects propagate unchecked through systems.

2. Quality Strategy Requires Human Architects

Someone must decide what to test, how deeply to test it, and what trade-offs to accept. AI executes testing efficiently but can't define the testing strategy. Human testers evolve into quality architects who design testing approaches, evaluate AI recommendations, and make judgment calls about acceptable risk.

3. AI Outputs Require Validation

AI generates test cases, suggests optimizations, and identifies potential issues. Every output requires human review. The tester who blindly accepts AI recommendations without critical evaluation introduces risk rather than reducing it. Understanding how to evaluate AI suggestions becomes a core competency.

4. Cross-Functional Communication Demands Human Skills

Translating test results into business impact requires human judgment and communication skills. AI produces data; humans interpret its significance. Stakeholders need someone who can explain what testing revealed, why it matters, and what decisions follow. That role can't be automated.

5. Continuous Improvement Requires Human Insight

Testing processes improve through reflection, experimentation, and learning from mistakes. Humans identify workflow inefficiencies, propose process improvements, and adapt approaches based on experience. AI optimizes within defined parameters but doesn't question whether those parameters make sense.

How Do You Build a Human-AI Testing Partnership?

Moving from theory to practice requires deliberate choices about where AI fits into your testing workflows and how human testers adapt their responsibilities.

Start with High-Volume, Low-Judgment Tasks

Identify testing activities that consume time without requiring deep analysis. Regression testing, smoke tests, and repetitive verification tasks make excellent candidates for AI automation. These activities benefit from AI's speed and consistency while freeing human testers for work that demands their cognitive strengths.

Avoid automating tasks that require business judgment, creative exploration, or contextual understanding. AI handles "Did this function execute correctly?" Human testers handle "Does this feature actually solve the user's problem?"

Implement Human-in-the-Loop Workflows

Structure your testing process so that AI generates and humans validate. When AI produces test cases from requirements, experienced testers review outputs for completeness, relevance, and business context alignment. When AI identifies potential defects, human judgment determines severity and priority.

This approach captures AI's efficiency advantages while maintaining quality standards that require human oversight. Understanding how AI transforms testing workflows helps teams effectively implement these partnerships.
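One way to enforce "AI generates, humans validate" is to make human approval a hard gate between generated tests and the executable suite. The classes below are a hypothetical, tool-agnostic sketch. In practice this state would live in your test management system rather than in memory.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class GeneratedTest:
    title: str
    steps: list
    status: Status = Status.PENDING
    reviewer_note: str = ""


@dataclass
class ReviewQueue:
    items: list = field(default_factory=list)

    def submit(self, test):
        """Called by the AI generator: every new test starts as PENDING."""
        self.items.append(test)

    def review(self, title, approve, note=""):
        """Called by a human tester: record the decision and the reasoning behind it."""
        for test in self.items:
            if test.title == title and test.status is Status.PENDING:
                test.status = Status.APPROVED if approve else Status.REJECTED
                test.reviewer_note = note
                return test
        raise KeyError(f"no pending test titled {title!r}")

    def approved_suite(self):
        """Only human-approved tests reach the executable suite."""
        return [t for t in self.items if t.status is Status.APPROVED]


queue = ReviewQueue()
queue.submit(GeneratedTest("Checkout with valid card", ["add item", "pay", "see confirmation"]))
queue.submit(GeneratedTest("Checkout with expired card", ["add item", "pay with expired card"]))
queue.review("Checkout with valid card", approve=True)
queue.review("Checkout with expired card", approve=False, note="missing expected error message")
print([t.title for t in queue.approved_suite()])
```

Keeping the rejection note gives you a record of where generated tests fall short, which is useful input for improving future generations.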

Invest in Tester Evolution

The testers who thrive in AI-augmented environments develop new capabilities. Technical skills around AI tools, data analysis, and automation complement traditional testing expertise. Strategic thinking about quality goals, risk assessment, and process optimization becomes increasingly important.

Support this evolution through training, mentorship, and gradual responsibility expansion. Teams that invest in their testers' growth retain talent that might otherwise feel threatened by AI's emergence.

Measure What Matters

Track metrics that reflect the combined value of human-AI testing partnerships. Defect escape rates, mean time to detection, test coverage effectiveness, and release quality indicators matter more than simple efficiency measurements. AI can run more tests faster, but will those tests actually improve software quality?
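Teams define these metrics slightly differently, so treat the formulas below as one common convention rather than a standard. Escape rate is production defects divided by all defects found. Mean time to detection is the average gap between a defect's introduction (for example, the commit that caused it) and its discovery. The function names and sample data are illustrative.

```python
from datetime import datetime, timedelta


def defect_escape_rate(found_before_release, found_in_production):
    """Share of all known defects that reached production (lower is better)."""
    total = found_before_release + found_in_production
    return found_in_production / total if total else 0.0


def mean_time_to_detection(defects):
    """Average gap between when each defect was introduced and when it was detected."""
    if not defects:
        raise ValueError("need at least one defect")
    total = sum((detected - introduced for introduced, detected in defects), timedelta())
    return total / len(defects)


print(defect_escape_rate(found_before_release=45, found_in_production=5))  # 0.1
mttd = mean_time_to_detection([
    (datetime(2025, 3, 1, 9, 0), datetime(2025, 3, 1, 11, 0)),  # caught after 2 hours
    (datetime(2025, 3, 2, 9, 0), datetime(2025, 3, 2, 15, 0)),  # caught after 6 hours
])
print(mttd)  # 4:00:00
```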

Establish feedback loops that capture human insights about AI performance. When AI recommendations miss important scenarios, document the gap to improve future outputs. Continuous learning applies to the human-AI partnership as much as to individual team members.

FAQ

Will AI completely replace manual testers in the next five years? Current research suggests it won't. A 2025 poll of testing professionals found 45% believe manual testing is irreplaceable, while 28% predict only partial replacement. AI lacks the creativity, contextual understanding, and emotional intelligence that human testers bring to exploratory testing, UX evaluation, and business logic validation. The trajectory points toward partnership, not replacement.

What skills should manual testers develop to stay relevant in AI-driven QA? Focus on skills that AI can't replicate: strategic thinking about quality goals, domain expertise in your application area, communication abilities that translate technical findings into business impact, and exploratory testing creativity. Complement these with proficiency in AI tools, data analysis capabilities, and understanding of automation orchestration. The combination of human judgment skills and AI literacy creates maximum career value.

How do I decide which tests to automate with AI vs. keep manual? Automate tests that are repetitive, high-volume, and judgment-light. Regression testing, smoke tests, and data-driven verification are strong candidates. Keep manual testing for exploratory work, UX evaluation, business logic validation, and scenarios requiring contextual interpretation. When in doubt, ask: Does this test require understanding why something matters to users, or just verifying that it functions correctly? The former stays manual; the latter automates well.
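The rule of thumb above can be written down as an explicit checklist, which makes triage decisions easier to discuss and revisit. This is a hypothetical sketch: the profile fields and the four-runs-per-month threshold are assumptions to tune, not an industry standard.

```python
from dataclasses import dataclass


@dataclass
class CheckProfile:
    runs_per_month: int
    deterministic: bool           # same inputs always produce the same expected result
    needs_user_judgment: bool     # usability, "does this feel right?"
    needs_business_context: bool  # interpreting intent or domain-specific risk


def recommend(profile, min_runs_per_month=4):
    """Encode the automate-vs-manual rule of thumb; the run-count threshold is an assumption."""
    if profile.needs_user_judgment or profile.needs_business_context:
        return "manual"    # the check asks *why* something matters to users
    if profile.deterministic and profile.runs_per_month >= min_runs_per_month:
        return "automate"  # repetitive, high-volume, judgment-light verification
    return "manual"        # rare or non-deterministic checks may not repay automation yet


print(recommend(CheckProfile(60, True, False, False)))  # automate
print(recommend(CheckProfile(60, True, True, False)))   # manual
```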

Your Move: Embrace the Evolution

The debate over manual testing vs AI testing misses what actually matters. Neither approach alone delivers the quality outcomes modern software demands. The winning strategy combines AI's processing power with human judgment, creating testing workflows that exceed what either achieves independently.

Organizations report an average productivity boost of 19% from AI integration in quality engineering. But one-third see minimal gains, highlighting that implementation matters more than adoption. Success requires thoughtful integration that respects both AI capabilities and human expertise.

TestQuality's AI-powered QA platform brings together human testing expertise and intelligent automation in workflows designed for modern development velocity. With QA Agents that assist throughout the testing lifecycle and seamless integration with GitHub and Jira, teams can build the human-AI partnership that drives quality for both human-written and AI-generated code. Start your free trial and see how the right platform accelerates your evolution from tester to quality strategist.