- AI testing tools have shifted from optional productivity boosters to essential components of modern QA workflows.

- Self-healing tests, natural language test creation, and agentic AI are reshaping how teams approach test automation.

- The explosion of AI-generated code, which contains roughly 1.7x more defects than human-written code, makes robust AI-powered quality assurance vital.

- Choosing the right AI testing tools depends on your tech stack, team expertise, and whether you prioritize test creation, maintenance, or execution.

- Enterprise adoption of AI-augmented testing is accelerating, with the vast majority expected to integrate these tools by 2027.

If your team still relies on legacy test automation, 2026 is the year to evaluate AI-native alternatives before your competitors leave you behind.

AI testing tools have moved from experimental features to mission-critical infrastructure, changing how QA teams create, maintain, and execute tests.

Development cycles have compressed to the point where manual testing can't keep pace. The rise of AI-generated code has also introduced new quality challenges that demand equally sophisticated test management solutions. Teams are discovering that AI doesn't just write code faster; it also introduces bugs faster. AI-generated pull requests contain approximately 1.7 times more issues than human-written ones on average. Intelligent test automation is therefore a requirement, not a luxury.

This guide breaks down the essential AI capabilities for software testing in 2026, complete with use cases and considerations for building a future-ready QA strategy.

Executive Summary

The Shift from Legacy "AI-Assist" to Agentic QA

Stop debugging scripts; start managing agents.

If your QA strategy in 2026 relies on writing prompts to generate brittle code, you are simply trading manual test writing for manual test maintenance. The industry is rapidly pivoting away from legacy AI-assist tools, which act as glorified autocomplete engines, toward Agentic QA.

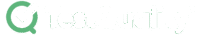

Evaluators must recognize the critical difference: legacy test management forces QA teams to act as manual translation layers. Even first-generation "AI copilots" require constant prompt engineering, manual mapping to Gherkin criteria, and babysitting to ensure requirements trace back to Jira or GitHub. Agentic platforms like TestStory.ai bypass this entirely. They don't just autocomplete steps; they ingest your system context to architect, generate, and map production-ready test suites autonomously in under 30 seconds.

The Agentic Benchmark: Why TestStory.ai Replaces Generic AI Generators

Most AI test case generators are structurally "dumb." They lack specific control mechanisms, resulting in bloated, un-executable test suites. We built TestStory.ai to solve the engineering friction points of test design and coverage validation:

Deep Context Extraction (Zero-Prompting): TestStory natively connects to Jira, GitHub, and Linear. It ingests your Epics, User Stories, and PRs, translating them into comprehensive test cases instantly.

Diagram-to-Test Autonomy: Don't write requirements if you already have the architecture mapped. TestStory autonomously parses complex process diagrams (Visio, Lucidchart, PNG, PDF), understanding UML, BPMN, ERD, and System Architecture to instantly map out state transitions, edge cases, and strict acceptance criteria.

Precision Control via "Test Dials": We replaced generic prompt engineering with deterministic controls. Engineers use "Test Dials" and reusable "Preset Packs" to rigidly define test scope, target audiences, and specific test types (e.g., Smoke, Regression, Integration), ensuring strict alignment with your existing QA strategy.

IDE & Dev Workflow Native (MCP Integration): TestStory's MCP architecture plugs the agent directly into your development environment. Trigger TestStory's QA logic natively inside Claude, Cursor, and VS Code/Copilot, passing the output seamlessly into test management systems like TestQuality.

Enterprise Data Sovereignty: Your proprietary logic is never used to train our base models. TestStory allows you to utilize your own AI provider keys, ensuring strict compliance and zero data leakage.

The benchmark for 2026 isn't how fast an AI can write a script; it's how much test maintenance debt the agent eliminates.


Why Are AI Testing Tools Essential in 2026?

The argument for AI software testing goes beyond efficiency metrics. While speed matters, the real value lies in what these tools enable that was previously impossible or impractical.

Traditional test automation required deep technical expertise and significant time investment. Writing maintainable test scripts, handling element locators, and managing the inevitable maintenance burden consumed resources that could be directed toward exploratory testing and strategic quality decisions. AI-powered platforms automate the tedious parts while augmenting human judgment where it matters most.

Consider the maintenance problem. UI changes historically broke automated tests at scale, creating backlogs that demoralized QA teams and eroded confidence in test suites. Intelligent test automation platforms use machine learning to automatically adapt tests when application interfaces change. Self-healing capabilities that seemed futuristic in 2023 are now table stakes for serious test automation investments.

The quality stakes have also increased. As organizations push AI-generated code into production at unprecedented rates, the need for comprehensive, rapid test coverage has intensified. Software testing benefits multiply when AI tools can generate test cases from requirements in seconds rather than hours.

What Categories of AI Testing Capabilities Should You Know?

Understanding the categories of AI testing tools helps clarify which capabilities match your needs. Modern platforms combine multiple approaches, but knowing the distinctions helps you evaluate what matters most for your workflow.

AI-Powered Test Case Generation

The most impactful AI capability for many teams is automatically generating test cases from requirements, user stories, or feature descriptions. Rather than manually translating specifications into test scenarios, AI analyzes inputs and produces comprehensive test coverage in seconds.

This capability transforms the relationship between requirements and testing. When product managers update a user story, AI can immediately generate corresponding test cases that reflect the changes. Teams struggling with test coverage gaps or facing compressed timelines see dramatic improvements. The technology has matured to the point where AI-generated tests rival human-created ones in quality while taking a fraction of the time.

Advanced systems understand context, identify edge cases humans might overlook, and output tests in formats ready for execution. The best implementations support multiple output formats, including Gherkin/BDD syntax, making generated tests immediately usable in behavior-driven development workflows.

Self-Healing Test Automation

Self-healing platforms use AI to detect when UI elements change and automatically update test scripts to maintain functionality.

The technology works by understanding elements through multiple attributes rather than single locators. When a button's ID changes but its text, position, and surrounding context remain consistent, self-healing AI recognizes the element and adjusts the test accordingly. Organizations with frequently changing interfaces or microservices architectures see the highest returns from this capability.
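The multi-attribute matching described above can be sketched in a few lines. This is a minimal illustration, not how any particular platform implements it: the `Element` model, the `WEIGHTS` values, and the `heal_locator` helper are all hypothetical, and a hand-tuned weighted score stands in for the learned model a real tool would use.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Element:
    """Simplified snapshot of a UI element (hypothetical model)."""
    element_id: str
    text: str
    tag: str
    position: Tuple[int, int]  # (x, y) in pixels


# Hypothetical weights: how much each attribute contributes to a match.
WEIGHTS = {"element_id": 0.4, "text": 0.3, "tag": 0.1, "position": 0.2}


def match_score(expected: Element, candidate: Element, max_shift: int = 50) -> float:
    """Return a similarity score between 0 and 1 across several attributes."""
    score = 0.0
    if expected.element_id == candidate.element_id:
        score += WEIGHTS["element_id"]
    if expected.text == candidate.text:
        score += WEIGHTS["text"]
    if expected.tag == candidate.tag:
        score += WEIGHTS["tag"]
    dx = abs(expected.position[0] - candidate.position[0])
    dy = abs(expected.position[1] - candidate.position[1])
    if dx <= max_shift and dy <= max_shift:
        score += WEIGHTS["position"]
    return score


def heal_locator(expected: Element, page: List[Element],
                 threshold: float = 0.5) -> Optional[Element]:
    """Pick the best-matching element on the page, or None if nothing is close enough."""
    best = max(page, key=lambda el: match_score(expected, el), default=None)
    if best is not None and match_score(expected, best) >= threshold:
        return best
    return None
```

If a button's ID changes from `btn-submit` to `btn-checkout-v2` while its text, tag, and approximate position stay the same, the candidate still clears the threshold on the remaining attributes, so the test can be repointed instead of failing. Returning `None` below the threshold keeps the sketch from "healing" onto an unrelated element.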

QA Agents and Autonomous Testing

The newest and most transformative category involves AI agents that proactively assist testers throughout the entire workflow. These QA Agents actively participate in test planning, creation, execution, and maintenance.

Unlike traditional automation that follows predefined scripts, agentic AI can independently explore applications, identify test scenarios, and execute validation based on high-level goals. A tester might instruct an agent to "verify the checkout flow handles edge cases" and receive comprehensive test coverage without specifying every step.

This shift from passive tools to active collaborators represents the future of AI test automation. Teams adopting agentic approaches report that AI handles routine coverage while humans focus on strategic quality decisions and complex exploratory testing.
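To make goal-driven exploration more concrete, here is a deliberately simple sketch. It assumes the application can be modelled as a state graph, which is a strong simplification: the `CHECKOUT_FLOW` graph, its state and action names, and the `explore_paths` helper are all hypothetical, and a bounded breadth-first search stands in for whatever planning a real agent performs.

```python
from collections import deque

# Hypothetical model of a checkout flow: state -> {action: next_state}.
CHECKOUT_FLOW = {
    "cart": {"proceed": "shipping", "empty_cart": "cart_empty"},
    "shipping": {"valid_address": "payment", "invalid_address": "shipping_error"},
    "shipping_error": {"fix_address": "shipping"},
    "payment": {"card_accepted": "confirmation", "card_declined": "payment_error"},
    "payment_error": {"retry": "payment"},
    "cart_empty": {},      # terminal state
    "confirmation": {},    # terminal state
}


def explore_paths(flow, start, max_steps=6):
    """Enumerate action sequences from `start` that end in a terminal state.

    The search is breadth-first, so shorter paths are found first, and
    `max_steps` bounds the loops through the error-and-retry states.
    """
    paths = []
    queue = deque([(start, [])])
    while queue:
        state, actions = queue.popleft()
        transitions = flow[state]
        if not transitions:
            # Terminal state reached: record the path as a candidate test scenario.
            paths.append((actions, state))
            continue
        if len(actions) >= max_steps:
            continue  # give up on paths that loop for too long
        for action, next_state in transitions.items():
            queue.append((next_state, actions + [action]))
    return paths
```

Each returned path is a candidate scenario, including edge cases such as a declined card followed by a successful retry, without anyone listing the steps by hand.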

Visual AI Testing

Visual testing tools compare screenshots across browsers, devices, and releases to detect unintended UI changes. AI algorithms distinguish between acceptable rendering differences and actual defects, reducing false positives that waste human review time.

The technology excels at catching issues that functional tests miss entirely. A button that works correctly but renders in the wrong color, or text that overflows its container on certain screen sizes, gets flagged automatically. Teams where visual consistency directly impacts user experience and brand perception see significant value from visual AI capabilities.
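The idea of separating acceptable rendering noise from real defects can be illustrated with a plain tolerance-based comparison. This is not the AI classification that visual testing tools use; it is a simple pixel threshold standing in for it. Screenshots are represented as nested lists of RGB tuples, and the function names and default thresholds are assumptions made for the example.

```python
def changed_ratio(baseline, candidate, channel_tolerance=10):
    """Return the fraction of pixels that differ by more than the per-channel tolerance."""
    same_shape = len(baseline) == len(candidate) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("screenshots must have the same dimensions")

    total = 0
    changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for px_a, px_b in zip(row_a, row_b):
            total += 1
            # Small per-channel differences (e.g. anti-aliasing) are ignored.
            if any(abs(a - b) > channel_tolerance for a, b in zip(px_a, px_b)):
                changed += 1
    return changed / total if total else 0.0


def is_visual_regression(baseline, candidate, max_changed_ratio=0.01):
    """Flag the candidate when more than `max_changed_ratio` of pixels changed."""
    return changed_ratio(baseline, candidate) > max_changed_ratio
```

A slight colour shift across the whole image stays under the tolerance and is ignored, while a recoloured row of pixels pushes the changed ratio over the limit and is flagged. Fixed thresholds like these are exactly what produces false positives at scale, which is the gap learned models aim to close.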

Which AI Testing Features Deliver the Most Impact?

Knowing which capabilities exist is useful, but understanding their practical impact helps prioritize your evaluation. The following features consistently differentiate high-performing QA teams from those struggling to keep pace.

Natural Language Test Creation

The ability to write tests in plain English rather than code changes who can contribute to test automation. Business analysts, product managers, and manual testers can describe expected behavior in natural language and receive executable tests without learning programming.

This democratization of test creation addresses a persistent bottleneck. When only developers or automation specialists can write tests, coverage depends on their availability and priorities. Natural language interfaces expand the pool of contributors while maintaining test quality through AI translation to robust automation code.

Chat-Based Test Interfaces

Building on natural language capabilities, chat interfaces allow testers to interact with AI through conversation. Instead of navigating complex tool interfaces, testers describe what they need and receive immediate assistance. They can ask questions, request modifications, and iterate on test designs through dialogue.

This conversational approach reduces the learning curve for new team members and accelerates test development for experienced practitioners. The AI remembers context from earlier in the conversation, enabling progressive refinement of test scenarios without starting over.

Gherkin and BDD Integration

For teams practicing behavior-driven development, AI tools that understand and generate Gherkin syntax provide seamless workflow integration. The Given-When-Then format bridges communication between technical and business stakeholders while serving as executable test specifications.

AI-powered Gherkin test creation automatically transforms user stories into properly formatted scenarios. The technology understands BDD conventions and produces tests that follow best practices for maintainability. Teams already invested in Cucumber, SpecFlow, or similar frameworks can accelerate their workflows without changing their approach.
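As a rough picture of the output side of that process, the sketch below renders already-structured scenarios into Given-When-Then text. It does not do any AI analysis: the `Scenario` dataclass is a hypothetical stand-in for whatever a generator extracts from a user story, and `render_feature` only shows the formatting step.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Scenario:
    """Structured scenario, assumed to come from an upstream generator."""
    name: str
    given: List[str]
    when: List[str]
    then: List[str]


def _steps(keyword: str, steps: List[str]) -> List[str]:
    """Format steps, using 'And' for every step after the first in a group."""
    return [
        f"    {keyword if index == 0 else 'And'} {step}"
        for index, step in enumerate(steps)
    ]


def render_feature(feature: str, scenarios: List[Scenario]) -> str:
    """Render a feature and its scenarios as Gherkin text."""
    lines = [f"Feature: {feature}"]
    for scenario in scenarios:
        lines.append("")
        lines.append(f"  Scenario: {scenario.name}")
        lines += _steps("Given", scenario.given)
        lines += _steps("When", scenario.when)
        lines += _steps("Then", scenario.then)
    return "\n".join(lines)
```

The resulting text can be saved as a `.feature` file for Cucumber, SpecFlow, or a similar framework, where step definitions bind each line to executable code.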

Native DevOps Integration

The value of AI tools for DevOps multiplies when testing happens automatically within deployment workflows rather than as a separate manual step. Native integrations with GitHub, Jira, Jenkins, and other DevOps staples ensure tests trigger on code changes and results surface where developers already work.

Advanced platforms use AI to optimize which tests run based on code changes. Rather than executing entire regression suites on every commit, intelligent selection analyzes change impact and runs relevant tests dynamically. This approach reduces feedback cycles while maintaining coverage confidence.
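Change-based test selection can be approximated without any AI at all, which helps show what the intelligent version is improving on. The sketch below assumes a hand-maintained mapping from source paths to test files; the paths, `COVERAGE_MAP`, `SMOKE_TESTS`, and `select_tests` are hypothetical, and the static map stands in for the change-impact analysis a real platform would learn from history.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping from source path patterns to the tests that cover them.
COVERAGE_MAP = {
    "src/checkout/*": {"tests/test_checkout.py", "tests/test_payment.py"},
    "src/payment/*": {"tests/test_payment.py"},
    "src/search/*": {"tests/test_search.py"},
}

# Tests that run on every commit regardless of what changed.
SMOKE_TESTS = {"tests/test_smoke.py"}


def select_tests(changed_files, coverage_map=COVERAGE_MAP, always_run=SMOKE_TESTS):
    """Return the sorted list of test files to run for a set of changed files."""
    selected = set(always_run)
    unmapped = []
    for path in changed_files:
        hits = [
            tests for pattern, tests in coverage_map.items()
            if fnmatchcase(path, pattern)
        ]
        if not hits:
            unmapped.append(path)
        for tests in hits:
            selected |= tests
    if unmapped:
        # Unknown impact: fall back to the full suite rather than risk a gap.
        for tests in coverage_map.values():
            selected |= tests
    return sorted(selected)
```

A change under `src/payment/` runs only the payment and smoke tests, while a change to an unmapped file triggers everything. That conservative fallback is the design choice that keeps coverage confidence intact when the mapping is incomplete.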

Unified Manual and Automated Testing

Many organizations maintain separate systems for manual and automated testing, creating silos that complicate reporting and obscure overall quality status. Modern AI testing tools unify both approaches in a single platform, providing comprehensive visibility regardless of how tests execute.

Effective QA strategies blend automation with human judgment. Exploratory testing, usability evaluation, and edge case investigation require human insight. Regression testing, smoke tests, and repetitive validations benefit from automation. Platforms that support both enable teams to apply the right approach for each situation.

How Quickly Is Enterprise AI Testing Adoption Growing?

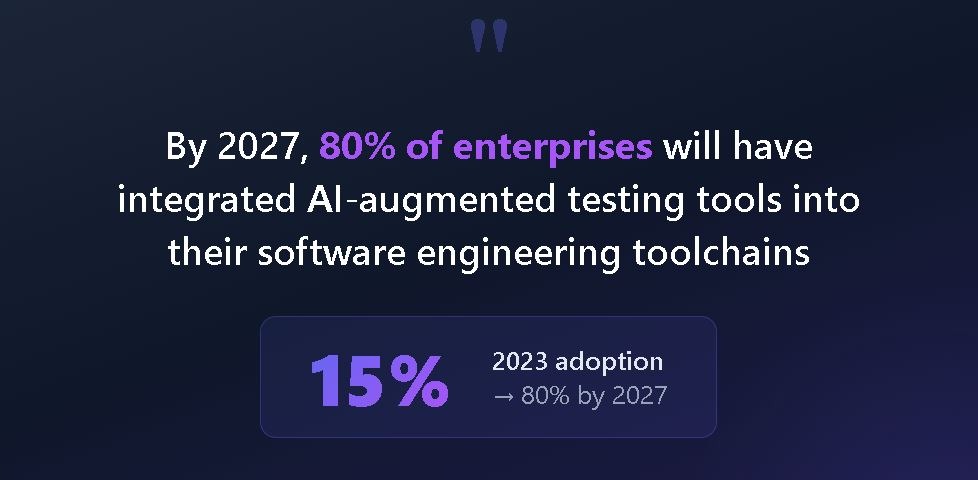

The shift toward AI-augmented testing is happening faster than many anticipated. According to Gartner, 80% of enterprises will have integrated AI-augmented testing tools into their software engineering toolchains by 2027, a dramatic leap from roughly 15% adoption in early 2023.

Several factors drive this acceleration beyond the obvious productivity benefits.

The automation backlog keeps growing. Most organizations have accumulated years of manual tests that should be automated but lack the engineering resources to convert them. AI tools for DevOps that generate automation from existing test documentation or natural language descriptions provide a path to clearing this backlog without massive staffing increases.

Test maintenance costs have become unsustainable. Traditional automation frameworks require constant upkeep as applications evolve. Self-healing capabilities and intelligent element detection reduce maintenance overhead in many implementations, freeing QA teams to focus on coverage expansion rather than script repair.

Quality expectations continue rising. Users expect flawless experiences across devices, browsers, and use cases. Meeting these expectations with manual testing alone is impractical given release velocity requirements: the number of device, browser, and scenario combinations grows far faster than a manual team can cover in each release. AI software testing offers a scalable path to comprehensive coverage.

How Do AI Testing Tool Capabilities Compare?

Understanding how different AI capabilities address specific challenges helps match features to your priorities.

| Capability | Primary Benefit | Best For | Implementation Complexity |
| --- | --- | --- | --- |
| AI Test Generation | Rapid test creation from requirements | Teams with coverage gaps | Low to Medium |
| Self-Healing Tests | Reduced maintenance burden | Frequently changing UIs | Medium |
| QA Agents | Autonomous test exploration | Mature DevOps organizations | Medium to High |
| Visual AI Testing | UI consistency validation | Brand-sensitive applications | Low |
| Natural Language Creation | Democratized test authoring | Mixed-skill teams | Low |
| Chat Interfaces | Conversational test development | Teams new to automation | Low |
| Gherkin/BDD Support | BDD workflow acceleration | Teams practicing BDD | Low |
| DevOps Integration | CI/CD embedded testing | Pipeline-centric workflows | Medium |

The table reveals that no single capability solves every challenge. AI software testing success depends on combining capabilities that address your pain points and workflow requirements.

What Should You Consider When Choosing Intelligent Test Automation?

Selecting the right AI testing tools requires an honest assessment of your current state and where you want to go. Several factors consistently influence success or failure with these platforms.

Team Technical Depth

Platforms range from requiring significant programming knowledge to truly no-code experiences. Mismatching tool complexity to team capabilities creates frustration and abandonment. If your QA team includes former developers comfortable with code, a more technical platform offers greater flexibility. If business analysts need to contribute tests, prioritize natural language interfaces and chat-based interactions.

Existing Tool Ecosystem

AI testing tools rarely operate in isolation. They need to connect with issue trackers, source control systems, CI/CD pipelines, and reporting dashboards. Evaluate integration quality with your specific tech stack rather than accepting generic integration claims at face value. Deep, bidirectional integrations with platforms like GitHub and Jira prove far more valuable than shallow connections that require manual synchronization.

Test Type Coverage

Some platforms excel at web UI testing but offer limited API or mobile capabilities. Others provide broad coverage with less depth in specific areas. Map your testing needs against platform strengths to avoid capability gaps that force you to maintain multiple tools. The ideal solution handles manual tests, automated scripts, and unit test results in a unified view.

AI Maturity Trajectory

AI capabilities evolve rapidly. Evaluate not just current features but the vendor's investment in AI research and their roadmap trajectory. Platforms actively advancing their AI capabilities will deliver increasing value over time, while those treating AI as a marketing checkbox will stagnate. Look for evidence of continuous improvement in AI features rather than static implementations.

Total Cost of Ownership

Beyond subscription fees, consider the full cost picture. How much time will implementation require? What training investments are necessary? How does the platform affect ongoing maintenance costs? Intelligent test automation should reduce total QA costs over time, but initial investments vary across platforms.

FAQ

What are AI testing tools?

AI testing tools are software platforms that use artificial intelligence and machine learning to automate, optimize, and improve software testing activities. They can generate test cases, self-heal broken tests, identify visual defects, and autonomously explore applications to find bugs.

How do self-healing tests work?

Self-healing tests use machine learning to detect when application elements change and automatically update test scripts to maintain functionality. Instead of failing when a button's ID changes, the AI recognizes the button through multiple attributes and adjusts the test accordingly.

Are AI testing tools replacing manual testers?

No. AI testing tools automate repetitive tasks and handle maintenance burdens, freeing human testers to focus on exploratory testing, edge cases, and strategic quality decisions that require human judgment and creativity.

What should I look for when evaluating AI testing tools?

Key factors include integration with your existing tech stack, support for your required test types (web, mobile, API), team accessibility based on technical skill levels, vendor AI investment trajectory, and total cost of ownership, including maintenance.

Take Your Testing Strategy to the Next Level

The proliferation of AI testing tools in 2026 offers QA teams unprecedented options for accelerating quality assurance while maintaining coverage and reliability. Whether your priority is test generation speed, maintenance reduction, visual accuracy, or autonomous exploration, capabilities exist to match your needs.

TestQuality's AI-powered QA platform brings these capabilities together with QA Agents that proactively assist testers throughout the workflow. From AI-driven test creation with TestStory.ai to seamless GitHub and Jira integration, TestQuality helps teams achieve quality goals faster. Start your free trial and experience how intelligent test management transforms your QA process.