AI test case generation promises efficiency gains, but implementation challenges can derail even well-funded QA initiatives.

- Data quality problems and integration gaps remain the most prominent AI test case challenges.

- The "black box" nature of many AI tools creates serious test case builder pitfalls that prevent iterative refinement and domain-specific customization.

- Successful teams treat AI as an augmentation layer rather than a replacement, maintaining human oversight while leveraging automation for repetitive tasks.

The path forward requires choosing platforms designed for collaboration between human expertise and AI capabilities rather than tools that promise fully autonomous testing.

AI is transforming how quality assurance teams approach software testing, but the transition hasn't been seamless. According to Gartner's research, 40% of organizations believe daily QA responsibilities will fundamentally change due to AI adoption over the next three years. With that disruption comes a fresh batch of problems that most teams didn't anticipate when they first signed up for an AI testing pilot.

The hype around AI test case generation has reached a fever pitch. Every conference keynote promises autonomous testing, self-healing scripts, and test suites that practically write themselves. The reality? Most QA professionals find themselves wrestling with integration headaches, questionable output quality, and tools that don't quite understand their domain. This isn't a condemnation of AI in testing. It's a reality check that helps teams set appropriate expectations and choose solutions that actually deliver value.

Why Are QA Teams Rushing to Adopt AI Test Case Generation?

The pressure driving AI adoption in QA is real and quantifiable. Software release cycles have compressed, with organizations shipping code multiple times daily rather than monthly. Manual test case creation can't keep pace with continuous deployment pipelines. Teams face a brutal math problem where the number of features requiring tests grows faster than the human capacity to write them.

AI testing problems often begin before teams even implement a solution. The promise of reducing test creation time from hours to minutes is seductive. Organizations are increasingly assigning AI-native responsibilities to roles that previously relied entirely on manual processes. QA is no exception. When competitors claim large reductions in test authoring time, those claims create organizational pressure to adopt similar tools regardless of readiness.

The legitimate benefits are substantial when implementation goes well. AI can analyze requirements and generate test scenarios covering edge cases that human testers might overlook. Pattern recognition across large codebases enables the identification of test coverage gaps. Automated maintenance reduces the burden of updating test suites when application interfaces change. These advantages explain why adoption continues despite the challenges that follow.

What Are the Biggest AI Test Case Challenges Teams Face Today?

Understanding where AI test case generation typically struggles helps teams prepare for obstacles before they derail projects. The challenges fall into several categories, each requiring different mitigation strategies.

Data Quality Creates the Foundation for Failure

The principle of "garbage in, garbage out" applies forcefully to AI-generated test cases. Models trained on incomplete requirements, inconsistent naming conventions, or outdated documentation produce test cases that miss critical scenarios.

Historical test data presents its own complications. Defect logs with inconsistent severity labels confuse AI models attempting to prioritize test coverage. Test results without proper context fail to teach models which scenarios matter most. Teams often underestimate the data preparation work required before AI tools can function effectively in their environments.
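The severity-label problem described above can be profiled before any AI tool sees the data. The following is a minimal sketch, assuming defect logs are available as a list of dictionaries with a `severity` field; the `CANONICAL_SEVERITY` mapping, the field names, and the sample records are hypothetical and would need to reflect your own tracker's conventions.

```python
from collections import Counter

# Hypothetical mapping from label variants to one canonical scale.
CANONICAL_SEVERITY = {
    "critical": "critical", "blocker": "critical", "p0": "critical", "sev1": "critical",
    "high": "high", "major": "high", "p1": "high",
    "medium": "medium", "moderate": "medium", "p2": "medium",
    "low": "low", "minor": "low", "trivial": "low", "p3": "low",
}


def normalize_severity(raw):
    """Return the canonical label, or None if the label is missing or unknown."""
    if raw is None:
        return None
    key = raw.strip().lower().replace(" ", "").replace("-", "")
    return CANONICAL_SEVERITY.get(key)


def profile_severity(defects):
    """Count canonical labels and collect raw labels that need manual review."""
    counts = Counter()
    unmapped = set()
    for defect in defects:
        label = normalize_severity(defect.get("severity"))
        if label is None:
            unmapped.add(defect.get("severity"))
            counts["unmapped"] += 1
        else:
            counts[label] += 1
    return counts, unmapped


# Sample records (invented for illustration).
defects = [
    {"id": "D-1", "severity": "Critical"},
    {"id": "D-2", "severity": "P1"},
    {"id": "D-3", "severity": " sev-1 "},
    {"id": "D-4", "severity": "urgent??"},
    {"id": "D-5", "severity": None},
]
counts, unmapped = profile_severity(defects)
print(dict(counts))
print(unmapped)
```

A high `unmapped` count is a signal that the log needs cleanup before it is used to prioritize coverage.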

Integration Complexity Derails Implementation

Standalone AI test generation creates data silos that undermine traceability. When generated test cases live in one platform while execution results and defect tracking happen elsewhere, teams lose visibility into the complete quality picture. The connection between a requirement, its generated test, the execution result, and any discovered defects breaks down when systems don't communicate.
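One way to make the traceability chain concrete is to model the links explicitly and look for the places where they break. This is a rough sketch under assumed names: `GeneratedTest`, `find_traceability_gaps`, and the ID formats are illustrative, not the schema of any particular test management tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class GeneratedTest:
    """A generated test case with its links to a requirement and to execution runs."""
    test_id: str
    requirement_id: Optional[str] = None
    execution_ids: List[str] = field(default_factory=list)


def find_traceability_gaps(requirement_ids, tests):
    """Report where the requirement -> test -> execution chain is broken."""
    known = set(requirement_ids)
    covered = {t.requirement_id for t in tests if t.requirement_id in known}
    return {
        "requirements_without_tests": sorted(known - covered),
        "tests_without_requirement": sorted(
            t.test_id for t in tests if t.requirement_id not in known
        ),
        "tests_never_executed": sorted(
            t.test_id for t in tests if not t.execution_ids
        ),
    }


# Sample data (invented for illustration).
requirements = ["REQ-1", "REQ-2", "REQ-3"]
tests = [
    GeneratedTest("T-1", "REQ-1", ["RUN-10"]),
    GeneratedTest("T-2", "REQ-2"),
    GeneratedTest("T-3"),
]
gaps = find_traceability_gaps(requirements, tests)
print(gaps)
```

When generation, execution, and defect tracking live in separate systems, a report like this has to be assembled from exports, which is exactly the overhead that integrated platforms aim to remove.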

CI/CD pipeline integration adds complexity. Test cases generated by AI tools need to execute within existing automation frameworks. Format incompatibilities, authentication requirements, and environment configurations all create friction points. Some teams report spending more time on integration than they saved through automated generation during their first year of implementation.

Skills Gaps Limit Effective Utilization

Traditional QA expertise doesn't automatically translate to AI tool proficiency. Evaluating whether AI-generated test cases adequately cover requirements demands understanding both the application domain and how language models interpret specifications. Many teams lack personnel who can effectively bridge this gap.

The skills challenge extends to prompt engineering when teams use general-purpose AI models. Small adjustments to a prompt can noticeably improve output, but discovering effective prompts requires significant iteration. Teams without this expertise often accept suboptimal output rather than investing in refinement.

Privacy and Security Concerns Block Adoption

Test cases frequently reference sensitive business logic, proprietary algorithms, and customer data patterns. Feeding this information to cloud-based AI services raises legitimate security questions. Regulated industries face additional compliance requirements around data handling that many AI testing tools weren't designed to accommodate.
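A common precaution is to mask obviously sensitive values before any requirement or test text leaves the organization. The sketch below is illustrative only: the three regular expressions and the placeholder names are assumptions, they cover just a few simple formats, and they are no substitute for a reviewed data-handling policy.

```python
import re

# Illustrative patterns only; real data needs patterns reviewed by
# security and compliance teams.
REDACTION_PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "<EMAIL>"),
    (re.compile(r"\b\d(?:[ -]?\d){12,15}\b"), "<CARD_NUMBER>"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "<SSN>"),
]


def redact(text):
    """Replace each matched sensitive value with a neutral placeholder."""
    for pattern, placeholder in REDACTION_PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


# Sample input (invented for illustration).
raw = (
    "Verify that jane.doe@example.com can pay with card "
    "4111 1111 1111 1111 and SSN 123-45-6789 is masked"
)
clean = redact(raw)
print(clean)
```

Pattern-based masking catches only what the patterns anticipate, so it complements vendor due diligence rather than replacing it.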

Organizations must evaluate whether AI vendors process data in ways that comply with internal policies and external regulations. The due diligence required often delays adoption, especially when legal and compliance teams lack familiarity with AI tool architectures and data handling practices.

How Does the "Black Box" Problem Create Test Case Builder Pitfalls?

Many AI test generation platforms operate as closed systems where users provide input and receive output without visibility into the reasoning process. This creates several test case builder pitfalls that undermine practical utility.

When AI generates a test case, QA professionals often can't determine why specific assertions were included or why certain scenarios were prioritized. This opacity makes it difficult to assess whether output aligns with actual testing requirements. A generated test might check superficial functionality while missing the critical business logic that determines whether a feature actually works correctly.

The lack of explainability prevents meaningful iteration. When teams don't understand why AI made particular decisions, they can't effectively guide it toward better output. Instead of refining generation parameters based on understanding, teams resort to trial and error. Some organizations discard AI-generated tests entirely and rewrite them manually because understanding the AI's reasoning would take longer than starting fresh.

Test case builder pitfalls also emerge from limited customization options. Generic AI models trained on broad datasets produce generic test cases. An e-commerce platform and a healthcare records system have vastly different testing requirements, yet many tools apply the same patterns to both. Domain-specific terminology, industry regulations, and unique business rules rarely factor into off-the-shelf AI generation.

The most effective test automation approaches balance automation capabilities with human oversight and customization. Platforms that treat test generation as a collaborative process between AI and human expertise avoid many black box problems by design.

What QA Blockers with AI Prevent Meaningful Human Oversight?

A critical gap in many AI test generation tools is treating human interaction as an afterthought. Platforms often implement generation as a one-way process. AI creates test cases, humans accept or reject them, and the cycle repeats without learning. This approach wastes the institutional knowledge that experienced testers bring to quality assurance.

QA blockers with AI frequently stem from the inability to meaningfully edit and refine generated content. When teams can't adjust AI suggestions to match organizational testing standards, add context from domain expertise, or incorporate historical knowledge about application quirks, the generated tests remain superficial. Experienced testers know which edge cases matter most for their specific application. AI tools that don't capture this knowledge produce technically correct but practically limited test cases.

Feedback mechanisms often prove inadequate. Marking a generated test as "incorrect" provides minimal information for model improvement. Teams need ways to explain why output missed the mark, whether it was inadequate coverage, incorrect assumptions about user behavior, or a misunderstanding of feature requirements. Without structured feedback channels, the same generation errors recur indefinitely.

Collaboration features rarely receive sufficient attention in AI-focused tools. Testing is a team activity involving developers, QA specialists, product managers, and business stakeholders. AI generation tools that don't support review workflows, commenting, and collaborative refinement create bottlenecks where individual reviewers become gatekeepers for all AI-generated content.

Organizations practicing behavior-driven development face particular challenges. BDD emphasizes collaboration and shared understanding through scenarios written in natural language. AI tools that generate Gherkin syntax without supporting the collaborative review process that makes BDD valuable undermine the methodology's core benefits.

Which AI Testing Problems Stem from Over-Reliance on Automation?

The promise of AI often leads to unrealistic expectations about what automation can achieve. Treating AI as a complete replacement for human judgment creates its own category of AI testing problems.

Exploratory testing remains fundamentally human. The creative investigation of software to discover unexpected behaviors requires intuition, curiosity, and the ability to recognize when something "feels wrong," even when it technically passes defined criteria. AI excels at consistently executing predefined scenarios but lacks the capacity for genuine exploration. Teams that eliminate exploratory testing in favor of AI-generated suites risk missing entire categories of defects.

Evaluating the user experience presents similar limitations. AI can verify that buttons render correctly and workflows complete successfully. It cannot assess whether an interface is intuitive, whether error messages communicate clearly, or whether the overall experience meets user expectations. These judgments require human perception and empathy that current AI capabilities don't replicate.

The maintenance burden often shifts rather than disappears. AI-generated tests still require updates when applications change. Self-healing features help with minor UI modifications but fail when fundamental workflows evolve. Organizations that eliminated QA headcount based on AI promises sometimes find themselves scrambling to rebuild expertise when generated tests become obsolete.

Effective test management recognizes that manual and automated approaches serve different purposes. AI augments human capabilities rather than replacing them entirely. The teams achieving the best results use AI for repetitive generation tasks while preserving human involvement for strategic test planning, exploratory investigation, and judgment-intensive evaluation.

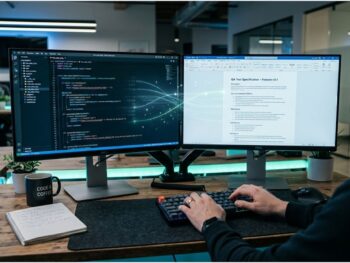

Common AI Test Case Challenges and Solutions at a Glance

Understanding the challenges helps teams prioritize their mitigation efforts. The following table summarizes the most prevalent issues and practical approaches to addressing them.

| Challenge | Impact | Mitigation Strategy |
| --- | --- | --- |
| Poor training data quality | Irrelevant or incorrect test cases | Implement data profiling and establish documentation standards before AI adoption |
| Integration with existing tools | Broken traceability and data silos | Choose platforms with native integrations for your test management and CI/CD stack |
| Skills gaps in AI/ML | Inability to evaluate or improve output | Invest in training programs and establish feedback loops between QA and AI teams |
| Privacy and compliance concerns | Delayed or blocked adoption | Conduct vendor security reviews early; consider on-premise or hybrid deployment options |
| Black box generation | No insight into AI reasoning | Select tools offering explainable output or generation transparency features |
| Limited human oversight | Generic tests missing domain context | Prioritize platforms supporting collaborative review and iterative refinement |
| Over-reliance on automation | Missed UX issues and exploratory gaps | Maintain dedicated resources for manual testing alongside AI-assisted generation |
| Evolving requirements | Outdated tests requiring constant updates | Implement continuous feedback mechanisms linking requirements changes to test updates |

How Can Teams Overcome These AI Test Case Challenges?

Successfully navigating AI adoption in QA requires deliberate strategy rather than tool-first thinking. The following approaches help teams extract value while minimizing common pitfalls.

Start with data preparation before selecting a tool. AI performance depends heavily on input quality. Audit existing requirements documentation, test case repositories, and defect logs before evaluating tools. Standardize naming conventions, ensure consistent severity labels, and document tribal knowledge that currently exists only in team members' heads. This foundation determines whether any AI tool can succeed in your environment.

Evaluate integration capabilities rigorously. Demo environments rarely reveal integration complexity. Request proof-of-concept implementations that connect to your actual test management workflows, CI/CD pipelines, and defect tracking systems. The effort required to maintain integrations often exceeds the effort saved through generation.

Establish human review workflows from day one. Even the best AI-generated tests require validation. Build review processes that capture domain expertise and feed corrections back to improve future generation. Treat AI output as first drafts that accelerate work rather than finished products ready for execution.

Preserve exploratory testing capacity. Resist pressure to eliminate manual testing entirely. Allocate dedicated time for exploratory investigation that AI can't replicate. The bugs discovered through creative exploration often represent the highest business impact issues.

Create feedback mechanisms that actually inform improvement. Simple approve/reject interfaces provide minimal learning signal. Implement structured feedback that explains why generated tests miss the mark. Track patterns in corrections to identify systematic generation weaknesses.
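Structured feedback can be as simple as requiring a reason category with every rejection and then counting the reasons. The sketch below assumes three categories taken from the failure modes discussed earlier (inadequate coverage, wrong assumptions about user behavior, misunderstood requirements); the class and function names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RejectionReason(Enum):
    INADEQUATE_COVERAGE = "inadequate_coverage"
    WRONG_USER_BEHAVIOR_ASSUMPTION = "wrong_user_behavior_assumption"
    MISUNDERSTOOD_REQUIREMENT = "misunderstood_requirement"


@dataclass
class ReviewFeedback:
    """One reviewer decision on one generated test."""
    test_id: str
    accepted: bool
    reason: Optional[RejectionReason] = None
    note: str = ""


def summarize_rejections(feedback):
    """Count rejection reasons so recurring generation weaknesses stand out."""
    return Counter(
        item.reason for item in feedback
        if not item.accepted and item.reason is not None
    )


# Sample reviews (invented for illustration).
reviews = [
    ReviewFeedback("T-1", True),
    ReviewFeedback("T-2", False, RejectionReason.INADEQUATE_COVERAGE, "no negative path"),
    ReviewFeedback("T-3", False, RejectionReason.MISUNDERSTOOD_REQUIREMENT),
    ReviewFeedback("T-4", False, RejectionReason.INADEQUATE_COVERAGE, "ignores expired cards"),
]
summary = summarize_rejections(reviews)
top_reason, top_count = summary.most_common(1)[0]
print(top_reason.value, top_count)
```

Even without retraining a model, a summary like this shows reviewers where to adjust prompts, input documentation, or generation settings.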

Plan for ongoing skills development. AI tools evolve rapidly. Today's best practices may become obsolete within months. Budget for continuous learning and establish relationships with tool vendors who provide effective training resources.

Measure outcomes rather than activity. The goal isn't generating more tests. It's finding more defects before customers do while maintaining release velocity. Track defect escape rates, test maintenance burden, and cycle time rather than simply counting generated tests.
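As a small illustration of outcome tracking, defect escape rate can be computed from two counts. The definition used here (production defects divided by all defects found for a release) is one common convention and an assumption on our part; the numbers are invented.

```python
def defect_escape_rate(found_before_release, found_in_production):
    """Share of all known defects that reached production (0.0 when none were found)."""
    total = found_before_release + found_in_production
    if total == 0:
        return 0.0
    return found_in_production / total


# Invented figures: 45 defects caught before release, 5 reported by customers.
rate = defect_escape_rate(found_before_release=45, found_in_production=5)
print(f"{rate:.0%}")
```

Comparing this rate across releases before and after adopting AI-assisted generation says more about value delivered than the raw number of tests generated.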

Frequently Asked Questions

Can AI test case generation completely replace manual test creation?

No. AI excels at generating tests for repetitive scenarios and identifying coverage gaps in existing test suites. However, exploratory testing, user experience evaluation, and tests requiring deep domain knowledge still require human involvement. The most effective approaches use AI to handle routine generation while preserving human capacity for judgment-intensive testing activities.

How much training data do AI test generation tools require to be effective?

Data requirements vary by tool and use case. General-purpose large language models can generate tests from minimal input but often produce generic results. Tools trained on specific application domains or organizational patterns typically require several months of historical test cases, requirements documentation, and defect logs to generate contextually appropriate output. The quality of training data matters more than quantity.

What security measures should teams require from AI testing vendors?

Critical security considerations include data encryption in transit and at rest, clear data retention and deletion policies, compliance certifications relevant to your industry, options for on-premise or private cloud deployment, and contractual guarantees about training data usage. Teams handling sensitive business logic or regulated data should conduct thorough vendor security assessments before adoption.

Build Your AI Testing Strategy on Solid Ground

The challenges surrounding AI test case generation are real but not insurmountable. Teams that approach adoption with realistic expectations, proper preparation, and appropriate human oversight extract significant value from these tools. The key insight is that AI augments rather than replaces human expertise in quality assurance.

The most successful implementations treat AI as a collaborative partner rather than an autonomous replacement. They invest in data quality and maintain human review workflows that capture domain expertise. They choose platforms designed to support iterative refinement rather than one-shot generation.

Modern AI-powered QA platforms offer the balance of automation efficiency and collaborative control that makes AI adoption practical. TestQuality's approach combines intelligent test management with integration capabilities that connect AI-generated content to your existing workflows. Start your free trial and experience how AI-augmented testing works when human expertise remains at the center.