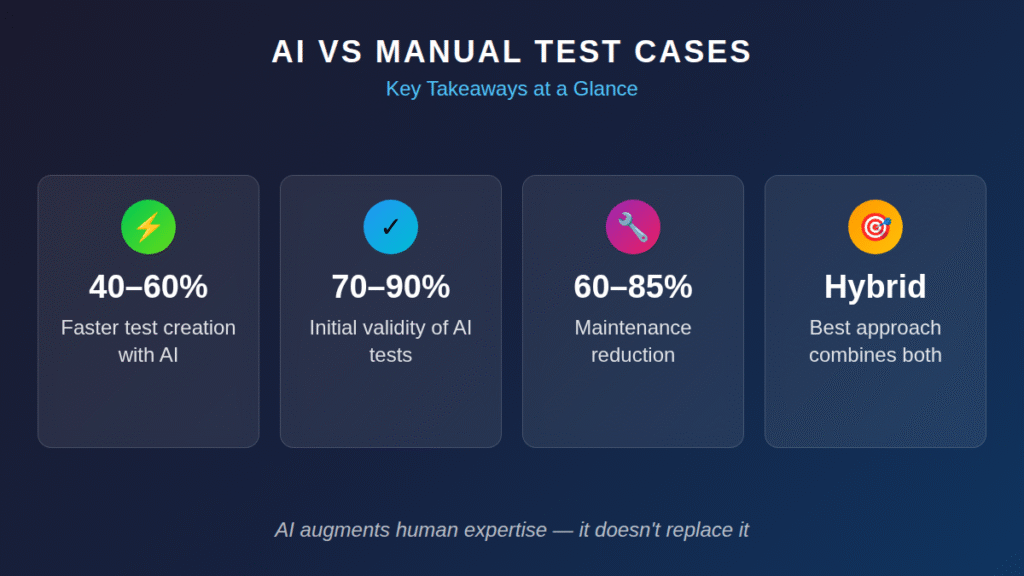

AI test case generation delivers measurable ROI when paired with human expertise, but manual writing remains essential for exploratory scenarios and complex business logic.

- Teams using AI test case generation report 40–60% reductions in test creation time when requirements are well-documented.

- Manual test writing provides irreplaceable value for edge cases, exploratory testing, and domain-specific validation.

- The best approach combines AI-generated baseline coverage with human-crafted tests for critical paths.

- Calculate your ROI by comparing hours saved against tool costs, training investment, and maintenance overhead.

Organizations achieving the best results treat AI as an augmentation layer rather than a replacement for QA expertise.

Test case creation has always been the unglamorous workhorse of software quality. It's the work nobody wants to do but everybody needs done well. A single enterprise feature can demand 100 or more test scenarios, each requiring 15–30 minutes of careful documentation when written by hand. Multiply that across sprints, and QA teams find themselves buried in repetitive documentation work instead of catching the bugs that actually matter.

The World Quality Report 2024 found that 68% of organizations are now either actively using generative AI in quality engineering or have implementation roadmaps in place. AI test case generation has moved from experimental curiosity to mainstream consideration. But when does AI make sense for your testing workflow, and when does manual writing still deliver better test management outcomes?

This isn't a simple either/or decision. The real ROI comes from understanding exactly where each approach excels and building a strategy that leverages both.

What Is AI Test Case Generation?

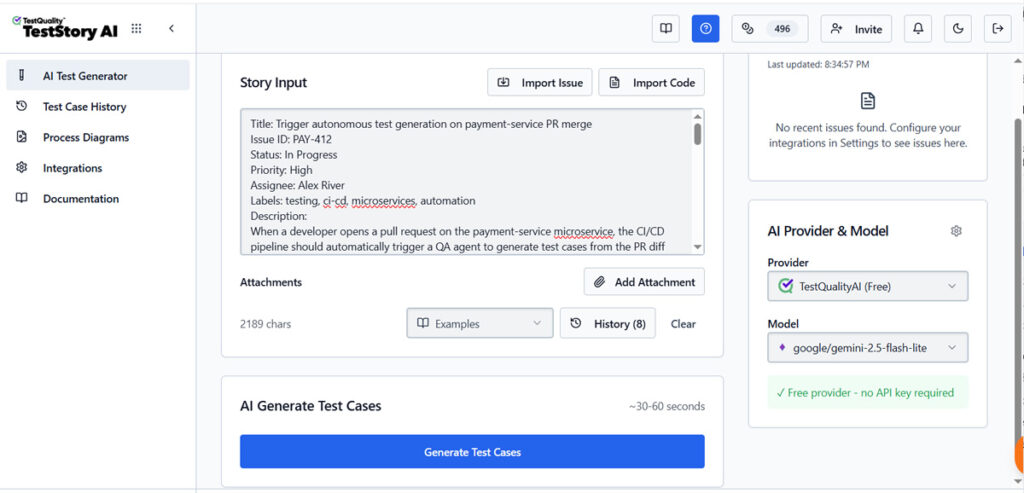

AI test case generation uses natural language processing and machine learning to transform requirements, user stories, and feature descriptions into structured test scenarios. Rather than a QA engineer manually parsing a specification document and translating each acceptance criterion into test steps, AI tools handle that initial conversion automatically.

You feed the system a requirement like "users should be able to reset their password via email," and it outputs multiple test cases covering the happy path, error states, edge conditions, and validation rules. Advanced tools generate output in BDD formats like Gherkin, making scenarios immediately usable in automation frameworks.

In a traditional setup, humans act as the orchestration layer — reading a Jira ticket, writing a script, and triggering a build. In a 2026 agentic architecture, tools like TestStory.ai assume this role. The orchestration layer decouples the intent (what needs to be tested) from the execution (how the browser clicks), allowing the AI to manage the holistic lifecycle of a test suite.

This architectural shift has a measurable operational consequence: test coverage is no longer gated on human availability. The pipeline never sleeps, and neither does coverage.

What makes modern AI automation testing different from template-based generation is contextual understanding. These systems interpret intent, identify implied scenarios, and predict failure modes that human reviewers might overlook during an initial pass. When requirements are clearly documented, current AI tools generate test cases with 70–90% initial validity, though human review remains essential before execution.

Instead of spending hours on documentation, engineers focus on the analytical work that actually requires human judgment.

Why Does Manual Test Case Writing Still Exist?

If AI can generate tests from requirements, why would anyone still write manual test cases by hand? The answer lies in the limitations of any automated approach and the irreplaceable value of human domain expertise.

Manual test writing excels in situations where requirements are ambiguous, incomplete, or rapidly evolving. A skilled QA engineer brings context from previous projects, an understanding of user behavior patterns, and intuition about where systems typically fail. That institutional knowledge doesn't transfer easily into prompts or requirement documents.

Exploratory testing is another domain where manual approaches dominate. When investigating how an application behaves under unusual conditions, testers follow hunches and investigate unexpected responses. AI-generated tests are inherently scripted and predetermined. The creative investigation that uncovers hidden defects requires human curiosity and adaptability.

Complex business logic also favors manual crafting. Financial calculations, regulatory compliance scenarios, and multi-step workflows with interdependent conditions often require test cases built from a deep understanding of business rules that aren't captured in user stories. A tester who understands why the system works a certain way writes more effective validation than one who only knows what the system should do.

How Do AI and Manual Test Cases Compare on Core Metrics?

Understanding the practical differences between manual and AI-powered testing requires examining how they perform across the metrics that matter most to QA teams and engineering leadership.

| Metric | AI Test Case Generation | Manual Test Writing |
| --- | --- | --- |
| Time to create | Minutes per feature | Hours to days per feature |
| Initial coverage breadth | High (captures obvious scenarios) | Variable (depends on tester experience) |
| Edge case detection | Moderate (misses domain-specific cases) | High (when tester has domain expertise) |
| Consistency | Very high across team | Varies by individual |
| Maintenance burden | Lower with self-healing tools | Higher (manual updates required) |
| Upfront investment | Tool costs + training | Hiring/training testers |
| Business logic accuracy | Requires human validation | Built-in when tester understands domain |
| Scalability | Excellent | Limited by headcount |

The comparison reveals why hybrid approaches outperform pure strategies in either direction. AI provides speed and consistency at scale, while manual expertise delivers accuracy where it matters most.

What Are the Pros and Cons of AI Test Case Generation?

Understanding the genuine advantages and limitations of AI-powered test creation helps teams set realistic expectations and avoid both underutilization and over-reliance.

Advantages of AI Test Case Generation

Dramatic time savings on routine work. Teams report completing in minutes what previously took days. When requirements documents are well-structured, AI handles the mechanical translation into test scenarios with impressive efficiency. QA engineers can focus on test strategy, automation architecture, and defect analysis.

Consistent output quality. Human testers have varying levels of experience, attention to detail, and familiarity with documentation standards. AI-generated tests follow consistent formatting, naming conventions, and coverage patterns regardless of who triggers the generation. This consistency simplifies review processes and improves test suite maintainability.

Expanded coverage identification. AI tools often identify scenarios that human reviewers miss on first pass, particularly negative test cases and boundary conditions. The systems are trained on patterns from millions of test scenarios and can surface coverage gaps that might not be obvious to someone focused on a specific feature.

Faster iteration cycles. When requirements change mid-sprint, regenerating affected test cases takes minutes rather than hours. This agility helps teams maintain test coverage even when facing the constant requirement shifts that characterize agile development.


Limitations to Consider

Hallucination and accuracy concerns. AI tools sometimes generate test steps that reference non-existent UI elements, assume incorrect preconditions, or include logically impossible scenarios. Human review remains mandatory before execution.
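Part of that review can be supported by a simple automated pre-check. The sketch below flags generated steps that reference UI elements missing from a known inventory. It assumes a hypothetical convention in which steps name elements as `[element-id]`, and the `KNOWN_ELEMENTS` set is invented for illustration. It supplements human review rather than replacing it.

```python
import re

# Hypothetical inventory of UI element IDs that actually exist in the app.
KNOWN_ELEMENTS = {"email-input", "reset-button", "confirmation-banner"}

# Assumed convention: generated steps reference elements as [element-id].
ELEMENT_REF = re.compile(r"\[([a-z0-9-]+)\]")


def unknown_references(steps: list[str], known: set[str]) -> dict[int, list[str]]:
    """Map step index -> referenced element IDs missing from the inventory."""
    problems: dict[int, list[str]] = {}
    for index, step in enumerate(steps):
        missing = [ref for ref in ELEMENT_REF.findall(step) if ref not in known]
        if missing:
            problems[index] = missing
    return problems


generated_steps = [
    "Type an address into [email-input]",
    "Click [reset-button]",
    "Verify [success-modal] is displayed",  # not in the inventory
]

flagged = unknown_references(generated_steps, KNOWN_ELEMENTS)
print(flagged)  # prints {2: ['success-modal']}
```

A check like this only catches references to elements that do not exist. Incorrect preconditions and logically impossible scenarios still need a human reader.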

Domain knowledge gaps. Off-the-shelf AI tools don't understand your specific business rules, compliance requirements, or system architecture. Tests generated from a generic understanding may miss the scenarios that actually matter for your application.

Initial investment and learning curve. Despite vendor promises of quick implementation, realistic timelines for mature production use often extend to 18–24 months from initial evaluation. Teams need training, integration work, and process adjustments before achieving meaningful ROI.

What Are the Pros and Cons of Manual Test Writing?

Manual test creation carries its own set of trade-offs that become clearer when examined against the AI alternative.

Advantages of Manual Test Writing

Deep domain accuracy. Testers who understand the business context write tests that validate what matters to users and stakeholders. They know which edge cases represent real-world scenarios versus theoretical possibilities, and they prioritize accordingly.

Exploratory capability. Manual processes allow testers to investigate unexpected behaviors, follow intuition about potential problems, and adapt testing approaches based on what they discover. This flexibility uncovers defects that scripted tests would never find.

No tool dependency. Manual testing requires minimal infrastructure investment. Teams can start immediately without procurement cycles, integration work, or specialized training. For smaller teams or projects with limited budgets, this accessibility matters.

Limitations Worth Acknowledging

Scalability ceiling. Manual test creation scales only as fast as headcount. As systems grow, maintaining comprehensive coverage requires proportionally more tester hours, creating a bottleneck that compounds over time.

Inconsistency across team members. Different testers interpret requirements differently, document at varying levels of detail, and bring different biases to coverage decisions. Without strong standards and review processes, test suite quality becomes unpredictable.

Maintenance overhead accumulates. When applications change, every affected manual test case requires individual review and update. The maintenance burden grows faster than the test suite itself, eventually consuming time that should go toward new coverage.

How Do You Calculate ROI for AI Test Case Generation?

Moving from qualitative comparison to financial justification requires concrete ROI analysis. Here's a practical framework for evaluating whether AI test case generation makes financial sense for your organization.

Step 1: Measure current state costs. Calculate hours spent on test case creation across your team over a representative period. Include initial writing, review cycles, and revisions from requirement changes. Apply your fully-loaded labor costs to get a baseline expenditure.

Step 2: Estimate AI-enabled time savings. Conservative estimates suggest a 40–60% reduction in creation time for well-documented requirements. Apply this reduction to your baseline hours, accounting for the reality that not all requirements will be AI-suitable.

Step 3: Add tool and implementation costs. Include licensing fees, integration development, training time, and the productivity dip during adoption. Don't forget ongoing maintenance and administration overhead.

Step 4: Factor maintenance improvements. Tools with self-healing capabilities can reduce test maintenance overhead by 60–85% in environments with frequent UI changes. Quantify your current maintenance hours and apply appropriate reduction estimates.

Step 5: Consider coverage quality impacts. Improved coverage catches defects earlier when they're cheaper to fix. Fixing bugs in production costs more than addressing them during development. Estimate the value of defects caught earlier through expanded AI-generated coverage.
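The five steps above can be sketched as a small first-year calculation. This is a simplified model under stated assumptions: the `RoiInputs` fields, the `first_year_roi` function, and every number in the example are hypothetical placeholders to be replaced with your own measurements.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    creation_hours: float            # Step 1: annual hours spent creating tests
    maintenance_hours: float         # Step 4: annual hours spent maintaining tests
    hourly_cost: float               # Step 1: fully loaded labor cost per hour
    ai_suitable_share: float         # Step 2: fraction of requirements suited to AI (0-1)
    creation_reduction: float        # Step 2: e.g. 0.40-0.60 per the estimates above
    maintenance_reduction: float     # Step 4: e.g. 0.60-0.85 with frequent UI changes
    annual_tool_cost: float          # Step 3: licensing and administration
    one_time_cost: float             # Step 3: integration, training, adoption dip
    early_defect_value: float = 0.0  # Step 5: estimated value of earlier defect detection


def first_year_roi(inputs: RoiInputs) -> dict[str, float]:
    """Return first-year benefits, costs, net value, and ROI ratio."""
    creation_savings = (
        inputs.creation_hours
        * inputs.ai_suitable_share
        * inputs.creation_reduction
        * inputs.hourly_cost
    )
    maintenance_savings = (
        inputs.maintenance_hours * inputs.maintenance_reduction * inputs.hourly_cost
    )
    benefits = creation_savings + maintenance_savings + inputs.early_defect_value
    costs = inputs.annual_tool_cost + inputs.one_time_cost
    return {
        "benefits": benefits,
        "costs": costs,
        "net": benefits - costs,
        "roi": (benefits - costs) / costs,
    }


# Illustrative numbers only; none of these come from a real team.
example = RoiInputs(
    creation_hours=2000,
    maintenance_hours=1000,
    hourly_cost=75,
    ai_suitable_share=0.7,
    creation_reduction=0.4,
    maintenance_reduction=0.6,
    annual_tool_cost=30_000,
    one_time_cost=40_000,
)

result = first_year_roi(example)
print({key: round(value, 2) for key, value in result.items()})
```

Using the low end of each reduction range and leaving `early_defect_value` at zero gives a deliberately conservative figure. Rerunning the calculation with the high end of each range shows how sensitive the result is to those estimates.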

Organizations achieving the strongest ROI share common characteristics: clearly documented requirements, stable development processes, and realistic expectations about AI's role as augmentation rather than replacement.

When Should You Use AI vs Manual Test Writing?

The decision becomes clearer when you match each approach to the scenario type.

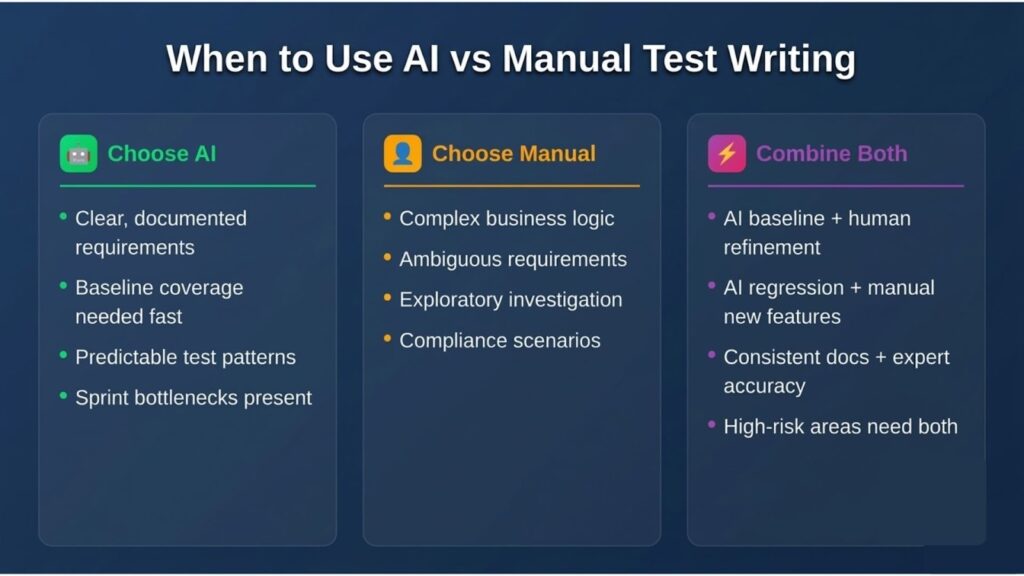

Choose AI test case generation when:

- Requirements are well-documented with clear acceptance criteria

- You need baseline coverage quickly for new features

- Test scenarios follow predictable patterns across similar features

- Your team struggles with test creation bottlenecks during sprint cycles

- Maintenance overhead consumes excessive tester time

Choose manual test writing when:

- Business logic requires deep domain expertise to validate correctly

- Requirements are ambiguous or actively evolving

- You're testing novel functionality without established patterns

- Exploratory investigation is needed to understand system behavior

- Regulatory or compliance scenarios require documented human judgment

Combine both approaches when:

- AI generates initial coverage while humans refine critical paths

- Baseline tests come from AI, edge cases from manual exploration

- AI handles regression scenarios while testers focus on new feature validation

- You want consistent documentation with expert-level accuracy on high-risk areas
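The criteria above can be expressed as a rough triage rule. The sketch below is one possible encoding, not a definitive policy: the `Feature` fields and the `recommend` function are hypothetical names, and real decisions will weigh more factors than five booleans.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    clear_acceptance_criteria: bool
    follows_known_patterns: bool
    needs_domain_expertise: bool
    needs_exploration: bool
    compliance_sensitive: bool


def recommend(feature: Feature) -> str:
    """Suggest 'ai', 'manual', or 'hybrid' for a feature's test creation."""
    ai_fit = feature.clear_acceptance_criteria and feature.follows_known_patterns
    manual_fit = (
        feature.needs_domain_expertise
        or feature.needs_exploration
        or feature.compliance_sensitive
    )
    if ai_fit and manual_fit:
        return "hybrid"  # AI baseline, human-crafted tests for critical paths
    if ai_fit:
        return "ai"
    # Ambiguous requirements or novel functionality default to manual writing.
    return "manual"


crud_form = Feature(True, True, False, False, False)
payments = Feature(True, True, True, False, True)
prototype = Feature(False, False, False, True, False)

print(recommend(crud_form), recommend(payments), recommend(prototype))
# prints: ai hybrid manual
```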

The most successful teams don't debate AI versus manual. They use both strategically, allocating each approach to the scenarios where it delivers maximum value.

FAQ

How accurate are AI-generated test cases compared to manually written ones?

AI-generated test cases achieve 70–90% initial validity when requirements are clearly documented, but they require human review before execution. Manual test cases written by experienced testers typically have higher accuracy for complex business logic but take longer to create.

What's the typical ROI timeline for AI test case generation tools?

Realistic timelines for mature production implementation run 18–24 months from initial evaluation, though teams often see measurable time savings within the first few months of adoption. The strongest ROI comes from organizations with well-documented requirements and high test volume.

Can AI test case generation replace manual testing entirely?

No. AI excels at generating baseline coverage from documented requirements but cannot replace human judgment for exploratory testing, complex domain validation, or scenarios requiring real-time adaptation. The best approach combines AI efficiency with human expertise.

What types of teams benefit most from AI test case generation?

Teams with high test volume, well-documented requirements, and repetitive test patterns see the strongest benefits. Organizations struggling with test creation bottlenecks or maintenance overhead are particularly good candidates for AI augmentation.

Build Your Testing Strategy with AI-Powered Quality

The choice between AI test case generation and manual test cases isn't binary. It's about building a testing strategy that leverages the strengths of each approach while compensating for its limitations. Teams that treat AI as a replacement for QA expertise consistently underperform compared to those who view it as an amplification layer for skilled testers.

Modern test management platforms have evolved into active intelligence layers that support both AI-generated and human-crafted tests within unified workflows. TestQuality's AI-powered capabilities, including TestStory.ai for generating test cases from requirements, integrate seamlessly with existing quality workflows while maintaining the flexibility teams need for manual test creation and management. Start your free trial and experience how QA Agents can transform your testing workflow.